A leaked benchmark points to a sizable gap opening up in the next generation of AI-accelerated laptop chips. According to a result shared by tech leaker @mweinbach, the Neural Processing Unit (NPU) in Apple's upcoming M5 Max, which Apple brands as the Neural Engine, runs inference roughly twice as fast as the NPU in Intel's forthcoming Panther Lake processor on the Parakeet v3 automatic speech recognition model.

What the Benchmark Shows

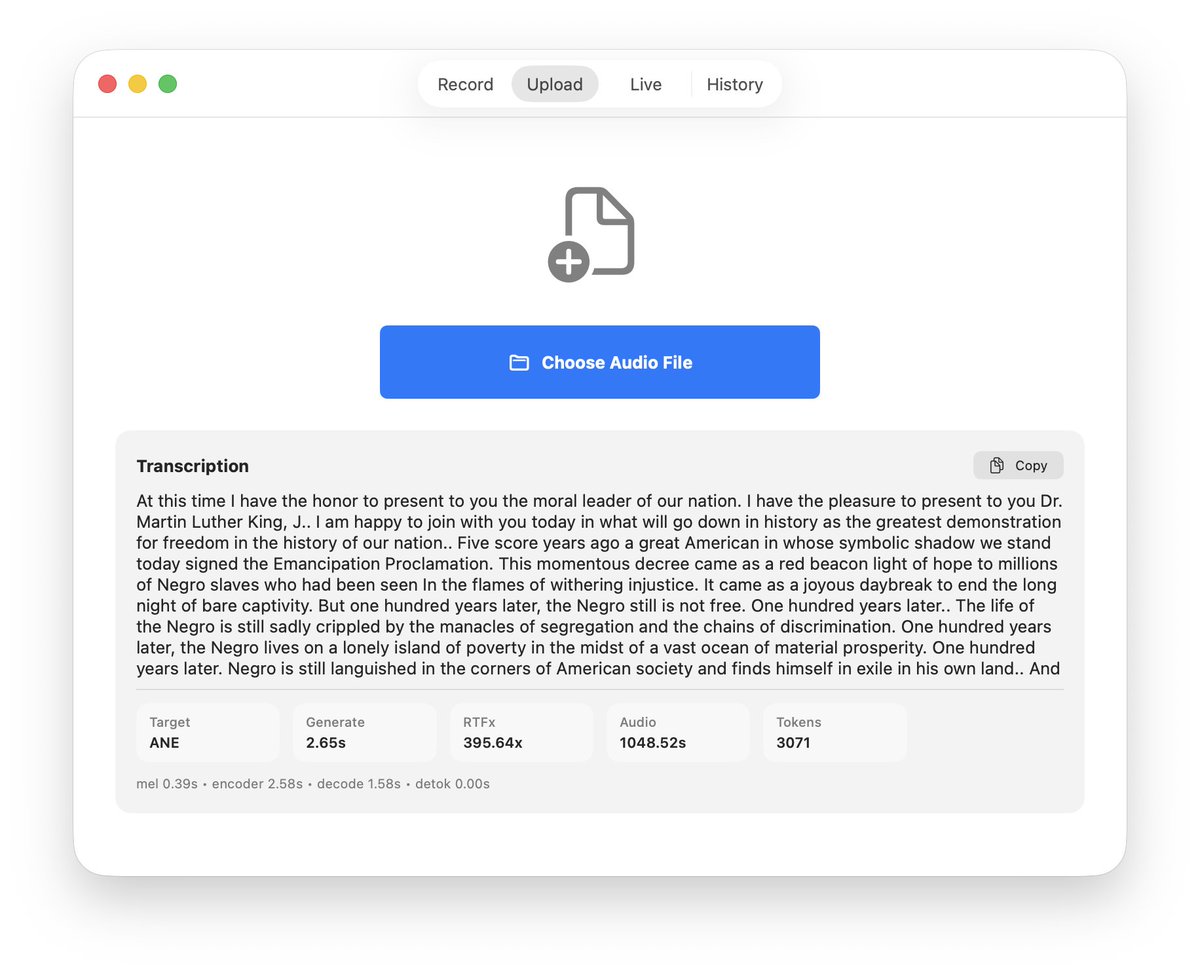

The benchmark uses Parakeet v3, a 600-million-parameter multilingual speech recognition model that NVIDIA publishes for use with its NeMo toolkit. Parakeet models are commonly used to stress-test AI inference hardware on real-time transcription, a core workload for on-device AI assistants. The test measures tokens generated per second, a direct proxy for real-time usability.

The result is a straightforward comparison: the Apple M5 Max NPU delivers 2x the throughput of the Intel Panther Lake NPU. Absolute numbers (such as exact tokens per second) were not disclosed, but a 2x multiplier is a substantial lead in a critical, growing workload category. If the figure holds, it suggests Apple's architectural improvements and silicon process choices are translating into a tangible AI performance lead for its premium laptop segment.
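To make the arithmetic concrete, here is a minimal Python sketch of what a throughput multiplier means for a fixed transcription job. The leak disclosed only the 2x ratio, so the tokens-per-second figures and the job size below are hypothetical placeholders, not measured values, and the function names are illustrative.

```python
def speedup(throughput_a: float, throughput_b: float) -> float:
    """Ratio of two throughputs measured in the same unit (e.g. tokens/s)."""
    if throughput_a <= 0 or throughput_b <= 0:
        raise ValueError("throughputs must be positive")
    return throughput_a / throughput_b


def time_to_process(total_tokens: int, tokens_per_second: float) -> float:
    """Seconds needed to emit total_tokens at a steady rate."""
    if tokens_per_second <= 0:
        raise ValueError("tokens_per_second must be positive")
    return total_tokens / tokens_per_second


# Hypothetical placeholder figures: the leak gave a ratio, not absolutes.
baseline_tps = 100.0
faster_tps = 200.0

print(speedup(faster_tps, baseline_tps))     # 2.0
print(time_to_process(3000, baseline_tps))   # 30.0
print(time_to_process(3000, faster_tps))     # 15.0
```

Whatever the real absolute rates are, a 2x ratio halves the time for the same job, which is why the multiplier is informative even without the underlying numbers.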

Technical Context: The On-Device AI Arms Race

Both Apple's M-series and Intel's Core Ultra (Meteor Lake, then Lunar Lake, then Panther Lake) platforms have aggressively integrated dedicated NPU blocks to handle the exploding demand for efficient, private, on-device AI. These NPUs are designed to run models like Parakeet, Llama, or Phi locally, offloading work from the CPU and GPU to save power and reduce latency.

- Apple's Strategy: Apple has consistently increased NPU performance with each generation (M2, M3, M4), tightly integrating it with its Core ML framework and marketing the capability for features like Live Voicemail transcription, camera processing, and Siri. The M5 generation represents the next step in this cadence.

- Intel's Strategy: Intel's NPU, introduced with Meteor Lake, is a key pillar of its "AI PC" vision. Panther Lake, the generation following the currently shipping Lunar Lake, is expected to bring major architectural improvements and higher TOPS (Trillions of Operations Per Second) ratings.

This leaked benchmark provides one of the first direct, application-level comparisons between these two future platforms, moving beyond theoretical peak TOPS to actual model throughput.
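The gap between a TOPS rating and delivered throughput can be sketched in a few lines of Python. Counting one multiply-accumulate (MAC) as two operations is the usual convention behind these ratings. The MAC count, clock speed, and sustained figure below are hypothetical and do not describe either chip.

```python
def peak_tops(mac_units: int, clock_hz: float, ops_per_mac: int = 2) -> float:
    """Theoretical peak in trillions of operations per second."""
    return mac_units * ops_per_mac * clock_hz / 1e12


def utilization(achieved_tops: float, peak: float) -> float:
    """Fraction of the theoretical peak that a real workload sustains."""
    if peak <= 0:
        raise ValueError("peak must be positive")
    return achieved_tops / peak


# Hypothetical NPU: 4096 MAC units at 1.5 GHz (not a real product spec).
peak = peak_tops(mac_units=4096, clock_hz=1.5e9)
print(f"peak: {peak:.3f} TOPS")                       # peak: 12.288 TOPS

# Hypothetical sustained rate on a real model, well below the peak.
print(f"utilization: {utilization(4.0, peak):.1%}")   # utilization: 32.6%
```

Two chips with similar peak ratings can sustain very different fractions of that peak on a given model, which is why an application-level result carries information that a spec sheet does not.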

Why This Matters for Developers and Users

For AI engineers and application developers, NPU performance dictates what models can feasibly run on a laptop in real-time. A 2x performance advantage means:

- Larger Models: If throughput scales roughly inversely with model size and memory is not the bottleneck, the M5 Max could run speech or language models with about twice the parameters at the same speed, enabling more accurate or capable local AI.

- Lower Latency: Faster inference means quicker responses for interactive AI features, improving user experience for live transcription or assistant interactions.

- Power Efficiency: NPUs are designed for efficiency. Higher performance at a given power envelope (or equivalent performance at lower power) translates to longer battery life for AI-powered tasks.

This leak, if accurate, sets high expectations for Apple's M5 launch and puts pressure on Intel to demonstrate competitive real-world performance with Panther Lake, beyond marketing peak figures.

Limitations and Caveats

This is a single data point from an unofficial source. Key details are missing:

- The specific system configurations (thermal design power, memory bandwidth, cooling).

- The software stack and drivers used for each NPU (e.g., Apple's Core ML vs. Intel's OpenVINO).

- Performance across a broader suite of models (vision, language, diffusion).

- Performance-per-watt metrics, which are often more important than peak speed for mobile devices.
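The last point is easy to illustrate. The Python sketch below computes throughput per watt for two NPUs. Every number in it is a hypothetical placeholder, chosen only to show that a 2x speed lead does not by itself settle the efficiency question; the leak included no power data.

```python
def tokens_per_joule(tokens_per_second: float, watts: float) -> float:
    """Throughput per watt; tokens/s divided by J/s gives tokens per joule."""
    if watts <= 0:
        raise ValueError("power draw must be positive")
    return tokens_per_second / watts


# Hypothetical figures only: chip A is 2x faster but draws 3x the power.
chip_a = tokens_per_joule(tokens_per_second=200.0, watts=6.0)
chip_b = tokens_per_joule(tokens_per_second=100.0, watts=2.0)

print(chip_a < chip_b)  # True: the slower chip is the more efficient one here
```

With different placeholder power figures the comparison flips, so the leaked ratio cannot be read as an efficiency result in either direction.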

Benchmarks can also be optimized differently for each platform. The final performance gap in shipping products may vary.

gentic.news Analysis

This leak fits directly into the escalating narrative we've been tracking: the battle for on-device AI silicon supremacy is no longer about CPU cores; it's about the NPU. As we noted in our coverage of Intel's Lunar Lake launch last year, Intel staked its entire client roadmap on delivering leading AI compute. This early Panther Lake data suggests that closing the gap with Apple's vertically integrated silicon team remains a formidable challenge.

Historically, Apple's control over its entire stack, from silicon design to the Metal and Core ML frameworks, has yielded efficiency advantages. This Parakeet v3 result, if representative, implies that advantage is extending into the AI inference domain. For Intel, whose strategy relies on a broad ecosystem of OEM partners, demonstrating competitive NPU performance is critical to the viability of the "AI PC" category it is championing.

The leak also indirectly reflects well on the Arm architecture, which underpins Apple Silicon. It serves as a high-profile validation case for Arm-based designs in high-performance mobile AI, just as Qualcomm's Snapdragon X Elite (also Arm-based) begins competing in the Windows on Arm space. The trend is clear: x86 dominance in the client space is being challenged not just on CPU efficiency, but now on the new battlefield of AI acceleration. The next 12-18 months, with launches from Apple (M5), Intel (Panther Lake), and Qualcomm (next-gen Oryon), will define the hardware landscape for the next generation of local AI applications.

Frequently Asked Questions

What is Parakeet v3?

Parakeet v3 is a 600-million-parameter automatic speech recognition (ASR) model developed by NVIDIA. It is built with the NeMo toolkit and, unlike the English-only v2, transcribes 25 European languages. It's commonly used as a benchmark for AI inference hardware because speech recognition is a latency-sensitive, practical application for on-device AI.

What is an NPU (Neural Processing Unit)?

An NPU is a specialized processor core designed specifically to accelerate neural network operations, which are the foundation of modern AI and machine learning. It's more efficient at these tasks than a general-purpose CPU or even a GPU for certain inference workloads. Apple, Intel, AMD, and Qualcomm all now integrate NPUs into their latest mobile and desktop processors.

When will Apple M5 and Intel Panther Lake be released?

Based on typical release cadences, Apple's M5 chip is expected to be announced in late 2026, likely appearing first in new MacBook Pro models. Intel's Panther Lake is officially slated for a 2026 launch, following the Lunar Lake processors that launched in 2024. This benchmark suggests both companies are in the final stages of testing their next-generation designs.

Does a faster NPU mean better AI features?

Generally, yes. A faster, more efficient NPU allows devices to run larger, more capable AI models locally without needing an internet connection. This enables more responsive, private, and complex AI features—like real-time video effect filtering, advanced photo editing, instant document summarization, and low-latency live transcription—directly on your laptop or phone.