OpenAI and a consortium including Nvidia, AMD, and Intel open-sourced MRC, a networking protocol that sprays traffic across hundreds of paths to cut GPU idle time. The protocol, contributed to the Open Compute Project, is already integrated into emerging 800Gb/s network interfaces.

Key facts

- MRC sprays packets across hundreds of network paths simultaneously.

- Reroutes traffic around failures in microseconds.

- Integrated into emerging 800Gb/s network interfaces.

- Nvidia open-sourced its Spectrum-X MRC on May 6, 2026.

- Consortium includes OpenAI, AMD, Broadcom, Intel, Microsoft, Nvidia.

OpenAI and a consortium of tech giants (AMD, Broadcom, Intel, Microsoft, and Nvidia) open-sourced a new networking protocol called Multipath Reliable Connection (MRC) and contributed it to the Open Compute Project (OCP). The protocol is designed to address congestion and failures in massive AI clusters as hyperscalers scale to hundreds of thousands of GPUs [per the source].

How MRC Works

Large AI training systems depend on tightly synchronized communication between accelerators. Even small delays inside the network fabric can leave expensive GPUs idle while workloads wait for slower nodes to catch up, a problem infrastructure operators often call the "straggler effect." OpenAI said MRC aims to reduce those slowdowns by dynamically spraying packets across hundreds of available paths and rerouting traffic around failures in microseconds [per OpenAI's technical post].
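To make the idea concrete, here is a minimal toy sketch of per-packet spraying with failure avoidance. It is not MRC's actual algorithm, which the source does not detail: the `PathSprayer` class, its round-robin policy, and the path IDs are all hypothetical and chosen only for illustration.

```python
from collections import Counter


class PathSprayer:
    """Toy per-packet sprayer (hypothetical, not MRC's real logic).

    Rotates over the currently healthy paths and skips failed ones.
    """

    def __init__(self, num_paths: int) -> None:
        self.healthy = list(range(num_paths))  # path IDs that are usable
        self._next = 0  # running packet counter used for rotation

    def mark_failed(self, path_id: int) -> None:
        # Remove a path from rotation; later packets avoid it immediately.
        if path_id in self.healthy:
            self.healthy.remove(path_id)

    def pick_path(self) -> int:
        if not self.healthy:
            raise RuntimeError("no healthy paths left")
        path = self.healthy[self._next % len(self.healthy)]
        self._next += 1
        return path


def spray(sprayer: PathSprayer, num_packets: int) -> Counter:
    """Send num_packets and count how many landed on each path."""
    return Counter(sprayer.pick_path() for _ in range(num_packets))
```

With four paths, `spray(PathSprayer(4), 400)` spreads packets evenly. After `mark_failed(2)`, further packets are spread over the three remaining paths only. A real implementation would also have to handle reordering and congestion feedback, which this sketch ignores.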

The protocol relies on multi-plane networking and SRv6-based source routing, which allows network interface cards to encode routing decisions directly into packet headers rather than depending entirely on switch-level routing logic. This enables MRC to distribute traffic across hundreds of network paths simultaneously instead of relying on more rigid routing schemes [per the source].
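As an illustration of what encoding routing decisions in packet headers can look like, the sketch below builds a generic SRv6 Segment Routing Header following the layout in RFC 8754. How MRC actually populates this header is not described in the source. The `build_srh` function, the UDP next-header value, and the `2001:db8::` documentation addresses are assumptions for the example.

```python
import ipaddress
import struct

SRH_ROUTING_TYPE = 4  # routing type assigned to the Segment Routing Header


def build_srh(segments: list[str], next_header: int = 17) -> bytes:
    """Encode an SRH for a path given first hop first (no TLVs).

    next_header=17 (UDP) is an arbitrary choice for this sketch.
    """
    if not segments:
        raise ValueError("need at least one segment")
    n = len(segments)
    fixed = struct.pack(
        "!BBBBBBH",
        next_header,       # protocol that follows the SRH
        2 * n,             # Hdr Ext Len: 8-octet units after the first 8 octets
        SRH_ROUTING_TYPE,
        n - 1,             # Segments Left: index of the active segment
        n - 1,             # Last Entry: index of the last list element
        0,                 # Flags
        0,                 # Tag
    )
    # The list is stored in reverse order: entry 0 is the final segment.
    body = b"".join(
        ipaddress.IPv6Address(s).packed for s in reversed(segments)
    )
    return fixed + body


# Hypothetical three-hop path chosen by the sender, not by the switches.
path = ["2001:db8::a", "2001:db8::b", "2001:db8::c"]
header = build_srh(path)
```

Because the sender writes the whole path into the header, it can choose a different segment list for each packet, which is what makes per-packet path selection possible without per-flow state in the switches.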

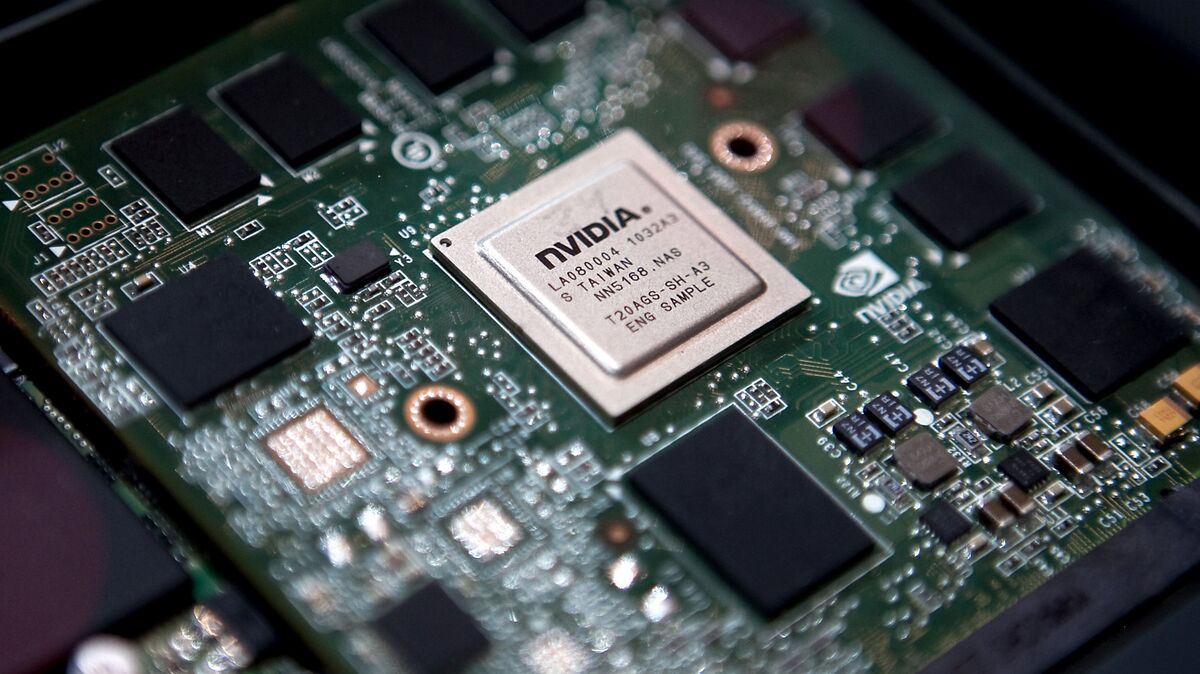

The Unique Take: Networking Is the New Memory Wall

The standard narrative is that GPU compute is the bottleneck in AI training. MRC points the other way: as clusters pass 100,000 GPUs, the network fabric becomes the primary constraint. Nvidia open-sourced its own Spectrum-X MRC, which already powers frontier gigascale AI deployments, on May 6, 2026 [per ServeTheHome]. This suggests that even Nvidia, which profits from selling InfiniBand and Ethernet gear, sees proprietary protocols as a dead end at the scale frontier labs need.

Ron Westfall, networking and infrastructure practice lead at HyperFrame Research, said the protocol signals a broader shift away from traditional networking architectures designed around static paths and isolated connections. "OpenAI is treating the entire AI fabric as a single fluid system instead of a series of isolated connections," Westfall said [per the source]. He noted that hyperscalers are increasingly prioritizing predictable latency and resilience as AI clusters grow larger.

Consortium Dynamics

The consortium includes AMD, Broadcom, Intel, Microsoft, and Nvidia, competitors who rarely collaborate on infrastructure standards. OpenAI led the effort, and Microsoft is a key OpenAI investor. Together with Nvidia's May 6, 2026 open-source release, this suggests that MRC is a shipping technology rather than a theoretical proposal, and that it may already be deployed in production on OpenAI's Blackwell clusters.

OpenAI wrote: "Network congestion, link, and device failures are the most common sources of delay and jitter in transfers. These problems get more frequent, and harder to solve, as the size of the cluster increases" [per the source].

What to watch

Watch for OCP adoption milestones: how many hyperscalers adopt MRC in their next-generation network fabric designs, and whether the protocol drives measurable reductions in GPU idle time at 100K+ GPU scale. Also watch whether competing protocols emerge from Google or Amazon.