A recent analysis by tech commentator @mweinbach provides a stark, quantitative perspective on the scale of hardware required for the AI boom, directly comparing the memory footprint of AI infrastructure to the world's largest consumer electronics platform.

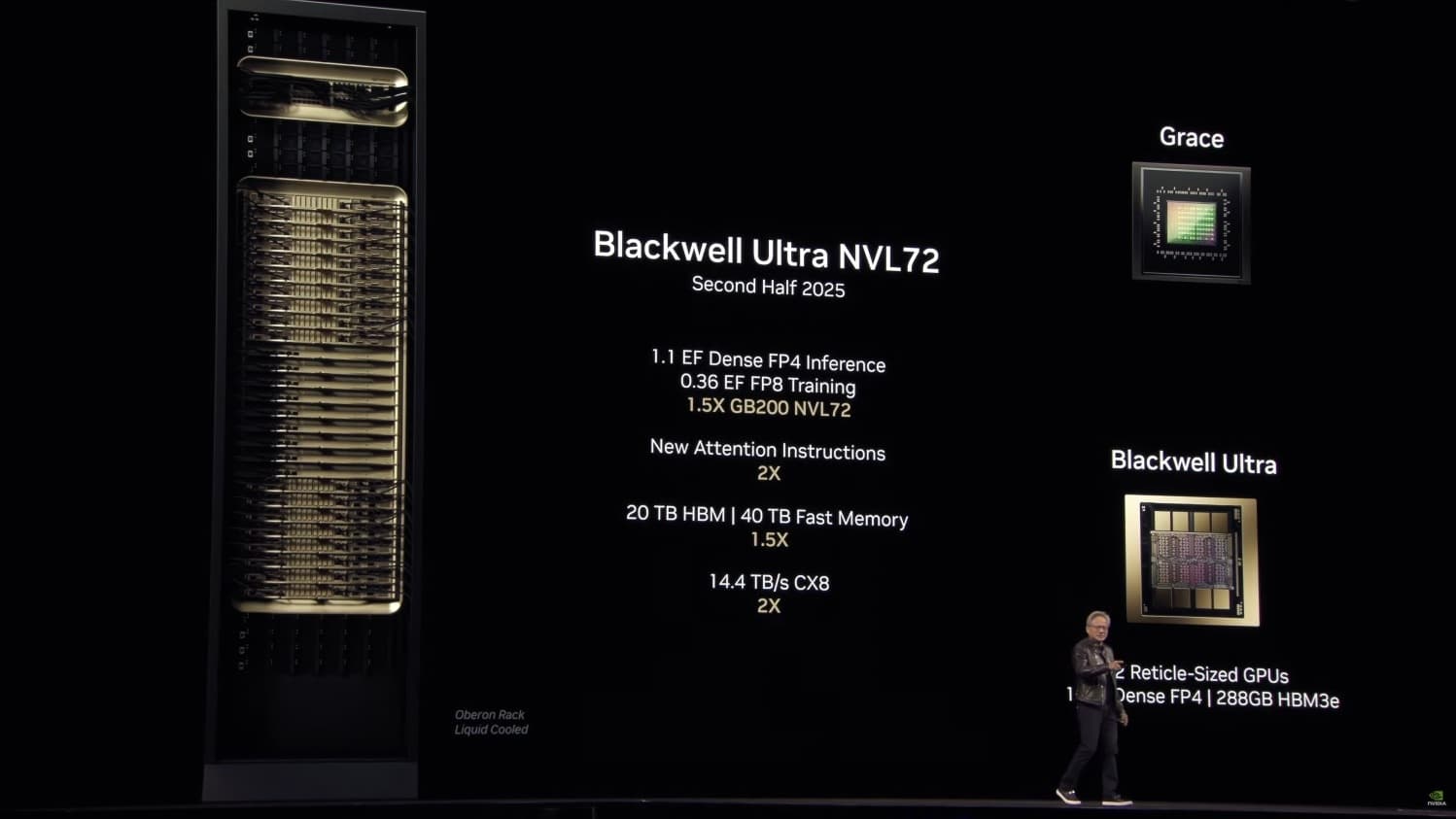

The projection states that in 2026, Nvidia is expected to ship between 70,000 to 80,000 "GB200" Grace Blackwell Superchip racks. Using a conservative estimate of 70,000 racks, each containing 17 terabytes (TB) of High Bandwidth Memory (HBM), the total shipment volume would reach approximately 1.19 exabytes (EB) of HBM memory dedicated to AI training and inference.

For comparison, the analysis estimates Apple will ship roughly 240 million iPhones in 2026. Assuming an average of 10 gigabytes (GB) of Low-Power Double Data Rate 5 (LPDDR5) memory per device—a conservative figure given current Pro models already ship with 8GB—this equates to about 2.4 exabytes of mobile DRAM.

What the Numbers Reveal

The core finding is not that AI memory demand is small, but that its sheer volume is now comparable to—and quantifiable against—the most massive consumer hardware supply chains on Earth. Nvidia's projected 1.19 EB of HBM for data centers would represent about half the total physical memory volume Apple ships for its entire annual iPhone lineup.

This comparison underscores two divergent hardware paradigms:

- AI Infrastructure (Nvidia): Extreme performance, specialized memory (HBM3e/HBM4), concentrated in data centers.

- Consumer Mobile (Apple): High-volume, power-efficient memory (LPDDR5/6), distributed across billions of devices.

The 17TB-per-rack figure aligns with known specifications for Nvidia's GB200 NVL72 platform, which connects 36 Grace Blackwell Superchips. Each Superchip combines two B200 GPUs (each with up to 192GB of HBM3e) and a Grace CPU, with the full rack leveraging NVL72 fabric for a unified memory space.

The Memory Divide: HBM vs. LPDDR

The analysis also highlights the technical and economic gulf between the memory types fueling these markets.

HBM (High Bandwidth Memory): Used in Nvidia's AI accelerators (H200, B200, GB200). It is a stacked, ultra-wide-interface memory directly connected to the GPU die via a silicon interposer, offering unparalleled bandwidth (e.g., HBM3e at over 10 TB/s) but at a high cost per gigabyte and complex manufacturing. Its supply is constrained, dominated by SK Hynix, Samsung, and Micron.

LPDDR5/6 (Low-Power DDR): Used in smartphones, laptops, and other edge devices. It is a cost-optimized, high-density, power-efficient memory standard produced in colossal volumes. Its supply chain is mature and geared for consumer electronics scales.

The fact that the AI industry's annual demand for the premium, capacity-constrained HBM could reach a volume ballpark of 50% of the entire iPhone fleet's standard memory is a powerful indicator of the capital intensity of the AI arms race.

gentic.news Analysis

This projection, while speculative, fits squarely into the escalating narrative of AI's hardware hunger that we have tracked closely. It follows Nvidia's Q1 2025 financial results, where data center revenue shattered records, driven overwhelmingly by demand for the Hopper and Blackwell GPU platforms. The 70-80k rack shipment figure for 2026 suggests Nvidia expects this demand not only to sustain but to accelerate, as cloud providers and large enterprises build out dedicated AI clusters.

The comparison to Apple is particularly apt. As we covered in our analysis of Apple's on-device AI strategy with Apple Intelligence, the company is pushing advanced LLMs to the edge, creating its own massive demand for device memory. This analysis reveals a split path: Apple integrates AI into its ubiquitous hardware stack, while Nvidia fuels the centralized data center build-out. Both are racing toward the same goal—dominance in the AI compute layer—but from opposite ends of the hardware spectrum.

Furthermore, this underscores a critical bottleneck we've highlighted in our reporting on the HBM supply chain: SK Hynix's near-monopoly on advanced HBM3e production. A demand spike of this magnitude for 2026 will intensify pressure on memory manufacturers to ramp HBM capacity, likely benefiting Samsung and Micron as they race to catch up. If accurate, this projection implies that the AI infrastructure market's appetite for the most advanced memory will continue to outstrip supply, keeping costs high and allocation strategic.

Frequently Asked Questions

What is a "GB200 rack"?

A GB200 rack refers to a server rack built around Nvidia's Grace Blackwell Superchip. The most powerful configuration, the GB200 NVL72, links 72 Blackwell GPUs (in 36 Superchips) with a unified memory space, allowing them to function as a single massive GPU for training giant AI models. The 17TB memory figure cited is for this top-tier rack configuration.

Is 10GB of memory per iPhone accurate for 2026?

It's a conservative estimate. As of 2024/2025, iPhone Pro models ship with 8GB of RAM. With the introduction of Apple Intelligence and more on-device AI features, memory demands are increasing. By 2026, an average of 10GB across the entire iPhone lineup (including base models) is plausible, if not slightly low, making the 2.4 exabyte total a reasonable projection.

What does 1.19 exabytes of HBM mean for AI progress?

It represents the physical hardware required for the next leap in model scale. Training frontier models like GPT-5, Gemini 2.0, and their successors requires thousands of GPUs working in concert with vast, fast memory pools. This volume of HBM shipment would support the simultaneous training of multiple next-generation models and a massive expansion in large-scale inference, enabling more powerful and widely available AI services.

How reliable is this 70-80k rack projection?

It is an industry analyst estimate, not an official Nvidia forecast. However, it is based on extrapolations from Nvidia's current datacenter revenue growth, cloud provider CapEx announcements (from Microsoft Azure, Google Cloud, AWS, and Oracle Cloud), and the published specs of the Blackwell platform. The figure is considered ambitious but within the realm of possibility given the current demand trajectory.