What Happened

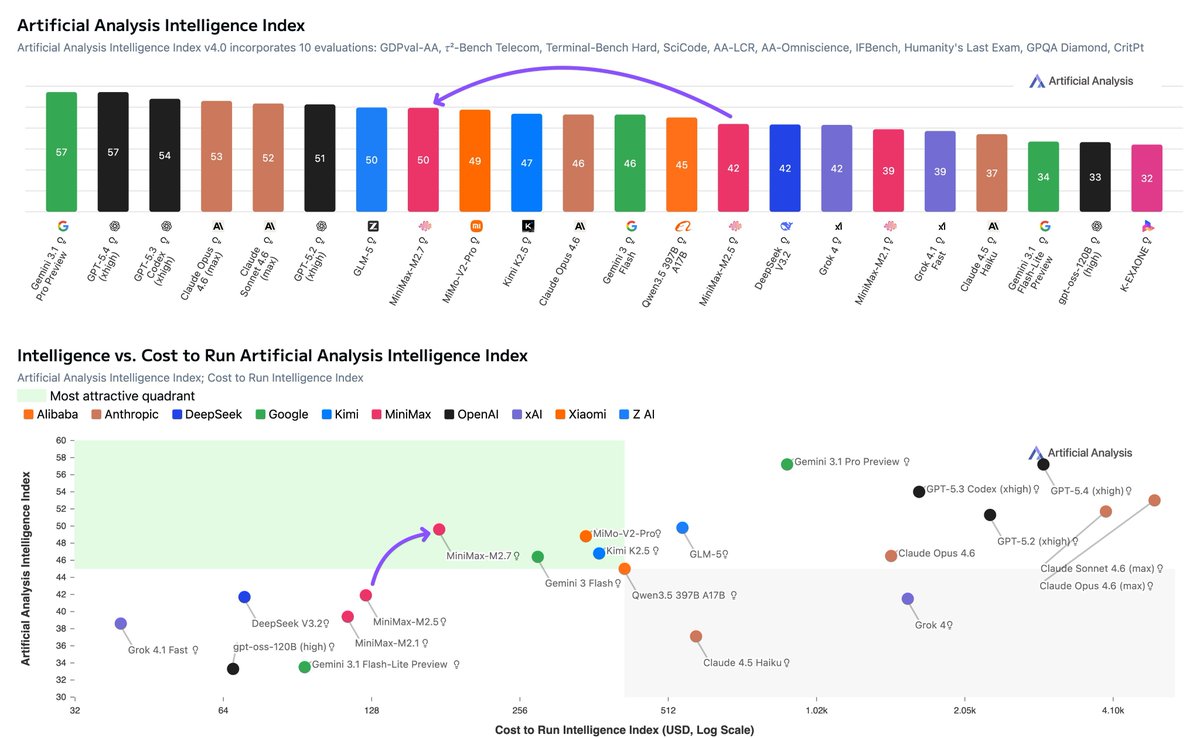

Minimax has announced the release of its M2.7 model. The announcement, made via a post on X (formerly Twitter), highlights several claimed performance benchmarks and a novel training approach.

The key claims are:

- Self-Evolving Training: Described as the "first model that helped build itself," M2.7 reportedly ran over 100 autonomous optimization loops during its own reinforcement learning (RL) training phase, leading to a claimed 30% "internal improvement."

- Coding Performance: The model is said to score 56.2% on SWE-Pro (described as "near Opus 4.6") and 55.6% on VIBE-Pro. For production debugging tasks, it is claimed to reduce resolution time to under 3 minutes.

- ML Research Agent Capability: On MLE Bench Lite, the model reportedly achieves a 66.6% medal rate, tying Gemini 3.1.

- Office & Analytical Work: Minimax claims the highest Elo rating among open-source models on GDPval-AA, 1495, with 97% skill adherence, and says the model can handle end-to-end analyst workflows including reports, models, and presentations.

- New Features: The release includes native multi-agent support and a new open-source interactive character demo called OpenRoom.
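
For readers unfamiliar with Elo-style scores such as the 1495 figure above, the sketch below shows how a rating gap maps to an expected head-to-head score. It assumes the conventional logistic Elo formula with a 400-point scale; the announcement does not say how GDPval-AA computes its ratings, and the rival rating used here is purely hypothetical.

```python
def elo_expected_score(rating_a: float, rating_b: float, scale: float = 400.0) -> float:
    """Expected score of A against B under the conventional Elo logistic model.

    Assumption: the 400-point scale is the standard chess convention, not a
    documented property of GDPval-AA.
    """
    return 1.0 / (1.0 + 10.0 ** ((rating_b - rating_a) / scale))


claimed_m27_rating = 1495.0          # figure quoted in the announcement
hypothetical_rival_rating = 1445.0   # illustrative value, not from the source

p = elo_expected_score(claimed_m27_rating, hypothetical_rival_rating)
print(f"Expected score vs. hypothetical rival: {p:.3f}")
```

Under this assumption, a 50-point lead corresponds to an expected score only modestly above one half, so small differences in reported ratings should not be read as large differences in capability.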

Context

Minimax is a Chinese AI company known for its large language models. The "self-evolving" claim, if substantiated, would be technically significant, suggesting a move toward more automated and iterative model improvement. The benchmark comparisons position M2.7 against leading models from Anthropic (Claude Opus 4.6) and Google (Gemini 3.1) in specific domains such as coding and ML research.

Note that this information comes from a promotional social media post. The source material offers no independent verification of the reported benchmarks (SWE-Pro, VIBE-Pro, MLE Bench Lite, GDPval-AA) or of the "self-evolving" training methodology, and it does not specify how the autonomous optimization loops worked or what the 30% improvement measures.