NVIDIA has released Lyra 2.0 on the Hugging Face platform, marking a significant step forward in generative AI for 3D environments. The framework is designed to generate persistent and explorable 3D worlds at scale, directly tackling two of the most stubborn technical hurdles in long-horizon video generation: spatial forgetting and temporal drifting.

Key Takeaways

- NVIDIA has released Lyra 2.0 on Hugging Face, a framework designed to generate persistent, explorable 3D worlds at scale.

- It specifically addresses the core technical challenges of spatial forgetting and temporal drifting in long-horizon video generation.

What Happened

NVIDIA has officially published the Lyra 2.0 framework on Hugging Face, making the research and model weights accessible to the broader AI community. The release is accompanied by a research paper and a repository detailing the methodology. The stated core achievement is the generation of coherent, long-duration 3D scenes that stay consistent over time and space, a capability that has eluded many previous video and world generation models.

The Core Technical Challenge

The announcement highlights that Lyra 2.0 specifically targets spatial forgetting and temporal drifting, two critical failure modes in generative video models:

- Spatial Forgetting: When a model generates a sequence (e.g., a camera panning through a room), objects or scene details introduced earlier are "forgotten" or inconsistently represented later, breaking the illusion of a persistent world.

- Temporal Drifting: The scene's content, style, or geometry gradually and unintentionally changes over the generated sequence, leading to an unstable world that doesn't hold together.
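One way to make these two failure modes concrete is to treat them as quantities that can be measured on a generated sequence. The sketch below is purely illustrative and is not taken from the Lyra 2.0 paper or code. It assumes each frame has already been reduced to a small descriptor vector (for example, average colors) and that each frame carries a camera pose label; the function names `drift_curve` and `revisit_error` are hypothetical. Temporal drifting shows up as a descriptor that should stay constant moving steadily away from its starting value, and spatial forgetting shows up as a mismatch when the camera returns to a pose it has already visited.

```python
import math


def l2_distance(a, b):
    """Euclidean distance between two equal-length descriptor vectors."""
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))


def drift_curve(descriptors):
    """Distance of each frame's descriptor from the first frame's descriptor.

    Assumes the descriptor captures something that should stay constant in a
    stable world (e.g. overall style). A steadily rising curve suggests drift.
    """
    reference = descriptors[0]
    return [l2_distance(reference, d) for d in descriptors]


def revisit_error(descriptors, poses):
    """Mean mismatch between a frame and the first frame seen at the same pose.

    A persistent world should look the same when the camera comes back to a
    pose it has visited before, so a large value suggests spatial forgetting.
    """
    first_seen = {}
    errors = []
    for pose, descriptor in zip(poses, descriptors):
        if pose in first_seen:
            errors.append(l2_distance(first_seen[pose], descriptor))
        else:
            first_seen[pose] = descriptor
    return sum(errors) / len(errors) if errors else 0.0


# Toy camera path that walks A -> B -> C and then back through B to A.
poses = ["A", "B", "C", "B", "A"]
stable = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0], [0.0, 1.0], [1.0, 0.0]]
forgetful = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0], [0.0, 0.0], [1.0, 1.0]]

print(revisit_error(stable, poses))     # revisited views match the originals
print(revisit_error(forgetful, poses))  # revisited views have changed
print(drift_curve([[0.5], [0.6], [0.7]]))  # descriptor moving away over time
```

Real evaluations would rely on learned image features and estimated camera poses rather than hand-written vectors, but the two measurements keep the same shape: compare against the start of the sequence for drift, and compare against the first visit to a location for forgetting.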

By addressing these issues, Lyra 2.0 aims to move beyond short video clips to create explorable 3D worlds where a user's viewpoint can move consistently through a generated environment.

Context and Availability

The release on Hugging Face follows the standard practice for disseminating major AI research from industry labs, facilitating immediate experimentation, reproduction, and potential fine-tuning by developers and researchers. The framework's availability suggests NVIDIA is seeking to establish a new benchmark, and possibly a foundation-model approach, for 3D world generation, a field currently populated by research from entities like OpenAI (Sora), Google (Lumiere, Genie), and Meta.

gentic.news Analysis

This release is a direct and competitive entry into the high-stakes arena of generative 3D and video world models. While OpenAI's Sora demonstrated breathtaking short-form video generation when it was first previewed in February 2024, its limitations in long-horizon consistency were noted. NVIDIA's Lyra 2.0 appears to be a research-driven counter, explicitly architected to address the persistence problem that Sora and others have grappled with.

Strategically, releasing on Hugging Face is significant. It's not a closed product launch but an open framework release. This suggests NVIDIA is aiming to foster a research community and developer ecosystem around its approach, potentially hoping to establish Lyra as a foundational tool in the same way PyTorch or Diffusers became standards. This aligns with NVIDIA's broader strategy of enabling the AI ecosystem with its hardware and software stack: more advanced generative models create more demand for its GPUs and AI Enterprise software.

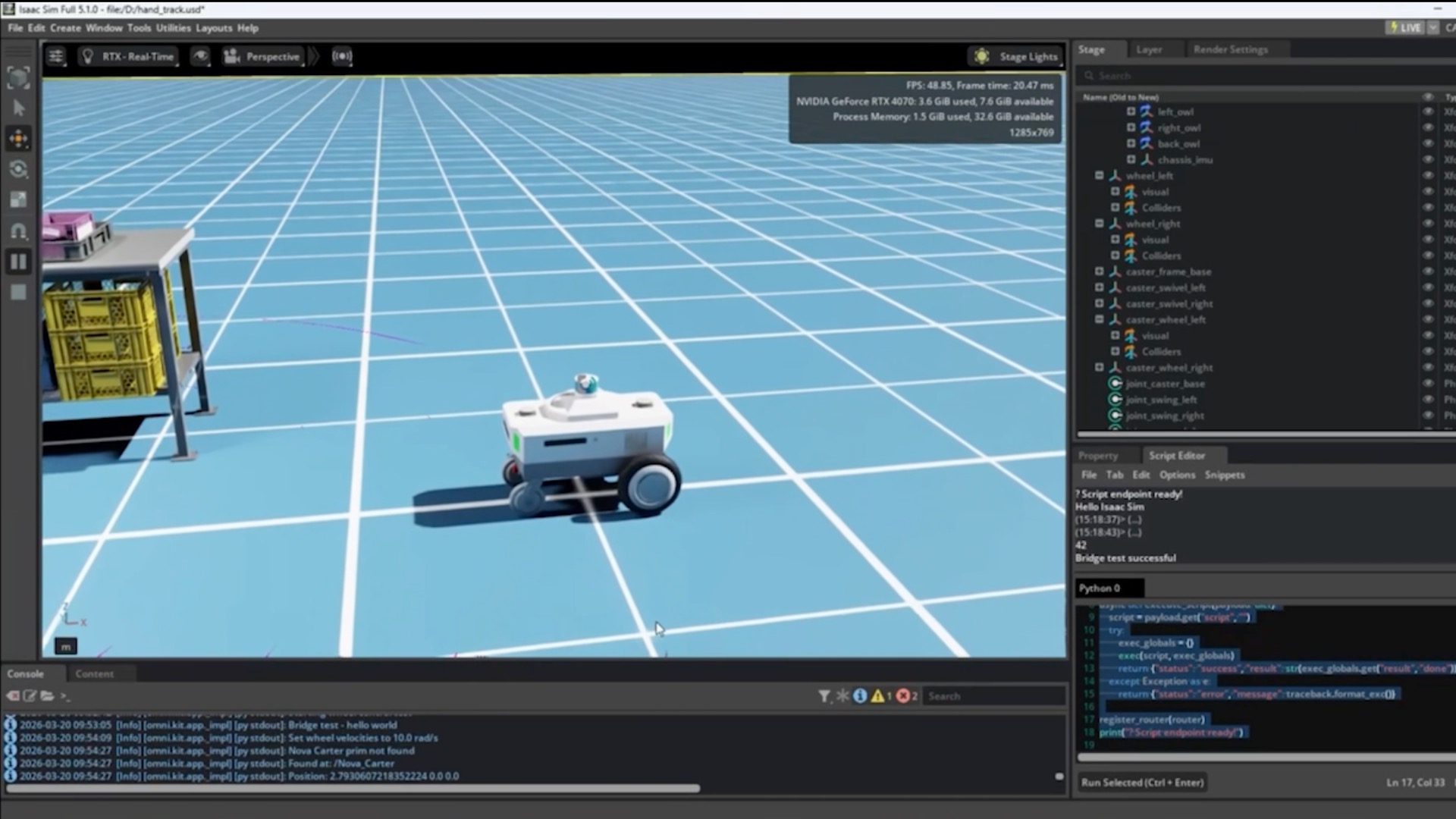

The focus on "explorable worlds" also points to the next frontier: AI for simulation and digital twins. Persistent, consistent 3D generation is not just for entertainment; it's critical for generating synthetic training data for robotics and autonomous vehicles, and for creating scalable virtual environments for enterprise applications. NVIDIA's Omniverse platform is the obvious downstream destination for technology like Lyra, where it could be used to rapidly populate metaverse-scale digital twins with AI-generated content.

Frequently Asked Questions

What is NVIDIA Lyra 2.0?

NVIDIA Lyra 2.0 is an AI framework released on Hugging Face for generating persistent, explorable 3D worlds. It is designed to overcome the key technical challenges of spatial forgetting and temporal drifting that plague long-duration video generation models.

How does Lyra 2.0 differ from OpenAI's Sora?

While both are generative models for visual scenes, Sora primarily excels at generating high-fidelity, short-duration video clips. Lyra 2.0 is architected specifically for long-horizon consistency, aiming to create a single, coherent 3D world that can be explored over time without forgetting details or drifting in appearance.

Is Lyra 2.0 available for developers to use?

Yes. Because the framework has been released on Hugging Face, its research paper, code, and likely model weights are available for the community to download, study, and experiment with, following the standard open-science model for AI research.

What are the practical applications of persistent 3D world generation?

The primary applications include rapid content creation for games and virtual reality, generating vast and varied synthetic training environments for robotics and AI agents, populating digital twin simulations for architecture and city planning, and creating dynamic backdrops for film and animation.