NVIDIA has released the Kimi-K2.5 Eagle head on Hugging Face, an inference optimization component for Moonshot's reasoning models. It brings Eagle-3 speculative decoding to Kimi-K2.5, promising what NVIDIA describes as "blazing fast inference" while maintaining model accuracy.

What Is the Kimi-K2.5 Eagle Head?

The Kimi-K2.5 Eagle head is a draft component for speculative decoding, an inference acceleration technique. It is not a standalone model: it is designed to run alongside Moonshot's reasoning model and propose tokens that the full model then checks. Because the full model still verifies every proposed token, developers can potentially achieve substantial speed improvements without sacrificing the quality of model outputs.

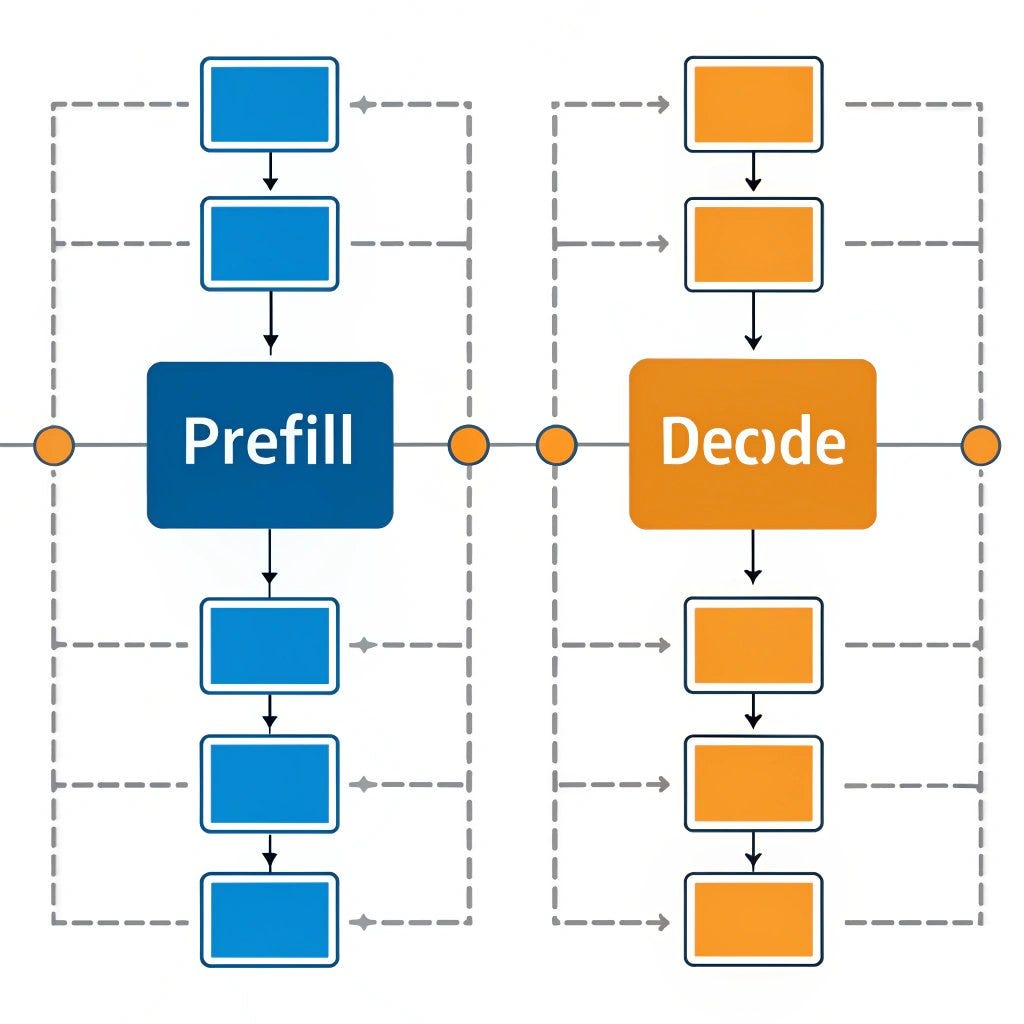

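Speculative decoding uses a smaller, faster "draft model" to propose several tokens ahead, which the larger, more accurate target model then verifies together in a single forward pass. Proposed tokens that match what the target model would have produced are accepted, and the first mismatch is replaced with the target model's own token. The gain comes from running the expensive target model once per group of proposed tokens rather than once per generated token.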
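The draft-and-verify loop can be sketched in a few lines of Python. This is a minimal, greedy illustration of generic speculative decoding, not the Eagle-3 algorithm or NVIDIA's implementation. The names `toy_target`, `toy_draft`, and `speculative_decode` are hypothetical, and the "models" are simple arithmetic functions standing in for real networks.

```python
from typing import Callable, List, Tuple

# A "model" here is just a function from a token context to the next token.
NextToken = Callable[[List[int]], int]


def greedy_decode(target: NextToken, prompt: List[int], max_new_tokens: int) -> List[int]:
    """Baseline: one target-model call per generated token."""
    tokens = list(prompt)
    for _ in range(max_new_tokens):
        tokens.append(target(tokens))
    return tokens


def speculative_decode(
    target: NextToken, draft: NextToken, prompt: List[int],
    max_new_tokens: int, k: int = 4,
) -> Tuple[List[int], int]:
    """Greedy speculative decoding; returns (tokens, verification rounds)."""
    tokens = list(prompt)
    goal = len(prompt) + max_new_tokens
    rounds = 0
    while len(tokens) < goal:
        # 1. The cheap draft model proposes k tokens autoregressively.
        proposal: List[int] = []
        for _ in range(k):
            proposal.append(draft(tokens + proposal))
        # 2. The target model verifies the proposal. A real system scores all
        #    positions in one batched forward pass; this toy emulates that
        #    with one call per position and counts the whole check as a round.
        rounds += 1
        accepted: List[int] = []
        for guess in proposal:
            expected = target(tokens + accepted)
            if guess == expected:
                accepted.append(guess)
            else:
                accepted.append(expected)  # target's correction ends the round
                break
        else:
            # Every draft token was accepted, so the same pass yields a bonus token.
            accepted.append(target(tokens + accepted))
        tokens.extend(accepted)
    return tokens[:goal], rounds


def toy_target(ctx: List[int]) -> int:
    """Hypothetical stand-in for the large model."""
    return (ctx[-1] * 3 + 1) % 7


def toy_draft(ctx: List[int]) -> int:
    """Hypothetical stand-in for the draft: agrees with the target except
    when the context length is a multiple of 3."""
    guess = toy_target(ctx)
    return (guess + 1) % 7 if len(ctx) % 3 == 0 else guess


prompt = [1]
max_new = 20
slow = greedy_decode(toy_target, prompt, max_new)
fast, rounds = speculative_decode(toy_target, toy_draft, prompt, max_new, k=4)
print("identical output:", fast == slow)
print("target rounds:", rounds, "vs baseline calls:", max_new)
```

In this greedy setting the speculative output matches plain decoding token for token, and the draft's accuracy affects only how many verification rounds are needed.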

The Eagle-3 Speculative Decoding Breakthrough

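Eagle-3 is the third iteration of the EAGLE family of speculative decoding methods, which originated in academic research rather than at NVIDIA; NVIDIA's contribution here is the draft head released for Kimi-K2.5. Instead of pairing the target with a separate small language model, EAGLE-style methods attach a lightweight draft head that reuses the target model's internal hidden states. Because the target model still verifies every drafted token, the approach aims to preserve output quality while reducing latency, a critical consideration for real-time applications.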
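How much latency falls depends mainly on how often the target model accepts the drafted tokens. A common back-of-the-envelope model, sketched below, assumes each drafted token is accepted independently with probability `alpha` and that one draft step costs a fraction `draft_cost` of a target forward pass. Both function names are hypothetical, and the numbers in the usage lines are illustrative assumptions, not measurements for Kimi-K2.5 or Eagle-3.

```python
def expected_tokens_per_round(alpha: float, k: int) -> float:
    """Expected tokens produced per target verification pass.

    Assumes each of the k drafted tokens is accepted independently with
    probability alpha; the target always contributes one extra token
    (a correction or a bonus), giving 1 + alpha + ... + alpha**k.
    """
    if not 0.0 <= alpha <= 1.0:
        raise ValueError("alpha must be in [0, 1]")
    if alpha == 1.0:
        return float(k + 1)
    return (1.0 - alpha ** (k + 1)) / (1.0 - alpha)


def estimated_speedup(alpha: float, k: int, draft_cost: float) -> float:
    """Rough speedup over plain decoding.

    One round costs one target pass plus k draft steps, each costing
    draft_cost target-pass equivalents (an assumed ratio).
    """
    return expected_tokens_per_round(alpha, k) / (1.0 + k * draft_cost)


# Illustrative values only: 80% acceptance, 4 drafted tokens per round,
# and a draft step costing 5% of a target pass.
tokens_per_round = expected_tokens_per_round(0.8, 4)
speedup = estimated_speedup(0.8, 4, 0.05)
print(f"tokens per round: {tokens_per_round:.2f}, estimated speedup: {speedup:.2f}x")
```

The model shows why draft quality matters: when acceptance is low, most rounds yield only the single token the target would have produced anyway, and the drafting overhead can outweigh the benefit.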

According to the announcement, this implementation brings Eagle-3 specifically to "Moonshot's reasoning model," suggesting targeted optimization for complex reasoning tasks rather than general text generation. This specialization could prove particularly valuable for applications requiring logical deduction, mathematical reasoning, or multi-step problem solving.

Implications for AI Development

The release of the Kimi-K2.5 Eagle head on Hugging Face makes this advanced optimization technique immediately accessible to the broader AI community. Hugging Face's platform serves as a central hub for machine learning models and tools, meaning developers can integrate this acceleration technology with relative ease into their existing workflows.

The release comes as the industry increasingly focuses on inference efficiency alongside model capabilities. With growing concerns about computational costs and environmental impact, techniques like speculative decoding offer one route to faster and potentially cheaper deployment of existing models.

Practical Applications and Use Cases

While the announcement doesn't specify exact performance metrics, the promise of "blazing fast inference" suggests significant practical benefits for:

- Real-time AI assistants requiring quick responses to complex queries

- Scientific research tools that perform multi-step reasoning

- Educational applications providing instant feedback on problem-solving

- Enterprise decision support systems analyzing complex scenarios

The targeted nature of this optimization—specifically for reasoning models—indicates NVIDIA's recognition of the growing importance of reasoning capabilities in AI systems, particularly as models move beyond simple pattern matching toward more sophisticated cognitive tasks.

The Broader Context of Inference Optimization

NVIDIA's release reflects a broader industry trend toward inference optimization. As large language models grow increasingly capable, their computational demands have created bottlenecks for practical deployment. Speculative decoding represents one of several approaches being developed to address this challenge, alongside model quantization, distillation, and architectural innovations.

What distinguishes the Kimi-K2.5 Eagle head is its specific tailoring to reasoning models and its availability through Hugging Face. This combination of specialization and accessibility could accelerate adoption across research institutions and commercial applications alike.

Looking Forward

The release of the Kimi-K2.5 Eagle head signals NVIDIA's continued investment in inference optimization technologies. As AI models become more integrated into daily applications—from customer service to creative tools to analytical platforms—efficiency improvements like those promised by Eagle-3 speculative decoding will become increasingly critical.

Developers working with Moonshot's reasoning models now have a powerful new tool to enhance performance, potentially opening doors to applications previously limited by latency constraints. As the AI community experiments with this technology, we can expect further refinements and potentially similar optimizations for other model architectures.

Source: NVIDIA's release of the Kimi-K2.5 Eagle head on Hugging Face as reported by HuggingPapers on X/Twitter.