A new technical paper from PayPal's AI research team demonstrates how speculative decoding—specifically the EAGLE3 algorithm—can dramatically accelerate and reduce the cost of running their production Commerce Agent. The study, published on arXiv on March 27, 2026, shows that by applying this inference-time optimization to their fine-tuned llama3.1-nemotron-nano-8B-v1 model, they achieved throughput improvements of 22-49% and latency reductions of 18-33% with zero additional hardware. Most strikingly, the optimized system on a single NVIDIA H100 GPU matched or exceeded the performance of NVIDIA's NIM inference microservice running on two H100s, enabling a 50% reduction in GPU cost for the same workload.

Key Takeaways

- PayPal engineers applied EAGLE3 speculative decoding to their fine-tuned 8B-parameter commerce agent, achieving up to 49% higher throughput and 33% lower latency.

- This allowed a single H100 GPU to match the performance of two H100s running NVIDIA NIM, cutting inference hardware cost by 50%.

What PayPal Built and Tested

PayPal's Commerce Agent is a specialized large language model that handles customer service and transaction-related queries. It is built on a fine-tuned version of NVIDIA's 8-billion-parameter Llama3.1-Nemotron model, which NVIDIA derived from Meta's Llama 3.1. This prior fine-tuning effort, referred to internally as NEMO-4-PAYPAL, focused on reducing latency and cost through domain-specific adaptation.

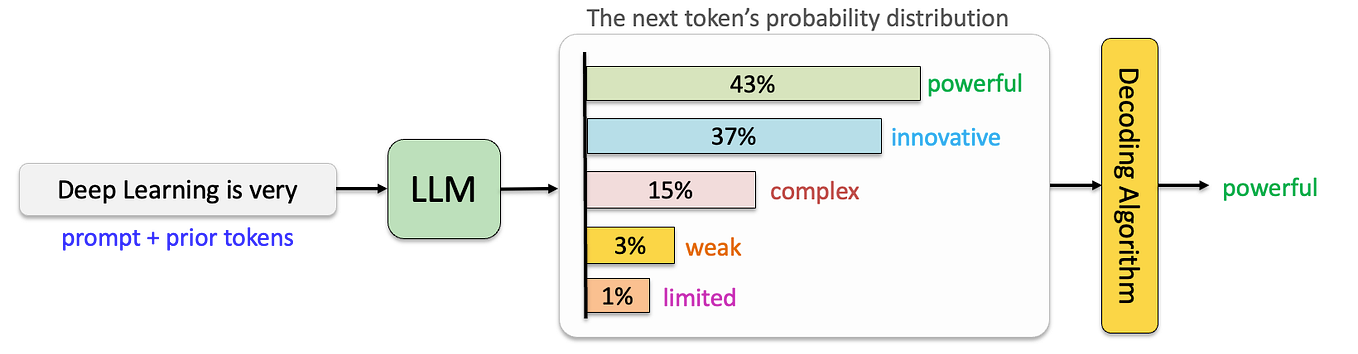

The new research adds a speculative decoding layer using the EAGLE3 algorithm. Speculative decoding is an inference acceleration technique in which a smaller, faster "draft" model cheaply proposes several future tokens. These speculative tokens are then validated together, in a single forward pass, by the larger, slower "target" model (the main Commerce Agent). Only accepted tokens are kept, leading to potential speedups if the draft model is sufficiently accurate and fast.

In this setup:

- Target Model: The fine-tuned `llama3.1-nemotron-nano-8B-v1` Commerce Agent.

- Draft Model: An EAGLE3 draft head. EAGLE3 is a lightweight, lossless method that attaches a small trained head to the target model rather than running a full, separate draft model.

- Benchmark Framework: Tests were run using the `vLLM` inference engine and compared against NVIDIA NIM (NVIDIA Inference Microservice), a proprietary, optimized serving stack.

- Hardware: Identical configurations with 2x NVIDIA H100 GPUs.

- Test Matrix: 40 configurations varying speculative token count (`gamma=3`, `gamma=5`), request concurrency (1 to 32), and sampling temperature (0 for deterministic, 0.5 for creative).

Key Results: The Numbers That Matter

The empirical study yielded clear, quantifiable results that translate directly to production economics.

| Metric | Condition | Result | Implication |
| --- | --- | --- | --- |
| Throughput Increase | gamma=3, optimal concurrency | 22-49% | Serves up to roughly 1.5x as many requests per second |
| Latency Reduction | gamma=3, optimal concurrency | 18-33% | End-users get responses faster |
| Acceptance Rate | gamma=3 across all tests | ~35.5% | Stable, predictable performance gain |
| Acceptance Rate | gamma=5 | ~25% | Diminishing returns with longer speculation |
| Output Quality | LLM-as-Judge evaluation | Fully preserved | No trade-off between speed and accuracy |
| Hardware Efficiency | EAGLE3 on 1x H100 vs. NIM on 2x H100 | Matches or exceeds | Enables 50% GPU cost reduction |

The finding that gamma=3 (speculating 3 tokens ahead) provided the best balance is critical. The stable ~35.5% acceptance rate means the speedup is reliable across different load conditions (concurrency) and task types (temperature). Attempting to speculate further ahead (gamma=5) lowered the acceptance rate to ~25%, making the extra computational overhead less worthwhile.
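To make the gamma trade-off concrete, here is a back-of-envelope sketch. It rests on two assumptions that are not confirmed by the article: that "acceptance rate" means accepted draft tokens divided by proposed draft tokens, and that each draft token costs a fixed fraction of a target forward pass (the `draft_cost` parameter, a purely hypothetical value). It is an idealized model, not a reproduction of PayPal's measurements.

```python
def tokens_per_target_pass(acceptance_rate: float, gamma: int) -> float:
    """Mean tokens emitted per target forward pass.

    Assumption: acceptance_rate = accepted draft tokens / proposed draft
    tokens. The target always contributes one token of its own per pass.
    """
    return 1.0 + acceptance_rate * gamma


def idealized_speedup(acceptance_rate: float, gamma: int, draft_cost: float) -> float:
    """Speedup over plain decoding under a simple cost model.

    draft_cost is hypothetical: the cost of one draft token expressed as a
    fraction of one target forward pass. Verification costs one target pass.
    """
    cost_per_step = 1.0 + gamma * draft_cost
    return tokens_per_target_pass(acceptance_rate, gamma) / cost_per_step


if __name__ == "__main__":
    for gamma, rate in [(3, 0.355), (5, 0.25)]:
        print(gamma,
              round(tokens_per_target_pass(rate, gamma), 3),
              round(idealized_speedup(rate, gamma, draft_cost=0.1), 3))
```

Under these assumptions gamma=5 emits slightly more tokens per target pass (about 2.25 versus 2.065) but pays for two extra draft tokens on every step, so it only comes out ahead when drafting is very cheap. The measured 22-49% throughput gains are lower than this idealized estimate, which ignores batching, scheduling, and verification overhead.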

The most significant business result is the direct comparison to NVIDIA NIM. Running their EAGLE3-optimized stack on a single H100 achieved performance on par with the NIM service utilizing two H100s. For a global service like PayPal's Commerce Agent, this represents a massive potential reduction in inference infrastructure cost.
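The 50% figure follows from simple arithmetic once throughput is held equal. The sketch below shows the calculation; the hourly GPU price and request rate are placeholder values chosen for illustration, not numbers from the paper.

```python
def gpu_cost_per_request(num_gpus: int, gpu_hour_price: float,
                         requests_per_second: float) -> float:
    """Hardware cost of serving one request, in the unit of gpu_hour_price."""
    requests_per_hour = requests_per_second * 3600
    return num_gpus * gpu_hour_price / requests_per_hour


if __name__ == "__main__":
    price = 3.0   # hypothetical price per GPU-hour
    rps = 10.0    # hypothetical throughput, assumed equal for both setups
    eagle3_one_gpu = gpu_cost_per_request(1, price, rps)
    nim_two_gpus = gpu_cost_per_request(2, price, rps)
    print(eagle3_one_gpu / nim_two_gpus)  # ratio of per-request cost
```

If the single-GPU stack actually exceeds the two-GPU baseline's throughput, as the article says it does in some configurations, the per-request saving would be larger than half.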

How EAGLE3 Speculative Decoding Works

Speculative decoding tackles the core bottleneck of autoregressive LLM inference: generating tokens one-by-one, where each step requires a full model forward pass that is memory-bandwidth bound. EAGLE3 is a recent, efficient variant of this technique.

The intuition is simple: instead of waiting for the large target model to generate each token sequentially, use a cheap process to "guess" the next few tokens. Then, ask the large model to check all those guesses at once. If the guesses are good, you've gotten multiple tokens for the cost of roughly one forward pass.
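The guess-then-check loop can be sketched in a few lines. This is a toy, greedy-decoding illustration with made-up stand-in models (`toy_target`, `toy_draft`); it is not EAGLE3 itself and not PayPal's or vLLM's implementation. A real engine verifies all guesses in one batched forward pass, which this sketch only imitates with repeated calls.

```python
from typing import Callable, List, Tuple

Token = int
NextToken = Callable[[List[Token]], Token]  # greedy next-token function


def speculative_step(target: NextToken, draft: NextToken,
                     context: List[Token], gamma: int) -> List[Token]:
    """Run one draft-then-verify step and return the tokens to append."""
    # Draft phase: the cheap model guesses gamma tokens one after another.
    guesses: List[Token] = []
    for _ in range(gamma):
        guesses.append(draft(context + guesses))

    # Verify phase: compare each guess with what the target would emit.
    accepted: List[Token] = []
    for guess in guesses:
        expected = target(context + accepted)
        if guess != expected:
            # First mismatch: keep the target's own token and stop.
            accepted.append(expected)
            return accepted
        accepted.append(guess)

    # Every guess matched, so the target's next token is a bonus.
    accepted.append(target(context + accepted))
    return accepted


def generate(target: NextToken, draft: NextToken, prompt: List[Token],
             max_new_tokens: int, gamma: int = 3) -> Tuple[List[Token], int]:
    """Generate tokens and report how many verify steps were needed."""
    out = list(prompt)
    steps = 0
    while len(out) - len(prompt) < max_new_tokens:
        out.extend(speculative_step(target, draft, out, gamma))
        steps += 1
    return out[:len(prompt) + max_new_tokens], steps


def toy_target(ctx: List[Token]) -> Token:
    """Stand-in target model: counts upward modulo 10."""
    return (ctx[-1] + 1) % 10


def toy_draft(ctx: List[Token]) -> Token:
    """Stand-in draft model: agrees with the target except after token 4."""
    return 0 if ctx[-1] == 4 else (ctx[-1] + 1) % 10


if __name__ == "__main__":
    tokens, steps = generate(toy_target, toy_draft, [0], max_new_tokens=8)
    print(tokens, steps)  # [0, 1, 2, 3, 4, 5, 6, 7, 8] 3
```

The output is exactly what the target alone would produce, but it takes three verify steps instead of eight sequential target calls. The saving shrinks as the draft's guesses get worse, which is why acceptance rate matters so much.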

EAGLE3's technical distinction is that it is lossless and needs only a lightweight draft head rather than a full, separate draft model. The head is a small network trained to work alongside the target model, and it reuses the target's own internal computation: hidden states taken from several depths of the target (low, middle, and high layers) are fused and fed to the head, which predicts the next few tokens with minimal computation. The target model's weights are left unchanged. This makes the approach particularly attractive for production systems where maintaining model fidelity and simplifying the deployment stack are priorities.
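The EAGLE-3 paper describes combining hidden states from several depths of the target model into a single input for drafting. Below is a minimal, pure-Python sketch of that fusion step only: three feature vectors are concatenated and projected back to the hidden size. All names are hypothetical and the projection weights are placeholders that simply average the inputs; this is an illustration of the idea, not the actual EAGLE3 or vLLM code.

```python
from typing import List

Vector = List[float]


def fuse_features(low: Vector, mid: Vector, high: Vector,
                  weight: List[Vector]) -> Vector:
    """Concatenate three layer features and project them to hidden size.

    weight has shape (hidden, 3 * hidden), stored as a list of rows.
    """
    concat = low + mid + high  # length 3 * hidden
    return [sum(w * x for w, x in zip(row, concat)) for row in weight]


def averaging_weight(hidden: int) -> List[Vector]:
    """Placeholder projection: averages the three features per dimension."""
    third = 1.0 / 3.0
    return [[third if col % hidden == row else 0.0
             for col in range(3 * hidden)]
            for row in range(hidden)]


if __name__ == "__main__":
    low, mid, high = [1.0, 2.0], [4.0, 5.0], [7.0, 8.0]
    fused = fuse_features(low, mid, high, averaging_weight(2))
    print(fused)  # one fused vector with the same length as each input
```

The fused vector is what the drafting step would consume in place of a single top-layer feature. Using features from more than one depth gives the drafter more information about the target's state at little extra cost, since the target has already computed them.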

In PayPal's implementation, the vLLM engine was modified to integrate EAGLE3, allowing it to manage the orchestration between the draft phase and the verification phase on the target 8B model.

Why This Matters for Production AI

This study is not about a theoretical benchmark; it's a production case study from a hyperscale financial platform. The results provide a blueprint for any company running fine-tuned LLMs in cost-sensitive, latency-critical applications.

- Cost is King: The 50% potential GPU cost reduction is the headline. As discussed in our recent coverage of SemiAnalysis's report on disaggregated inference, customer feedback is driving the industry toward more efficient inference architectures. PayPal's work is a direct example of this trend, achieving better efficiency through software optimization on existing hardware.

- Fine-Tuning + Inference Optimization is a Powerful Stack: The research builds on their prior `NEMO-4-PAYPAL` fine-tuning work. This highlights a two-step strategy: first, specialize the model for the domain to improve accuracy and reduce prompt length; second, apply inference-time optimizations like speculative decoding to maximize hardware utilization. The combination is multiplicative.

- The NVIDIA Ecosystem Play: The study uses NVIDIA's Nemotron-based model and H100 hardware, but benchmarks against NVIDIA's own NIM service. It shows that open-source inference optimizations (`vLLM` + EAGLE3) can compete with and even surpass proprietary vendor stacks in specific scenarios, giving engineering teams leverage and optionality.

gentic.news Analysis

This PayPal study is a significant data point in several ongoing industry narratives tracked in our knowledge graph. First, it underscores the intense focus on inference efficiency, which has become the primary battleground for AI economics in 2026. The quest to do more with less hardware is driving innovation in software, as we've seen with developments like the Parakeet v3 for Apple ANE and now EAGLE3 for GPUs.

Second, it highlights the maturing relationship between foundation model providers and enterprise practitioners. PayPal is using a model from NVIDIA's Nemotron family (stemming from the Meta-NVIDIA partnership reflected in our entity graph) but is not treating it as a black-box API. They are fine-tuning it and radically optimizing its inference, demonstrating a sophisticated, in-house MLOps capability. This moves beyond simple consumption to deep technical control, a pattern we expect to see more among leading tech enterprises.

Finally, the paper's timing is notable. Published just days after NVIDIA and Google Cloud expanded their partnership for agentic AI infrastructure, it serves as a real-world example of the efficiency gains driving that market. While NVIDIA promotes its full-stack solutions (like NIM), the open-source ecosystem (vLLM, EAGLE3) is advancing rapidly, creating a competitive landscape for inference software. PayPal's results indicate that these open-source methods can be production-ready and can deliver better cost-performance for tailored models, at least for this workload, potentially influencing how other enterprises architect their AI deployments.

Frequently Asked Questions

What is speculative decoding in simple terms?

Think of it like a human translator skimming a sentence ahead before speaking. Instead of translating word-by-word and pausing each time (standard LLM inference), they quickly guess the next few words (draft phase), then verify the whole chunk is correct in one go (verification phase). If the guess is good, they save time. EAGLE3 is a clever way for the AI model to "skim" using its own early internal calculations.

Does EAGLE3 change the quality of the AI's answers?

No. According to PayPal's evaluation using an LLM-as-Judge method, output quality is fully preserved. The process is lossless because the large target model ultimately verifies every token: with deterministic decoding (temperature 0) the final output matches what the standard, slower method would have produced, and with sampling the outputs follow the same probability distribution. The gain is purely in computational efficiency.
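For sampled decoding, "lossless" is usually taken to mean that the output distribution is unchanged. The sketch below shows the standard speculative sampling acceptance rule from the speculative decoding literature: accept a drafted token with probability min(1, p/q), otherwise resample from the normalized positive part of p - q. The article does not say which verification rule PayPal's stack uses, so treat this as a generic illustration with hypothetical function names.

```python
import random
from typing import Dict

Dist = Dict[int, float]  # token id -> probability


def residual_distribution(p: Dist, q: Dist) -> Dist:
    """Normalized max(0, p - q), used to resample after a rejection."""
    raw = {t: max(0.0, p.get(t, 0.0) - q.get(t, 0.0)) for t in p}
    total = sum(raw.values())
    return {t: v / total for t, v in raw.items() if v > 0.0}


def verify_draft_token(token: int, p: Dist, q: Dist,
                       rng: random.Random) -> int:
    """Return the token to emit at this position.

    p is the target model's distribution; q is the draft distribution that
    `token` was sampled from, so q[token] > 0.
    """
    accept_prob = min(1.0, p.get(token, 0.0) / q[token])
    if rng.random() < accept_prob:
        return token  # draft token accepted as-is
    # Rejected: draw a replacement from the part of p that q under-covers.
    residual = residual_distribution(p, q)
    tokens = list(residual)
    return rng.choices(tokens, weights=[residual[t] for t in tokens])[0]


if __name__ == "__main__":
    rng = random.Random(0)
    p = {0: 0.7, 1: 0.3}  # toy target distribution
    q = {0: 0.4, 1: 0.6}  # toy draft distribution
    counts = {0: 0, 1: 0}
    for _ in range(20000):
        drafted = rng.choices([0, 1], weights=[q[0], q[1]])[0]
        counts[verify_draft_token(drafted, p, q, rng)] += 1
    print({t: c / 20000 for t, c in counts.items()})  # close to p
```

At temperature 0 the target distribution puts all its mass on one token, so the rule reduces to a plain equality check: a drafted token is kept only if it is the token the target would have chosen.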

Why did PayPal compare their system to NVIDIA NIM?

NVIDIA NIM is a standardized, optimized microservice for deploying AI models, representing a state-of-the-art commercial baseline. By showing their EAGLE3-optimized system on one H100 can match NIM on two H100s, PayPal provides a concrete, business-relevant benchmark: they can achieve the same performance at half the GPU cost, which is a compelling argument for their engineering approach.

Can I use EAGLE3 with any LLM?

The EAGLE3 method is model-agnostic in principle, as it builds on the internal computations of the target model itself. In practice it has two requirements: a draft head trained for the specific target model, and integration into the inference server (like vLLM). The technique is most effective with autoregressive decoder-only models (like Llama, Nemotron, GPT). The exact speedup will depend on model architecture, size, and the specific task.