Key Takeaways

- NVIDIA open-sourced Kimono, a motion diffusion model for humanoid robots, trained on 700 hours of motion capture data.

- It generates 3D human and robot motions from text prompts, supports keyframe and end-effector control, and produces motions for the Unitree G1 robot.

What Happened

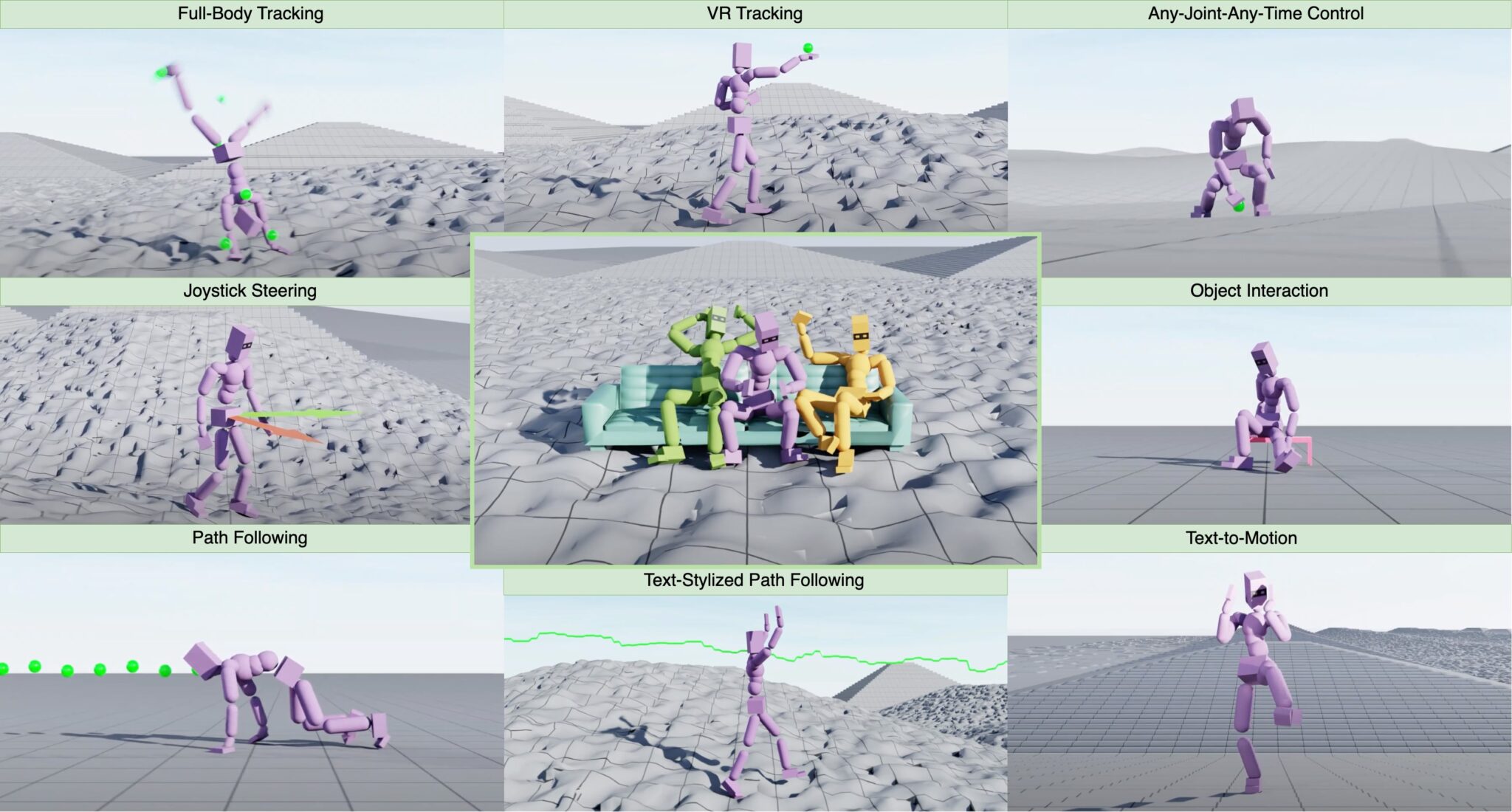

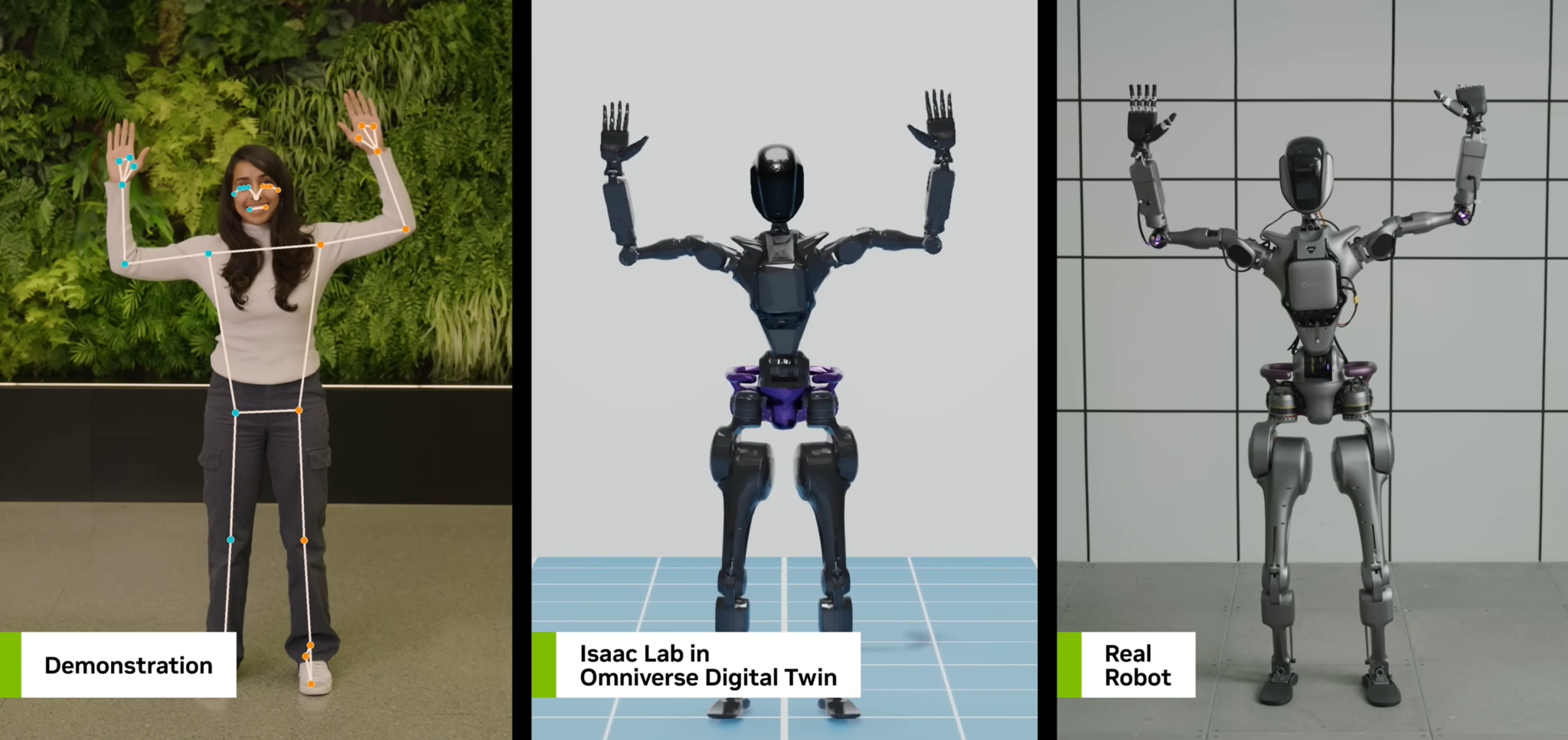

NVIDIA released Kimono, a motion diffusion model for humanoid robots, trained on 700 hours of motion capture data. The model generates high-quality 3D human and robot motions from text prompts and can be steered with full-body pose keyframes, end-effector positions and rotations, and 2D paths or waypoints.
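The 2D path/waypoint control mentioned above raises a practical question: a handful of waypoints has to become one root target per frame. The sketch below shows one simple way to do that, resampling the waypoint polyline at constant speed. It is an illustrative assumption, not Kimono's API or preprocessing; the function name `densify_path`, the constant-speed assumption, and the (x, y) ground-plane convention are all hypothetical.

```python
import math
from typing import List, Tuple

Point = Tuple[float, float]


def densify_path(waypoints: List[Point], num_frames: int) -> List[Point]:
    """Resample a 2D waypoint polyline into num_frames root positions.

    Hypothetical preprocessing step: assumes the root moves at constant
    speed along the path, starting at the first waypoint and ending at
    the last.
    """
    if len(waypoints) < 2 or num_frames < 2:
        raise ValueError("need at least two waypoints and two frames")

    # Cumulative arc length at each waypoint.
    cum = [0.0]
    for (x0, y0), (x1, y1) in zip(waypoints, waypoints[1:]):
        cum.append(cum[-1] + math.hypot(x1 - x0, y1 - y0))
    total = cum[-1]

    out: List[Point] = []
    seg = 0
    for f in range(num_frames):
        s = total * f / (num_frames - 1)  # arc length to reach at frame f
        # Advance to the segment that contains arc length s.
        while seg < len(cum) - 2 and cum[seg + 1] < s:
            seg += 1
        seg_len = cum[seg + 1] - cum[seg]
        u = 0.0 if seg_len == 0 else (s - cum[seg]) / seg_len
        (x0, y0), (x1, y1) = waypoints[seg], waypoints[seg + 1]
        out.append((x0 + u * (x1 - x0), y0 + u * (y1 - y0)))
    return out
```

For example, `densify_path([(0, 0), (1, 0), (1, 1)], 5)` returns `[(0.0, 0.0), (0.5, 0.0), (1.0, 0.0), (1.0, 0.5), (1.0, 1.0)]`: five evenly spaced points along the L-shaped path.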

The model works on human skeletons and the Unitree G1 robot. Outputs can be plugged directly into MuJoCo or retargeted to other robots using GMR. A web-based interactive demo with a timeline editor is available, and inference runs locally with ~17GB VRAM. The model is open-sourced under Apache 2.0.
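Retargeting maps a motion from one body onto another with different proportions and joint limits. The toy sketch below shows two issues a retargeter must handle: scaling the root trajectory to the new body size and keeping joint angles within the target robot's limits. It is hypothetical (`naive_retarget`, the height-ratio scaling, and the per-joint angle dictionaries are assumptions) and much cruder than a dedicated tool like GMR; practical retargeters generally solve inverse kinematics rather than copying joint angles across.

```python
from typing import Dict, List, Tuple

Vec3 = Tuple[float, float, float]


def naive_retarget(
    root_xyz: List[Vec3],
    joint_angles: List[Dict[str, float]],
    source_height: float,
    target_height: float,
    joint_limits: Dict[str, Tuple[float, float]],
) -> Tuple[List[Vec3], List[Dict[str, float]]]:
    """Toy retargeting step (hypothetical, not GMR):
    1. scale the root trajectory by the ratio of body heights;
    2. keep only joints the target robot has, clamped to its limits (radians).
    """
    if source_height <= 0:
        raise ValueError("source_height must be positive")
    scale = target_height / source_height
    new_root = [(x * scale, y * scale, z * scale) for x, y, z in root_xyz]

    new_angles = []
    for frame in joint_angles:
        clamped = {}
        for name, angle in frame.items():
            if name not in joint_limits:
                continue  # no counterpart joint on the target robot
            lower, upper = joint_limits[name]
            clamped[name] = min(max(angle, lower), upper)
        new_angles.append(clamped)
    return new_root, new_angles
```

With `source_height=2.0` and `target_height=1.0`, a root position of `(2.0, 0.0, 1.0)` becomes `(1.0, 0.0, 0.5)`, and a knee angle of 2.5 rad with limits `(0.0, 2.0)` is clamped to 2.0. Copying angles this way assumes both bodies share joint names, axes, and zero poses, which is rarely true in practice; that mismatch is what IK-based retargeting resolves.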

Technical Details

- Training data: 700 hours of motion capture data

- Input: Text prompts, keyframes, end-effector positions, 2D paths

- Output: 3D human/robot motions

- Hardware requirement: ~17GB VRAM for inference

- License: Apache 2.0

How It Compares

Kimono stands out for its focus on humanoid robots, specifically the Unitree G1, and for its open-source release under Apache 2.0. Other motion generation models (e.g., from Google DeepMind and Meta) often target human animation or narrower tasks. Kimono's combination of text-to-motion generation, keyframe and end-effector control, and robot retargeting in a single openly licensed model is uncommon.

What to Watch

- Real-world deployment: How well does Kimono generalize to unseen robots and environments?

- Limitations: The model needs ~17GB of VRAM, which limits accessibility, and real-time performance on embedded hardware has not been addressed.

- Adoption: Will the robotics community standardize on Kimono for motion generation?

Frequently Asked Questions

What is Kimono?

Kimono is a motion diffusion model from NVIDIA that generates 3D human and robot motions from text prompts, trained on 700 hours of motion capture data.

How do I control the motion output?

You can control motion via full-body pose keyframes, end-effector positions/rotations, and 2D paths/waypoints.

What robots does Kimono support?

It works on human skeletons and the Unitree G1 robot, with output retargetable to other robots using GMR.

Is Kimono open source?

Yes, it's released under Apache 2.0. A web demo is available, and inference runs locally with ~17GB VRAM.

gentic.news Analysis

NVIDIA's Kimono is a strategic move to commoditize motion generation for humanoid robots, a space where proprietary solutions have dominated. By open-sourcing it under Apache 2.0, NVIDIA aims to set a default standard, similar to its strategy with Isaac Sim and Omniverse. The choice of the Unitree G1 as a reference robot is telling: Unitree is a rising competitor to Boston Dynamics and Figure, and NVIDIA's support could accelerate G1 adoption.

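The 700-hour mocap dataset is substantial but not unprecedented; Google DeepMind's RT-2, for example, drew on far more data by co-training on web-scale vision-language data alongside robot demonstrations, although hours of mocap and web data are not directly comparable. Diffusion-based motion generation is also not new in itself (the Human Motion Diffusion Model, MDM, applied it to text-to-motion in 2022). What stands out is the fine-grained control via keyframes and end-effector constraints, a step beyond text-only models, paired with direct output for a real humanoid robot.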
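The article does not say how Kimono enforces keyframes internally; a model can, for instance, be conditioned on constraints directly during training. For intuition, the sketch below shows one well-known training-free alternative, replacement (imputation) during sampling, similar in spirit to the inpainting-style motion editing in the Human Motion Diffusion Model (MDM): after every denoising step, the constrained frames are overwritten with the keyframe values noised to the current noise level, so they come out exact at the end. Everything here is hypothetical: `toy_denoiser` is a moving-average stand-in for a trained network, the motion is a single 1D curve, and the schedule is a simple cosine-shaped one.

```python
import math
import random
from typing import Dict, List


def toy_denoiser(x: List[float], t: int) -> List[float]:
    """Hypothetical stand-in for a trained network that predicts the clean
    signal from a noisy one. Here it is just a 3-tap moving average and
    ignores the step t; it is NOT Kimono's model."""
    n = len(x)
    return [(x[max(i - 1, 0)] + x[i] + x[min(i + 1, n - 1)]) / 3 for i in range(n)]


def sample_with_keyframes(num_frames: int, keyframes: Dict[int, float],
                          steps: int = 50, seed: int = 0) -> List[float]:
    """Deterministic DDIM-style sampling (eta = 0) of a 1D motion curve, e.g.
    one joint angle per frame, with keyframes enforced by replacement."""
    rng = random.Random(seed)
    # Cosine-shaped cumulative schedule: alpha_bar decreases from ~1 toward ~0.
    alpha_bar = [math.cos(0.5 * math.pi * (t + 1) / (steps + 1)) ** 2
                 for t in range(steps)]

    x = [rng.gauss(0.0, 1.0) for _ in range(num_frames)]  # start from noise
    for t in reversed(range(steps)):
        ab_t = alpha_bar[t]
        ab_prev = alpha_bar[t - 1] if t > 0 else 1.0
        x0_hat = toy_denoiser(x, t)
        # Noise implied by the current sample and the predicted clean signal.
        eps_hat = [(xi - math.sqrt(ab_t) * x0i) / math.sqrt(1.0 - ab_t)
                   for xi, x0i in zip(x, x0_hat)]
        # DDIM step to the previous (less noisy) level without fresh noise.
        x = [math.sqrt(ab_prev) * x0i + math.sqrt(1.0 - ab_prev) * ei
             for x0i, ei in zip(x0_hat, eps_hat)]
        # Replacement: overwrite keyframes with their targets noised to level
        # ab_prev; at the last step ab_prev == 1.0, so they become exact.
        for frame, value in keyframes.items():
            noise = rng.gauss(0.0, 1.0)
            x[frame] = (math.sqrt(ab_prev) * value
                        + math.sqrt(1.0 - ab_prev) * noise)
    return x


if __name__ == "__main__":
    curve = sample_with_keyframes(30, {0: 0.0, 15: 1.0, 29: -0.5})
    print([round(v, 2) for v in curve])
```

Because the pinned frames are re-noised to the matching level rather than pasted in clean, the unconstrained frames can adjust around them during sampling; with the toy denoiser, though, the in-between frames are only smoothed, not realistic motion.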

However, the ~17GB VRAM requirement limits local inference to high-end GPUs (e.g., RTX 4090, A6000, H100). Robot controllers often run on embedded modules with considerably less memory, so NVIDIA will likely need to provide quantization or distillation recipes for edge deployment. The MuJoCo integration is practical but does not address the sim-to-real gap.
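To put the quantization point in numbers: if the ~17GB figure reflects 16-bit weights (the article does not state the precision), an optimistic bound on the savings comes from assuming the entire footprint scales with bits per weight. The helper below is a back-of-envelope sketch under that assumption; `scaled_footprint_gb` is a hypothetical name, and real savings are smaller because activations, framework overhead, and layers kept at higher precision do not shrink.

```python
def scaled_footprint_gb(measured_gb: float, measured_bits: int, target_bits: int) -> float:
    """Optimistic estimate of the memory footprint after re-quantizing weights.

    Assumes (unrealistically) that the whole footprint is proportional to
    bits per weight, so the result is a lower bound on real memory use.
    """
    if measured_bits <= 0 or target_bits <= 0:
        raise ValueError("bit widths must be positive")
    return measured_gb * target_bits / measured_bits


if __name__ == "__main__":
    # Hypothetical: treat the reported ~17GB as a 16-bit baseline.
    for bits in (16, 8, 4):
        print(f"{bits}-bit: ~{scaled_footprint_gb(17.0, 16, bits):.2f} GB")
    # 16-bit: ~17.00 GB
    # 8-bit: ~8.50 GB
    # 4-bit: ~4.25 GB
```

Distillation into a smaller network attacks the same problem from the other side, by reducing the parameter count rather than the bits per parameter.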

This follows NVIDIA's broader push into robotics: the Isaac platform, Jetson hardware, and partnerships with Amazon Robotics and BMW. Kimono fills a specific gap, motion generation, that complements NVIDIA's existing perception and manipulation tools. The timing is noteworthy: humanoid robot startups raised over $1.5B in 2025, and the field is hungry for reusable software components.