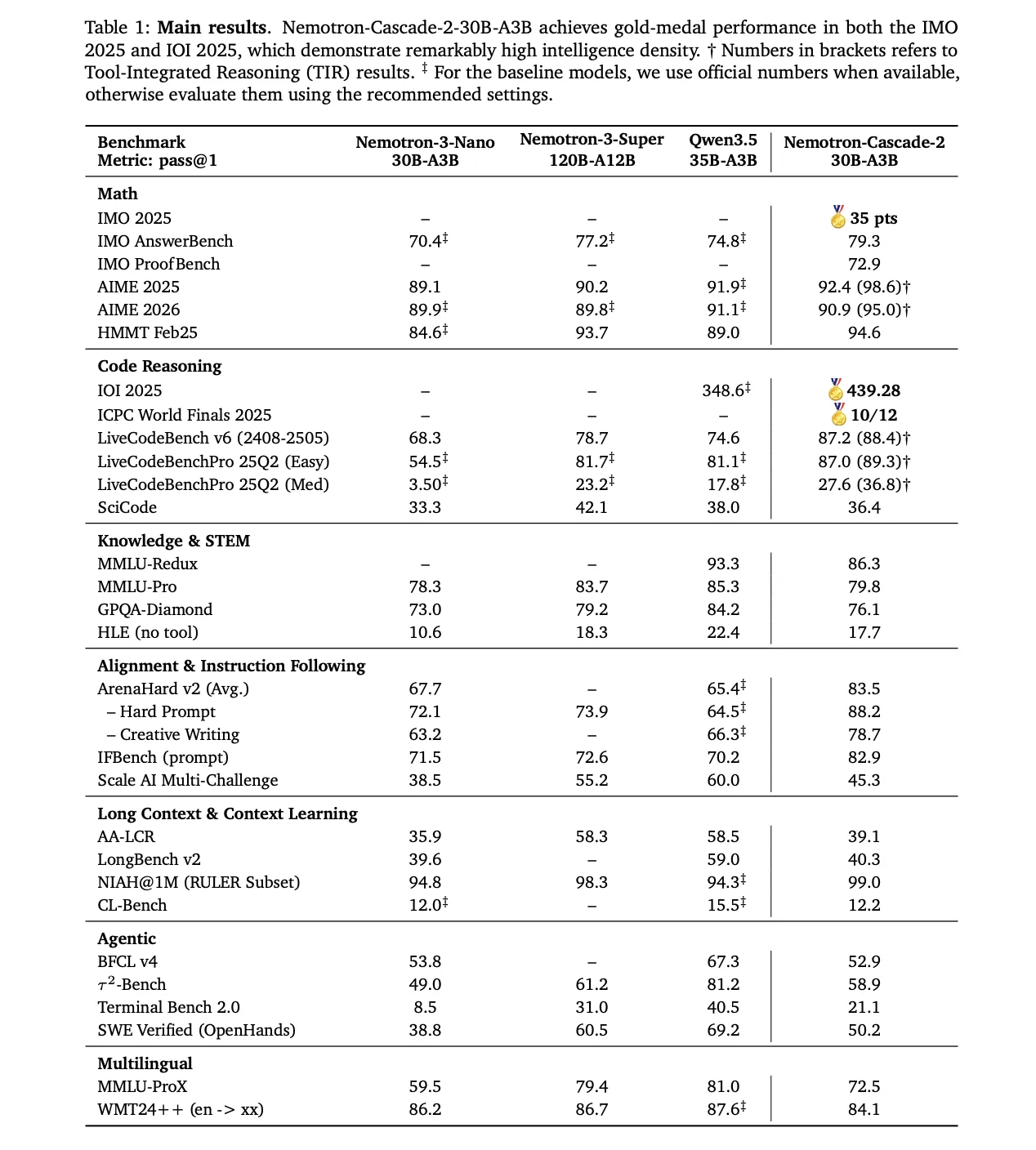

NVIDIA has released Nemotron-Cascade 2, an open-weight 30B Mixture-of-Experts (MoE) model with 3B activated parameters. The model is designed to maximize "intelligence density," delivering advanced reasoning capabilities at a fraction of the parameter scale of frontier models. It is the second open-weight LLM to achieve Gold Medal-level performance in the 2025 International Mathematical Olympiad (IMO), the International Olympiad in Informatics (IOI), and the ICPC World Finals.

Targeted Performance and Strategic Trade-offs

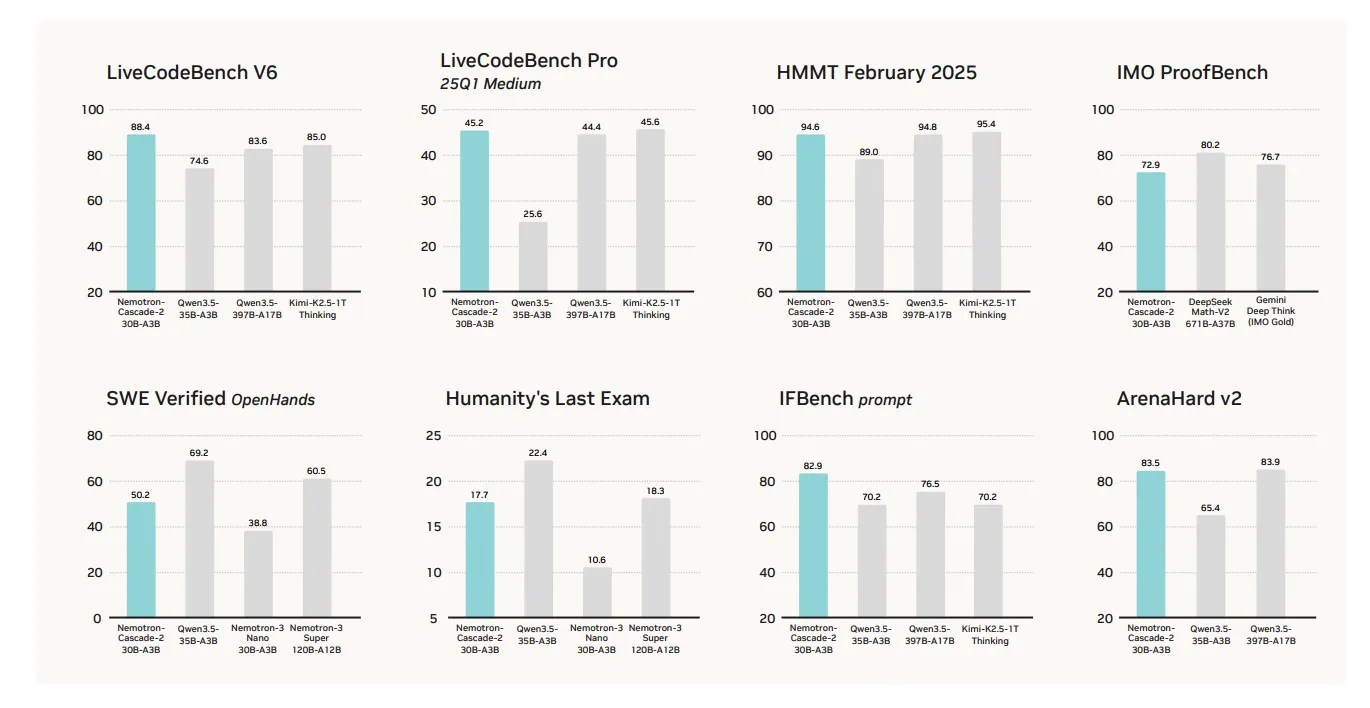

The primary value proposition of Nemotron-Cascade 2 is its specialized performance in mathematical reasoning, coding, alignment, and instruction following. The source material notes it is "surely not a 'blanket win' across all benchmarks," but excels in targeted categories.

Key benchmark comparisons against Qwen3.5-35B-A3B (released Feb 2026) and the larger Nemotron-3-Super-120B-A12B:

Mathematical Reasoning AIME 2025 92.4 91.9 HMMT Feb25 94.6 89.0 Coding LiveCodeBench v6 87.2 74.6 IOI 2025 439.28 348.6+ Alignment & Instruction ArenaHard v2 83.5 65.4+ IFBench 82.9 70.2Technical Architecture: Cascade RL and Multi-domain On-Policy Distillation

The model's reasoning capabilities stem from a post-training pipeline starting from the Nemotron-3-Nano-30B-A3B-Base model.

1. Supervised Fine-Tuning (SFT)

During SFT, NVIDIA's research team utilized a meticulously curated dataset where samples were packed into sequences of up to 256K tokens. The dataset included:

- 1.9M Python reasoning traces and 1.3M Python tool-calling samples for competitive coding.

- 816K samples for mathematical natural language proofs.

- A specialized Software Engineering (SWE) blend consisting of 125K agentic and 389K agentless samples.

2. Cascade Reinforcement Learning

Following SFT, the model underwent Cascade RL, which applies sequential, domain-wise training. This prevents catastrophic forgetting by allowing hyperparameters to be tailored to specific domains without destabilizing others. The pipeline includes stages for instruction-following (IF-RL), multi-domain RL, RLHF, long-context RL, and specialized Code and SWE RL.

3. Multi-Domain On-Policy Distillation (MOPD)

A critical innovation in Nemotron-Cascade 2 is the integration of MOPD during the Cascade RL process. MOPD assembly uses the best-performing intermediate 'teacher' models—already derived from the same SFT initialization—to provide a dense token-level distillation advantage. This advantage is defined mathematically as:

$$a_{t}^{MOPD}=log~\pi^{domain_{t}}(y_{t}|s_{t})-log~\pi^{train}(y_{t}|s_{t})$$

The research team's approach leverages these intermediate checkpoints to distill knowledge back into the main training model, enhancing performance across the targeted domains without requiring separate, full-sized teacher models.

Availability and Implications

Nemotron-Cascade 2 is released as an open-weight model. Its architecture—a 30B parameter MoE with only 3B active parameters—prioritizes efficiency for inference and deployment while targeting state-of-the-art performance in reasoning-intensive tasks. The model's performance profile suggests it is optimized for applications requiring strong mathematical reasoning, competitive programming, and precise instruction following, rather than general-purpose chat.