When NVIDIA CEO Jensen Huang stood on stage at GTC 2024 and promised "up to 30x" faster LLM inference with the company's new Blackwell architecture, the AI industry took notice. What few anticipated was that this projection would prove remarkably conservative. According to recent real-world testing data, rack-scale NVL72 systems are delivering performance improvements of up to 100x compared to strong Hopper baselines, fundamentally altering the economics and possibilities of AI deployment.

The Blackwell Architecture: More Than Just a Spec Sheet

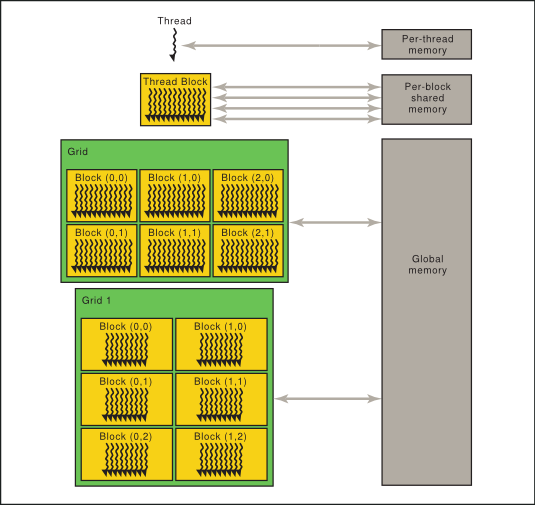

NVIDIA's Blackwell platform represents the company's most significant architectural leap since the introduction of Hopper. While initial announcements focused on theoretical performance metrics, the real-world implementation tells a more dramatic story. The GB200 NVL72 system, which links 72 Blackwell GPUs and 36 Grace CPUs through NVIDIA's NVLink technology, behaves in the company's description like a "single GPU" with 1.4 exaflops of AI performance (at FP4 precision) and 30 terabytes of fast memory.

What makes these systems particularly effective for inference is their ability to keep utilization high across very large models. Traditional scaling approaches often hit diminishing returns as model size increases, because once a model outgrows a single 8-GPU server, traffic between its parts must cross slower network links. In NVL72, all 72 GPUs share one high-bandwidth NVLink domain, which appears to ease these limits and allows large language models and other complex AI systems to be served more efficiently.
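To make the memory argument concrete, the sketch below estimates how much storage a model's weights need at different precisions and compares that with the 640 GB of HBM in one 8-GPU H100 server (8 x 80 GB). The model sizes are hypothetical, only weights are counted (KV cache and activations add more), and NVIDIA's 30 TB figure for NVL72 includes the Grace CPUs' LPDDR5X memory as well as GPU HBM, so this is a rough comparison under stated assumptions rather than a capacity plan.

```python
# Rough sizing sketch (hypothetical model sizes): weight storage only,
# ignoring KV cache, activations, and framework overhead.

def weight_footprint_tb(params_billions: float, bits_per_param: int) -> float:
    """Approximate weight storage in terabytes (1 TB = 1e12 bytes)."""
    total_bytes = params_billions * 1e9 * bits_per_param / 8
    return total_bytes / 1e12


# Aggregate HBM of one 8-GPU H100 server: 8 GPUs x 80 GB each.
H100_SERVER_HBM_TB = 8 * 80e9 / 1e12  # 0.64 TB

if __name__ == "__main__":
    for params_b in (70, 405, 1800):  # hypothetical model sizes, in billions
        for bits in (16, 8, 4):       # 16-bit, FP8, FP4
            tb = weight_footprint_tb(params_b, bits)
            verdict = "fits in" if tb <= H100_SERVER_HBM_TB else "exceeds"
            print(f"{params_b:>5}B @ {bits:>2}-bit: {tb:.3f} TB, {verdict} one 8-GPU H100 server")
```

Even at FP4, a hypothetical 1.8-trillion-parameter model needs about 0.9 TB for weights alone, more than one H100 server holds, which is the kind of model that stands to benefit from a larger NVLink domain.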

Real-World Testing Reveals the True Scale

The gap between promised and delivered performance is striking. A 30x improvement would already have been a significant advance, so the 100x gains observed in real-world testing suggest either that NVIDIA's engineers underestimated their own technology, that they deliberately set conservative expectations, or that the two figures measure different things. NVIDIA's GTC 2024 claim compared GB200 NVL72 against the same number of H100 GPUs on LLM inference, and headline speedups can shift substantially with the model, numeric precision, batch size, latency target, and normalization used. The newer tests compare NVL72 systems against what NVIDIA describes as "strong Hopper baselines", presumably well-optimized H100 configurations that already represent state-of-the-art inference performance.
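One way to read the gap between 30x and 100x is that headline ratios depend heavily on their denominator. The sketch below uses invented numbers, not measurements from any test, to show how a single comparison between a hypothetical 72-GPU rack and a hypothetical 8-GPU Hopper server could be reported as 100x per system, about 11x per GPU, or about 8x per kilowatt. The `System` class and `speedups` function are illustrative names, not part of any benchmark suite.

```python
# Hypothetical illustration: one set of (made-up) measurements produces
# different "speedup" headlines depending on the denominator chosen.
from dataclasses import dataclass


@dataclass
class System:
    name: str
    gpus: int
    tokens_per_sec: float  # aggregate throughput (hypothetical)
    power_kw: float        # aggregate power draw (hypothetical)


def speedups(new: System, base: System) -> dict:
    """Throughput ratio of `new` over `base` under three normalizations."""
    return {
        "per_system": new.tokens_per_sec / base.tokens_per_sec,
        "per_gpu": (new.tokens_per_sec / new.gpus) / (base.tokens_per_sec / base.gpus),
        "per_kw": (new.tokens_per_sec / new.power_kw) / (base.tokens_per_sec / base.power_kw),
    }


if __name__ == "__main__":
    # All figures below are invented for illustration only.
    hopper_server = System("8-GPU Hopper server", 8, 10_000.0, 10.0)
    blackwell_rack = System("72-GPU rack", 72, 1_000_000.0, 120.0)
    for label, ratio in speedups(blackwell_rack, hopper_server).items():
        print(f"{label:>10}: {ratio:.1f}x")
```

The point is not that any published figure was normalized this way, only that comparing two speedup claims requires knowing the workload and the denominator each one uses.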

Industry analysts suggest several factors contributing to this performance leap:

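- Memory bandwidth: each Blackwell GPU offers roughly 8 TB/s of HBM3e bandwidth, versus about 3.35 TB/s for an H100 SXM, and the memory subsystem appears to deliver substantially better real-world performance than the specifications alone would indicate

- Interconnect efficiency: fifth-generation NVLink in NVL72 systems extends a single high-bandwidth GPU domain from the 8 GPUs of a typical Hopper server to 72, minimizing communication overhead when a model is split across GPUs

- Software stack maturity: NVIDIA's inference software, such as TensorRT-LLM, combined with Blackwell's hardware support for FP4 precision, may have reached new levels of efficiency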
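The memory-bandwidth factor can be sized with a common back-of-envelope estimate: when generating tokens one sequence at a time, each token requires reading every active weight from memory once, so peak bandwidth divided by weight bytes gives an upper bound on tokens per second. The sketch below assumes approximate published peak HBM bandwidths (about 3.35 TB/s for H100 SXM, about 8 TB/s for a Blackwell GPU) and a hypothetical 70-billion-parameter dense model; it ignores KV-cache reads, batching, compute limits, and kernel efficiency.

```python
# Back-of-envelope, memory-bandwidth-bound estimate for single-stream decoding:
# each generated token must read every active weight from HBM once.
# Ignores KV-cache reads, batching, compute limits, and kernel efficiency.

def decode_tokens_per_sec_bound(hbm_tb_per_s: float,
                                active_params_billions: float,
                                bits_per_param: int) -> float:
    """Upper bound on tokens/s for one sequence when weight reads dominate."""
    bytes_per_token = active_params_billions * 1e9 * bits_per_param / 8
    return hbm_tb_per_s * 1e12 / bytes_per_token


if __name__ == "__main__":
    ACTIVE_PARAMS_B = 70  # hypothetical dense model size, in billions
    h100_fp8 = decode_tokens_per_sec_bound(3.35, ACTIVE_PARAMS_B, 8)  # approx. H100 SXM peak
    bw_fp8 = decode_tokens_per_sec_bound(8.0, ACTIVE_PARAMS_B, 8)     # approx. Blackwell peak
    bw_fp4 = decode_tokens_per_sec_bound(8.0, ACTIVE_PARAMS_B, 4)
    print(f"H100 @ FP8:      {h100_fp8:.0f} tok/s")
    print(f"Blackwell @ FP8: {bw_fp8:.0f} tok/s ({bw_fp8 / h100_fp8:.1f}x)")
    print(f"Blackwell @ FP4: {bw_fp4:.0f} tok/s ({bw_fp4 / h100_fp8:.1f}x)")
```

Under these simplifying assumptions, bandwidth and precision alone give a single-digit per-GPU gain (roughly 2.4x at FP8 and 4.8x with FP4), so if much larger multiples hold up in practice, interconnect, batching, and software would likely have to supply much of the rest.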

Implications for AI Deployment Economics

If they hold up, 100x inference improvements would have major economic implications. Inference (running trained AI models to generate predictions or outputs) typically accounts for the majority of computational cost for deployed AI systems. The gains could translate into:

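- Dramatically reduced operational costs: serving the same workload with far fewer GPUs, although the net saving is smaller than the raw speedup because each Blackwell system costs more to buy and to power than its Hopper predecessor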

- New application possibilities: Models previously considered too expensive for real-time deployment become economically viable

- Energy efficiency gains: lower energy per generated token, as long as throughput rises faster than power draw

For enterprises deploying large language models, computer vision systems, or recommendation engines, this performance leap could, in the best case, reduce annual inference costs from millions of dollars to tens of thousands for equivalent workloads.
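The arithmetic behind that estimate can be sketched as cost per million tokens and energy per million tokens. Every price, throughput, power figure, and the yearly token volume below is a hypothetical placeholder, not a quote or a measurement: the new GPU is assumed to deliver 100x the throughput at 3x the hourly price and a higher power draw. Under those assumptions the cost falls about 33x, from $2,000,000 to $60,000 a year, and energy per token falls 70x, which shows how hardware pricing can narrow a 100x speedup.

```python
# Best-case serving economics sketch. All prices, throughputs, power figures,
# and the yearly token volume are hypothetical placeholders.

def cost_per_million_tokens(hourly_cost_usd: float, tokens_per_sec: float) -> float:
    """Dollars per one million generated tokens at steady throughput."""
    return hourly_cost_usd / (tokens_per_sec * 3600) * 1e6


def kwh_per_million_tokens(power_watts: float, tokens_per_sec: float) -> float:
    """Energy in kWh to generate one million tokens (1 kWh = 3.6e6 J)."""
    seconds = 1e6 / tokens_per_sec
    return power_watts * seconds / 3.6e6


if __name__ == "__main__":
    TOKENS_PER_YEAR = 3.6e12  # hypothetical annual workload

    # Hypothetical per-GPU figures: 100x throughput, but 3x the hourly price.
    base = {"hourly_usd": 2.0, "tok_s": 1_000.0, "watts": 700.0}
    new = {"hourly_usd": 6.0, "tok_s": 100_000.0, "watts": 1_000.0}

    for name, gpu in (("baseline", base), ("new", new)):
        usd = cost_per_million_tokens(gpu["hourly_usd"], gpu["tok_s"])
        kwh = kwh_per_million_tokens(gpu["watts"], gpu["tok_s"])
        annual = usd * TOKENS_PER_YEAR / 1e6
        print(f"{name:>8}: ${usd:.4f}/M tokens, {kwh:.4f} kWh/M tokens, ${annual:,.0f}/year")
```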

The Competitive Landscape Reshaped

NVIDIA's larger-than-promised gains create significant challenges for competitors. AMD, Intel, and various AI chip startups have been working to close the gap with NVIDIA, and a 100x improvement in real-world inference performance means the target they are chasing has moved far faster than expected.

The rack-scale approach exemplified by NVL72 systems also highlights NVIDIA's system-level advantages. While competitors often focus on individual chip performance, NVIDIA's integrated approach—combining hardware, interconnects, and software—creates synergies that are difficult to replicate through component-level competition.

What This Means for AI Development

Beyond immediate economic implications, these performance gains enable new approaches to AI development and deployment:

- Larger context windows: Models can process significantly more information in a single inference pass

- Higher quality outputs: More computational budget can be allocated to improving response quality

- Real-time complex reasoning: Applications requiring multi-step reasoning become feasible at scale

- Ensemble approaches: Running multiple models in parallel for improved accuracy becomes economically viable
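Larger context windows are largely a memory problem, because the key-value (KV) cache that attention keeps for each token grows linearly with context length. The sketch below applies the standard KV-cache size formula (keys plus values, per layer, per KV head, per token); the configuration is a hypothetical 70B-class setup with grouped-query attention and a 16-bit cache, not any specific product.

```python
# KV-cache sizing sketch for multi-head / grouped-query attention:
# each layer stores one key and one value vector per KV head per token.

def kv_cache_bytes(layers: int, kv_heads: int, head_dim: int,
                   bytes_per_elem: int, tokens: int, batch: int = 1) -> int:
    """Bytes of KV cache for `batch` sequences of `tokens` tokens each."""
    per_token = 2 * layers * kv_heads * head_dim * bytes_per_elem  # 2 = K and V
    return per_token * tokens * batch


if __name__ == "__main__":
    # Hypothetical 70B-class config with grouped-query attention, 16-bit cache.
    cfg = dict(layers=80, kv_heads=8, head_dim=128, bytes_per_elem=2)
    for context in (8_192, 131_072, 1_048_576):
        gb = kv_cache_bytes(**cfg, tokens=context) / 1e9
        print(f"{context:>9} tokens: {gb:7.1f} GB per sequence")
```

In this configuration a single 128K-token sequence needs roughly 43 GB of cache, so just two such sequences would exceed one H100's 80 GB, which is why larger pooled memory matters for long-context serving.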

The Road Ahead: Verification and Implementation

While the reported 100x gains are extraordinary, the AI community will need to verify these results across diverse workloads and deployment scenarios. Early adopters will likely share detailed benchmarks in the coming months, providing a clearer understanding of where these improvements are most pronounced.

For organizations planning AI infrastructure investments, the performance leap suggests that waiting for Blackwell-based systems, even at the cost of delaying projects, could yield large returns for inference-heavy workloads. If the gains hold up, the transition from Hopper to Blackwell may prove one of the most significant step-function improvements in recent computing history.

Source: Analysis based on performance data reported following NVIDIA's GTC 2024 announcements and subsequent real-world testing of NVL72 systems.