Key Takeaways

- A technical benchmark compares three popular open-source LLM inference servers—Ollama, vLLM, and llama.cpp—under concurrent load.

- Ollama, despite its ease of use and massive adoption, collapsed at 5 concurrent users, highlighting a critical gap between developer-friendly tools and production-ready systems.

What Happened

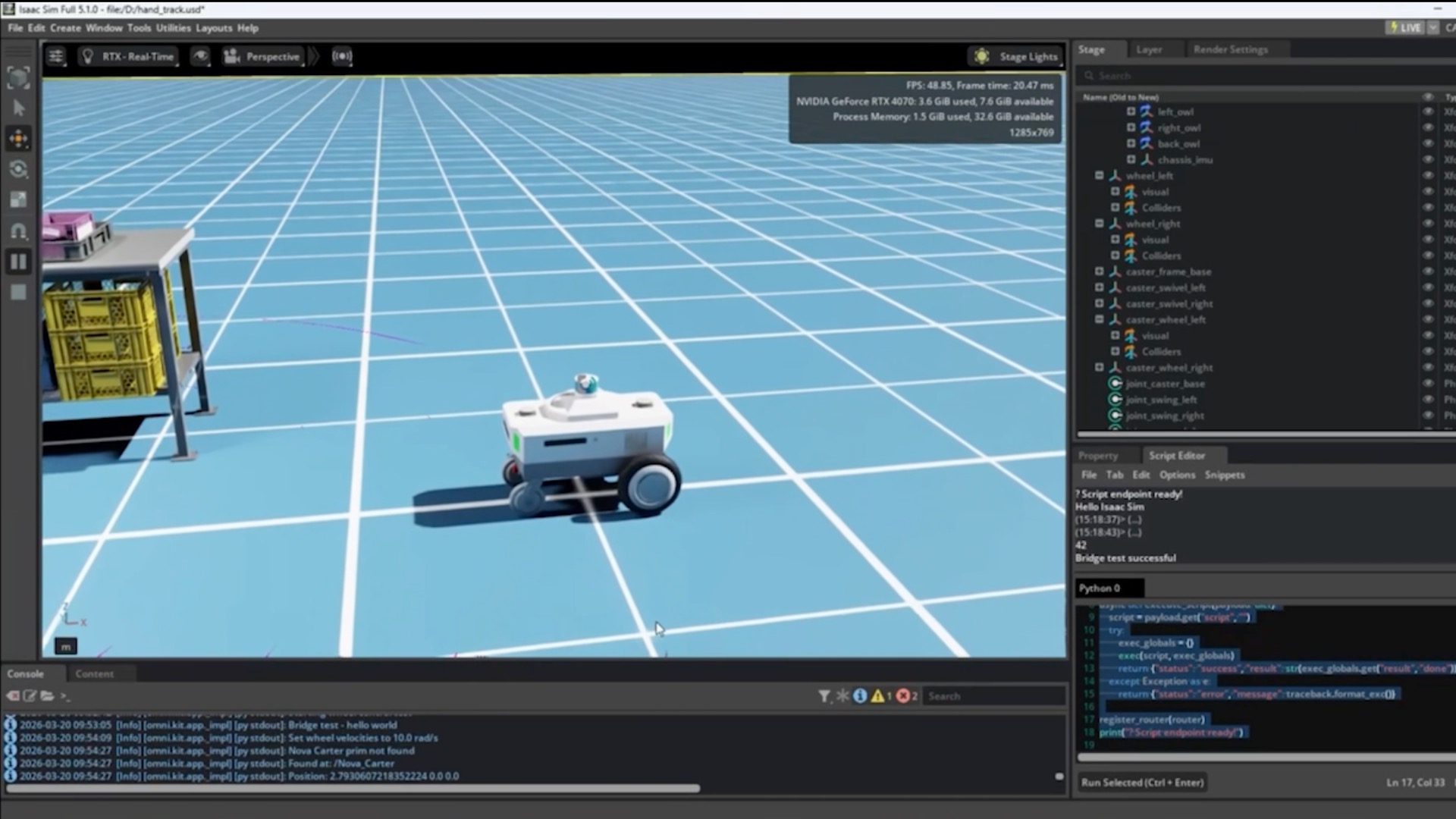

A developer conducted a hands-on performance benchmark of three leading open-source tools for running large language models (LLMs) locally and in production: Ollama, vLLM, and llama.cpp. The test focused on a critical metric for any business application: performance under concurrent user load.

The results were stark. Ollama, celebrated for its simplicity and boasting 52 million monthly downloads, failed completely under a load of just 5 concurrent users. The author, who had used Ollama for six months in the belief that it was "production-ready," found it unable to meet this basic scaling requirement. In contrast, both vLLM (which originated at UC Berkeley's Sky Computing Lab) and llama.cpp (the foundational C/C++ implementation) handled the concurrent load successfully, demonstrating the robustness required for real-world deployment.

This test moves beyond theoretical specs to a practical, operational stress test. It reveals that the tool most frequently recommended in tutorials and for quick prototyping has a fundamental architectural limitation when moving from a single-user development environment to a multi-user production scenario.

Technical Details: The Inference Stack Divide

The benchmark underscores a growing divide in the LLM inference stack between developer experience (DX) and production engineering.

- Ollama excels at DX. It provides a simple API, manages model files, and is trivial to set up, making it the default choice for tutorials and individual experimentation. Its architecture, however, appears optimized for serial, not parallel, request handling.

- vLLM is engineered for production throughput. Its key innovation is the PagedAttention algorithm, which stores the attention key-value (KV) cache in fixed-size blocks, much like an operating system pages virtual memory. This reduces memory fragmentation, so more requests fit into each batch, which improves GPU utilization and throughput under load.

- llama.cpp is the performance-oriented, bare-metal foundation. Written in C/C++, it provides low-level control and is renowned for its performance on CPUs and on Apple Silicon, where it uses its Metal backend for GPU acceleration. It requires more configuration but offers predictable, scalable performance.
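
The paging idea behind PagedAttention can be sketched with a toy block allocator. This is an illustration only, not vLLM's implementation: the class name `PagedKVCache`, the `BLOCK_SIZE` value, and the sequence IDs are all hypothetical, and no real attention tensors are stored. It shows only how per-sequence block tables let memory be handed out on demand rather than reserved up front for the maximum context length.

```python
from dataclasses import dataclass, field

BLOCK_SIZE = 16  # tokens per KV block (illustrative value, not a vLLM default)


@dataclass
class PagedKVCache:
    """Toy allocator: maps each sequence to a list of physical block IDs."""

    num_blocks: int
    free_blocks: list[int] = field(init=False)
    block_tables: dict[str, list[int]] = field(default_factory=dict)
    seq_lens: dict[str, int] = field(default_factory=dict)

    def __post_init__(self) -> None:
        self.free_blocks = list(range(self.num_blocks))

    def append_token(self, seq_id: str) -> None:
        """Reserve room for one more token, allocating a block only when needed."""
        length = self.seq_lens.get(seq_id, 0)
        if length % BLOCK_SIZE == 0:  # last block is full, or none exists yet
            if not self.free_blocks:
                raise MemoryError("no free KV blocks; the request must wait")
            self.block_tables.setdefault(seq_id, []).append(self.free_blocks.pop())
        self.seq_lens[seq_id] = length + 1

    def free(self, seq_id: str) -> None:
        """Return every block of a finished sequence to the free pool."""
        self.free_blocks.extend(self.block_tables.pop(seq_id, []))
        self.seq_lens.pop(seq_id, None)


cache = PagedKVCache(num_blocks=8)
for _ in range(20):
    cache.append_token("user-a")  # 20 tokens span two 16-token blocks
for _ in range(5):
    cache.append_token("user-b")  # 5 tokens fit in one block
blocks_in_use = cache.num_blocks - len(cache.free_blocks)
print(f"blocks in use: {blocks_in_use} of {cache.num_blocks}")
```

Because a short sequence holds only the blocks it has actually filled, the remaining blocks stay available for other concurrent requests, which is the property that makes larger batches possible.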

Ollama's collapse at 5 users is a classic symptom of a serving setup that lacks effective request queuing, context management, or memory optimization for concurrent inference sessions.
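
As a rough illustration of what request queuing with admission control looks like, the sketch below caps the number of requests running in parallel and the number allowed to wait, and rejects the rest. It is not how Ollama, vLLM, or llama.cpp implement queuing; `AdmissionController`, `fake_inference`, and the limits chosen are hypothetical, and inference is simulated with a short sleep.

```python
import asyncio


class AdmissionController:
    """Hypothetical gate: at most `max_parallel` requests run, `max_queue` wait."""

    def __init__(self, max_parallel: int, max_queue: int) -> None:
        self._slots = asyncio.Semaphore(max_parallel)
        self._max_queue = max_queue
        self._waiting = 0

    async def run(self, handler, *args):
        if self._waiting >= self._max_queue:
            return "rejected"  # shed load instead of letting latency grow unbounded
        self._waiting += 1
        try:
            await self._slots.acquire()  # wait here until a slot frees up
        finally:
            self._waiting -= 1
        try:
            return await handler(*args)
        finally:
            self._slots.release()


async def fake_inference(prompt: str) -> str:
    """Stand-in for a model call; a real server would run the model here."""
    await asyncio.sleep(0.01)
    return "ok"


async def main() -> list[str]:
    gate = AdmissionController(max_parallel=2, max_queue=3)
    calls = (gate.run(fake_inference, f"prompt-{i}") for i in range(10))
    return await asyncio.gather(*calls)


results = asyncio.run(main())
print(results)
```

The design choice worth noting is the explicit rejection path: a server that returns a fast "busy" response under overload degrades more gracefully than one that accepts every request and then stalls.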

Retail & Luxury Implications

For retail and luxury brands deploying AI—whether for personalized shopping assistants, dynamic content generation, or internal knowledge agents—this benchmark is a crucial reality check.

The Prototype-to-Production Trap: Many teams may have started experimenting with on-premise or private-cloud LLMs using Ollama because of its simplicity. A proof-of-concept chatbot that works perfectly in a single-user demo can fail catastrophically the first time it is featured in a marketing campaign, leading to a poor customer experience and brand damage.

Architectural Decisions Have Costs: The choice of inference server is foundational. Selecting a DX-first tool like Ollama for a production pilot can lead to a costly, late-stage re-architecture. Conversely, starting with vLLM or llama.cpp involves a steeper initial learning curve but builds on a scalable foundation. This decision affects server costs (GPU efficiency), the engineering maintenance burden, and ultimately the reliability of the customer-facing service.

The Need for Load Testing as a Discipline: This article reinforces a principle we've covered regarding production AI agents: observability and testing are non-negotiable. As noted in our April 3 article, "4 Observability Layers Every AI Developer Needs for Production AI Agents", understanding system behavior under load is a critical layer. This benchmark is a canonical example of why performance testing under concurrent load must be part of the deployment checklist for any customer-facing AI feature.
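
A concurrent load test of the kind described above can be sketched in a few lines. The example below is an assumption-laden illustration, not the benchmark author's harness: `SerialStubBackend` is a hypothetical stand-in for a server that handles one request at a time, and the 20 ms service time is arbitrary. In practice, `backend.generate` would be replaced by a real HTTP call to the inference server under test.

```python
import asyncio
import time


class SerialStubBackend:
    """Stand-in for a server that processes one request at a time (an assumption)."""

    def __init__(self, service_time: float) -> None:
        self._lock = asyncio.Lock()
        self._service_time = service_time

    async def generate(self, prompt: str) -> str:
        async with self._lock:  # requests queue up behind each other
            await asyncio.sleep(self._service_time)
        return f"reply to {prompt}"


async def timed_call(send, prompt: str) -> float:
    """Return the wall-clock latency of one request in seconds."""
    start = time.perf_counter()
    await send(prompt)
    return time.perf_counter() - start


async def load_test(levels: list[int], service_time: float = 0.02) -> dict[int, float]:
    """Fire `n` simultaneous requests per level and record the worst latency."""
    backend = SerialStubBackend(service_time)
    worst: dict[int, float] = {}
    for n in levels:
        latencies = await asyncio.gather(
            *(timed_call(backend.generate, f"user-{i}") for i in range(n))
        )
        worst[n] = max(latencies)
    return worst


worst_latency = asyncio.run(load_test([1, 5]))
for users, seconds in worst_latency.items():
    print(f"{users} concurrent users: worst latency {seconds * 1000:.0f} ms")
```

With a serial backend, the slowest of five simultaneous requests waits behind the other four, so worst-case latency grows roughly in proportion to the number of users. Tracking that curve across concurrency levels before launch is the kind of check a deployment checklist can make routine.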