This week saw two significant, under-the-radar upgrades from leading AI labs that materially improve how developers—and by extension, technical teams in any industry—can work with AI agents. OpenAI enhanced Codex with subagents, and Anthropic made its 1-million-token context window generally available at standard pricing. While not flashy announcements, these changes address core friction points in professional AI workflows.

What Happened: Two Quiet but Meaningful Upgrades

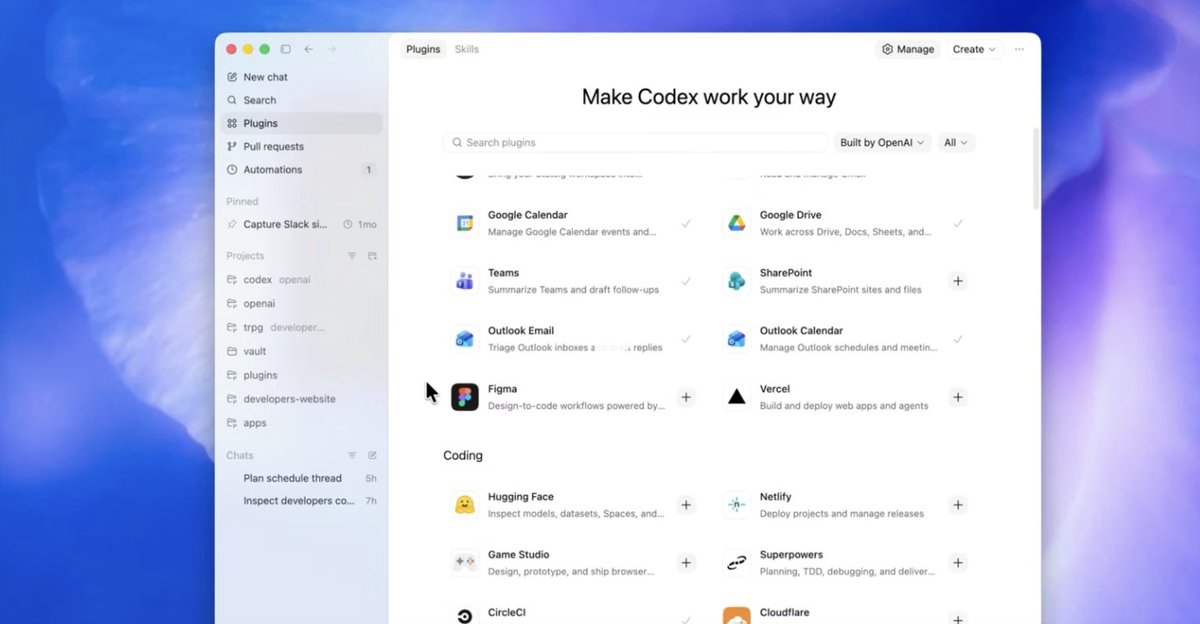

OpenAI Codex: Introducing Subagents

OpenAI's key release was the addition of subagents to Codex. This allows Codex to spawn specialized, parallel agents to explore, execute, or analyze work concurrently. The main thread remains focused on high-level requirements, decisions, and final outputs.

According to OpenAI's documentation, this architecture directly tackles "context pollution" and "context rot." A single, lengthy AI session can become a "digital junk drawer" filled with stack traces, failed tests, and exploratory dead ends. The subagent model cleans this up by separating the manager (the main thread) from the workers (the subagents).

This approach mirrors the product idea Anthropic pioneered with Claude Code and its broader Cowork feature. The industry is converging on this pattern because it's a "materially better operating model for real work," especially when tasks involve complex codebases, logs, specifications, and messy follow-ups.

OpenAI's growth metrics suggest why it is prioritizing this area: Codex now has over 2 million weekly active users, a nearly 4x increase since the start of the year. API usage also jumped 20% in the week after GPT-5.4 launched.

Anthropic Claude: 1M Context, No Premium

Anthropic's move was making the 1-million-token context window generally available for Claude Opus 4.6 and Sonnet 4.6 at standard pricing. There is no long-context premium, and rate limits apply across the full window. Media limits were also expanded to 600 images or PDF pages.

The performance at this scale is striking. On the MRCR v2 (8-needle) benchmark at 1M tokens, Claude Opus 4.6 scored 78.3%. This more than doubles GPT-5.4's score of 36.6% and triples Gemini 3.1 Pro's 25.9%. Even Sonnet 4.6 hits 65.1% at the same context length.
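The "more than doubles" and "triples" comparisons can be checked with a few lines of arithmetic. The sketch below uses only the scores quoted above; the `ratio` helper is an illustrative name, not part of any benchmark tooling.

```python
# MRCR v2 (8-needle) scores at 1M tokens, as quoted in the text above.
scores = {
    "Claude Opus 4.6": 78.3,
    "Claude Sonnet 4.6": 65.1,
    "GPT-5.4": 36.6,
    "Gemini 3.1 Pro": 25.9,
}


def ratio(model_a: str, model_b: str) -> float:
    """Return how many times higher model_a scored than model_b."""
    return scores[model_a] / scores[model_b]


for rival in ("GPT-5.4", "Gemini 3.1 Pro"):
    print(f"Opus 4.6 vs {rival}: {ratio('Claude Opus 4.6', rival):.2f}x")
# Opus 4.6 vs GPT-5.4: 2.14x
# Opus 4.6 vs Gemini 3.1 Pro: 3.02x
```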

At shorter contexts (256K tokens), the competition is tighter, but as context scales, Anthropic's lead widens significantly. This gives Anthropic a strong claim on the metric that matters for professional agentic work: not just headline window size, but the model's ability to find relevant information buried under a "mountain of tokens."

Anthropic also launched a Code Review feature for Claude Code in research preview. This system deploys a team of agents to each pull request. The average review takes about 20 minutes and costs $15–$25. For pull requests over 1,000 lines, 84% receive findings (averaging 7.5 issues), with less than 1% of findings marked incorrect. Internally, Anthropic reports the share of pull requests receiving substantive review comments rose from 16% to 54% after adopting the system.

Technical Details: Why These Upgrades Matter

The Subagent Architecture

The subagent model is an architectural response to a fundamental LLM limitation: the finite context window. As a single session grows, performance degrades due to irrelevant information crowding out critical instructions and outputs. By delegating bounded tasks to parallel subagents, the main agent maintains a clean, high-level strategic view. This is essential for complex, multi-step workflows like analyzing a codebase, generating a report from disparate data sources, or orchestrating a multi-channel marketing campaign.
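The manager/worker split can be illustrated with a minimal sketch. It does not use the Codex or Claude Code APIs: `run_subagent` is a hypothetical stand-in for a real model call, and the task names are made up. The point it shows is that each worker keeps its noisy scratch work to itself and hands back only a short summary.

```python
import asyncio
from dataclasses import dataclass


@dataclass
class SubagentResult:
    task: str
    summary: str  # the only text that re-enters the main context


async def run_subagent(task: str) -> SubagentResult:
    """Hypothetical worker; a real one would call a model here."""
    # Noisy working notes (logs, dead ends) stay local to the worker.
    scratch = [f"step {i} of {task}" for i in range(1000)]
    await asyncio.sleep(0)  # placeholder for network or tool I/O
    return SubagentResult(task, f"{task}: done, {len(scratch)} steps condensed")


async def orchestrate(tasks: list[str]) -> list[str]:
    """Main thread: fan out bounded tasks, keep only the summaries."""
    results = await asyncio.gather(*(run_subagent(t) for t in tasks))
    return [r.summary for r in results]


if __name__ == "__main__":
    for line in asyncio.run(orchestrate(["scan logs", "run tests"])):
        print(line)
```

The main context grows by one line per subagent rather than by the thousand scratch lines each worker produced, which is the "clean strategic view" described above.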

Long-Context Performance

Offering 1M context without a price premium may point to architectural or inference-efficiency gains on Anthropic's side. The MRCR v2 benchmark is particularly relevant because it tests a model's ability to retrieve specific information ("needles") from a massive context (a "haystack"). A high score indicates the model can still locate and tell apart specific items deep in very long documents or sessions, which is a prerequisite for reasoning over them. This is critical for tasks like reviewing a lengthy legal contract, analyzing a year's worth of customer service logs, or synthesizing insights from a vast product catalog.
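To make the needle-and-haystack idea concrete, here is a simplified scoring sketch. It is not the MRCR v2 harness; the sentence template, function names, and the string-search baseline are all assumptions for illustration. In a real evaluation, `answer_fn` would wrap a model call.

```python
import random


def build_haystack(needles: dict[str, str], filler_lines: int, seed: int = 0) -> str:
    """Scatter one 'needle' sentence per key among filler sentences."""
    rng = random.Random(seed)
    lines = [f"Filler sentence number {i}." for i in range(filler_lines)]
    for key, value in needles.items():
        lines.insert(rng.randrange(len(lines) + 1), f"The code for {key} is {value}.")
    return "\n".join(lines)


def score_retrieval(answer_fn, haystack: str, needles: dict[str, str]) -> float:
    """Fraction of needles for which answer_fn returns the exact value."""
    hits = sum(1 for key, value in needles.items() if answer_fn(haystack, key) == value)
    return hits / len(needles)


def baseline_answer(haystack: str, key: str) -> str:
    """Trivial string-search baseline standing in for a model call."""
    prefix = f"The code for {key} is "
    for line in haystack.splitlines():
        if line.startswith(prefix):
            return line[len(prefix):].rstrip(".")
    return ""


needles = {f"item-{i}": f"X{i * 7}" for i in range(8)}  # 8 needles
haystack = build_haystack(needles, filler_lines=5000)
print(score_retrieval(baseline_answer, haystack, needles))
```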

Retail & Luxury Implications

While these upgrades emerged from the software engineering domain, their implications for retail and luxury are direct and significant. The core advancements—managing complex workflows via subagents and processing massive documents with high accuracy—translate to critical business functions.

1. Supply Chain & Inventory Analysis

A supply chain manager could use a Codex-like agent with subagents to concurrently:

- Analyze global shipping logs for delays.

- Parse supplier quality reports.

- Cross-reference inventory levels across warehouses.

- Generate a consolidated risk assessment.

The main agent coordinates the tasks and synthesizes the final recommendation, avoiding the chaos of a single, bloated chat session.

2. Customer Insight Synthesis

Marketing teams could leverage Claude's 1M context to process and analyze:

- A year of unstructured customer feedback from emails, reviews, and call transcripts.

- Entire PDF catalogs from past seasons to identify design evolution.

- Combined image (lookbook photos) and text (product descriptions) data for multimodal trend analysis.

The model's ability to accurately pull specific insights from this "haystack" enables deeper, more nuanced understanding of customer sentiment and brand perception.
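Before loading a year of feedback into a single request, it helps to estimate whether it fits. The sketch below assumes roughly four characters per token, a common rule of thumb for English text rather than an exact tokenizer count, and an arbitrary reserve for the model's output. All names and constants are illustrative.

```python
CONTEXT_LIMIT_TOKENS = 1_000_000
CHARS_PER_TOKEN = 4  # rough heuristic (assumption); real tokenizers vary


def estimate_tokens(text: str) -> int:
    """Approximate token count, rounded up."""
    return -(-len(text) // CHARS_PER_TOKEN)


def fits_in_context(documents: list[str], reserve_for_output: int = 20_000) -> tuple[int, bool]:
    """Return (estimated input tokens, whether input plus reserve fits)."""
    total = sum(estimate_tokens(doc) for doc in documents)
    return total, total + reserve_for_output <= CONTEXT_LIMIT_TOKENS


reviews = ["Loved the stitching, but delivery took three weeks."] * 10_000
total, ok = fits_in_context(reviews)
print(total, ok)
```

If the estimate is close to the limit, count with the provider's own tokenizer before sending, since the heuristic can be off by a wide margin for non-English text or structured data.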

3. Legal & Compliance Review

For luxury conglomerates managing countless licensing agreements, partnership contracts, and compliance documents, a 1M-context agent could review entire contract libraries. It could identify non-standard clauses, ensure brand guideline adherence, and flag renewal dates with high accuracy, compressing weeks of legal work into hours.

4. Personalized Clienteling at Scale

High-context agents could maintain detailed, evolving profiles of top clients by processing all interaction history—purchase records, personal notes from sales associates, email correspondence, and preferred styles from image uploads. This enables hyper-personalized outreach and recommendations without the human error of forgetting a minor detail mentioned months prior.

The Code Review case study is also instructive. The same multi-agent review principle could be applied to creative asset approval workflows (e.g., for advertising campaigns or store designs) or merchandising plans, where different agents check for brand consistency, commercial viability, and logistical feasibility in parallel.
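A minimal sketch of that parallel-review pattern follows. The three reviewers are plain functions standing in for agents, and every rule and field name (`palette`, `unit_price`, `lead_time_days`, and so on) is hypothetical. It is not how Anthropic's Code Review feature is implemented.

```python
from concurrent.futures import ThreadPoolExecutor
from dataclasses import dataclass


@dataclass(frozen=True)
class Finding:
    reviewer: str
    issue: str


# Each reviewer is a hypothetical stand-in for an agent with one narrow brief.
def brand_reviewer(plan: dict) -> list[Finding]:
    if plan["palette"] not in {"ivory", "black", "gold"}:
        return [Finding("brand", f"off-palette colour: {plan['palette']}")]
    return []


def commercial_reviewer(plan: dict) -> list[Finding]:
    if plan["unit_price"] <= plan["unit_cost"]:
        return [Finding("commercial", "price does not cover unit cost")]
    return []


def logistics_reviewer(plan: dict) -> list[Finding]:
    if plan["lead_time_days"] > plan["days_to_launch"]:
        return [Finding("logistics", "lead time exceeds days to launch")]
    return []


REVIEWERS = (brand_reviewer, commercial_reviewer, logistics_reviewer)


def review(plan: dict) -> list[Finding]:
    """Run all reviewers on the same plan in parallel and merge their findings."""
    with ThreadPoolExecutor(max_workers=len(REVIEWERS)) as pool:
        per_reviewer = pool.map(lambda reviewer: reviewer(plan), REVIEWERS)
        return [finding for findings in per_reviewer for finding in findings]
```

Because each reviewer sees the same input and returns a small structured result, adding a fourth check means adding one function to `REVIEWERS` without touching the others.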

Business Impact & Implementation Approach

Business Impact: The immediate impact is on productivity and quality of complex analysis. Teams move from using AI for discrete tasks (write an email, summarize a document) to managing entire analytical workflows. The risk of "manufacturing more uncertainty at a higher speed"—generating code or content faster than it can be reviewed—is mitigated by architectures built for review and synthesis.

Implementation Approach: Adopting these patterns requires a shift in mindset and tooling.

- Workflow Decomposition: Technical teams must learn to break down business problems into parallelizable sub-tasks with clear outputs.

- Orchestration Layer: Implementing subagents requires an orchestration framework (like LangChain, LlamaIndex, or custom code) to manage the main agent and worker agents.

- Model Selection: For document-intensive tasks (contracts, historical analysis), Claude Opus 4.6 and Sonnet 4.6 with 1M context currently lead on the long-context retrieval benchmark cited above. For dynamic workflow orchestration, Codex with subagents and Claude Code's Cowork model are the leading patterns.

- Human-in-the-Loop Design: As the source notes, "the highest-return setup is expert human plus agent swarm." Workflows must be designed with clear human oversight points, especially for final decisions on creative, financial, or client-facing outputs.
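Two of the points above, sub-tasks with clear outputs and explicit human oversight points, can be captured in a small task specification. This is a sketch under stated assumptions: the `SubTask` fields, the category names, and the rule for which categories need sign-off are illustrative choices, not taken from any framework.

```python
from dataclasses import dataclass

# Assumption: outputs in these categories always get a human decision.
HUMAN_REVIEW_CATEGORIES = {"creative", "financial", "client_facing"}


@dataclass(frozen=True)
class SubTask:
    name: str
    expected_output: str  # stated up front so the result can be checked
    category: str


def needs_human_signoff(task: SubTask) -> bool:
    return task.category in HUMAN_REVIEW_CATEGORIES


def plan_workflow(tasks: list[SubTask]) -> dict[str, list[str]]:
    """Split tasks into those agents may release and those a human must approve."""
    return {
        "auto": [t.name for t in tasks if not needs_human_signoff(t)],
        "human_signoff": [t.name for t in tasks if needs_human_signoff(t)],
    }


tasks = [
    SubTask("parse shipping logs", "table of delayed shipments", "operational"),
    SubTask("draft client email", "email text for top clients", "client_facing"),
    SubTask("margin forecast", "forecast by product line", "financial"),
]
print(plan_workflow(tasks))
```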

Governance & Risk Assessment

Privacy & Data Security: Processing million-token contexts containing sensitive business data (client lists, contracts, financials) requires stringent data governance. Enterprises must ensure that their API usage complies with data residency laws and rely on enterprise agreements with strong data processing protections.

Bias & Accuracy: While benchmarks show high accuracy, these are general models. Performance on domain-specific retail jargon (e.g., specific fabric names, complex SKU structures) must be validated. Hallucinations in long contexts, though reduced, remain a risk for critical decision-making.

Maturity Level: The subagent pattern is rapidly maturing and is being adopted by leading labs. The 1M context capability is state-of-the-art but still novel; best practices for prompting and structuring such massive inputs are evolving. Both are ready for pilot projects in controlled environments but warrant caution for fully autonomous, business-critical deployment.

The key takeaway is that the frontier of AI utility is no longer about raw model capability alone. It's about architectural patterns that make that capability reliable and manageable in complex, real-world business workflows. For retail and luxury, where processes are often a mix of creative, analytical, and operational tasks, these agentic upgrades offer a tangible path to leverage AI beyond simple automation.