What Happened

OpenAI has released an updated version of its privacy filter, retrained on Nvidia's Nemotron-PII dataset. The new model expands detection of personally identifiable information (PII) from 8 label types to over 50, with specific improvements for healthcare and enterprise applications.

The update surfaced in a tweet from @MaziyarPanahi, who announced that the model is "out" and highlighted the jump from 8 to 50+ labels. Nemotron-PII, the data used for retraining, is Nvidia's specialized dataset for detecting personally identifiable information.

Technical Details

The original OpenAI privacy filter supported 8 PII categories (e.g., names, email addresses, phone numbers, social security numbers). The retrained version now covers over 50 PII label types, including:

- Healthcare-specific identifiers (medical record numbers, health plan beneficiary numbers)

- Enterprise data (employee IDs, internal account numbers)

- Financial identifiers (bank account numbers, credit card numbers)

- Location data (precise geolocation, street addresses)

- Digital identifiers (IP addresses, device IDs, browser fingerprints)

The retraining on Nemotron-PII data suggests Nvidia has curated a comprehensive dataset covering a wide spectrum of PII types, likely with synthetic or carefully labeled examples to ensure coverage of edge cases.
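As a rough illustration of how a downstream consumer might roll 50+ fine-grained labels up into the five categories above, here is a minimal sketch. The label strings and the `LABEL_TO_CATEGORY` mapping are hypothetical and chosen only to mirror the list; the model's actual label names may differ.

```python
from collections import Counter

# Hypothetical label names that mirror the categories listed above.
# These are assumptions for illustration, not the model's real label strings.
LABEL_TO_CATEGORY = {
    "medical_record_number": "healthcare",
    "health_plan_beneficiary_number": "healthcare",
    "employee_id": "enterprise",
    "internal_account_number": "enterprise",
    "bank_account_number": "financial",
    "credit_card_number": "financial",
    "street_address": "location",
    "geolocation": "location",
    "ip_address": "digital",
    "device_id": "digital",
}


def summarize_by_category(detected_labels):
    """Count detections per coarse category; unmapped labels fall under 'other'."""
    return Counter(
        LABEL_TO_CATEGORY.get(label, "other") for label in detected_labels
    )


summary = summarize_by_category(
    ["ip_address", "employee_id", "device_id", "passport_number"]
)
print(dict(summary))
```

Routing unmapped labels to `other` instead of raising an error keeps the summary usable if the label set grows again.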

Why It Matters

Privacy filtering is a critical component for enterprises deploying LLMs in regulated industries such as healthcare, finance, and legal services. The jump from 8 to 50+ PII labels significantly widens coverage, reducing the risk of sensitive data leaking when user inputs or training data are processed.

Healthcare is the clearest case. HIPAA governs protected health information (PHI), so any pipeline that handles patient data needs reliable detection of the identifiers that make a record PHI. The expanded label set addresses this need by covering medical record numbers, health plan information, and other PHI identifiers.

Competitive Landscape

Other providers offer PII detection as part of their content moderation or data sanitization pipelines:

- Microsoft Azure AI Content Safety includes PII detection with ~20 label types

- Google Cloud DLP covers over 120 infoTypes but is not natively integrated with LLM APIs

- Amazon Comprehend offers PII detection with ~15 label types

- Presidio (open-source) provides customizable PII detection but requires deployment effort

With 50+ labels, OpenAI's retrained filter is competitive with these options on coverage while being natively integrated into the OpenAI API ecosystem.

What This Means in Practice

For developers using the OpenAI API, this update means:

- Reduced risk of inadvertently processing sensitive data

- Better compliance posture for regulated industries

- No additional API calls needed, because the privacy filter runs as part of the existing moderation pipeline

- Potentially lower costs from avoiding data breaches or compliance failures

gentic.news Analysis

This update is a pragmatic response to the growing demand for enterprise-grade privacy controls in AI systems. OpenAI's decision to retrain on Nvidia's Nemotron-PII data, rather than build its own dataset, is notable. It suggests a collaborative approach in which Nvidia provides the data infrastructure and OpenAI provides the model and distribution.

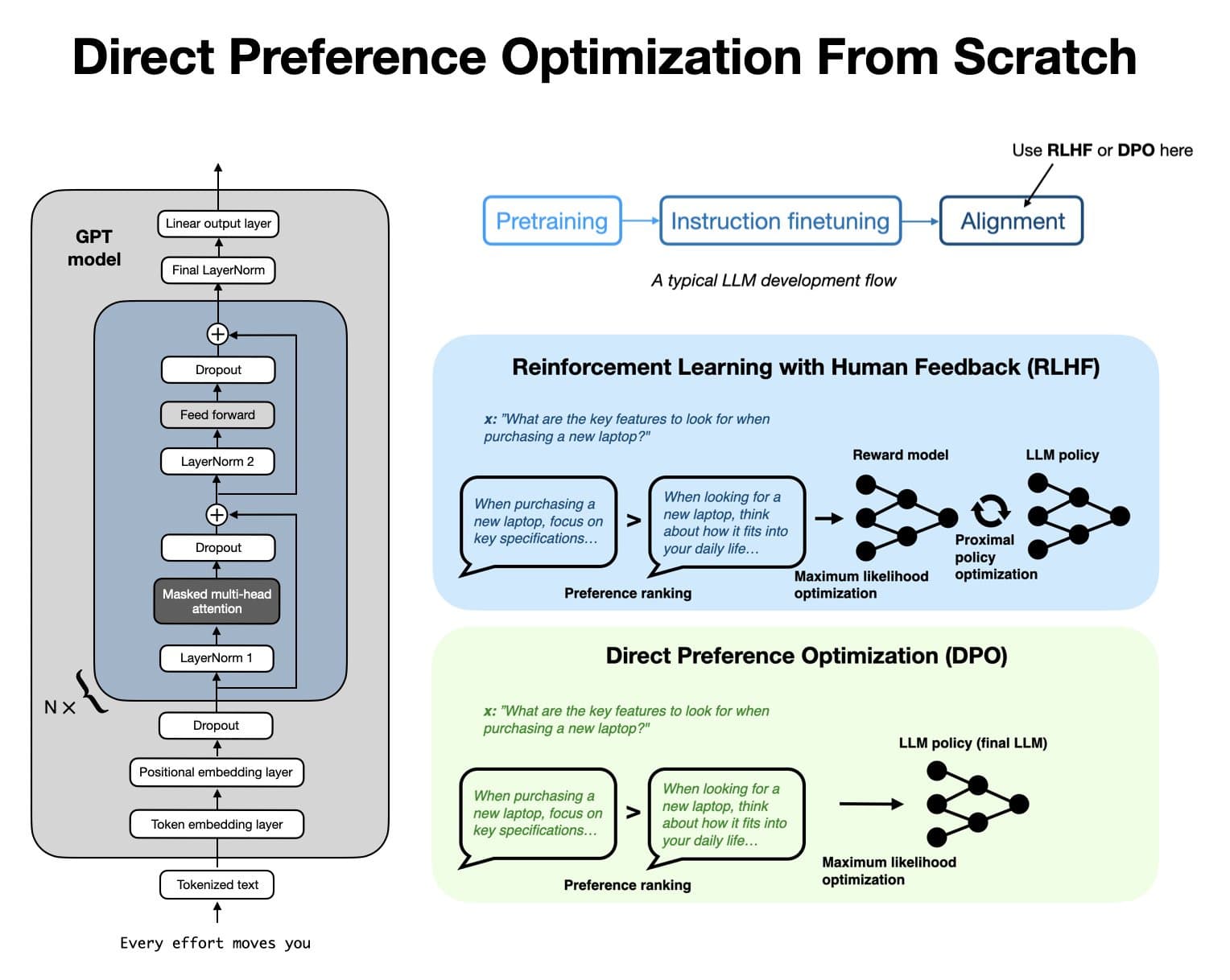

Nvidia has been steadily expanding its Nemotron ecosystem, which includes datasets for synthetic data generation, RLHF, and now PII detection. This follows Nvidia's broader strategy of becoming the "picks and shovels" provider for the AI industry, supplying the data and infrastructure rather than competing directly with frontier model providers.

The timing aligns with increasing regulatory scrutiny. The EU AI Act designates certain uses of biometric data, such as remote biometric identification, as high-risk, and sensitive personal data draws closer attention from regulators generally. A robust privacy filter is becoming a compliance necessity, not just a nice-to-have.

We previously covered OpenAI's content moderation updates in January 2026, which focused on safety categories. This PII filter update complements those efforts by addressing a different but equally important dimension of responsible AI deployment.

Frequently Asked Questions

What is the OpenAI privacy filter?

The OpenAI privacy filter is a component of the API's moderation system that detects and optionally redacts personally identifiable information (PII) from user inputs and model outputs before they are processed or returned.
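To make "detects and optionally redacts" concrete, the sketch below shows what the redaction step might look like once a detector has returned labeled character spans. The `Span` type, the `span_for` helper, and the label names are all hypothetical stand-ins; this is not OpenAI's implementation or response format, and it assumes spans do not overlap.

```python
from typing import NamedTuple


class Span(NamedTuple):
    start: int  # inclusive character offset
    end: int    # exclusive character offset
    label: str  # hypothetical label name


def span_for(text: str, needle: str, label: str) -> Span:
    """Stand-in for a detector: locate a known substring and label it."""
    start = text.index(needle)
    return Span(start, start + len(needle), label)


def redact(text: str, spans: list[Span]) -> str:
    """Replace each span with a [LABEL] placeholder (assumes no overlaps)."""
    out = text
    # Work right to left so earlier offsets stay valid after each replacement.
    for span in sorted(spans, key=lambda s: s.start, reverse=True):
        out = out[:span.start] + f"[{span.label.upper()}]" + out[span.end:]
    return out


message = "Contact jane@example.com or call 555-0100."
spans = [
    span_for(message, "jane@example.com", "email"),
    span_for(message, "555-0100", "phone"),
]
print(redact(message, spans))  # Contact [EMAIL] or call [PHONE].
```

Keeping the label in the placeholder preserves some context for downstream processing while removing the value itself.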

How many PII labels does the new filter support?

The retrained filter supports over 50 PII label types, up from 8 in the previous version. This includes healthcare identifiers, financial data, location data, and digital identifiers.

What is Nvidia's Nemotron-PII dataset?

Nemotron-PII is a dataset curated by Nvidia specifically for training models to detect personally identifiable information. It covers a wide range of PII categories and is designed for enterprise and healthcare use cases.

Does this affect existing OpenAI API users?

Existing API users should see improved PII detection automatically, as the privacy filter is a backend component. No code changes are required, but developers relying on specific PII categories should verify coverage against their use cases.
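One simple way to verify coverage is to compare the labels your compliance requirements depend on against the labels the filter advertises. The sketch below assumes you have obtained the supported label list from documentation or a model card; both sets shown here are hypothetical placeholders.

```python
def missing_labels(required, supported):
    """Return required labels absent from the supported set, sorted for stable output."""
    return sorted(set(required) - set(supported))


# Hypothetical values for illustration; substitute the filter's real label list.
supported = {"email", "phone", "medical_record_number", "ip_address"}
required = ["medical_record_number", "health_plan_beneficiary_number", "email"]

gaps = missing_labels(required, supported)
if gaps:
    print("Not covered:", ", ".join(gaps))
else:
    print("All required labels are covered.")
```

Any label reported as not covered would need a supplementary detector or a manual review step.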