Jakub Pachocki, the Chief Scientist at OpenAI, has issued a stark warning: the societal challenges posed by artificial intelligence automating intellectual work are intensifying and "coming faster than expected." In a recent statement, he highlighted job displacement, wealth concentration, and the governance of AI-controlled entities as critical, immediate issues.

What Pachocki Said

The core of Pachocki's warning, shared via social media, focuses on the downstream effects of advanced AI systems capable of performing tasks traditionally reserved for human knowledge workers. He identifies three primary areas of concern:

- Job Displacement: The automation of intellectual labor, not just manual or routine tasks.

- Wealth Concentration: The risk that economic gains from AI productivity will accrue disproportionately to a narrow group rather than being broadly shared.

- Governance of AI-Controlled Entities: The challenge of overseeing and regulating autonomous or semi-autonomous AI systems that make decisions or manage resources.

The key takeaway is the timeline. Pachocki emphasizes these issues are "coming faster than expected," suggesting the internal pace of AI capability development at organizations like OpenAI may be outstripping public and policymaker preparedness.

Context: A Recurring Warning from AI Leadership

This is not the first time a leader from a major AI lab has sounded the alarm. OpenAI CEO Sam Altman has testified before Congress and written about the need for societal adaptation to AI. Anthropic's co-founders established their company with AI safety as its stated focus. Pachocki's comments, however, carry particular weight given his role overseeing the core research direction at OpenAI, the company behind GPT-4, GPT-4o, and the recently unveiled o1 series of reasoning models. His warning implies that the technical foundations for widespread intellectual automation are either present or imminent.

The statement arrives amid a heated global debate on AI regulation. The European Union's AI Act is being implemented, the U.S. has issued an executive order on AI safety, and international bodies are scrambling to establish guardrails. Pachocki's warning serves as a direct input to these discussions, underscoring the urgency from the perspective of those building the most powerful systems.

gentic.news Analysis

Pachocki's warning is a significant data point in the ongoing tension between AI capability acceleration and societal resilience. As Chief Scientist, his primary focus is ostensibly on pushing the frontiers of what AI models can do, a pursuit demonstrated by OpenAI's release of the o1 model family, which marks a substantial leap in AI reasoning and problem-solving. His public concern about the societal side effects of such advancements suggests that these two tracks, capability and safety/alignment, are in tension within the leading labs themselves.

This aligns with a pattern we've tracked: as models transition from research demos to integrated economic tools, the discourse from builders shifts from pure performance to impact. We saw a similar pivot with the release of GPT-4o, which was framed not just as a more capable model, but as a step towards more natural, assistive human-computer interaction. Pachocki's comments suggest the next phase of this integration—where AI acts not just as an assistant but as an autonomous executor of complex intellectual work—is the proximate concern.

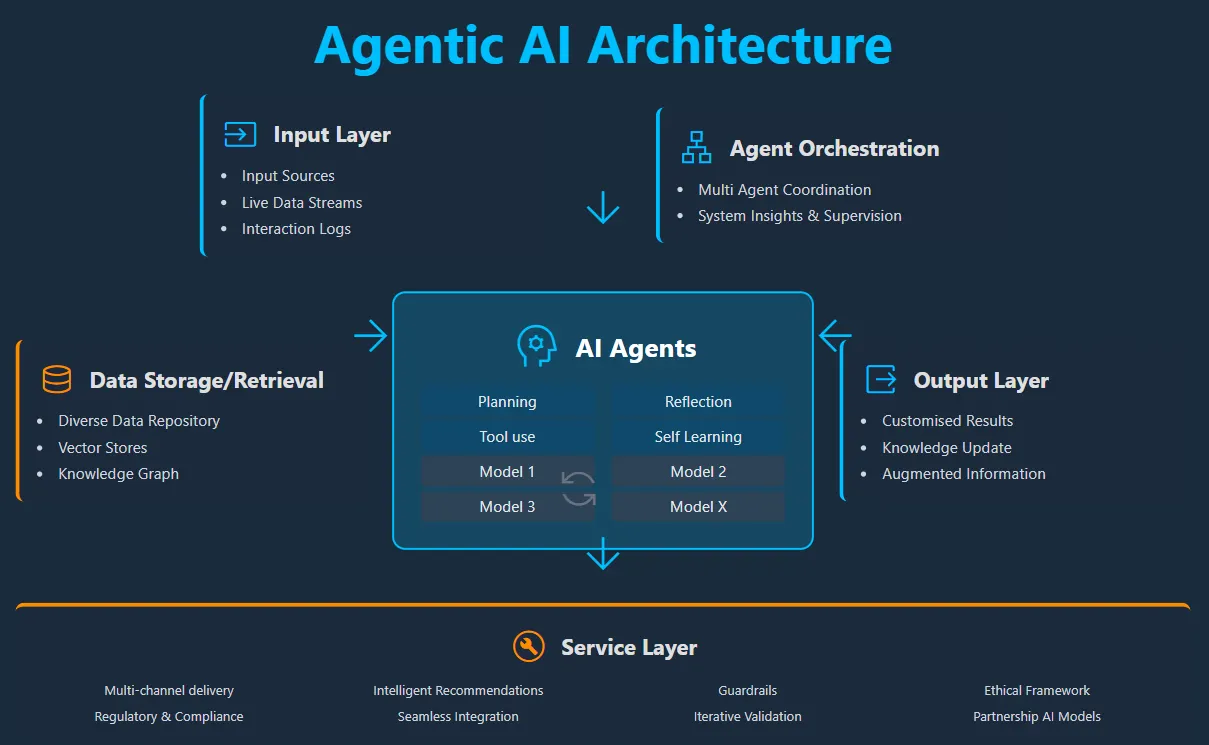

The mention of "governance of AI-controlled entities" is particularly pointed. It moves beyond the well-trodden ground of job loss and points to a near-future scenario where AI systems manage operations, make strategic decisions, or control physical infrastructure with minimal human oversight. This directly relates to research frontiers in AI agents and robotics. The challenge is no longer just aligning a model's output, but aligning the actions of a persistent AI entity operating in the real world—a technically and politically monumental task that, according to Pachocki, is on a fast-approaching horizon.

Frequently Asked Questions

Who is Jakub Pachocki?

Jakub Pachocki is the Chief Scientist at OpenAI, where he leads the company's research direction. He has been a key figure in many of OpenAI's landmark projects, including the development of GPT-4 and the o1 reasoning models. His technical background and leadership role make his assessments of AI's trajectory and impact particularly authoritative within the field.

What does "automating intellectual work" mean?

This refers to AI systems performing tasks that require reasoning, creativity, expert knowledge, and strategic planning—jobs traditionally held by analysts, writers, software engineers, researchers, and managers. It's a step beyond automating routine data entry or manufacturing, targeting the core value-creation activities in knowledge economies.

Is this warning about current AI or future AI?

Pachocki's warning is likely about the near future, driven by current and soon-to-be-released technology. Models like OpenAI's o1-preview demonstrate advanced reasoning and coding capabilities that can already take on parts of software development and analytical work. His statement suggests that the scale of this automation, and its spread into other intellectual professions, will accelerate rapidly.

What are "AI-controlled entities"?

This term likely refers to autonomous or semi-autonomous systems where an AI model is given persistent goals, access to tools (APIs, financial systems, control panels), and the authority to take actions to achieve those goals. Examples could range from an AI managing a stock trading portfolio to an autonomous research lab conducting experiments. The governance challenge involves ensuring these entities act safely, ethically, and under appropriate human oversight.