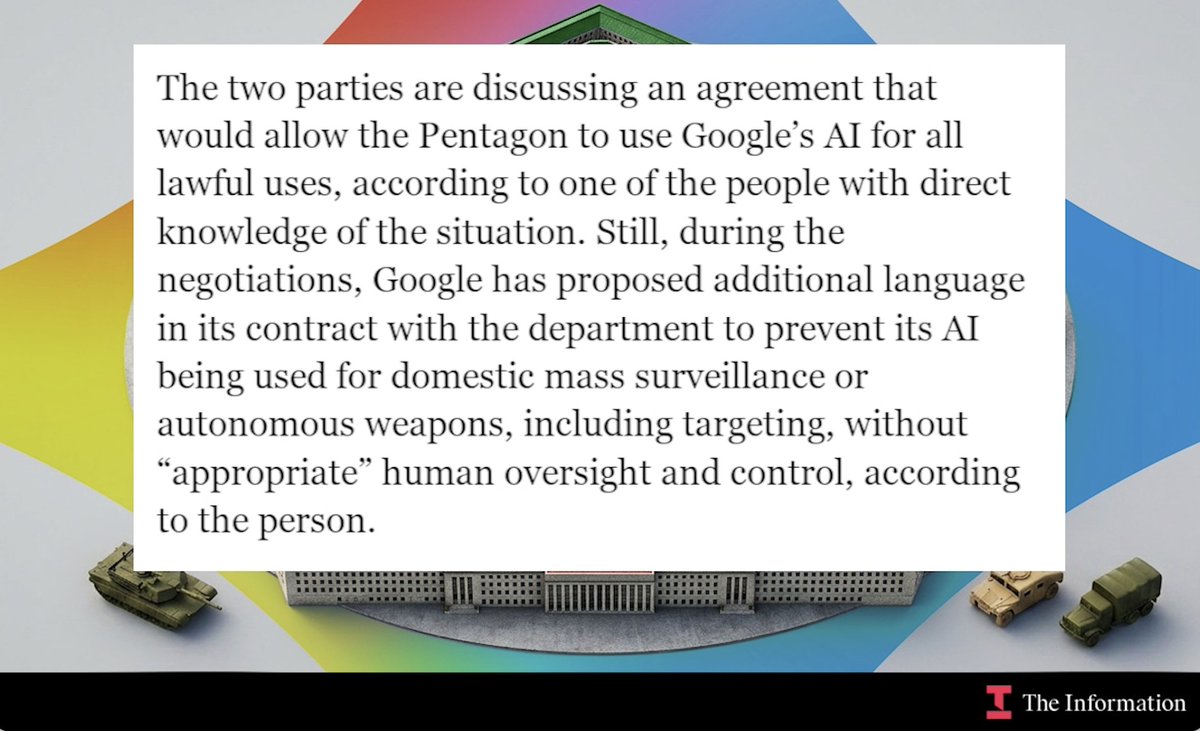

OpenAI has reportedly secured a contract with the U.S. Department of Defense (DoD) that includes specific ethical limitations, something competitor Anthropic reportedly failed to achieve in its own negotiations. According to sources, the agreement includes three key restrictions: no use of OpenAI technology for mass domestic surveillance, no use for directing autonomous weapons systems, and no use for high-stakes automated decisions like social credit systems.

The Deal's Specifics and Context

The reported agreement represents a carefully negotiated middle ground between OpenAI's previous prohibition on military use and the practical realities of working with defense agencies. OpenAI's usage policies previously banned "military and warfare" applications, but the company clarified in January 2024 that this didn't preclude working with defense organizations on non-combat applications like cybersecurity, veteran healthcare, and disaster response.

This new contract appears to formalize that distinction with specific guardrails. The prohibition on "mass domestic surveillance" addresses privacy concerns that have plagued AI deployment in other contexts, while the ban on directing autonomous weapons systems aligns with growing international concern about lethal autonomous weapons. The restriction on "high-stakes automated decisions" suggests recognition of AI's limitations in consequential domains affecting human rights and freedoms.

Contrast with Anthropic's Failed Negotiations

What makes this development particularly noteworthy is the contrast with Anthropic's experience. According to the available reporting, Anthropic attempted to negotiate similar ethical restrictions in its own potential DoD contract but was unsuccessful. The reasons for the different outcomes remain unclear, because neither the full OpenAI contract nor the details of Anthropic's negotiations are publicly available.

Several factors might explain the different outcomes. OpenAI's established market position and existing government relationships through Microsoft may have provided greater negotiating leverage. Alternatively, the specific timing or nature of the proposed projects might have differed. Some industry observers speculate that OpenAI's more pragmatic approach to government engagement, compared with Anthropic's sometimes more idealistic positioning, could have influenced the outcomes.

Implications for AI Governance and Industry Competition

This development has significant implications for how leading AI companies engage with government entities. OpenAI's successful negotiation of specific ethical boundaries sets a potential precedent for future government contracts, suggesting that companies can maintain ethical principles while participating in defense work. However, it also raises questions about consistency in government contracting and whether ethical standards will be applied uniformly across vendors.

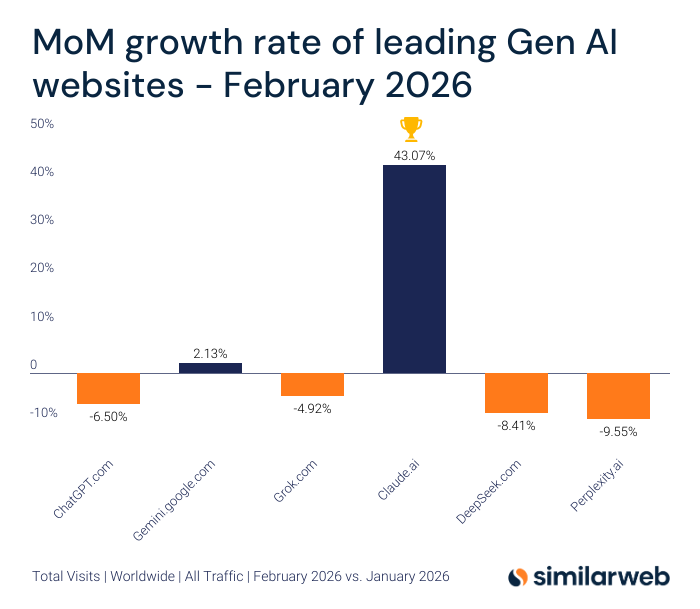

The competitive implications are equally significant. Securing a DoD contract with favorable terms could provide OpenAI with valuable government experience, funding, and credibility that strengthens its position against competitors. For Anthropic, the failed negotiations represent a missed opportunity in a potentially lucrative market segment.

Transparency and Verification Challenges

A major limitation in assessing this development is the lack of public documentation. Without access to the full contract, it's impossible to verify the exact scope of restrictions or whether they contain loopholes or exceptions. The phrase "upon initial review" in source materials suggests even those reporting on the deal haven't seen complete documentation.

This transparency gap is particularly concerning given the sensitive nature of military AI applications. Civil society organizations and AI ethics advocates have consistently called for greater transparency in government AI procurement to ensure proper oversight and accountability. The limited information available about this contract makes independent assessment of its ethical adequacy difficult.

Broader Industry Trends in Military AI Engagement

This development occurs within a broader trend of AI companies navigating complex relationships with military and intelligence agencies. Google faced employee protests over Project Maven in 2018, which led the company to publish AI principles that limited but didn't eliminate defense work. Microsoft has actively pursued defense contracts while establishing responsible AI principles. Amazon continues to provide AI services to government agencies through AWS.

OpenAI's approach appears distinct in its specificity—rather than broad principles, the reported contract includes concrete prohibitions on specific application categories. This could represent an evolution in how AI companies structure government engagements, moving from general guidelines to negotiated, contract-specific restrictions.

Future Implications and Unanswered Questions

The long-term implications will depend on several factors: whether these restrictions prove effective in practice, whether they become a model for other government contracts, and how they balance innovation with ethical constraints. Key questions remain unanswered:

- What enforcement mechanisms exist if restrictions are violated?

- How are key terms like "mass domestic surveillance" and "autonomous weapons systems" defined?

- What oversight provisions are included?

- How does this align with broader U.S. and international AI governance efforts?

As AI capabilities advance, the tension between technological potential, ethical considerations, and national security needs will only intensify. This contract negotiation—and the different outcomes for two leading AI companies—illustrates the complex balancing act facing both technology providers and government agencies in the AI era.

Source: Initial reporting based on analysis of available contract information as shared by industry observers. Full contract documentation not publicly available.