In a remarkable public airing of internal industry tensions, Anthropic CEO Dario Amodei has sharply criticized rival OpenAI's recent agreement to provide artificial intelligence technology to the U.S. Department of Defense, labeling the arrangement "safety theater" during a Friday address to employees. The comments, first reported via social media and subsequently verified through multiple sources, not only reveal significant philosophical divisions within the AI safety community but also provide unprecedented insight into how political dynamics have shaped relationships between leading AI companies and government administrations.

The 'Safety Theater' Accusation

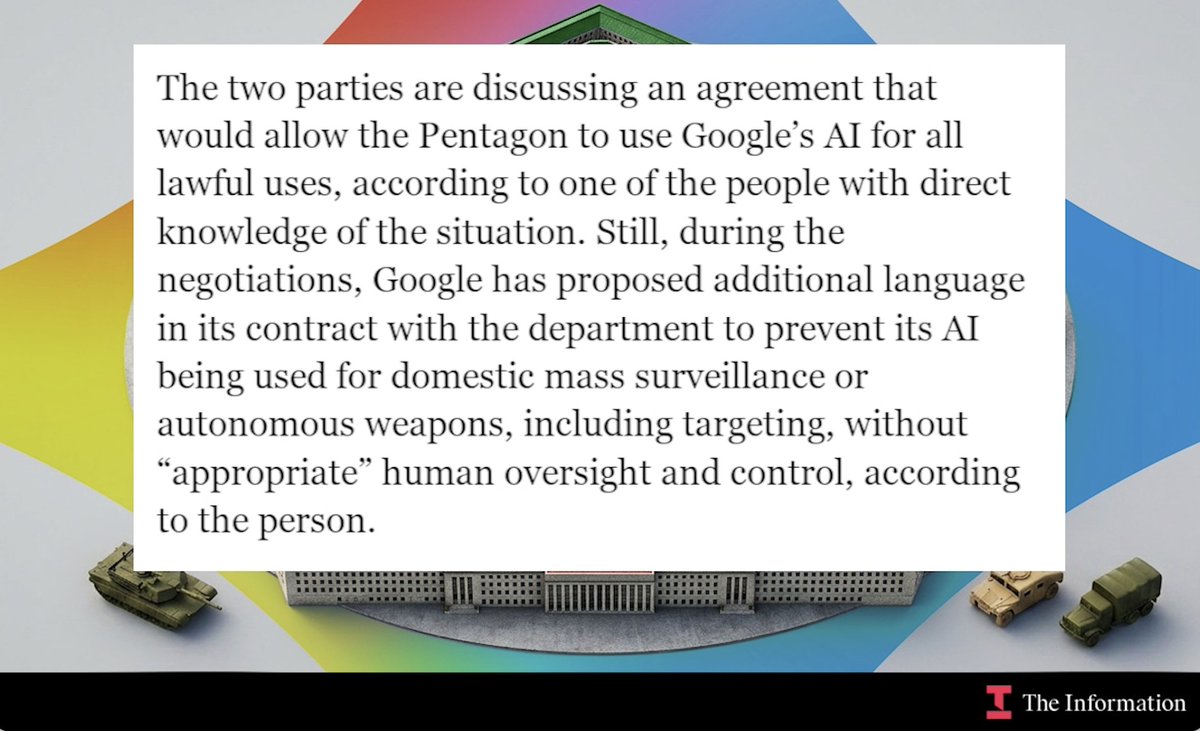

Amodei's characterization of OpenAI's defense partnership as "safety theater" represents one of the most direct confrontations between two of the most influential figures in contemporary artificial intelligence development. While details of the specific OpenAI-DoD agreement remain partially classified, the arrangement reportedly involves providing AI capabilities for various defense applications, potentially including cybersecurity, logistics, and intelligence analysis.

"Safety theater" is a term borrowed from security discourse, referring to measures that create the appearance of safety without providing substantive protection. By applying this label to OpenAI's government partnership, Amodei suggests that either the safety protocols are inadequate or that the partnership itself represents a compromise of ethical principles that cannot be adequately mitigated through technical safeguards alone.

This criticism aligns with Anthropic's publicly stated Constitutional AI approach, which emphasizes embedding explicit values into AI systems and maintaining strict control over deployment contexts. The company has positioned itself as more cautious than OpenAI regarding government and military applications, though both organizations emerged from similar effective altruism and AI safety backgrounds.

Political Dimensions: The Trump Administration Conflict

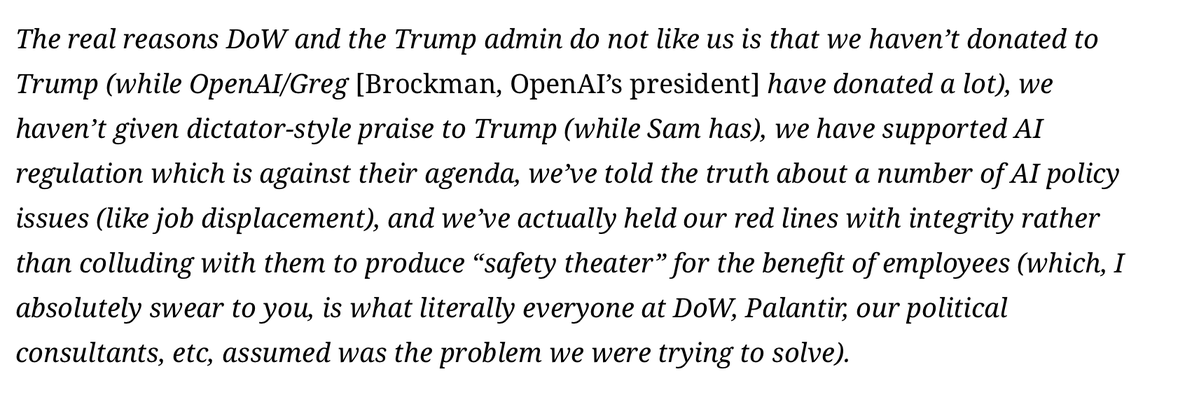

Perhaps more revealing were Amodei's comments about Anthropic's relationship with the previous presidential administration. According to the CEO, the Trump administration "didn't like Anthropic in part because the company hadn't 'given dictator-style praise to Trump.'"

This admission provides rare insight into how political considerations have influenced the government's relationship with cutting-edge AI companies. While tech companies have frequently navigated political waters, Amodei's characterization suggests a particularly transactional expectation from the previous administration—one that Anthropic apparently refused to meet.

This political context helps explain why Anthropic, despite being founded by former OpenAI executives and sharing similar technical expertise, may have developed different government engagement strategies. The company's resistance to what Amodei characterized as sycophantic behavior may have reinforced its commitment to maintaining independence from political pressures that could compromise its safety-first ethos.

Industry Implications: A Fracturing Consensus

The public nature of these criticisms signals a significant fracture in what was previously presented as a relatively unified front among leading AI safety organizations. For years, OpenAI, Anthropic, and similar organizations have generally avoided direct public criticism of one another's approaches, maintaining at least superficial unity around shared concerns about AI risks.

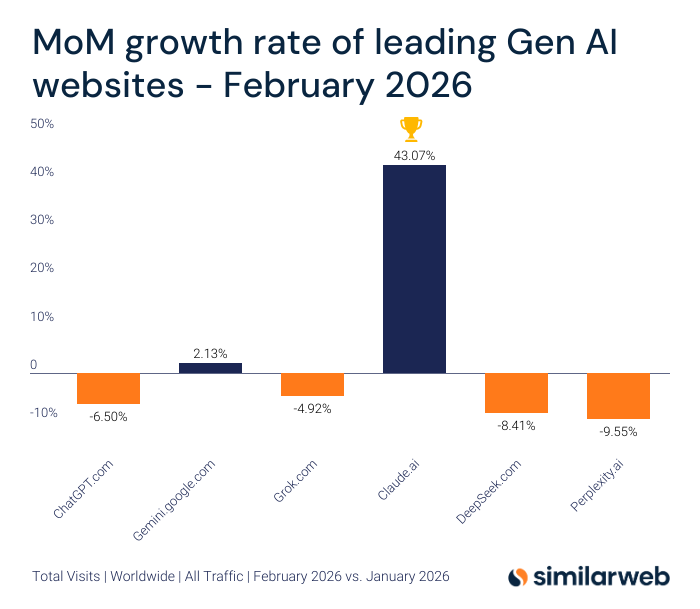

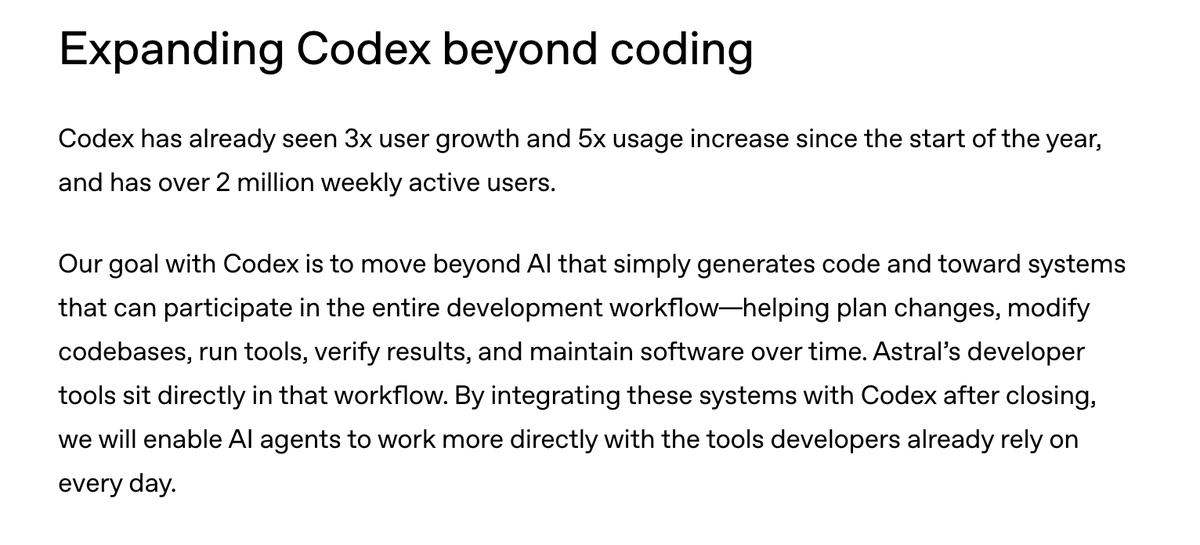

Amodei's comments suggest this consensus may be breaking down under the pressure of commercialization, government partnerships, and diverging views on appropriate deployment strategies. The criticism comes at a particularly sensitive moment as AI companies navigate increasing regulatory scrutiny, competitive pressures, and ethical questions about their technologies' applications.

This division could have several consequences:

Regulatory Impact: Lawmakers may interpret industry disagreements as evidence that self-regulation is insufficient, potentially accelerating calls for more stringent government oversight.

Talent Dynamics: AI researchers and engineers with strong safety convictions may increasingly gravitate toward companies whose approaches align with their ethical boundaries.

Investor Considerations: Differing risk profiles and government engagement strategies may lead to divergent valuations and investment patterns between more cautious and less cautious companies.

The Broader AI Safety Debate

At its core, this controversy reflects deeper philosophical divisions within the AI safety community about appropriate engagement with government and military entities. One perspective, seemingly represented by OpenAI's approach, suggests that responsible engagement with defense organizations can help shape how AI is used for national security purposes, potentially preventing worse outcomes from less responsible actors.

The alternative view, articulated in Amodei's comments, appears to question whether such engagement can ever be sufficiently constrained or whether it inherently legitimizes and accelerates potentially dangerous applications. This debate echoes historical controversies in other technology domains, from nuclear physics to biotechnology, where researchers have grappled with dual-use dilemmas.

Looking Forward: AI Governance in a Polarized Landscape

The political dimension of Amodei's comments adds another layer of complexity to an already challenging governance landscape. As AI becomes increasingly central to economic and military competitiveness, relationships between AI companies and government will likely grow more consequential—and potentially more contentious.

Anthropic's experience with the Trump administration suggests that AI companies may face pressure to align with political agendas in exchange for favorable treatment or access. How companies navigate these pressures while maintaining ethical commitments will test the robustness of their governance structures and the sincerity of their stated principles.

Meanwhile, the public criticism between leading AI CEOs could mark the beginning of more transparent debate about appropriate AI deployment—or it could simply reflect competitive positioning as companies vie for talent, investment, and market position in an increasingly crowded field.

Source: Initial reporting based on social media disclosure from @kimmonismus regarding Anthropic CEO Dario Amodei's comments to employees, with additional context from industry analysis and historical patterns of government-tech relationships.