Researchers have introduced QAsk-Nav, the first reproducible benchmark designed specifically for Collaborative Instance Object Navigation (CoIN) that enables explicit, separate assessment of embodied navigation and collaborative question-asking capabilities. Published on arXiv on March 31, 2026, the work addresses a critical gap in evaluating AI agents that must navigate physical spaces while interacting with humans through natural language dialogue.

The Problem with Current CoIN Benchmarks

Collaborative Instance Object Navigation tasks an embodied agent with reaching a target specified in free-form natural language under partial observability. The agent uses only egocentric visual observations and interactive natural-language dialogue with a human to resolve ambiguity among visually similar object instances. For example, an agent might need to find "the blue mug with a chip on the handle" in a kitchen containing multiple blue mugs, requiring it to ask clarifying questions like "Is the chip on the left or right side of the handle?"

Existing CoIN benchmarks have primarily focused on navigation success as the sole metric, offering no support for consistent evaluation of collaborative interaction quality. This makes it difficult to determine whether failures stem from poor navigation, ineffective questioning, or both—a critical distinction for improving these systems.

What QAsk-Nav Provides

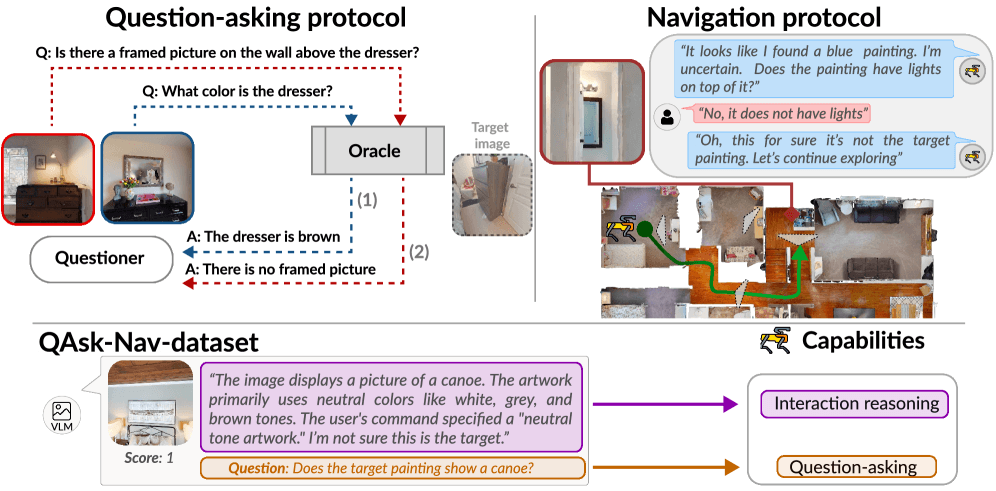

The QAsk-Nav benchmark introduces three key components:

A lightweight question-asking protocol scored independently of navigation - This allows researchers to evaluate an agent's ability to ask effective, clarifying questions separate from its ability to navigate to the correct location.

An enhanced navigation protocol with realistic, diverse, high-quality target descriptions - The benchmark includes more natural language descriptions that better reflect how humans would actually describe objects in real-world scenarios.

An open-source dataset with 28,000 quality-checked reasoning and question-asking traces - This substantial dataset provides training data and analysis tools for developing and evaluating CoIN models' interactive capabilities.

Light-CoNav: A Unified Model for Collaborative Navigation

Using the QAsk-Nav benchmark, the researchers developed Light-CoNav, a lightweight unified model for collaborative navigation that demonstrates significant advantages over existing approaches:

Key Performance Metrics

Model Size 3x smaller Baseline 67% reduction Inference Speed 70x faster Baseline 98.6% faster Generalization to Unseen Objects Outperforms SOTA State-of-the-art CoIN approaches Better performance Generalization to Unseen Environments Outperforms SOTA State-of-the-art CoIN approaches Better performanceLight-CoNav achieves these gains through a unified architecture that processes both visual navigation and language interaction in a single model, eliminating the overhead and coordination challenges of modular systems that separate these components.

Technical Implementation

The benchmark is built on realistic simulation environments where agents must navigate to specific object instances based on natural language descriptions. The evaluation protocol separates scoring into:

- Navigation Success Rate: Whether the agent reaches the correct target object

- Question Quality Score: How effectively the agent asks clarifying questions when faced with ambiguity

- Interaction Efficiency: How many dialogue turns are needed to resolve ambiguity

The 28,000 quality-checked traces include human-AI interaction logs that capture reasoning processes, question-asking strategies, and navigation decisions, providing rich training data for future models.

Why This Matters for Embodied AI

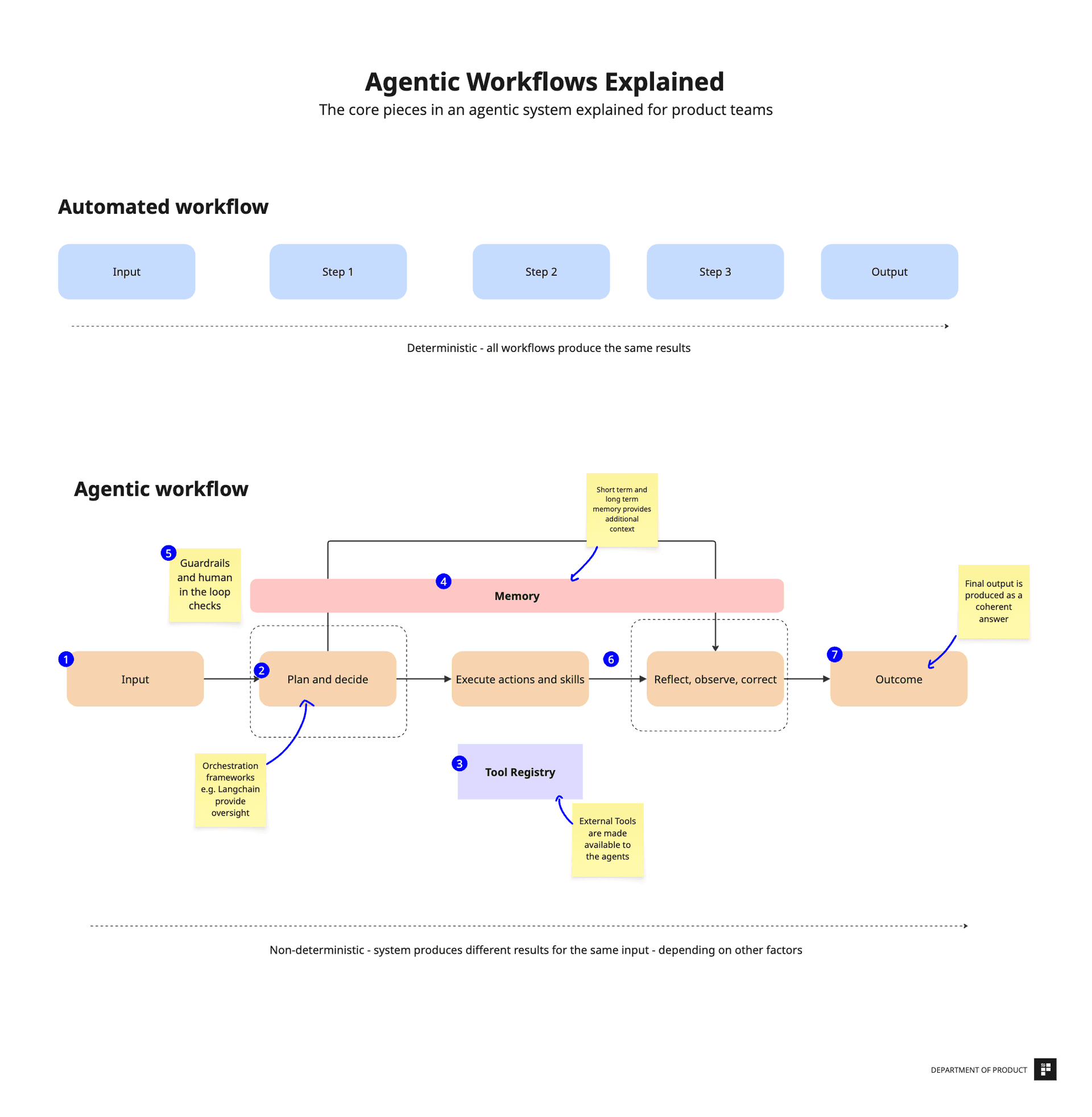

Separating navigation and dialogue evaluation addresses a fundamental challenge in developing collaborative embodied agents. In real-world applications—from home assistance robots to warehouse navigation systems—agents need both strong spatial reasoning and effective communication skills. By providing tools to measure these capabilities independently, QAsk-Nav enables more targeted improvements and better understanding of where systems fail.

The efficiency gains demonstrated by Light-CoNav are particularly significant for real-world deployment. A model that's 3x smaller and 70x faster than existing approaches could enable collaborative navigation on edge devices with limited computational resources, opening up new application possibilities.

gentic.news Analysis

This work arrives during a period of intense focus on AI agent evaluation and benchmarking, as evidenced by arXiv's recent activity. Just days before this paper's publication, arXiv hosted studies on RAG system vulnerabilities (March 27) and LLMs as essay graders (March 24), reflecting the broader research community's push toward more rigorous, nuanced evaluation methodologies. The trend toward specialized benchmarks like QAsk-Nav represents a maturation of the field—moving beyond simple accuracy metrics to more sophisticated assessments that capture multi-faceted capabilities.

The emphasis on reproducibility in QAsk-Nav aligns with growing concerns in the AI research community about benchmark gaming and inconsistent evaluation practices. This follows recent work we covered on Emergence WebVoyager (April 1), which exposed inconsistencies in web agent evaluation, suggesting a coordinated push toward more transparent, standardized testing frameworks across different AI agent domains.

Light-CoNav's unified architecture approach contrasts with the modular systems that have dominated embodied AI research. This architectural shift—from separate navigation and language modules to integrated models—parallels similar consolidation trends in other AI domains, where end-to-end training often outpercomes pipelined approaches once sufficient data and computational resources become available.

The 70x speed improvement is particularly noteworthy given recent discussions about throughput as a strategic lever in AI systems (covered March 31). As AI applications move from research to production, inference efficiency becomes increasingly critical, making Light-CoNav's performance gains practically significant beyond just academic benchmarks.

Frequently Asked Questions

What is Collaborative Instance Object Navigation (CoIN)?

Collaborative Instance Object Navigation is a task where an embodied AI agent must navigate to a specific object instance based on a natural language description, using only egocentric visual observations and the ability to ask clarifying questions to a human when faced with ambiguity. It combines computer vision, natural language processing, and robotics challenges.

How does QAsk-Nav differ from previous navigation benchmarks?

Previous benchmarks primarily measured navigation success as a single metric. QAsk-Nav introduces separate scoring for navigation and question-asking capabilities, provides higher-quality natural language descriptions, and includes a large dataset of interaction traces for training and analysis. This enables more nuanced evaluation and targeted improvement of collaborative agents.

Why is Light-CoNav 70x faster than previous methods?

Light-CoNav uses a unified architecture that processes both visual and language inputs in a single model, eliminating the overhead of coordinating separate navigation and dialogue modules. This architectural efficiency, combined with optimization techniques, results in significantly faster inference times while maintaining or improving accuracy.

What are the practical applications of this research?

This research enables more effective collaborative robots for home assistance, warehouse navigation, healthcare support, and other scenarios where AI agents must work alongside humans in physical environments. The efficiency gains could allow such systems to run on less powerful hardware, making them more affordable and accessible for real-world deployment.

Project page: https://benchmarking-interaction.github.io/