A tweet from user @heygurisingh has gone viral, claiming that "Scientists just proved ChatGPT is making you stupid." The tweet, which has been retweeted thousands of times, asserts this is not a "might" or "could" scenario, but a proven fact based on a study of 1,222 people.

What the Tweet Claims

The source material is a single retweet with no link to a research paper, preprint server (like arXiv), or institutional press release. The core claim is declarative: a scientific study involving 1,222 participants has conclusively demonstrated that using ChatGPT leads to reduced cognitive capacity or "stupidity."

The Immediate Problem: No Source

As of this writing, no peer-reviewed study, preprint, or detailed methodology matching this claim has been identified. The tweet provides no authors, institution, journal name, or outcome measures. In AI research, a claim this bold needs transparent data, defined constructs (what is "stupidity"?), controlled experiments, and statistical analysis before it can be taken seriously.

Key Missing Information:

- The study's design: Was it longitudinal? A controlled lab experiment? A survey?

- The measured variable: How was "stupidity" or cognitive decline operationalized and measured?

- The control group: Were there non-ChatGPT users for comparison?

- Causation vs. Correlation: Does the study establish that ChatGPT causes a decline, or simply observes a relationship?
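
The causation-versus-correlation question in the list above can be made concrete with a small simulation. The sketch below is purely illustrative: it uses synthetic data, not data from the claimed study, and the hidden confounder ("workload"), the effect sizes, and the function names are all assumptions invented for the example. It borrows only the sample size of 1,222 from the tweet, and it deliberately gives chatbot usage zero causal effect on test scores.

```python
import random
from math import sqrt


def pearson(xs, ys):
    """Pearson correlation coefficient of two equal-length sequences."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    return cov / sqrt(var_x * var_y)


def simulate(n=1222, randomized=False, seed=0):
    """Return the usage/score correlation for n synthetic participants.

    Hypothetical model: 'workload' is a hidden confounder that lowers
    test scores. Usage never affects the score in this simulation.
    """
    rng = random.Random(seed)
    usage, scores = [], []
    for _ in range(n):
        workload = rng.gauss(0, 1)  # hidden confounder (assumed)
        if randomized:
            # Controlled experiment: usage assigned independently of workload.
            use = rng.gauss(0, 1)
        else:
            # Observational survey: busier people use the tool more.
            use = workload + rng.gauss(0, 1)
        # Score depends only on workload and noise, never on usage.
        score = -workload + rng.gauss(0, 1)
        usage.append(use)
        scores.append(score)
    return pearson(usage, scores)


observational_r = simulate(randomized=False)
randomized_r = simulate(randomized=True)
print(f"observational correlation: {observational_r:.2f}")
print(f"randomized correlation:    {randomized_r:.2f}")
```

In the observational run, usage and scores come out negatively correlated even though usage has no effect on scores in this model; under random assignment the correlation should sit near zero. A sample of 1,222 people does not resolve the question on its own, which is why the study design and control group matter.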

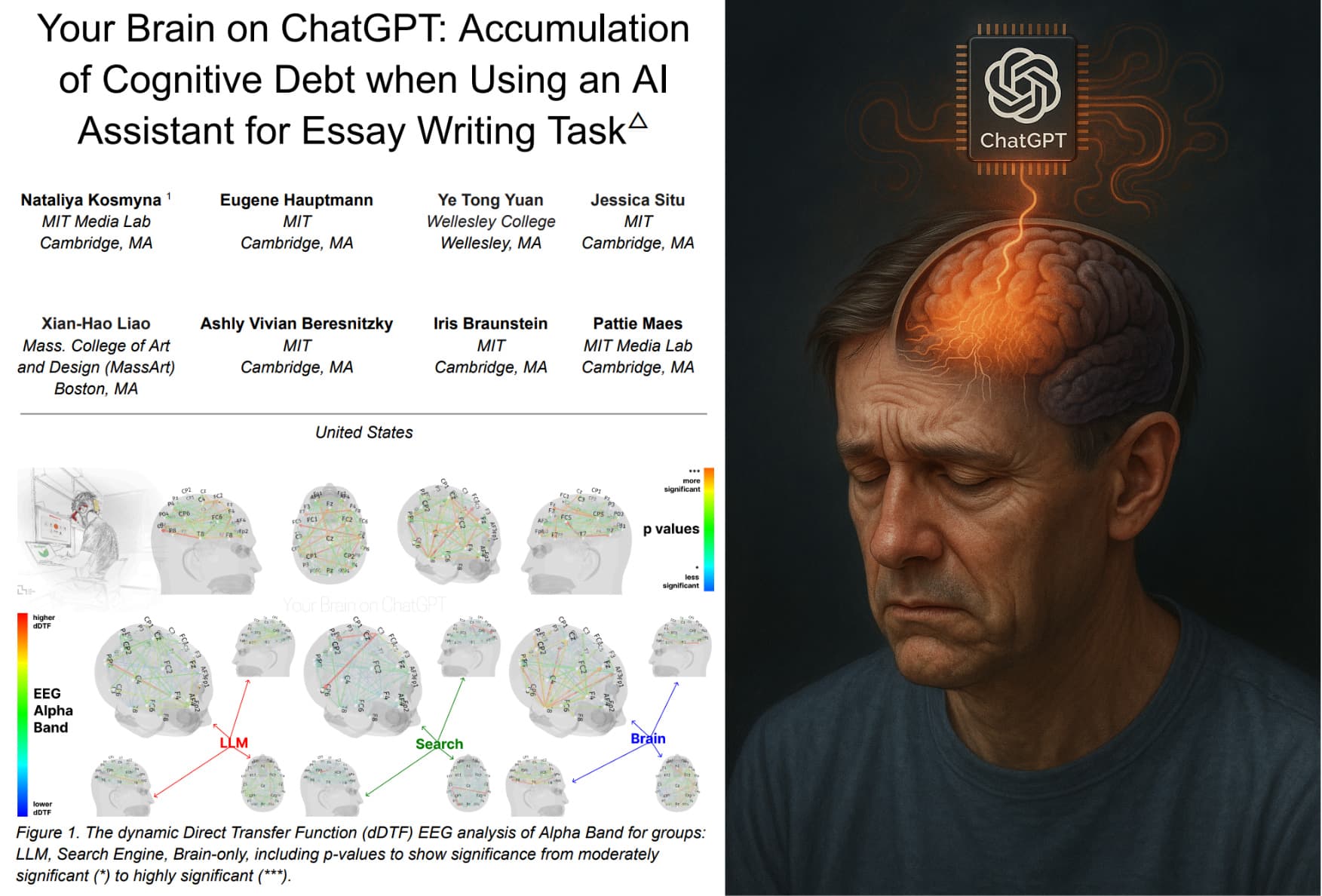

Context: The Real Academic Debate on LLMs and Cognition

While this specific viral claim lacks substantiation, it taps into a genuine and active area of research and concern. Scholars in human-computer interaction, psychology, and education are investigating how reliance on large language models (LLMs) might affect:

- Cognitive offloading: The tendency to outsource thinking (e.g., problem-solving, writing, coding) to a tool, potentially leading to skill atrophy.

- Critical thinking: Reduced incentive to verify AI-generated outputs, leading to the uncritical acceptance of plausible but incorrect information (often called the "fluency trap").

- Learning outcomes: Preliminary studies in educational settings show mixed results, with some finding LLMs can hinder deep learning if used as a crutch, while others show they can be effective tutors.

Recent, credible research has explored related themes. For instance, a 2025 study in Nature Human Behaviour examined how AI assistants affect problem-solving diversity in groups, finding they can reduce the range of ideas generated.

gentic.news Analysis

This viral episode is less about a new scientific finding and more about the sociology of AI discourse. It highlights how potent, simplified narratives about AI's dangers—especially those concerning human capability—can spread rapidly in the absence of primary sources. The tweet frames the issue in the most alarmist possible terms ("making you stupid"), which is effective for engagement but antithetical to scientific nuance.

This pattern is consistent with the broader "AI anxiety" trend we've tracked, where public concern oscillates between existential risk and more immediate, human-centric impacts like job displacement or cognitive erosion. The claim directly contradicts the dominant marketing narrative from LLM providers like OpenAI, Anthropic, and Google, which position their models as "reasoning engines" and "productivity multipliers" that augment, rather than diminish, human intelligence.

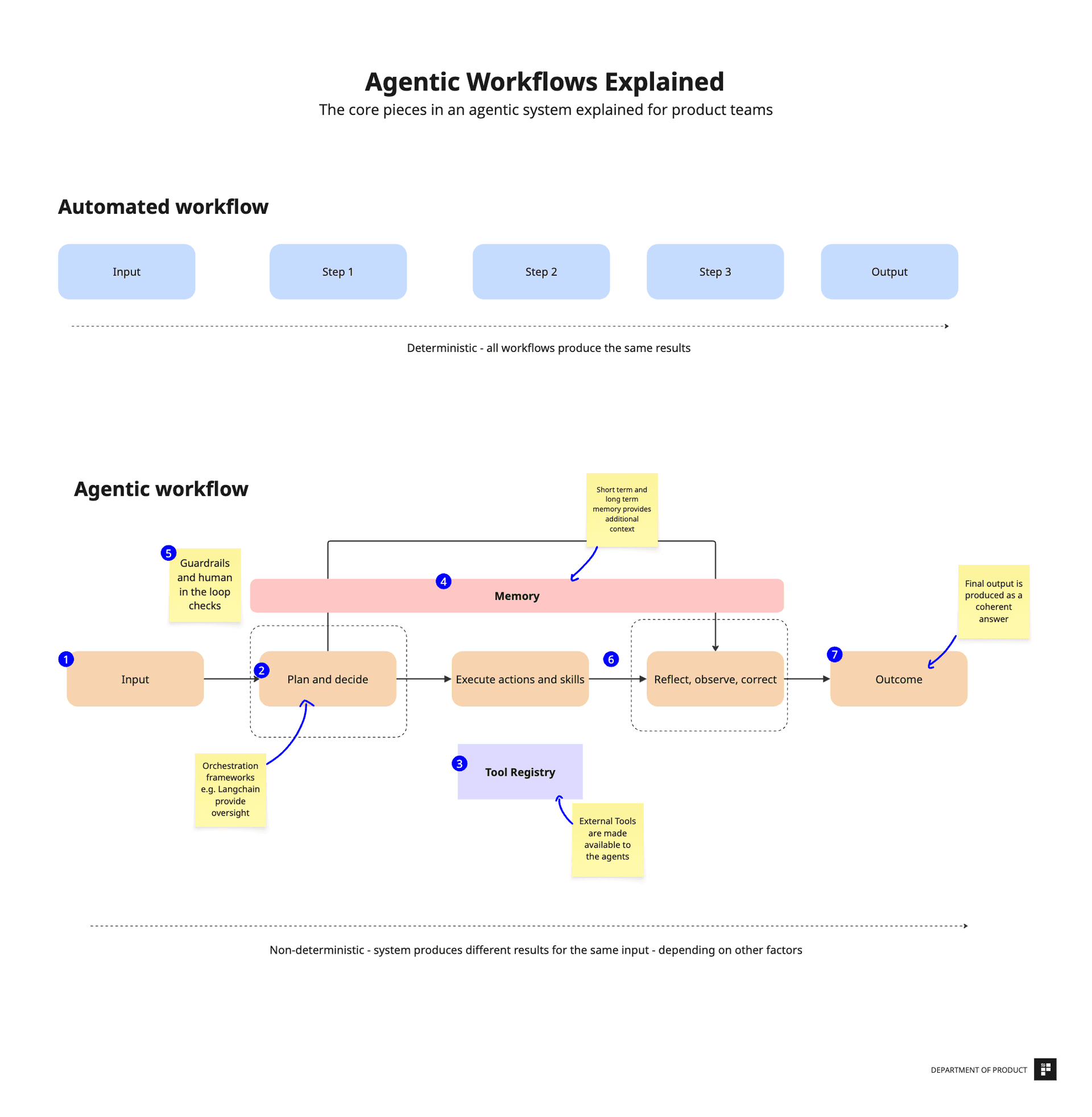

For practitioners, this serves as a critical reminder: the impact of AI tools is not deterministic. It is mediated by how they are used. The cognitive effects of ChatGPT likely fall on a spectrum, influenced by user expertise, task design, and the presence of guardrails that encourage critical engagement rather than passive consumption. The real research challenge is not proving AI "makes you stupid," but defining the conditions under which it enhances versus undermines complex cognitive skills.

Frequently Asked Questions

Is there really a study that proves ChatGPT makes you dumber?

As of April 2026, no such peer-reviewed study has been verified. The viral tweet references a study of 1,222 people but provides no citation, authors, or data. Extraordinary claims require extraordinary evidence, and the AI research community operates on shared data and methods. Until the full study is published for scrutiny, the claim remains an unsubstantiated viral assertion.

What does real research say about AI and human cognition?

Legitimate research is ongoing and shows nuanced effects. Some studies suggest that over-reliance on AI for tasks like writing or coding can lead to cognitive offloading, where skills may atrophy if not practiced. Others focus on automation bias, the tendency to trust AI outputs uncritically. Still other studies show that LLMs can be powerful tools for learning and brainstorming when used interactively. The impact depends heavily on context and user behavior.

How can I use LLMs like ChatGPT without harming my own skills?

Experts suggest treating LLMs as collaborators or tutors, not replacements. Use them to generate drafts or explore ideas, but then critically edit, verify facts, and rework the output in your own words. For learning, try to solve a problem yourself first, then use the AI to check your work or explain gaps. The key is to maintain an active, engaged cognitive role rather than passively accepting the model's outputs.

Why do claims like this spread so quickly?

Claims about technology "making us stupid" tap into deep-seated cultural anxieties that date back to Socrates' worries about writing. They are simple, emotionally resonant, and align with a common intuition that easy tools might make us lazy. In the fast-paced world of social media, such stark narratives often travel further and faster than complex, qualified academic findings, which require more time and expertise to parse.