As large language models (LLMs) become increasingly integrated into critical decision-making systems—from healthcare diagnostics to financial analysis—a fundamental trustworthiness problem persists: these models often don't know when they're wrong. Published on arXiv on February 18, 2026, a groundbreaking paper titled "Know When You're Wrong: Aligning Confidence with Correctness for LLM Error Detection" introduces a surprisingly simple yet effective solution to this problem.

The Confidence-Correctness Gap

The core challenge addressed by the research is what the authors call "the lack of reliable methods to measure [LLMs'] uncertainty." Current LLMs can produce convincing but incorrect answers with unwarranted confidence, a phenomenon particularly dangerous in high-stakes applications. Traditional approaches to error detection often require external validation systems or complex ensemble methods, creating significant computational overhead and implementation barriers.

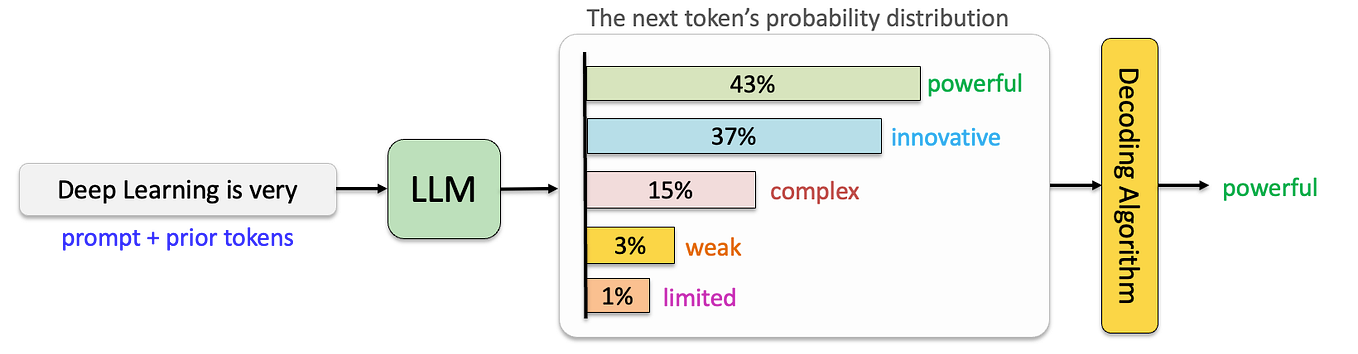

What makes this research particularly compelling is its elegant simplicity. The researchers propose a normalized confidence score based on output anchor token probabilities. For structured tasks like classification, this means looking at the probability assigned to the chosen label token. For open-ended generation tasks, the method uses self-evaluation responses (Yes/No) as anchor points. This approach enables direct detection of errors and hallucinations with minimal computational overhead and without requiring external validation systems.

Three Key Contributions

The paper makes three significant contributions that collectively advance the field of trustworthy AI:

First, the researchers demonstrate that their normalized confidence score and self-evaluation framework produces reliable confidence estimates across seven diverse benchmark tasks and five LLMs of varying architectures and sizes. This breadth of validation is crucial, as it shows the method's robustness across different types of tasks and model designs.

Second, their theoretical analysis reveals a critical insight about different training methodologies. Supervised fine-tuning (SFT) yields well-calibrated confidence through maximum-likelihood estimation, while reinforcement learning methods—including Proximal Policy Optimization (PPO), Group Relative Policy Optimization (GRPO), and Direct Preference Optimization (DPO)—induce overconfidence through reward exploitation. This finding helps explain why RL-trained models often appear more confident but less reliable.

Third, the researchers propose a practical solution: post-RL SFT with self-distillation to restore confidence reliability in reinforcement learning-trained models. This approach offers a path forward for teams that have invested in RL training but need more trustworthy confidence estimates.

Empirical Results and Practical Applications

The empirical results are striking. On the Qwen3-4B model, supervised fine-tuning improved average confidence-correctness AUROC (Area Under the Receiver Operating Characteristic curve) from 0.806 to 0.879 and reduced calibration error from 0.163 to 0.034. Meanwhile, GRPO and DPO degraded confidence reliability, confirming the theoretical analysis about reinforcement learning methods inducing overconfidence.

The practical value of this research was demonstrated through an adaptive retrieval-augmented generation (RAG) system that selectively retrieves external context only when the model lacks confidence. This system achieved remarkable efficiency, using only 58% of retrieval operations to recover 95% of the maximum achievable accuracy gain on the TriviaQA benchmark. Such applications could significantly reduce computational costs while maintaining performance in real-world systems.

Broader Implications for AI Development

This research arrives at a critical juncture in AI development. As noted in the paper's abstract, LLMs are "increasingly deployed in critical decision-making systems," making confidence calibration not just an academic concern but a practical necessity for safe deployment. The method's minimal overhead makes it particularly attractive for production systems where computational efficiency matters.

The findings about different training methodologies also have important implications for how we develop future AI systems. The revelation that reinforcement learning methods induce overconfidence through reward exploitation suggests that current RL approaches may need refinement when confidence calibration is important. The proposed post-RL SFT with self-distillation offers one pathway forward, but the research may also inspire new training approaches designed specifically for well-calibrated confidence.

Looking Forward

While the paper represents a significant advance, several questions remain for future research. How well does the method scale to even larger models? Can the approach be extended to multimodal systems that process both text and images? How might the confidence scores be integrated into user interfaces to help human operators make better decisions with AI assistance?

What's clear from this research is that simple, elegant solutions can sometimes address complex problems in AI. By focusing on anchor token probabilities and self-evaluation responses, the researchers have developed a method that could make AI systems more trustworthy and practical for real-world applications. As AI continues to integrate into critical systems, such advances in self-awareness and error detection will be essential for building public trust and ensuring safe deployment.

Source: "Know When You're Wrong: Aligning Confidence with Correctness for LLM Error Detection" (arXiv:2603.06604v1, submitted February 18, 2026)