In the high-stakes world of medical diagnosis, every question matters. When a patient presents with symptoms, a clinician must navigate a complex information-gathering process—asking the right follow-up questions, interpreting ambiguous responses, and gradually narrowing down possibilities. This multi-turn dialogue presents a formidable challenge for artificial intelligence systems, which must balance exploration (gathering more information) with exploitation (making a diagnosis) under conditions of inherent uncertainty.

A paper submitted to arXiv on February 10, 2026, introduces Adaptive Tree Policy Optimization (ATPO), a reinforcement learning algorithm designed to align large language models (LLMs) for these interactive medical scenarios. The headline result is that a relatively modest Qwen3-8B model trained with ATPO surpassed the much larger GPT-4o by 0.92% in diagnostic accuracy on medical dialogue benchmarks.

The Challenge of Medical Dialogue AI

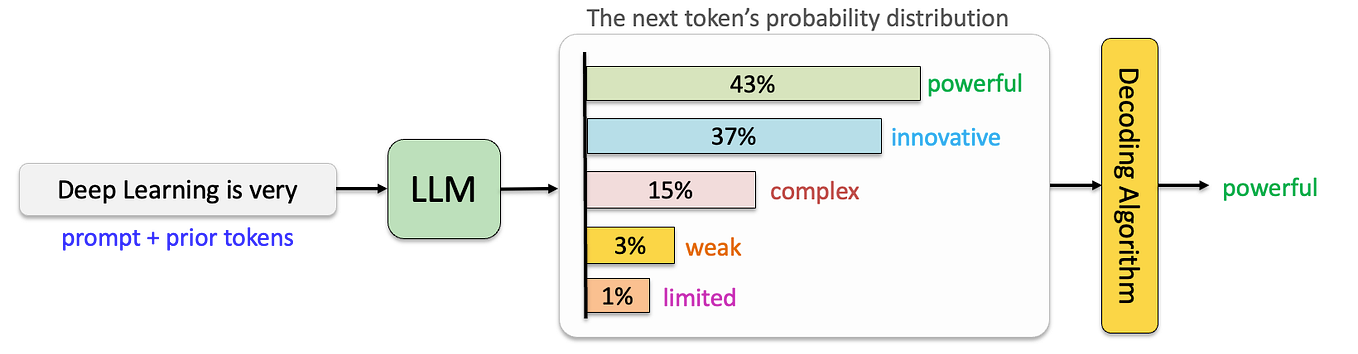

Medical diagnosis through dialogue represents what researchers call a Hierarchical Markov Decision Process (H-MDP). At each turn in the conversation, the AI agent must decide what question to ask or what diagnostic conclusion to draw, with each decision affecting future possibilities. The uncertainty is multi-layered: uncertainty about the patient's true condition, uncertainty about how they will respond to questions, and uncertainty about which information-gathering strategy will be most efficient.
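To make the decision-process framing concrete, here is a minimal sketch of a single dialogue turn as a state transition. The names `DialogueState` and `step`, the string action format, and the 0/1 reward are illustrative assumptions, not the environment used in the paper.

```python
from dataclasses import dataclass, field


@dataclass
class DialogueState:
    """Dialogue history so far; the patient's true condition stays hidden from the agent."""
    history: list = field(default_factory=list)
    done: bool = False


def step(state, action, patient_answers, true_condition):
    """Apply one agent action and return (next_state, reward).

    Hypothetical action format: "ask:<topic>" gathers information at zero
    reward, "diagnose:<condition>" ends the episode with reward 1.0 if correct.
    """
    kind, _, arg = action.partition(":")
    if kind == "ask":
        answer = patient_answers.get(arg, "not sure")
        return DialogueState(state.history + [(arg, answer)]), 0.0
    if kind == "diagnose":
        reward = 1.0 if arg == true_condition else 0.0
        return DialogueState(state.history + [("diagnosis", arg)], done=True), reward
    raise ValueError(f"unknown action: {action}")


# Toy episode: one question, then a diagnosis.
answers = {"fever": "yes", "rash": "no"}
state = DialogueState()
state, r_ask = step(state, "ask:fever", answers, "influenza")
state, r_final = step(state, "diagnose:influenza", answers, "influenza")
```

The reward arrives only at the final turn, which is why deciding how much credit each earlier question deserves is hard.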

Conventional reinforcement learning approaches have struggled with this domain. Group Relative Policy Optimization (GRPO) faces difficulties with long-horizon credit assignment—determining which early questions contributed to a later correct diagnosis. Meanwhile, Proximal Policy Optimization (PPO) suffers from unstable value estimation in these complex, uncertain environments. Both limitations can lead to suboptimal questioning strategies and diagnostic errors.
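The credit assignment problem is easiest to see in a small sketch of a group-relative advantage as used in GRPO-style training: final rewards are normalized within a group of sampled dialogues, and every turn in a dialogue inherits the same value. The function names and the choice of population standard deviation are assumptions made for illustration.

```python
from statistics import mean, pstdev


def group_relative_advantages(rewards, eps=1e-8):
    """Normalize final rewards within a group of dialogues sampled for one case."""
    mu = mean(rewards)
    sigma = pstdev(rewards)  # assumption: population std; implementations vary
    return [(r - mu) / (sigma + eps) for r in rewards]


def broadcast_to_turns(advantage, num_turns):
    """Every turn of a dialogue receives the same trajectory-level advantage."""
    return [advantage] * num_turns


# Four sampled dialogues for one patient case: 1.0 = correct diagnosis, 0.0 = wrong.
rewards = [1.0, 0.0, 0.0, 1.0]
advantages = group_relative_advantages(rewards)
per_turn = broadcast_to_turns(advantages[0], num_turns=5)
```

Because all five turns of the first dialogue share one advantage, a decisive early question and an irrelevant one are rewarded identically. Per-state value estimates from a tree are meant to address this gap.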

How ATPO Works: Uncertainty-Aware Resource Allocation

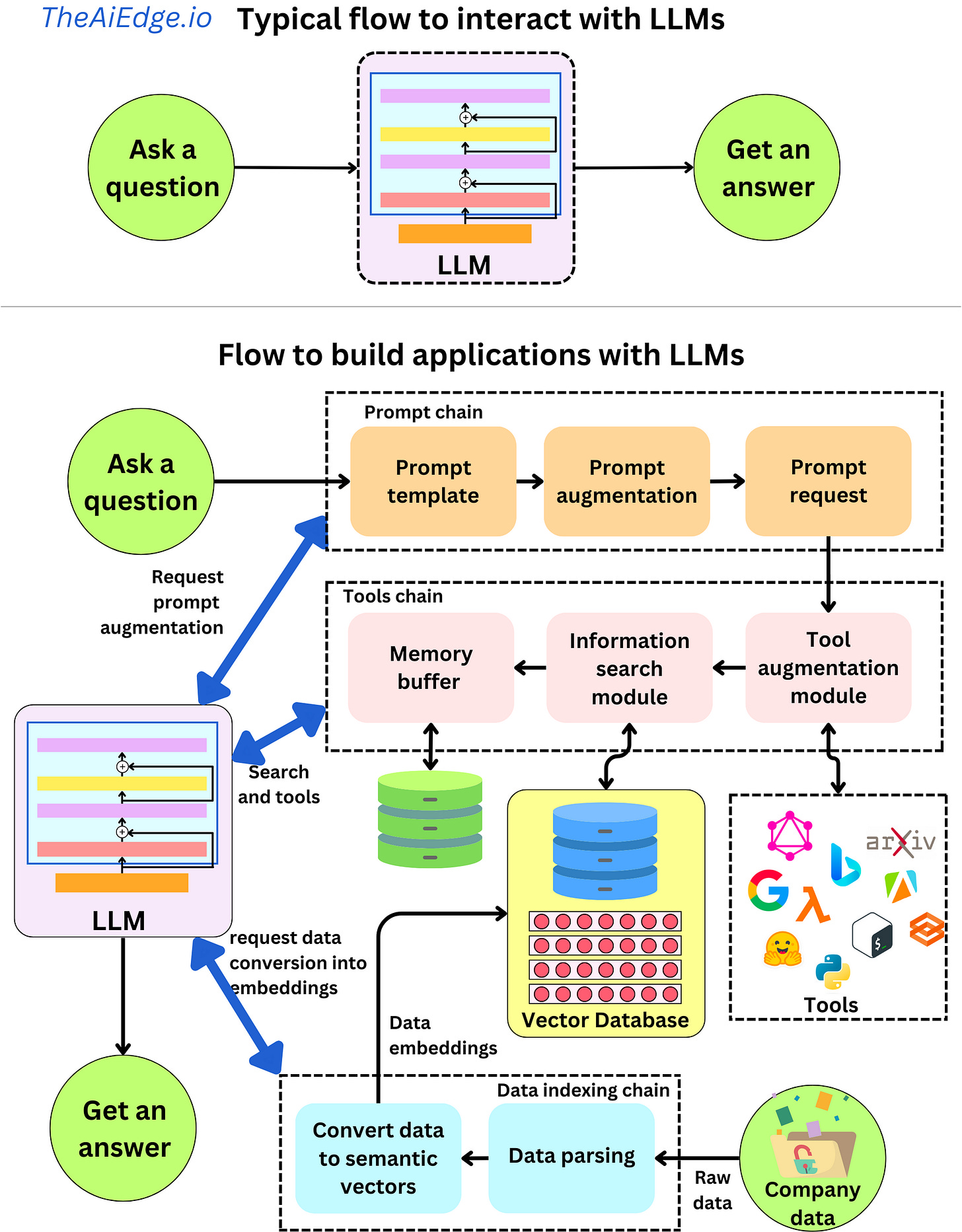

The core innovation of ATPO lies in its adaptive allocation of computational resources based on uncertainty. Rather than treating all possible conversational paths equally, the algorithm identifies states (points in the dialogue) where uncertainty is highest and allocates more "rollout budget"—more computational exploration—to those areas.
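One simple way to picture uncertainty-based allocation is to split a fixed rollout budget across dialogue states in proportion to their uncertainty scores. The sketch below assumes proportional allocation with a per-state minimum and largest-remainder rounding. The function `allocate_rollout_budget` is hypothetical, and the paper's actual allocation rule may differ.

```python
def allocate_rollout_budget(uncertainties, total_budget, min_per_state=1):
    """Split an integer rollout budget across states in proportion to uncertainty."""
    n = len(uncertainties)
    if total_budget < n * min_per_state:
        raise ValueError("budget too small for the per-state minimum")
    remaining = total_budget - n * min_per_state
    total_u = sum(uncertainties)
    if total_u <= 0:
        # No uncertainty signal: spread the remaining budget evenly.
        shares = [remaining / n] * n
    else:
        shares = [remaining * u / total_u for u in uncertainties]
    floors = [int(s) for s in shares]
    budget = [min_per_state + f for f in floors]
    # Hand out rollouts lost to rounding, largest fractional part first.
    leftover = remaining - sum(floors)
    order = sorted(range(n), key=lambda i: shares[i] - floors[i], reverse=True)
    for i in order[:leftover]:
        budget[i] += 1
    return budget


# Three dialogue states with made-up uncertainty scores and 13 rollouts to spend.
budget = allocate_rollout_budget([0.5, 3.0, 1.5], total_budget=13)
```

With these inputs the most uncertain state receives 7 of the 13 rollouts, while the least uncertain state still gets 2.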

ATPO quantifies uncertainty using a composite metric combining Bellman error and action-value variance. The Bellman error measures how far the current value estimates are from satisfying the Bellman equation, that is, how much a state's estimated value differs from the immediate reward plus the estimated value of the state that follows. Action-value variance captures how much the estimated values of the candidate actions disagree. States that score high on this composite metric receive more extensive exploration through tree search.
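As a rough illustration of such a composite score, the sketch below combines a one-step Bellman residual with the variance of a state's action-value estimates. The weighted sum, the `alpha` weight, and the function names are assumptions, since the article does not give the exact formula.

```python
from statistics import pvariance


def bellman_error(value, reward, next_value, gamma=1.0):
    """Absolute one-step Bellman residual: |r + gamma * V(s') - V(s)|."""
    return abs(reward + gamma * next_value - value)


def composite_uncertainty(value, reward, next_value, action_values,
                          alpha=0.5, gamma=1.0):
    """Weighted sum of Bellman error and action-value variance (assumed form)."""
    td = bellman_error(value, reward, next_value, gamma)
    var = pvariance(action_values)
    return alpha * td + (1.0 - alpha) * var


# A state where the estimates are consistent and the candidate actions agree.
settled = composite_uncertainty(0.5, 0.0, 0.5, action_values=[0.5, 0.5])

# A state where the value estimate is off and the candidate actions disagree.
contested = composite_uncertainty(0.5, 0.0, 0.75, action_values=[0.0, 1.0])
```

Under this scoring, the second state would be the one to receive extra rollouts.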

This approach enables more accurate value estimation while fostering more efficient and diverse exploration. The system learns not just what questions to ask, but when to ask them and how to interpret the answers within the broader diagnostic context.

Computational Innovations: Making Tree Search Practical

Tree-based reinforcement learning methods have traditionally been computationally prohibitive for large language models, requiring extensive rollouts (simulated conversations) to evaluate possible futures. The ATPO researchers addressed this challenge with two key optimizations:

- Uncertainty-guided pruning: Instead of exploring all possible branches equally, the algorithm prunes low-uncertainty paths early, focusing computational resources where they matter most.

- Asynchronous search architecture with KV cache reuse: This technical innovation maximizes inference throughput by reusing computed key-value pairs across different branches of the search tree, dramatically reducing redundant computation.
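Both optimizations can be sketched with toy stand-ins. In the code below, `prune_branches` drops candidate branches whose uncertainty falls below a threshold, and `PrefixCache` imitates KV cache reuse by counting only the tokens that extend an already processed prefix. Both names, the threshold, and the token-level bookkeeping are hypothetical simplifications and do not reproduce the paper's asynchronous implementation.

```python
def prune_branches(branches, threshold, keep_at_least=1):
    """Keep (action, uncertainty) branches at or above the threshold.

    Always retains the top `keep_at_least` branches so the search never stalls.
    """
    ranked = sorted(branches, key=lambda b: b[1], reverse=True)
    kept = [b for b in ranked if b[1] >= threshold]
    if len(kept) < keep_at_least:
        kept = ranked[:keep_at_least]
    return kept


class PrefixCache:
    """Toy stand-in for KV cache reuse: remembers which token prefixes were processed."""

    def __init__(self):
        self._seen = set()
        self.tokens_computed = 0

    def process(self, tokens):
        """Process a sequence and return how many new tokens had to be computed."""
        tokens = tuple(tokens)
        start = 0
        # Find the longest prefix that is already cached.
        for end in range(len(tokens), 0, -1):
            if tokens[:end] in self._seen:
                start = end
                break
        # Only the suffix beyond the cached prefix costs computation.
        for end in range(start + 1, len(tokens) + 1):
            self._seen.add(tokens[:end])
            self.tokens_computed += 1
        return len(tokens) - start


candidates = [("ask about chest pain", 0.75),
              ("ask about appetite", 0.125),
              ("ask about travel", 0.5)]
kept = prune_branches(candidates, threshold=0.4)

cache = PrefixCache()
history = ["fever", "3 days", "cough", "dry"]
first = cache.process(history + ["ask_duration"])
second = cache.process(history + ["ask_travel"])
```

In this toy run the second branch shares the four-token history with the first, so only its one new token is computed. That is the saving that sharing a prefix across sibling branches is meant to provide.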

These optimizations make ATPO practically applicable to real-world medical dialogue systems where response time matters.

Performance and Implications

Extensive experiments on three public medical dialogue benchmarks demonstrated ATPO's superiority over several strong baselines. The Qwen3-8B model fine-tuned with ATPO surpassed GPT-4o in accuracy with only about 8 billion parameters. GPT-4o's parameter count has not been publicly disclosed, but it is widely assumed to be far larger.

This efficiency breakthrough has profound implications:

- Accessibility: Smaller, more efficient models can be deployed in resource-constrained environments like rural clinics or mobile health applications.

- Specialization: The approach enables fine-tuning of domain-specific models without requiring massive computational resources.

- Transparency: The uncertainty quantification could give clinicians insight into where the AI is least confident, supporting human-AI collaboration rather than replacement.

The Future of Medical AI Dialogue Systems

ATPO represents more than just another incremental improvement in medical AI. It demonstrates a fundamental shift in how we approach aligning large language models with complex, uncertain real-world tasks. By explicitly modeling and responding to uncertainty, the algorithm moves beyond pattern recognition toward genuine reasoning under uncertainty.

The research also highlights the growing importance of efficient fine-tuning methods as the AI field confronts the computational and environmental costs of ever-larger models. Techniques like ATPO that achieve superior performance with smaller, specialized models may point toward a more sustainable and practical future for applied AI.

Related approaches such as Adaptive Social Learning via Mode Policy Optimization have shown promising results in multilingual dialogue tasks, suggesting that uncertainty-aware adaptive methods may have broad applicability across interactive AI domains.

Source: arXiv:2603.02216v1 "ATPO: Adaptive Tree Policy Optimization for Multi-Turn Medical Dialogue" (Submitted February 10, 2026)