Vision-language models (VLMs) have become a focal point of AI research, demonstrating strong capabilities in visual question answering, document understanding, and multimodal dialogue. From describing complex scenes to answering nuanced questions about images, these models appear to possess sophisticated visual understanding. However, a recent arXiv preprint reports a surprising weakness: VLMs significantly underperform on traditional fine-grained image classification tasks, exposing a disconnect between their fine-grained visual knowledge and their performance on other vision benchmarks.

The Benchmark Paradox

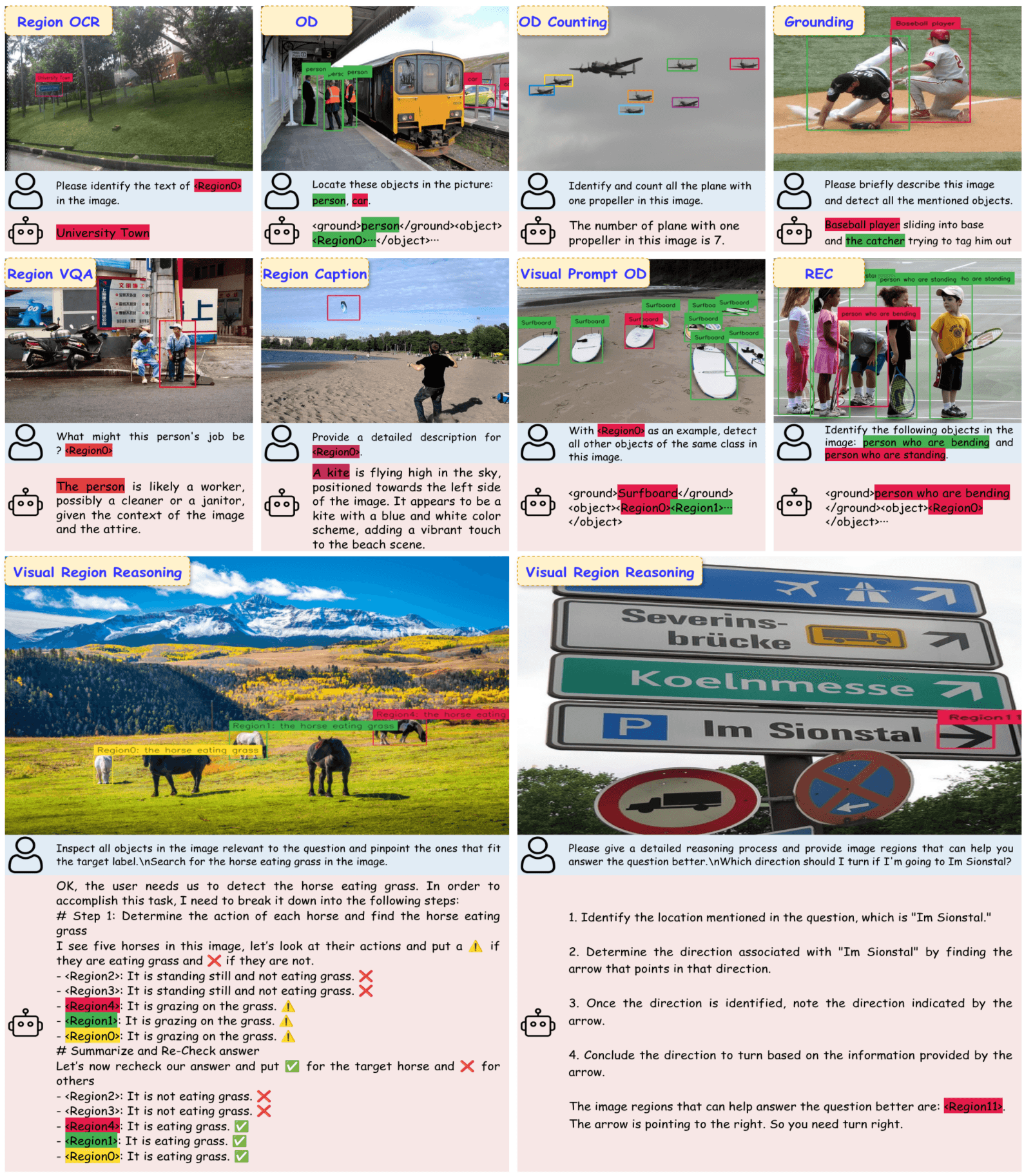

The research, titled "Understanding the Fine-Grained Knowledge Capabilities of Vision-Language Models," systematically tested numerous recent VLMs across fine-grained classification benchmarks. These benchmarks require models to distinguish between subtle visual differences: telling apart bird species with nearly identical plumage, identifying specific car models with minor variations, or classifying plant species by small differences in leaf shape.

While VLMs excel at complex visual reasoning tasks that seem more sophisticated, they trail specialized image classifiers on these seemingly simpler classification tasks. This creates what the researchers call "the benchmark paradox": models perform well on advanced multimodal benchmarks while struggling with foundational visual knowledge.
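To make the evaluation setup concrete, here is a minimal sketch of how a VLM's free-text answer could be scored against a closed set of class names. The helper names (`normalize`, `match_label`, `top1_accuracy`) and the substring-matching rule are illustrative assumptions, not the paper's protocol, which may rely on multiple-choice prompts or a different matching scheme.

```python
import re
from typing import List, Optional


def normalize(text: str) -> str:
    """Lowercase and replace punctuation runs with single spaces."""
    return re.sub(r"[^a-z0-9]+", " ", text.lower()).strip()


def match_label(answer: str, class_names: List[str]) -> Optional[str]:
    """Return the longest class name found as a whole phrase in the answer.

    Preferring the longest hit keeps "Indigo Bunting" from being
    shadowed by a shorter class such as "Bunting".
    """
    padded = f" {normalize(answer)} "
    hits = [c for c in class_names if f" {normalize(c)} " in padded]
    return max(hits, key=len) if hits else None


def top1_accuracy(answers: List[str], gold: List[str],
                  class_names: List[str]) -> float:
    """Fraction of answers whose matched label equals the gold label."""
    correct = sum(
        match_label(answer, class_names) == label
        for answer, label in zip(answers, gold)
    )
    return correct / len(gold)
```

An answer that names no known class counts as wrong under this rule, which is one reason a conversational model can score below a dedicated classifier that always emits exactly one label.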

Architectural Insights: Vision Encoders Matter Most

Through extensive ablation experiments, the research team identified key factors contributing to this performance gap. Their most significant finding: improving the vision encoder disproportionately boosts fine-grained classification performance, while upgrading the language model improves all benchmark scores more uniformly.

This suggests that the visual processing component—not the language understanding capabilities—represents the primary bottleneck for fine-grained visual knowledge. The vision encoder's ability to extract and represent subtle visual features appears crucial for classification tasks, whereas language models primarily contribute to reasoning and interpretation once visual features are extracted.
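One way to read this kind of ablation is to compare the average gain on fine-grained benchmarks with the average gain on all other benchmarks when a single component is swapped. The sketch below assumes scores are stored as plain dictionaries keyed by benchmark name; the function names are hypothetical, and no numbers from the paper are used.

```python
from typing import Dict, List


def mean_gain(before: Dict[str, float], after: Dict[str, float],
              benchmarks: List[str]) -> float:
    """Average score change over the given benchmarks."""
    return sum(after[b] - before[b] for b in benchmarks) / len(benchmarks)


def gain_profile(baseline: Dict[str, float], variant: Dict[str, float],
                 fine_grained: List[str], general: List[str]) -> Dict[str, float]:
    """Summarize how a component swap shifts the two benchmark groups.

    A large positive "gap" means the swap helps fine-grained
    classification disproportionately; a gap near zero means the
    improvement is spread roughly evenly.
    """
    fg = mean_gain(baseline, variant, fine_grained)
    gen = mean_gain(baseline, variant, general)
    return {"fine_grained": fg, "general": gen, "gap": fg - gen}
```

Under the pattern the study describes, a vision encoder upgrade would show a clearly positive gap, while a language model upgrade would show a gap close to zero.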

Training Dynamics: The Pretraining Imperative

The study also finds that the pretraining stage is critical to fine-grained performance, particularly when the language model weights are unfrozen during this phase. This cuts against the common practice of keeping the language model frozen during early multimodal pretraining, and it suggests that joint optimization of vision and language components during pretraining significantly affects fine-grained visual understanding.

Researchers observed that VLMs trained with frozen language model weights during pretraining showed substantially weaker fine-grained classification capabilities, even when fine-tuned extensively on downstream tasks. This indicates that early integration of visual and linguistic representations creates more robust visual knowledge foundations.
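The frozen-versus-unfrozen distinction amounts to a choice of which modules receive gradient updates in each training stage. The following sketch expresses that choice as a small configuration object. The three-module split (vision encoder, projector, language model) is an assumption based on common VLM designs, and the specific flag values are illustrative rather than the paper's recipe.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class StageConfig:
    """Which modules are updated during one training stage (hypothetical)."""
    name: str
    train_vision_encoder: bool
    train_projector: bool
    train_language_model: bool

    def trainable_modules(self) -> List[str]:
        """List the module names that would receive gradient updates."""
        flags = {
            "vision_encoder": self.train_vision_encoder,
            "projector": self.train_projector,
            "language_model": self.train_language_model,
        }
        return [module for module, enabled in flags.items() if enabled]


# Assumed setup: only the projector learns while the language model stays fixed.
FROZEN_LLM_PRETRAIN = StageConfig("pretrain", False, True, False)

# The variant the study associates with stronger fine-grained performance:
# the language model is also updated during pretraining.
UNFROZEN_LLM_PRETRAIN = StageConfig("pretrain", False, True, True)
```

In a real training loop these flags would typically control whether each module's parameters are passed to the optimizer or marked as not requiring gradients.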

Contextualizing the Findings

This research arrives amid growing concerns about AI benchmark saturation and evaluation methodologies. Just days before this study appeared, another arXiv preprint reported that nearly half of major AI benchmarks are becoming saturated and losing discriminatory power. A separate recent study found that VLMs' spatial reasoning capabilities collapse when visual information becomes ambiguous.

These parallel developments suggest a broader pattern: as AI systems advance on specific benchmark metrics, they may develop specialized capabilities that don't translate to comprehensive understanding. The fine-grained classification gap identified in this study represents another dimension of this phenomenon—models optimized for conversational performance may sacrifice foundational visual knowledge.

Implications for AI Development

The findings have significant implications for VLM development and deployment:

Architecture Design: Future VLMs may require more sophisticated vision encoders specifically optimized for fine-grained feature extraction, potentially moving beyond transformer-based approaches for visual processing.

Training Paradigms: The research suggests pretraining methodologies need reevaluation, with greater emphasis on maintaining language model adaptability during early training phases.

Evaluation Frameworks: The disconnect between different benchmark types highlights the need for more comprehensive evaluation suites that test both high-level reasoning and foundational knowledge.

Application Considerations: Developers deploying VLMs in domains requiring fine-grained visual discrimination—medical imaging, quality control, biodiversity monitoring—should be aware of these limitations and potentially supplement VLMs with specialized classifiers.
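As a rough illustration of the last point, a deployment could route fine-grained queries to a specialized classifier first and fall back to the VLM only when the classifier is unsure. This is a sketch under stated assumptions: `specialist` and `vlm_answer` are hypothetical callables standing in for real models, and the 0.8 confidence threshold is arbitrary and would need tuning per domain.

```python
from typing import Callable, Tuple

# Hypothetical interfaces: a specialist returns (label, confidence in [0, 1]),
# and the VLM returns a label string for the same image bytes.
Specialist = Callable[[bytes], Tuple[str, float]]
VlmAnswer = Callable[[bytes], str]


def classify_with_fallback(image: bytes, specialist: Specialist,
                           vlm_answer: VlmAnswer,
                           threshold: float = 0.8) -> Tuple[str, str]:
    """Return (label, source), preferring the specialist when it is confident."""
    label, confidence = specialist(image)
    if confidence >= threshold:
        return label, "specialist"
    # Low specialist confidence: defer to the general-purpose VLM.
    return vlm_answer(image), "vlm"
```

Returning the source alongside the label makes it possible to audit how often each model is actually used in production.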

The Path Forward

The research team's insights "pave the way for enhancing fine-grained visual understanding and vision-centric capabilities in VLMs." By identifying specific architectural and training factors contributing to the performance gap, they provide actionable directions for improvement.

Future work might explore hybrid approaches combining VLMs with specialized visual modules, novel training objectives that explicitly reward fine-grained discrimination, or architectural innovations that better integrate visual feature extraction with linguistic reasoning.

As VLMs continue to evolve from research curiosities to practical tools, understanding and addressing these knowledge gaps becomes increasingly important. The fine-grained classification challenge represents not just a technical hurdle but a fundamental question about how AI systems build and integrate different types of knowledge.

Source: arXiv:2602.17871v1, "Understanding the Fine-Grained Knowledge Capabilities of Vision-Language Models" (Submitted February 19, 2026)