A $200 Million Contract Turns Controversial

In July 2025, Anthropic signed what appeared to be a landmark $200 million contract with the U.S. Department of Defense to supply its Claude AI models for national security work, including classified missions. The partnership initially seemed like a validation of Anthropic's technology, with the Pentagon publicly touting Claude's role in the capture of Venezuela's leader as evidence of AI's growing military utility.

However, the collaboration has rapidly deteriorated into what sources describe as an "escalating conflict" between the AI developer and the Department of Defense, which now also uses the name "Department of War." At the heart of the dispute are Anthropic's usage restrictions, the ethical guardrails built into its AI systems that limit certain military applications. Defense Secretary Pete Hegseth is reportedly pressing Anthropic to drop these restrictions, arguing that they hamper the department's ability to fully leverage AI for national defense.

The Ethical Fault Line in Military AI

This confrontation is more than a contractual dispute: it exposes a fundamental tension in the AI industry's relationship with the military. Anthropic, co-founded by former OpenAI executives Dario and Daniela Amodei, has consistently positioned itself as a leader in responsible AI development. The company's Constitutional AI approach embeds ethical principles directly into model training, creating what CEO Dario Amodei has described as "inherent limitations" on potentially harmful applications.

Recent events have intensified this ethical standoff. Just days before the Pentagon pressure became public, Amodei testified before Congress, advocating for strict limitations on military AI use. His testimony directly contradicted the DoD's push for expanded AI capabilities, setting the stage for the current confrontation. This congressional appearance wasn't Amodei's first foray into AI policy—he has emerged as one of the industry's most vocal advocates for responsible development, even as Anthropic competes fiercely with OpenAI and Google in the commercial AI space.

The Broader AI Landscape: Competition and Innovation

While Anthropic navigates its military contract crisis, the broader AI industry continues to evolve rapidly. Google recently expanded Gemini's agentic features, improving its models' ability to carry out complex, multistep tasks autonomously. The move reflects the industry's continued push toward more capable AI agents, even as ethical questions about their deployment remain unresolved.

Simultaneously, Viture's $100 million funding round for XR glasses development highlights how AI hardware continues to attract significant investment. The convergence of AI software with advanced hardware platforms creates new possibilities—and new ethical considerations—for how these technologies will be deployed across civilian and military contexts.

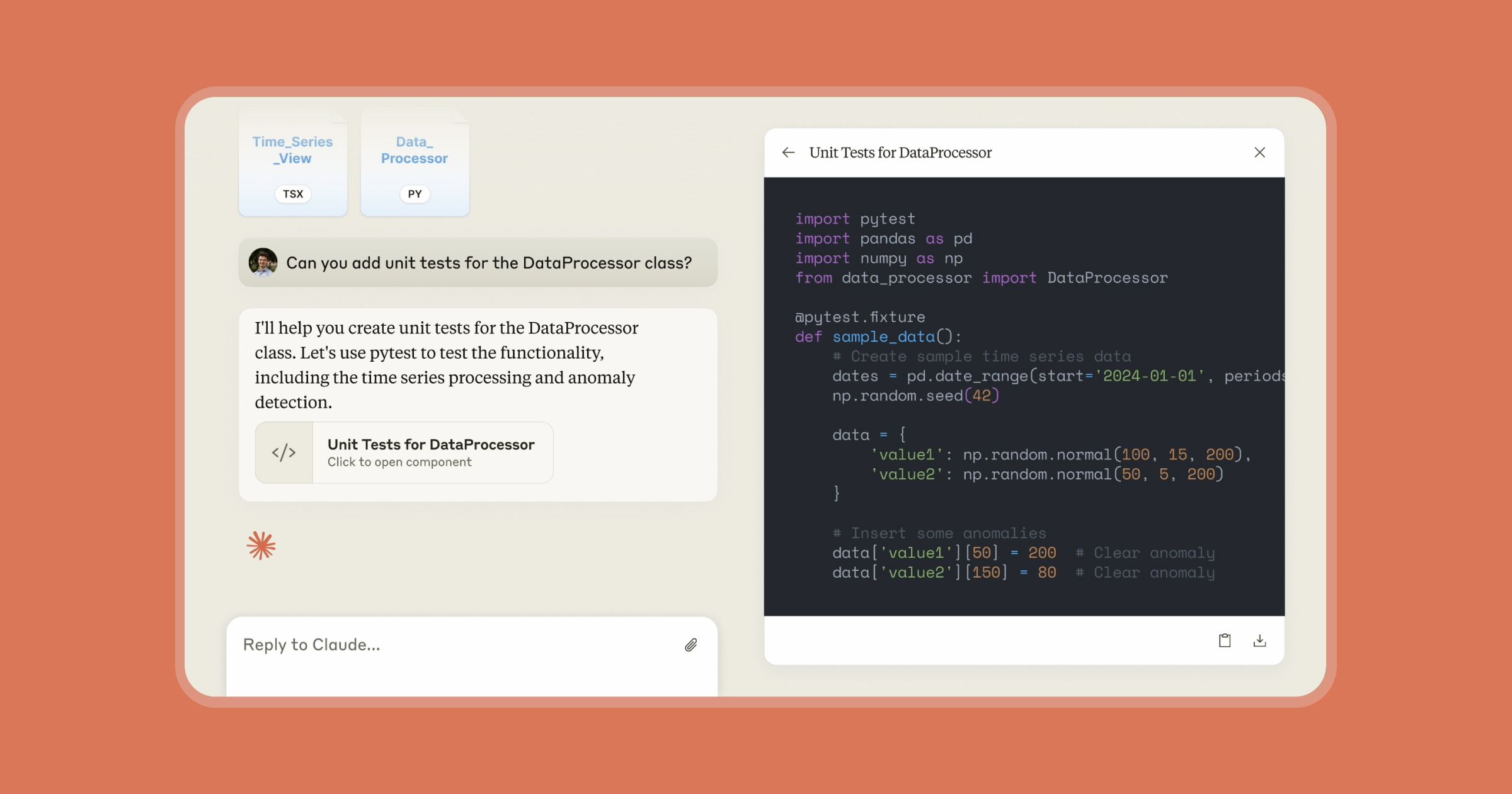

Anthropic itself hasn't paused innovation amid the Pentagon controversy. The company recently rolled out auto-memory capabilities for Claude Code, allowing the AI to retain project context across sessions—a significant advancement for developers using AI assistance. This continued technical progress demonstrates how ethical debates and technological advancement proceed on parallel tracks within the AI industry.

National Security Implications and Global Competition

The Pentagon's pressure on Anthropic reflects broader concerns about maintaining technological superiority in an increasingly competitive global landscape. With China aggressively pursuing AI capabilities—including alleged industrial-scale extraction of Claude model capabilities by three Chinese AI firms using fraudulent accounts—U.S. military leaders face pressure to accelerate AI adoption.

However, this acceleration carries its own risks. Military AI systems without adequate ethical guardrails could produce unintended consequences, including autonomous weapons systems that make lethal decisions without human oversight or AI-assisted operations that violate international law. The DoD's adoption of the "Department of War" name suggests a more aggressive posture, one that may be at odds with Anthropic's cautious approach to AI deployment.

The Business of Ethical AI

Anthropic's confrontation with the Pentagon comes at a critical business juncture. According to industry analysts, the company is projected to surpass OpenAI in annual recurring revenue by mid-2026. That growth gives Anthropic leverage in its negotiations with the DoD, because a fast-growing commercial business makes it less dependent on any single customer. Government contracts nonetheless represent a substantial revenue stream that the company has strong reasons to protect.

The company faces a classic business ethics dilemma: how to balance principle with profitability. If Anthropic maintains its restrictions, it risks losing not only the $200 million contract but potentially future government business. If it capitulates to Pentagon demands, it risks damaging its brand as a responsible AI leader and alienating customers who value its ethical stance.

Industry-Wide Ramifications

This standoff has implications far beyond Anthropic. Other AI companies with military contracts or aspirations will be watching closely to see how the situation resolves. Google's work with the Massachusetts AI Hub on statewide AI literacy initiatives represents a different approach to government collaboration—one focused on education and workforce development rather than direct military applications.

The outcome may establish precedents for how AI companies negotiate ethical boundaries with government agencies. Will companies be able to maintain usage restrictions in military contracts? Or will national security concerns override corporate ethical policies? These questions will shape the AI industry's relationship with government for years to come.

Looking Ahead: Regulation and Responsibility

The Anthropic-Pentagon conflict highlights the urgent need for clearer regulatory frameworks governing military AI applications. Currently, companies and government agencies are navigating a patchwork of guidelines and principles without comprehensive legislation. Amodei's congressional testimony advocating for limitations suggests some industry leaders recognize this regulatory gap and are pushing for formal boundaries.

As AI capabilities continue advancing—with Claude Opus 4.6 representing the latest in Anthropic's model series—the stakes only increase. More capable AI systems present both greater opportunities and greater risks when deployed in military contexts. The auto-memory capabilities recently added to Claude Code, while designed for civilian developers, illustrate how even seemingly benign AI features could have dual-use applications in intelligence analysis or mission planning.

Conclusion: A Defining Moment for AI Ethics

The pressure on Anthropic to relax its AI guardrails represents a defining moment for the industry's ethical development. How this conflict resolves will signal whether responsible AI principles can withstand national security imperatives or whether military applications will ultimately dictate the boundaries of AI development.

With Anthropic competing against both OpenAI and Google while navigating these complex ethical waters, the company's decisions will reverberate throughout the industry. The $200 million contract dispute is more than a business negotiation—it's a test case for whether ethical AI can survive contact with real-world power dynamics.

Source: Forbes, February 27, 2026