March 26, 2026 — A new benchmark paper published on arXiv today delivers a stark diagnosis of the current state of visual generative AI. Titled ViGoR-Bench (Vision-Generative Reasoning-centric Benchmark), the research argues that beneath the high-fidelity imagery produced by models like DALL-E 3, Stable Diffusion 3, and Sora lies a "logical desert"—a fundamental inability to perform tasks requiring physical, causal, or complex spatial reasoning.

The authors, from an undisclosed institution, tested over 20 leading models and found that even state-of-the-art systems harbor "significant reasoning deficits." ViGoR-Bench is designed as a unified framework to dismantle what the paper calls a "performance mirage" created by current evaluations that rely on superficial metrics or fragmented benchmarks.

What the Researchers Built: A Four-Pillar Benchmark

ViGoR-Bench is not just another dataset; it's an evaluation framework built on four key innovations designed to probe the generative reasoning process itself.

- Holistic Cross-Modal Coverage: It bridges Image-to-Image and Video generation tasks within a single benchmark. Because a model cannot score well by excelling in only one modality, the benchmark tests whether reasoning capabilities transfer across visual formats.

- Dual-Track Evaluation: Critically, ViGoR assesses both the final generated output and the intermediate reasoning process. This moves beyond just judging a pretty picture to understanding how the model arrived at its answer, exposing flawed logic even if the end result looks plausible.

- Evidence-Grounded Automated Judge: To scale evaluation, the team developed an automated judge whose verdicts, according to the paper, align closely with human assessments. This judge is "evidence-grounded," meaning it scores a generation on its logical consistency with the prompt's reasoning requirements, not just on aesthetic quality.

- Granular Diagnostic Analysis: Performance is decomposed into fine-grained cognitive dimensions (e.g., object permanence, causality chains, spatial relationships). This allows researchers to pinpoint exactly where and how a model fails, moving beyond a single aggregate score.
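
To make the dual-track and per-dimension ideas concrete, here is a minimal Python sketch of how judged samples could be rolled up into a diagnostic report. It is an illustration only, not the paper's scoring code: the `JudgedSample` fields, the `diagnose` helper, the 0.5 pass threshold, and the sample scores are all hypothetical.

```python
from collections import defaultdict
from dataclasses import dataclass
from statistics import mean


@dataclass(frozen=True)
class JudgedSample:
    """One judged generation. All field names are hypothetical."""
    dimension: str        # cognitive dimension, e.g. "causality" or "spatial"
    process_score: float  # judge's rating of the intermediate steps, in [0, 1]
    result_score: float   # judge's rating of the final output, in [0, 1]


def diagnose(samples, pass_threshold=0.5):
    """Average both tracks per dimension and count 'plausible but flawed' cases."""
    by_dim = defaultdict(list)
    for s in samples:
        by_dim[s.dimension].append(s)

    report = {}
    for dim, group in by_dim.items():
        report[dim] = {
            "process": mean(s.process_score for s in group),
            "result": mean(s.result_score for s in group),
            # The final output passes, but the reasoning behind it does not.
            "mirage_count": sum(
                1 for s in group
                if s.result_score >= pass_threshold
                and s.process_score < pass_threshold
            ),
        }
    return report


# Made-up scores, purely to exercise the function.
samples = [
    JudgedSample("causality", process_score=0.25, result_score=0.75),
    JudgedSample("causality", process_score=0.75, result_score=0.75),
    JudgedSample("spatial", process_score=0.5, result_score=0.25),
]
report = diagnose(samples)
print(report)
```

Keeping the two tracks separate per dimension is what lets a report flag the case the article describes: an output that looks right while the steps that produced it do not hold up.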

The Core Problem: The 'Performance Mirage'

The paper posits that current benchmarks often create a misleading impression of capability. A model might score well on image quality (FID, CLIP score) or simple caption alignment, yet completely fail a task like "Generate an image of a stack of books, then show the same stack after the third book from the bottom is removed." This requires understanding object persistence, ordinal position, and the physics of support—capabilities that are not captured by standard metrics.
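
The book-stack task can be reduced to a symbolic ground truth, which shows why image-quality metrics miss it. The Python sketch below assumes the stack is already described as a bottom-to-top list of labels; a real judge would have to recover that description from pixels. The function names and book colors are hypothetical and do not come from the paper.

```python
def remove_from_bottom(stack, position):
    """Return a new stack without the book at a 1-based position from the bottom.

    `stack` is ordered bottom to top. Books above the removed one settle down
    by one slot, which deleting from a list models directly.
    """
    if not 1 <= position <= len(stack):
        raise ValueError("position is outside the stack")
    return stack[: position - 1] + stack[position:]


def is_consistent(before, after, position):
    """Check a claimed 'after' stack against the expected one."""
    return after == remove_from_bottom(before, position)


before = ["red", "green", "blue", "yellow", "white"]  # bottom to top
expected = remove_from_bottom(before, 3)
print(expected)  # ['red', 'green', 'yellow', 'white']
```

An "after" image that drops the top book instead would still show a tidy four-book stack and could score well on fidelity, yet `is_consistent(before, ["red", "green", "blue", "yellow"], 3)` returns `False`.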

ViGoR-Bench is explicitly designed to be a "stress test" for the next generation of intelligent vision models, pushing them into scenarios that require genuine world understanding rather than pattern matching from training data.

Key Implications for the Field

- A New North Star for Evaluation: For teams developing the next GPT-4V, Gemini Vision, or open-source vision models, ViGoR-Bench provides a rigorous testbed. Improving on ViGoR will likely require architectural innovations beyond scaling up diffusion transformers or video U-Nets.

- Training Data Scrutiny: The results suggest that simply adding more images and captions from the web may not be sufficient to instill reasoning. This could accelerate research into synthetic data generation for reasoning tasks or curriculum learning strategies that explicitly teach physical concepts.

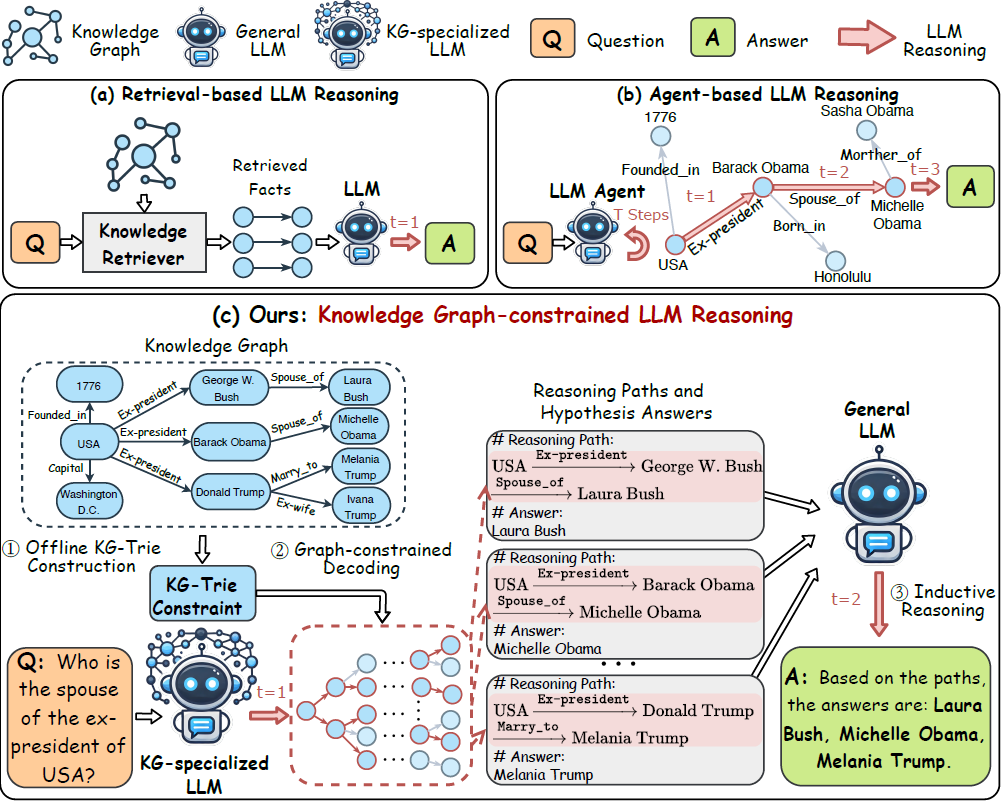

- The Multimodal Agent Gap: The findings have direct implications for the booming field of AI agents. An agent that uses a vision model to perceive a digital environment or analyze a diagram will fail if the underlying model cannot reason about cause and effect or spatial relationships. This echoes recent practical concerns, such as the cautionary tale of a RAG system failure shared last week, which underscored that brittle reasoning is a production risk.

gentic.news Analysis

This paper arrives at a critical juncture in multimodal AI development. The industry has been captivated by increasingly impressive video generation demos, but ViGoR-Bench systematically questions the intelligence behind the imagery. This aligns with a broader trend of increased scrutiny on evaluation methods, as seen in other recent arXiv posts like the study challenging the assumption that fair model representations guarantee fair recommendations (March 25) and the work finding that reasoning training doesn't improve embedding quality (March 22).

The benchmark's focus on process over output is its most significant contribution. It formalizes an intuition many practitioners have had: that today's visual generative models are exceptional interpolators and stylists, but poor physicists and logicians. This creates a clear challenge for organizations like OpenAI, Anthropic, and Google DeepMind whose roadmaps likely include more capable reasoning-based agents.

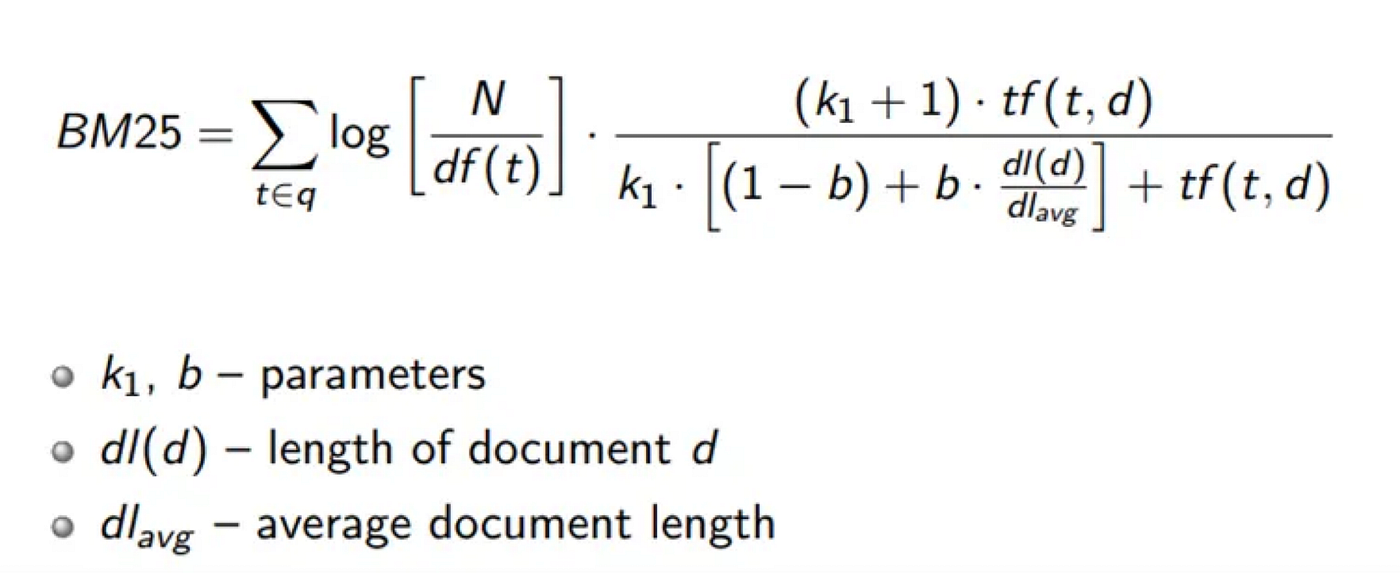

Furthermore, the paper's release follows a week of intense activity around Retrieval-Augmented Generation (RAG), a trending topic in our coverage with 33 mentions this week. There's a direct through-line: RAG systems are often built to compensate for the knowledge and reasoning limitations of base LLMs. ViGoR-Bench exposes a parallel limitation in the vision domain, suggesting future sophisticated agents will need robust RAG-like mechanisms for visual reasoning as well. As our recent article "Your RAG Deployment Is Doomed" outlined, fixing reasoning bottlenecks is paramount for production systems.

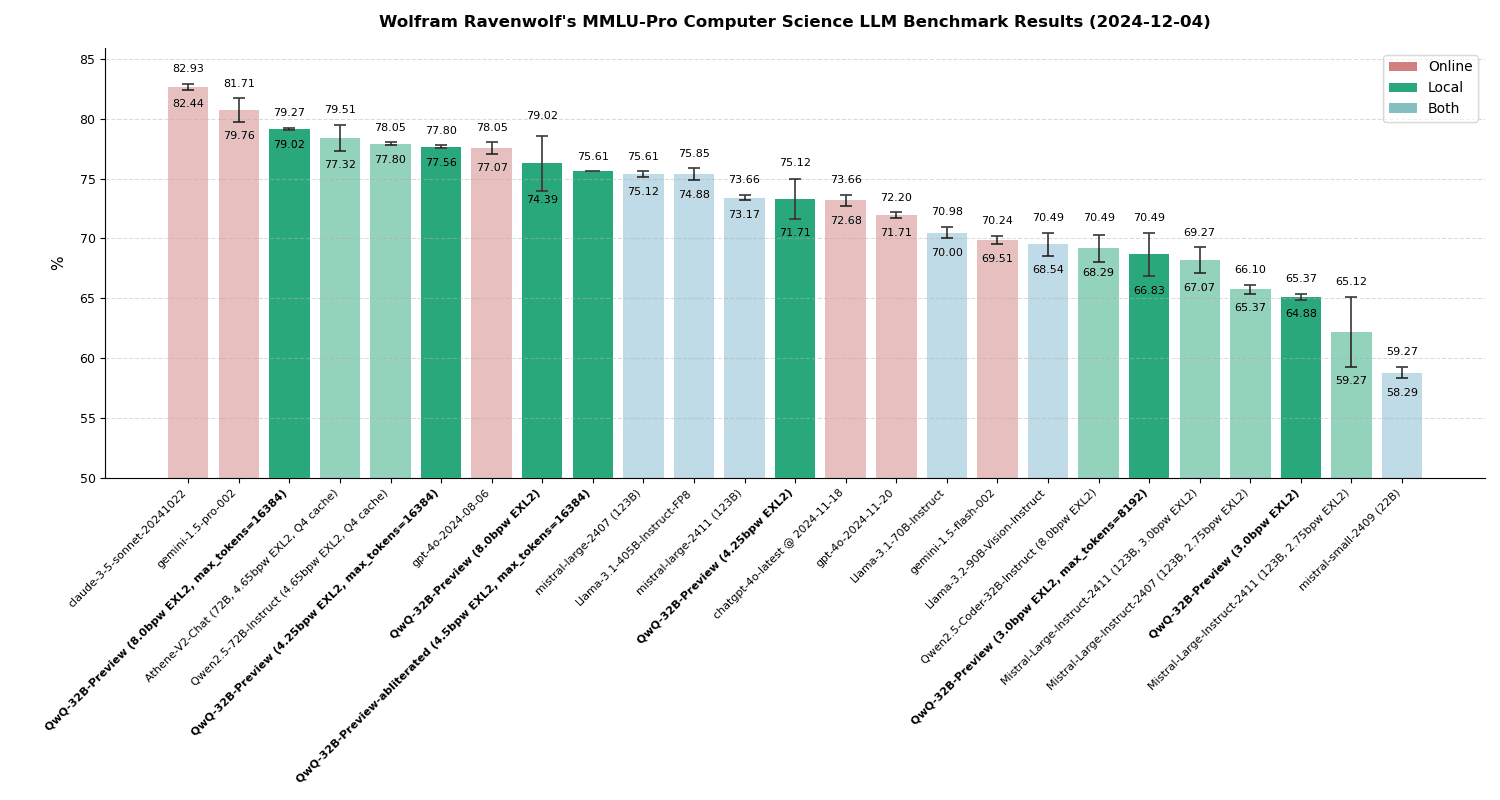

The authors have made a demo available, which could quickly become a standard reference point. Expect the ViGoR-Bench leaderboard to become a key battleground for model announcements in the coming months, much as MMLU and GPQA function for LLMs.

Frequently Asked Questions

What is ViGoR-Bench?

ViGoR-Bench (Vision-Generative Reasoning-centric Benchmark) is a new unified evaluation framework designed to test the physical, causal, and spatial reasoning capabilities of visual generative AI models (like image and video generators). It moves beyond judging output quality to assess the logical soundness of the generation process itself.

Which AI models were tested on ViGoR-Bench?

The paper states that experiments were conducted on "over 20 leading models." The abstract does not list them, but the set likely includes state-of-the-art commercial and open-source image and video generators such as DALL-E 3, Midjourney v6, Stable Diffusion 3, Ideogram, and Sora.

What is a 'logical desert' in AI?

The term "logical desert," coined by the paper's authors, describes the phenomenon in which a generative AI model produces outputs that have high visual fidelity but contain fundamental failures in logical, physical, or causal reasoning. The model's capability landscape is lush with detail but barren of sound logic.

How does ViGoR-Bench differ from other AI benchmarks?

Unlike benchmarks that measure image quality (e.g., FID) or simple text-to-image alignment, ViGoR-Bench uses a dual-track mechanism to evaluate both the final output and the intermediate reasoning steps. It also provides granular diagnostics across specific cognitive dimensions and covers both image and video tasks, creating a more holistic and challenging assessment of true reasoning ability.