Key Takeaways

- A technical article details the creation of a fraud detection model that prioritizes explainability, using SHAP values to provide clear reasons for flagging transactions.

- This addresses a key pain point in automated systems: opaque decision-making.

What Happened

A developer has published a detailed account of building a fraud detection system with a core focus on explainability. The article, hosted on Medium, positions most existing fraud systems as opaque "black boxes" that reject transactions without providing a clear, actionable reason—akin to a bouncer denying entry with a vague "you're not on the list."

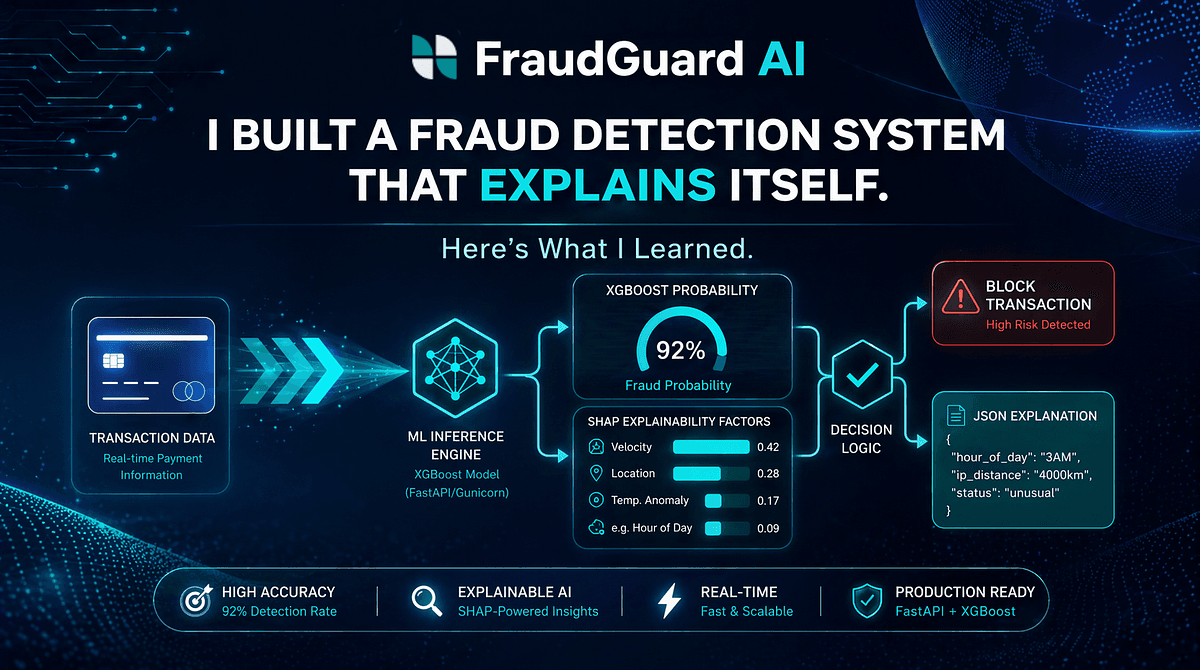

The author's project aimed to solve this by creating a system that not only predicts fraud but also explains why a transaction was flagged. The primary technical approach involved using SHAP (SHapley Additive exPlanations) values. SHAP is a game theory-based method that attributes a model's prediction to each input feature, quantifying its contribution to the final score. This allows the system to generate a human-readable report, highlighting which factors (e.g., transaction amount, location, time of day, device fingerprint) most influenced the decision to flag a transaction as suspicious.
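To make the attribution idea concrete, here is a minimal sketch of exact Shapley values computed by brute force for a toy scoring rule. It is not the author's code: the feature names, the `fraud_score` function, and the baseline transaction are all hypothetical, and in practice a library such as `shap` would compute these values far more efficiently against a trained model.

```python
from itertools import combinations
from math import factorial

# Hypothetical feature names, for illustration only.
FEATURES = ["amount_ratio", "new_country", "odd_hour"]

def fraud_score(x):
    """Toy risk score that stands in for a trained model."""
    score = 0.05
    if x["amount_ratio"] > 10:
        score += 0.40
    if x["new_country"]:
        score += 0.25
    # Interaction: a large amount from a new country is extra suspicious.
    if x["amount_ratio"] > 10 and x["new_country"]:
        score += 0.15
    if x["odd_hour"]:
        score += 0.10
    return score

def shapley_values(model, x, baseline):
    """Exact Shapley values by enumerating every coalition of the other features."""
    n = len(FEATURES)
    phi = {}
    for f in FEATURES:
        others = [g for g in FEATURES if g != f]
        total = 0.0
        for k in range(n):
            weight = factorial(k) * factorial(n - k - 1) / factorial(n)
            for subset in combinations(others, k):
                # Features outside the coalition are held at their baseline value.
                without = {g: (x[g] if g in subset else baseline[g]) for g in FEATURES}
                with_f = dict(without)
                with_f[f] = x[f]
                total += weight * (model(with_f) - model(without))
        phi[f] = total
    return phi

transaction = {"amount_ratio": 50.0, "new_country": True, "odd_hour": True}
baseline = {"amount_ratio": 1.0, "new_country": False, "odd_hour": False}

phi = shapley_values(fraud_score, transaction, baseline)
for name, value in sorted(phi.items(), key=lambda kv: -kv[1]):
    print(f"{name}: {value:+.3f}")
```

The contributions sum to the difference between the transaction's score and the baseline's score, and the interaction bonus is split evenly between the two features that jointly trigger it. Enumerating every coalition grows exponentially with the number of features, so this approach only suits toy examples.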

The article likely walks through the practical steps of this implementation: data preprocessing, model selection (potentially a tree-based ensemble like XGBoost or LightGBM, for which SHAP offers a fast tree-specific explainer), training, and the integration of the explanation layer into a usable application or API. The key learning emphasized is that explainability is not just a "nice-to-have" for data scientists but a critical component for building trust with end-users (like fraud analysts), supporting regulatory compliance, and enabling continuous model improvement by exposing potential biases or flawed logic.

Technical Details: The Explainability Stack

While the full source article provides the complete code walkthrough, the core architecture for such a system typically involves:

- A Predictive Model: A high-performance algorithm trained on historical transaction data labeled as fraudulent or legitimate.

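- An Explanation Framework (SHAP): This library calculates the contribution of each feature to a single prediction, measured relative to the model's average output. For example, it can support a message such as: "This transaction was flagged with an estimated 85% probability of fraud. The top contributing factors were: transaction amount 50x user average (+40%), login from a new country (+30%), and time of day inconsistent with user history (+15%)." The percentages here are illustrative; for many classifiers, raw SHAP values are expressed in log-odds and need converting before they can be shown as percentage points.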

- An Integration Layer: This bundles the prediction and the explanation into a consumable format—such as a JSON API response or a dashboard widget—for fraud analysts or customer service teams.
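The integration layer described above can be sketched as a small function that bundles a score and its per-feature contributions into a JSON payload. The field names, the 0.8 threshold, and the example contribution values are assumptions made for illustration, not taken from the source article.

```python
import json

def build_response(transaction_id, score, contributions, threshold=0.8, top_k=3):
    """Bundle a fraud score and its top contributing factors into a JSON string."""
    # Rank features by the magnitude of their contribution, largest first.
    ranked = sorted(contributions.items(), key=lambda kv: abs(kv[1]), reverse=True)
    payload = {
        "transaction_id": transaction_id,
        "fraud_score": round(score, 4),
        "flagged": score >= threshold,
        "top_factors": [
            {"feature": name, "contribution": round(value, 4)}
            for name, value in ranked[:top_k]
        ],
    }
    return json.dumps(payload, indent=2)

# Hypothetical contributions, e.g. produced by an explanation framework.
contributions = {
    "amount_vs_user_average": 0.40,
    "new_country_login": 0.30,
    "unusual_time_of_day": 0.15,
    "device_age_days": -0.02,
}

response = build_response("tx-0001", 0.85, contributions)
print(response)
```

Keeping the explanation in the same response as the score means a dashboard or case-management tool never has to display a flag without its reasons.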

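The major advantages of SHAP are its broad applicability and solid theoretical foundation: the framework includes model-agnostic explainers that work with any prediction function as well as faster model-specific ones for tree ensembles, and its attributions are consistent and locally accurate (the contributions sum to the difference between the prediction and the baseline). The main trade-off is computational cost. In the general case, exact Shapley values require evaluating the model over a number of feature subsets that grows exponentially with the feature count, so production systems commonly rely on model-specific algorithms or sampling-based approximations.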
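One common way to approximate Shapley values is to average marginal contributions over randomly sampled feature orderings instead of enumerating every subset. The sketch below illustrates that idea in plain Python; the toy `fraud_score` function, the feature names, and the sample count are hypothetical, and this is not a description of how any particular library implements its approximations.

```python
import random

# Hypothetical feature names, for illustration only.
FEATURES = ["amount_ratio", "new_country", "odd_hour", "new_device"]

def fraud_score(x):
    """Toy risk score that stands in for a trained model."""
    score = 0.05
    score += 0.30 if x["amount_ratio"] > 10 else 0.0
    score += 0.20 if x["new_country"] else 0.0
    score += 0.10 if x["odd_hour"] else 0.0
    # Interaction: a new device in a new country adds extra risk.
    score += 0.15 if (x["new_country"] and x["new_device"]) else 0.0
    return score

def sampled_shapley(model, x, baseline, n_permutations=200, seed=0):
    """Estimate Shapley values by averaging over random feature orderings."""
    rng = random.Random(seed)
    totals = {f: 0.0 for f in FEATURES}
    for _ in range(n_permutations):
        order = FEATURES[:]
        rng.shuffle(order)
        current = dict(baseline)
        previous = model(current)
        # Switch features from baseline to actual values one at a time,
        # crediting each feature with the change in score it causes.
        for f in order:
            current[f] = x[f]
            updated = model(current)
            totals[f] += updated - previous
            previous = updated
    return {f: total / n_permutations for f, total in totals.items()}

transaction = {"amount_ratio": 50.0, "new_country": True, "odd_hour": True, "new_device": True}
baseline = {"amount_ratio": 1.0, "new_country": False, "odd_hour": False, "new_device": False}

estimate = sampled_shapley(fraud_score, transaction, baseline)
for name, value in estimate.items():
    print(f"{name}: {value:+.3f}")
```

Each sampled ordering costs one model call per feature, so the budget is controlled by `n_permutations` rather than by the number of possible subsets. The estimates still sum to the score difference, because the per-ordering contributions telescope; only the split between interacting features is approximate.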

Retail & Luxury Implications

For luxury retail and high-value e-commerce, the implications of explainable AI (XAI) in fraud detection are profound and directly address sector-specific challenges:

- Reducing False Positives & Protecting Customer Experience: A high-net-worth customer making an unusual, large purchase from a new location is a classic fraud signal. A black-box system might automatically block this, causing immense frustration. An explainable system would flag it for review but provide the analyst with the clear reasons, allowing for a rapid, informed manual approval if the context checks out (e.g., the customer is traveling). This preserves the sale and the relationship.

- Compliance and Dispute Resolution: Regulations like the GDPR in Europe are widely read as giving individuals a right to meaningful information about automated decisions that affect them, often called the "right to explanation." When a transaction is declined, merchants and payment processors may be required to provide a reason. An explainable system can generate this audit trail automatically, demonstrating due diligence.

- Model Governance and Improvement: In a domain where fraud patterns evolve quickly (e.g., new counterfeit schemes, payment fraud), explainability allows data scientists to understand why the model is making certain calls. This is crucial for validating model behavior, identifying data drift (e.g., a new legitimate shopping trend being misclassified), and retraining models effectively. It turns the model from an oracle into a collaborative tool.

- Internal Trust and Operational Efficiency: Fraud and risk teams can act with greater confidence and speed when they understand the rationale behind an alert. They can prioritize cases based on the strength and nature of the signals, rather than treating all "high-score" alerts as equally urgent.
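As a rough illustration of the prioritization point above, the sketch below annotates each alert with its dominant signal and ranks the queue by that signal's strength rather than by raw score alone. The alert data and the ranking policy are hypothetical; a real team would choose its own policy.

```python
# Hypothetical alerts: each carries a model score and per-feature contributions.
alerts = [
    {"id": "tx-101", "score": 0.88,
     "contributions": {"amount_vs_user_average": 0.20,
                       "new_country_login": 0.18,
                       "unusual_time_of_day": 0.15}},
    {"id": "tx-102", "score": 0.86,
     "contributions": {"amount_vs_user_average": 0.05,
                       "new_device": 0.62,
                       "unusual_time_of_day": 0.02}},
    {"id": "tx-103", "score": 0.90,
     "contributions": {"amount_vs_user_average": 0.45,
                       "new_country_login": 0.30,
                       "unusual_time_of_day": -0.05}},
]

def triage(alerts):
    """Annotate each alert with its dominant signal, then rank by that signal's strength."""
    annotated = []
    for alert in alerts:
        feature, value = max(alert["contributions"].items(), key=lambda kv: kv[1])
        annotated.append({
            "id": alert["id"],
            "score": alert["score"],
            "dominant_signal": feature,
            "dominant_contribution": value,
        })
    return sorted(annotated, key=lambda a: a["dominant_contribution"], reverse=True)

queue = triage(alerts)
for item in queue:
    print(item["id"], item["dominant_signal"], item["dominant_contribution"])
```

Three alerts with similar scores end up in a different order once the analyst can see that one is driven by a single strong signal while another is a mix of weaker ones.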

While the source article is a developer's personal project, the pattern it outlines carries over to production. Closing the gap between this demonstration and enterprise deployment involves scaling the data pipeline, keeping explanation latency low enough for real-time decisions, and integrating with existing case management systems (like Salesforce or dedicated fraud platforms). The core concept, however, is immediately applicable.