What Happened

Ethan Mollick, a professor at the Wharton School and a prominent researcher on AI's impact on work and education, posted a succinct observation on X (formerly Twitter). He stated that a long-held assumption—"everything around me is somebody's life work"—is "no longer a true assumption going forward" due to the advent of artificial intelligence.

The post links to a screenshot of a conversation, underscoring the point that generative AI tools can now produce, in seconds, outputs that previously required a human's dedicated skill, years of practice, or a lifetime of creative effort. Such outputs include writing, code, art, design, and analysis.

Context

Mollick's observation cuts to the core of a cultural and economic anxiety surrounding generative AI. For centuries, the value of complex artifacts—a novel, a painting, a sophisticated software program, a legal brief—was intrinsically linked to the substantial human labor and expertise required to create them. This concept is a cornerstone of professional identity, creative pride, and economic valuation.

Generative AI, particularly large language models (LLMs) and diffusion models, decouples output from traditional human labor time. A model like GPT-4 or Midjourney can generate a competent first draft, a detailed image, or a functional code snippet in response to a prompt that may take a novice minutes to write. This does not mean the output is inherently better than a human's life work, but it redefines the starting point and the perceived "cost" of creation.

Mollick's framing, "for better and worse," acknowledges the double-edged nature of this shift. The potential benefits include democratizing creation, accelerating innovation, and automating tedious tasks. The risks include devaluing professional skills, disrupting creative and knowledge economies, and posing an existential challenge for individuals whose identities are tied to skilled craftsmanship.

gentic.news Analysis

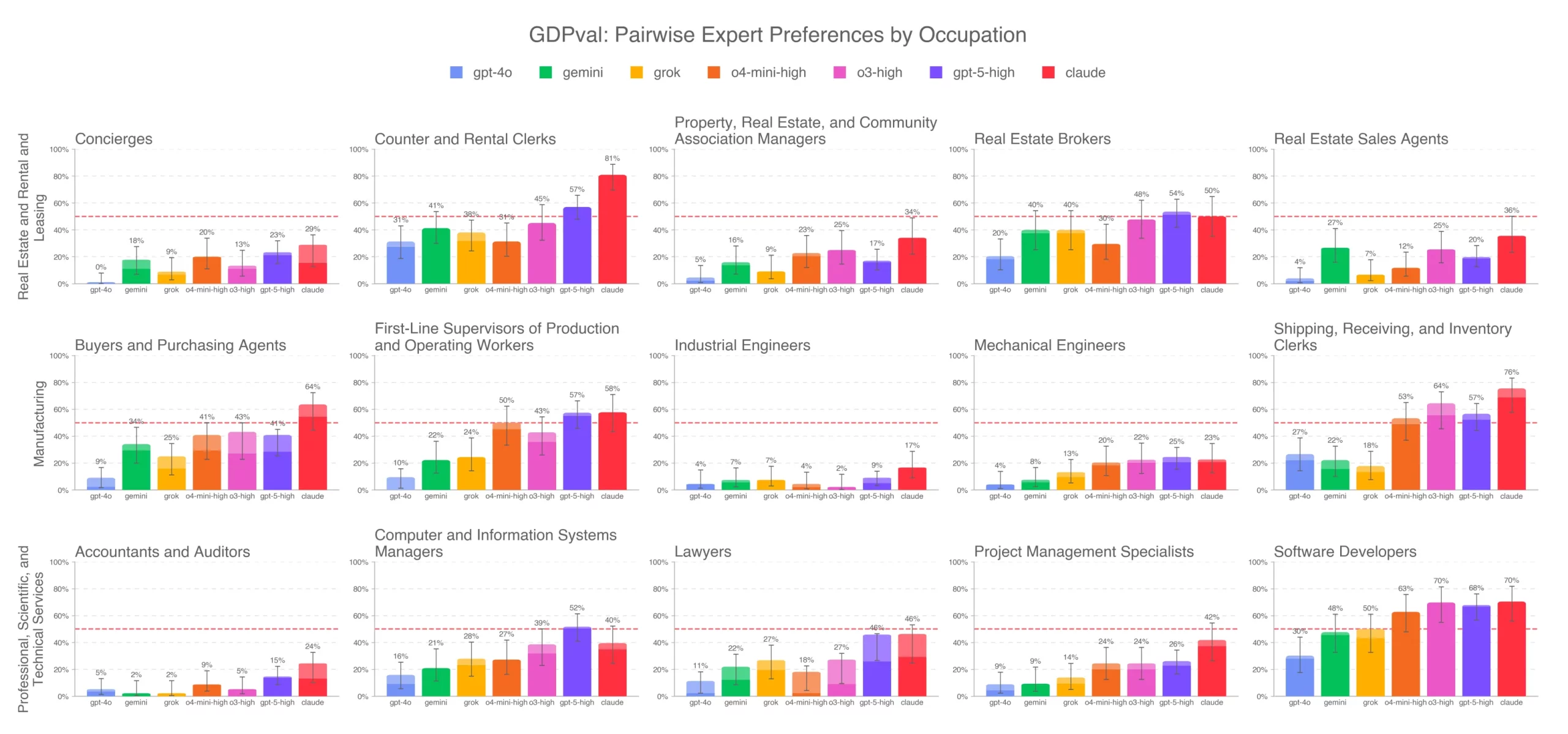

Mollick's post is not about a new model release or benchmark, but it captures the broader societal implication of the developments we have been covering. It connects directly to our reporting on the erosion of the "skill premium" in white-collar work, a trend highlighted in our analysis of AI coding tools such as GitHub Copilot and Devin. When an AI can generate 40% of a codebase, the value of manually writing boilerplate plummets.

This observation also contextualizes the fierce legal and philosophical battles over AI training data and copyright, which we've covered extensively in stories on The New York Times vs. OpenAI and on the lawsuits involving Stability AI. The core tension is that these models are built by ingesting the collective "life work" of millions (books, articles, code repositories, artworks) and then synthesizing new outputs that compete with the creators of that original corpus.

Furthermore, it aligns with our recent analysis of synthetic data research. If AI can generate high-quality training data, the future development loop may become increasingly detached from human-generated source material, potentially accelerating the very phenomenon Mollick identifies.

For practitioners, the strategic implication is that competitive advantage is shifting from the execution of known tasks to problem definition, curation, editing, and integration: skills that combine AI oversight with high-level human judgment. The "life work" of the future may be less about crafting a single perfect artifact and more about orchestrating systems and guiding AI to solve novel, complex problems.

Frequently Asked Questions

What did Ethan Mollick actually say?

Ethan Mollick, a Wharton professor, posted on X that the assumption "everything around me is somebody's life work" is no longer true going forward because of AI. The point is that AI can now generate complex creative and professional outputs that previously required a lifetime of dedicated human skill and effort.

Is AI making human work worthless?

No, but it is radically changing its economic and perceived value. AI is automating the generation of first drafts, standard designs, and routine code. This devalues pure execution of repetitive, skill-based tasks but increases the value of high-level strategy, creative direction, emotional intelligence, and editing—skills where humans still hold a significant edge.

What are some real-world examples of this shift?

Examples are widespread: journalists using AI for research and draft generation, reducing time per article; marketers generating hundreds of ad copy variants in minutes; software engineers using Copilot to write boilerplate code, focusing more on system architecture; and graphic designers using Midjourney to create concept mock-ups rapidly, spending more time on client refinement.

How should professionals adapt to this change?

Professionals should focus on developing skills that are complementary to AI, not competitive with it. This includes critical thinking, complex problem-solving, prompt engineering (effectively guiding AI), quality assurance and editing of AI output, ethical oversight, and domain expertise that allows for judging the suitability and nuance of AI-generated work.