In a brief but pointed social media post, Ethan Mollick, a professor at the Wharton School of the University of Pennsylvania and a prominent voice on AI adoption, issued a direct call to action for artificial intelligence labs. He stated it is "an important time for the AI labs to build interfaces around the goal of 'job augmentation through AI' rather than building 'job replacement through AI.'"

Mollick's argument hinges on a critical distinction in how AI systems are designed and integrated into workplaces. He notes that "Chatbots were mostly augments, requiring a human to work." This refers to the current dominant paradigm of large language models (LLMs) operating as tools within a human-in-the-loop workflow. A human provides the prompt, context, and oversight, while the AI assists with drafting, analysis, or information retrieval.

However, he highlights that "Agentic work patterns are still in flux & could center humans." This is a reference to the emerging field of AI agents—systems that can autonomously perform multi-step tasks, like writing and executing code, conducting research, or managing complex projects. The architecture and goals baked into these agents are currently being defined. Mollick's core argument is that developers have a choice: they can design these agentic systems to operate autonomously, potentially displacing human roles, or they can architect them to require and enhance human judgment, creating augmented roles.

The Design Philosophy Fork in the Road

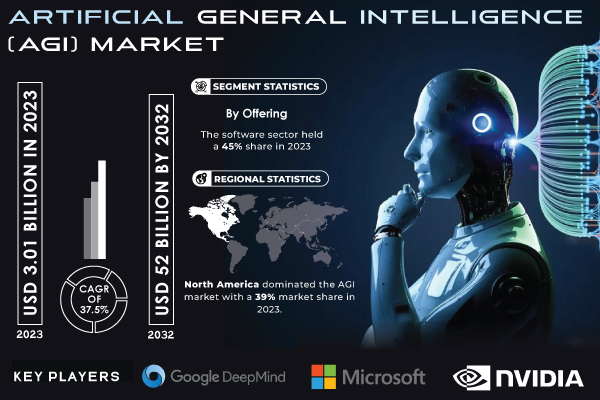

Mollick’s post underscores a fundamental strategic and ethical fork in the road for AI development, particularly at the lab level (e.g., OpenAI, Anthropic, Google DeepMind, Meta FAIR). The technical architecture of an AI system—its APIs, its default modes of operation, its evaluation metrics—profoundly influences how it will be used.

- Augmentation-First Design: This philosophy would prioritize features like seamless human-AI collaboration, explainability, suggestion modes over auto-execution, and tools that elevate human decision-making. The interface is built for partnership.

- Replacement-Oriented Design: This path might optimize for full task automation, minimal human intervention, and maximum speed and cost reduction. The interface is built for delegation and eventual autonomy.

Mollick is asserting that because agentic patterns are "still in flux," now is the decisive moment to make this philosophical choice explicit in product development.
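To make the two philosophies concrete, here is a minimal Python sketch of one agent plan run under two interface defaults. Nothing here comes from Mollick's post or from any lab's API: `Mode`, `Step`, `run_plan`, and the `reviewer` callback are hypothetical names chosen for illustration, and the approval callback stands in for a real human review step.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Callable, List


class Mode(Enum):
    SUGGEST = "suggest"  # augmentation-first: a human approves each step
    AUTO = "auto"        # replacement-oriented: steps run unattended


@dataclass
class Step:
    description: str
    run: Callable[[], str]


def run_plan(steps: List[Step], mode: Mode,
             approve: Callable[[Step], bool]) -> List[str]:
    """Run each step; in SUGGEST mode a step only runs if approved."""
    log = []
    for step in steps:
        if mode is Mode.SUGGEST and not approve(step):
            log.append(f"skipped: {step.description}")
            continue
        log.append(f"done: {step.run()}")
    return log


plan = [
    Step("draft summary", lambda: "summary drafted"),
    Step("email client", lambda: "email sent"),
]


def reviewer(step: Step) -> bool:
    """Hypothetical human reviewer: approves drafting, declines sending."""
    return step.description != "email client"


suggested = run_plan(plan, Mode.SUGGEST, reviewer)
automatic = run_plan(plan, Mode.AUTO, reviewer)
print(suggested)
print(automatic)
```

The agent logic is identical in both runs. Only the default mode differs, which is the sense in which an interface choice, rather than model capability, determines whether the human stays in the loop.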

Context: A Growing Chorus on AI's Economic Impact

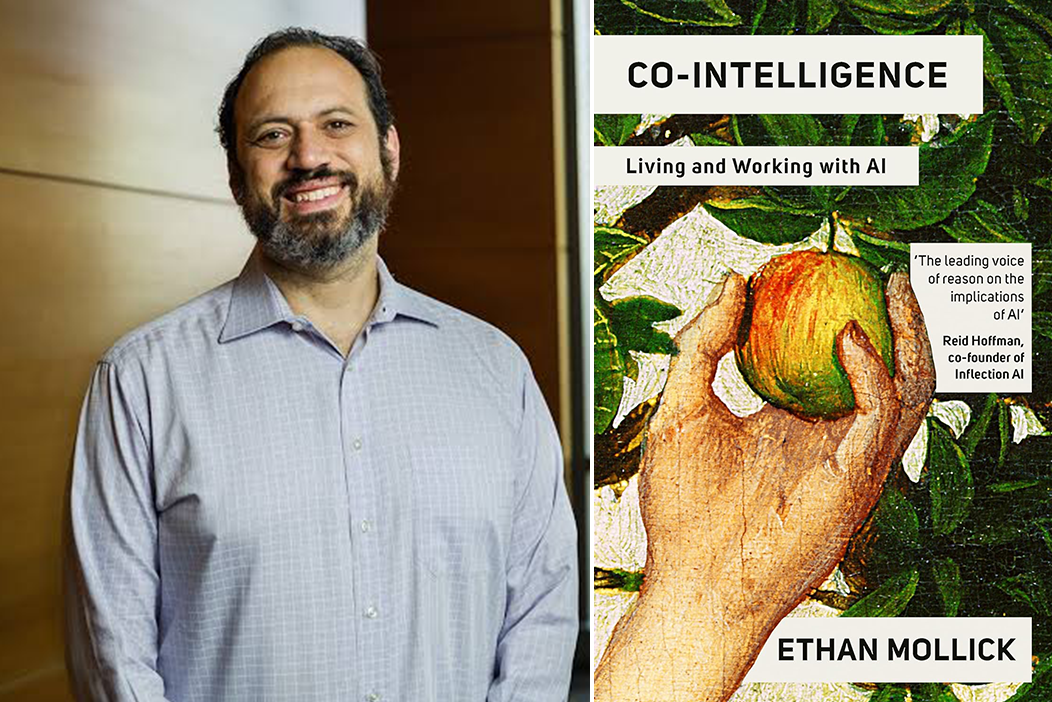

Mollick’s comment is not made in a vacuum. It taps into an intensifying debate about the economic and societal impact of generative AI. While productivity studies (some co-authored by Mollick himself) show AI can significantly boost performance for certain knowledge tasks, forecasts about long-term job displacement vary widely. His call can be seen as an attempt to steer the technology toward a future that amplifies human capabilities rather than one that seeks to replicate and replace them.

The post is deliberately aimed at "AI labs"—the research and development organizations creating the foundational models. The implication is that the values and goals encoded at this foundational level will cascade through the entire ecosystem of applications built on top of their APIs.

gentic.news Analysis

Ethan Mollick’s intervention is strategically timed and targets the most influential lever in the AI stack: the foundational model developers. This follows a pattern of increasing pressure on AI labs to consider the broader implications of their work, beyond mere capability benchmarks. We recently covered the internal safety debates at OpenAI, where employees like Jan Leike departed citing a deprioritization of safety culture in favor of "shiny products." Mollick’s argument aligns with that critique but from a socioeconomic angle, suggesting that a narrow focus on capability (which often leads to replacement-oriented demos) is crowding out design thinking for human-centric augmentation.

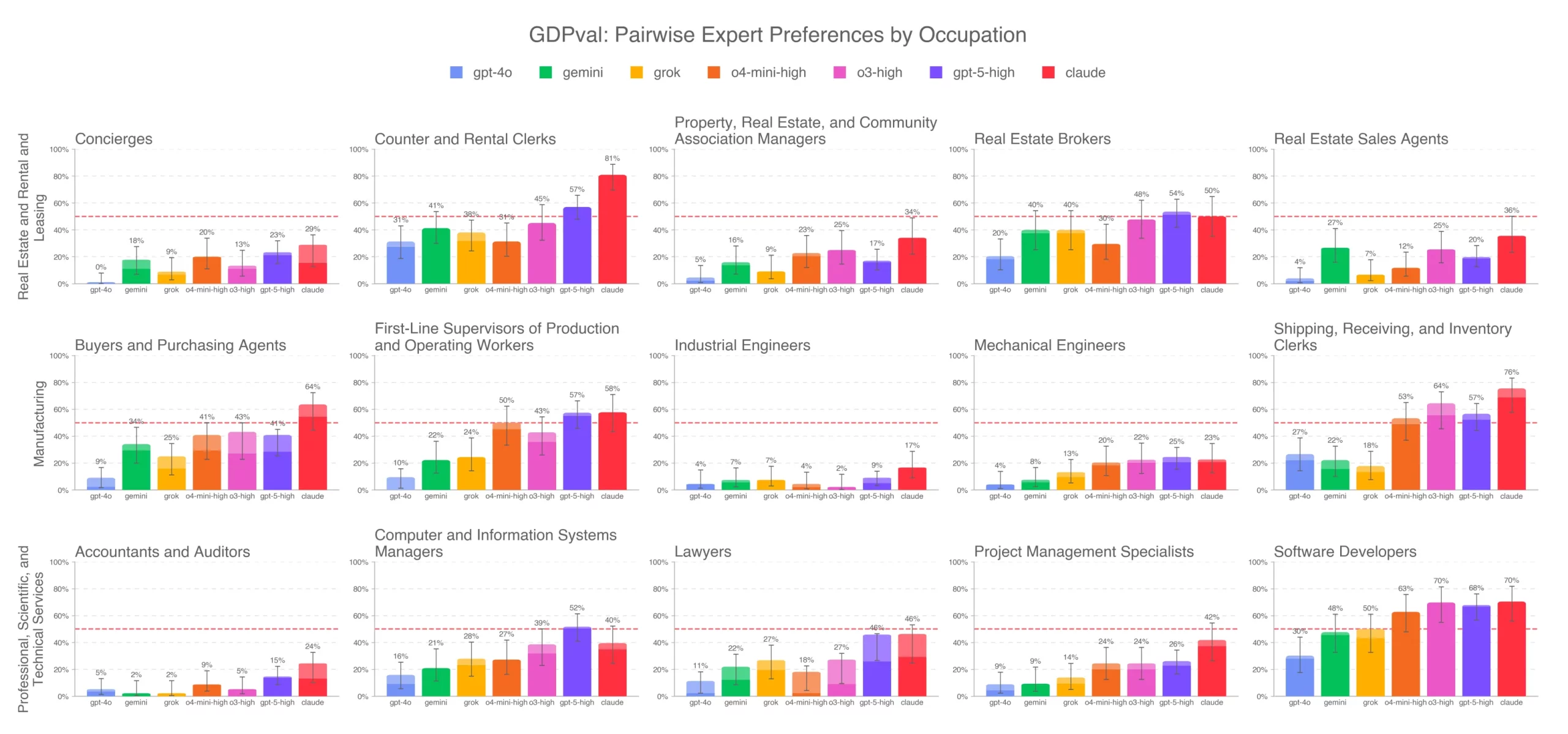

This call also directly intersects with the explosive growth in AI agent frameworks (e.g., CrewAI, AutoGen). As we reported in our analysis of Devin AI, these systems are rapidly moving from research prototypes to viable tools. Mollick is essentially urging the labs that provide the underlying LLMs (like GPT-4o, Claude 3.5 Sonnet, and Gemini 1.5 Pro) to provide APIs and model behaviors that guide agent builders toward augmentation. For instance, a model could be tuned to better flag uncertainty or seek confirmation when taking consequential actions.
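As a rough illustration of what "flag uncertainty or seek confirmation" could look like on the agent-builder side, the sketch below routes each proposed action either to direct execution or to a human confirmation step. It rests on assumptions: `ProposedAction`, `route`, the `consequential` flag, the self-reported `confidence` score, and the 0.8 threshold are all invented for this example and do not describe any existing model API.

```python
from dataclasses import dataclass


@dataclass
class ProposedAction:
    name: str
    consequential: bool  # e.g. sends email, spends money, deletes data
    confidence: float    # hypothetical self-reported score in [0, 1]


def route(action: ProposedAction, min_confidence: float = 0.8) -> str:
    """Return 'confirm' when a human should approve, else 'execute'."""
    if action.consequential or action.confidence < min_confidence:
        return "confirm"
    return "execute"


actions = [
    ProposedAction("reformat_table", consequential=False, confidence=0.95),
    ProposedAction("summarize_report", consequential=False, confidence=0.55),
    ProposedAction("send_invoice", consequential=True, confidence=0.99),
]

decisions = {a.name: route(a) for a in actions}
print(decisions)
```

In this sketch a consequential action always goes to a human, however confident the model claims to be, while low-stakes actions pass through only when confidence clears the threshold. Where that threshold sits, and what counts as consequential, are exactly the design decisions the article describes as still in flux.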

Furthermore, this connects to the ongoing activity around AI regulation in the EU and US. While Mollick advocates for self-directed prioritization by labs, his argument provides a clear conceptual framework for policymakers: regulations could incentivize "augmentation-by-design" principles. The contrast he draws between "job augmentation through AI" and "job replacement through AI" creates a tangible axis for measuring and guiding development, much like the EU AI Act's risk-based categories.

Ultimately, Mollick's call can be read as a product management and go-to-market argument as much as an ethical one. The implication is that a market of empowered, augmented professionals may be larger and more sustainable in the long term than a market seeking to eliminate them. Whether labs, currently locked in a fierce capability race, will slow down to prioritize this interface philosophy remains the central question.

Frequently Asked Questions

What does "job augmentation through AI" mean?

Job augmentation refers to designing AI tools that assist and enhance human workers, making them more productive, creative, or effective, rather than designing systems to fully automate and eliminate their roles. It implies a collaborative partnership where the human remains the central decision-maker.

What are "agentic work patterns" in AI?

Agentic work patterns refer to the behavior of AI agents: systems that can autonomously plan and execute sequences of actions to achieve a goal (e.g., "write a report, analyze this data, and email a summary"). These patterns are "in flux" because developers are still deciding how much autonomy to grant agents, how much human oversight to require, and whether agents are built as standalone workers or as assistants to humans.

Who is Ethan Mollick and why is his opinion significant?

Ethan Mollick is a professor at the Wharton School of the University of Pennsylvania who specializes in innovation and entrepreneurship. He has become a leading researcher and commentator on the real-world adoption and impact of generative AI in business. His studies on AI boosting productivity give his views on its design and implementation considerable weight in both academic and industry circles.

Can AI labs really design for augmentation if companies want replacement to cut costs?

This is the core tension. Mollick's argument is that labs set the technological possibilities. If they only offer models and APIs optimized for autonomous replacement, that will be the default path. By proactively building and promoting interfaces for augmentation, they can create a viable, attractive alternative for businesses, potentially shifting the demand. It's an attempt to influence the market by shaping the supply.