March 2026 — In a brief but provocative social media post, Ethan Mollick, a professor at the Wharton School of the University of Pennsylvania and a prominent analyst of AI's economic impact, outlined a stark strategic reality for the race toward artificial general intelligence (AGI).

Mollick's core argument is straightforward: "The easiest way to make money fast from a superhuman artificial intelligence would be in the financial markets, almost by definition." He concludes that, therefore, "the first lab to develop one, if AGI is possible, would almost certainly keep it quiet for as long as they could. Beats charging for API access."

This roughly 50-word thesis cuts to the heart of a critical, rarely discussed incentive structure in advanced AI development. It suggests that the most economically rational first use of a superhuman AI (one capable of outperforming humans across a wide range of cognitive tasks) would not be as a publicly available tool or service, but as a proprietary, clandestine advantage in the world's most liquid and complex game: global finance.

What Mollick's Argument Implies

Mollick's logic rests on two premises:

- Financial markets represent a near-ideal, high-stakes optimization domain. A superhuman AI could theoretically analyze global data feeds, predict market movements, and execute trades with a speed and precision impossible for human teams or narrow AI. The profit potential is, in principle, enormous and, crucially, immediate.

- Secrecy maximizes first-mover advantage and profit. Announcing an AGI would trigger regulatory scrutiny, competitive reverse-engineering attempts, and market adaptations that could dilute its financial edge. Silently deploying it in markets allows a lab or its corporate backer to accumulate vast capital before the technology's existence becomes known.

This stands in contrast to the current dominant business model for advanced AI, where companies like OpenAI, Anthropic, and Google DeepMind monetize through API access, enterprise licenses, and consumer subscriptions. Mollick implies that for a true leap to AGI, the incentive flips from commercialization to covert application.

The Strategic Tension in AI Development

Mollick's scenario highlights a fundamental tension:

- The "Open" Path: The historical norm in AI research, emphasizing publication, collaboration, and incremental progress shared across academia and industry. The business model is selling access to capabilities (APIs, cloud services).

- The "Closed" Path: A winner-takes-all scenario where the leading lab achieves a decisive lead and chooses to hoard the advantage for private, asymmetric gain, likely in finance, before any other sector.

This isn't merely theoretical. The trend in frontier AI has been toward increasing secrecy. Leading labs often keep key architectural details, full training datasets, and the exact scale of their largest models confidential, citing competitive and safety concerns. Mollick's argument takes this to its logical extreme: complete secrecy of the capability itself.

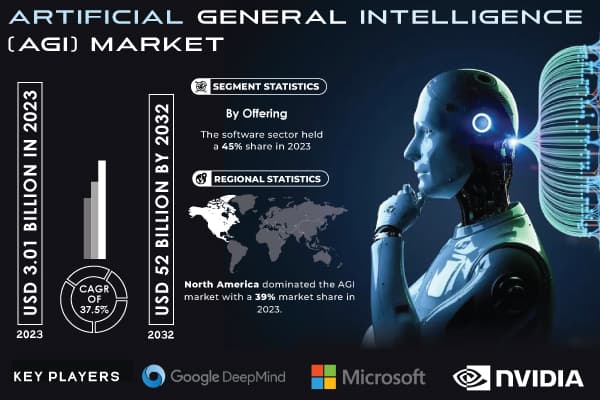

Context: The AGI Race and Its Players

The post implicitly references the current state of the AGI race. Major players like OpenAI (with its stated goal of achieving AGI), Google DeepMind, Anthropic, and xAI are investing billions, often backed by tech conglomerates or sovereign wealth funds with vast resources. These entities have the capital to fund long-term AGI research and the infrastructure to potentially deploy a resulting system.

Notably, some of these players have roots in finance, though not necessarily in trading. The founder of xAI, Elon Musk, for instance, previously co-founded X.com, the online payments company that became PayPal. Existing expertise at the intersection of technology and finance would lower the barrier to implementing Mollick's hypothesized strategy.

gentic.news Analysis

Mollick's post, while speculative, frames a critical strategic dilemma that is becoming more relevant as models approach broader capabilities. It connects directly to trends we've been tracking: the escalating private investment in frontier AI, the retreat from full openness, and the specific interest from financial sectors.

This aligns with our previous reporting on the capital demands of AI. In our analysis of xAI's $6 billion funding round in 2024, we noted that a significant portion of capital was earmarked for procuring compute resources to train next-generation models. The investors in that round included firms with strong ties to global finance. If the end goal of such investment is a financial return, Mollick's path offers a potentially faster and more direct route than the slow burn of API monetization.

Furthermore, this thesis contradicts a common narrative in policy circles that the first signs of AGI would be highly visible—a public demo or a released product. Mollick suggests the first signs might instead be anomalous, persistent financial market outperformance by a particular fund or a corporate entity's trading division, a signal that would be ambiguous and deniable for a considerable time.
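To make "anomalous, persistent outperformance" slightly more concrete, here is a minimal sketch of how an observer might screen a fund's daily returns. It is an illustration, not a method Mollick proposes. The function names, the thresholds (an annualized Sharpe ratio above 3 and a t-statistic above 4), the zero risk-free rate, and the synthetic return series are all assumptions chosen for the example.

```python
import math
import random
import statistics


def annualized_sharpe(daily_returns, periods_per_year=252):
    """Annualized Sharpe ratio of daily returns (risk-free rate assumed to be 0)."""
    mean = statistics.fmean(daily_returns)
    stdev = statistics.stdev(daily_returns)
    return mean / stdev * math.sqrt(periods_per_year)


def outperformance_t_stat(daily_returns):
    """t-statistic for the hypothesis that the mean daily return is zero."""
    mean = statistics.fmean(daily_returns)
    stdev = statistics.stdev(daily_returns)
    return mean / (stdev / math.sqrt(len(daily_returns)))


def looks_anomalous(daily_returns, sharpe_threshold=3.0, t_threshold=4.0):
    """Flag a return series that is both unusually strong and statistically persistent.

    Both thresholds are illustrative assumptions, not industry standards.
    """
    return (
        annualized_sharpe(daily_returns) > sharpe_threshold
        and outperformance_t_stat(daily_returns) > t_threshold
    )


if __name__ == "__main__":
    rng = random.Random(0)  # fixed seed so the synthetic data is reproducible
    days = 756  # roughly three trading years

    # Hypothetical funds: one ordinary, one with a suspiciously steady edge.
    ordinary = [rng.gauss(0.0003, 0.01) for _ in range(days)]
    suspicious = [rng.gauss(0.003, 0.005) for _ in range(days)]

    for name, series in (("ordinary", ordinary), ("suspicious", suspicious)):
        print(
            name,
            round(annualized_sharpe(series), 2),
            round(outperformance_t_stat(series), 2),
            looks_anomalous(series),
        )
```

Even a flag like this would stay ambiguous in practice: strong risk-adjusted returns can come from leverage, luck, or an ordinary quantitative edge, which is part of why such a signal would remain deniable.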

For practitioners and observers, the key takeaway is to watch the intersection of elite AI talent and quantitative finance. Unusual hiring patterns, strategic investments from hedge funds into AI labs, or the formation of new AI research entities under the umbrella of financial conglomerates could be indirect indicators that some players are positioning for the scenario Mollick describes. The business model for the first true AGI may not be software-as-a-service, but advantage-as-a-service—for its creators alone.

Frequently Asked Questions

What is AGI?

Artificial General Intelligence (AGI) refers to a hypothetical AI system that possesses the ability to understand, learn, and apply its intelligence to solve any problem that a human being can, across a wide range of domains. It is contrasted with today's "narrow" or "specialized" AI, which excels at specific tasks like translation or image recognition but lacks broad, adaptable cognitive ability.

Is any lab close to developing AGI?

As of early 2026, there is no consensus that any lab has developed AGI. Leading organizations like OpenAI, Google DeepMind, and Anthropic have created increasingly powerful and general-purpose models (like GPT-5, Gemini Ultra, and Claude 3.5), but these systems still exhibit limitations in reasoning, planning, and true understanding that fall short of the AGI threshold. The timeline for AGI remains highly debated among experts.

Why would financial markets be the first target?

Financial markets offer a combination of high reward, clear objectives (profit), vast and machine-readable data, and the ability to act autonomously and swiftly. A superhuman AI could exploit arbitrage opportunities, model complex risk, and execute trades at a scale and speed impossible for humans. The returns are liquid, immediate, and can be reinvested into further AI development, creating a self-funding loop.

Wouldn't a secret AGI be dangerous?

This is a core concern. Mollick's scenario raises significant safety and governance issues. A secretly developed and deployed AGI would operate outside any regulatory or ethical oversight frameworks currently being discussed. Its actions in global markets could have destabilizing effects, and its capabilities could be redirected to other domains without societal scrutiny. This potential for unaligned, unsupervised advanced AI is a major argument for transparency and international cooperation in AI development, which Mollick's hypothesized incentive structure directly undermines.