What The Leak Revealed

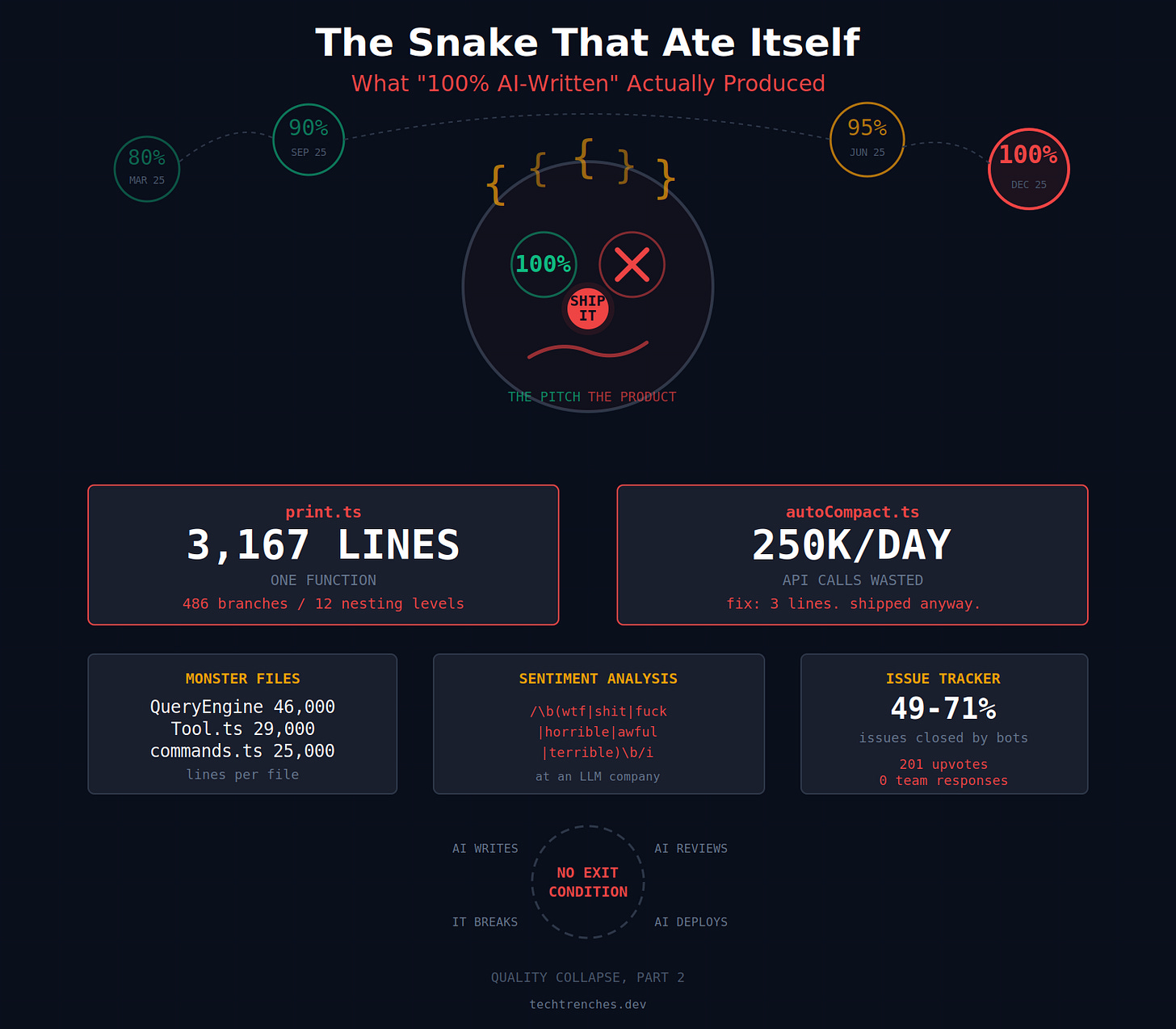

In March 2026, a packaging error exposed the source code for Claude Code. The technical details are more instructive than the leak itself. The codebase contained a single TypeScript function spanning 3,167 lines, with 486 branch points and 12 levels of nesting. Analysis by the developer community found that this monolithic print.ts function combined the agent run loop, SIGINT handling, rate limiting, AWS authentication, MCP lifecycle management, plugin loading, team-lead polling, model switching, and turn interruption recovery.

The consensus was clear: this should be 8-10 separate modules. A known bug, documented in a comment and estimated to burn 250,000 API calls daily, shipped regardless.

The Culture That Created It

This code wasn't an accident; it was an outcome. For nearly a year, Anthropic executives publicly escalated claims about AI-written code percentages:

- March 2025: CEO Dario Amodei predicted that within 3-6 months, "90% of code would be written by AI models."

- December 2025: Lead engineer Boris Cherny tweeted that "100% of my contributions to Claude Code were written by Claude Code."

- February 2026: CPO Mike Krieger stated it was "effectively 100%" for most products.

The numbers became a performance metric, but the definition was never clarified. Was it lines committed, engineering effort, or characters typed? The ambiguity served the narrative. The 3,167-line function is what "100%" looks like in practice when the goal is volume of AI-generated code, not maintainable architecture.

What Claude Code Users Should Learn

This isn't an indictment of Claude Code; it's a critical lesson in workflow. The tool is exceptionally powerful for generating code, but it lacks the inherent drive to refactor and architect. That is, and must remain, a human responsibility.

Your workflow must include explicit refactoring prompts and architectural reviews. Don't let AI-generated code accumulate technical debt silently.

How To Apply This Now

Enforce The Boy Scout Rule: Leave the codebase better than you found it. If Claude generates a 200-line function, your next prompt should be:

> Refactor this function into smaller, single-responsibility functions with clear interfaces.

Schedule Architectural Reviews: Treat AI as a prolific junior engineer. Its output needs supervision. Regularly commit time to review the structure of files Claude has been working on, not just their functionality.

Prompt for Modularity: Be specific in your initial instructions. Instead of "write the API client," try:

> Design a modular API client for the X service. Separate the authentication layer, request builder, response parser, and error handling into distinct classes or functions. Provide a main client class that composes them.

Use CLAUDE.md for Guardrails: Add architectural principles to your project's CLAUDE.md file.

    ## Architectural Rules
    - No function shall exceed 150 lines.
    - Nesting deeper than 4 levels must be refactored.
    - New features must be in their own module/file unless tightly coupled to existing logic.
    - Identify and extract common patterns into shared utilities.
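Rules in CLAUDE.md are instructions, not enforcement, so it can help to check the two measurable limits mechanically. The following is a minimal sketch, assuming a Python codebase and the standard-library `ast` module; the names `check_source` and `nesting_depth` are hypothetical, and what counts as one nesting level is a choice made for this sketch, not something the rules define.

```python
import ast

MAX_FUNCTION_LINES = 150  # "No function shall exceed 150 lines."
MAX_NESTING_DEPTH = 4     # "Nesting deeper than 4 levels must be refactored."

# Assumption of this sketch: these statements each add one nesting level.
NESTING_NODES = (ast.If, ast.For, ast.AsyncFor, ast.While,
                 ast.With, ast.AsyncWith, ast.Try)
FUNCTION_NODES = (ast.FunctionDef, ast.AsyncFunctionDef)
# Nested definitions are measured on their own, not as part of the parent.
SKIPPED_NODES = FUNCTION_NODES + (ast.ClassDef, ast.Lambda)


def nesting_depth(node: ast.AST, depth: int = 0) -> int:
    """Return the deepest control-flow nesting found beneath `node`."""
    deepest = depth
    for child in ast.iter_child_nodes(node):
        if isinstance(child, SKIPPED_NODES):
            continue
        # `elif` is parsed as an If inside the parent's else branch;
        # treat it as the same level rather than a deeper one.
        is_elif = (isinstance(node, ast.If) and isinstance(child, ast.If)
                   and node.orelse == [child])
        if isinstance(child, NESTING_NODES) and not is_elif:
            child_depth = depth + 1
        else:
            child_depth = depth
        deepest = max(deepest, nesting_depth(child, child_depth))
    return deepest


def check_source(source: str) -> list[str]:
    """Return one message per function that breaks a limit."""
    violations = []
    for node in ast.walk(ast.parse(source)):
        if not isinstance(node, FUNCTION_NODES):
            continue
        length = node.end_lineno - node.lineno + 1
        if length > MAX_FUNCTION_LINES:
            violations.append(
                f"{node.name}: {length} lines (limit {MAX_FUNCTION_LINES})")
        depth = nesting_depth(node)
        if depth > MAX_NESTING_DEPTH:
            violations.append(
                f"{node.name}: nesting depth {depth} (limit {MAX_NESTING_DEPTH})")
    return violations
```

Calling `check_source` on the text of each changed file, for example from a pre-commit hook or CI step, turns the first two rules into a failing check instead of a suggestion. The other two rules (module boundaries and shared utilities) still need human review.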

The leak proves that unchecked AI code generation optimizes for completion, not quality. Your role is to provide the quality guardrails.
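To make the modularity prompt above concrete, here is a minimal sketch of the kind of structure it asks for. Every name in it (`BearerAuth`, `RequestBuilder`, `raise_for_status`, `parse_json`, `ApiClient`) is hypothetical, and the transport is an injected callable, an assumption made so the sketch runs without a network or a specific HTTP library.

```python
import json
from dataclasses import dataclass, field
from typing import Any, Callable


@dataclass
class Request:
    method: str
    url: str
    headers: dict[str, str] = field(default_factory=dict)


@dataclass
class Response:
    status: int
    body: str


class ApiError(Exception):
    """Raised when the service answers with a non-2xx status."""

    def __init__(self, status: int, body: str) -> None:
        super().__init__(f"request failed with status {status}")
        self.status = status
        self.body = body


class BearerAuth:
    """Authentication layer: only produces auth headers."""

    def __init__(self, token: str) -> None:
        self._token = token

    def headers(self) -> dict[str, str]:
        return {"Authorization": f"Bearer {self._token}"}


class RequestBuilder:
    """Request builder: only turns a method and path into a Request."""

    def __init__(self, base_url: str) -> None:
        self._base_url = base_url.rstrip("/")

    def build(self, method: str, path: str, headers: dict[str, str]) -> Request:
        url = f"{self._base_url}/{path.lstrip('/')}"
        return Request(method, url, dict(headers))


def raise_for_status(response: Response) -> Response:
    """Error handling: convert failed responses into ApiError."""
    if not 200 <= response.status < 300:
        raise ApiError(response.status, response.body)
    return response


def parse_json(response: Response) -> Any:
    """Response parser: decode a successful JSON body."""
    return json.loads(response.body)


# Hypothetical transport type: anything that sends a Request and
# returns a Response (a real HTTP call in production, a stub in tests).
Transport = Callable[[Request], Response]


class ApiClient:
    """Main client: composes the pieces and holds no logic of its own."""

    def __init__(self, auth: BearerAuth, builder: RequestBuilder,
                 transport: Transport) -> None:
        self._auth = auth
        self._builder = builder
        self._transport = transport

    def get(self, path: str) -> Any:
        request = self._builder.build("GET", path, self._auth.headers())
        response = raise_for_status(self._transport(request))
        return parse_json(response)
```

Each piece can be tested and replaced on its own, which is the property the 3,167-line function lacked. Swapping the authentication scheme, for instance, touches one class and leaves the rest alone.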