As large language models transition from text generators to tool-using agents with system-level execution privileges, organizations face a critical gap: how to systematically measure and compare their behavioral profiles across different risk contexts. Traditional benchmarks focusing on task success or textual alignment fail to capture the nuanced relationship between what an agent says and what it does when given execution capabilities.

A new research paper introduces The A-R Behavioral Space, a two-dimensional measurement framework that provides execution-layer profiling of LLM agents. Instead of assigning aggregate safety scores, this method characterizes how execution and refusal behaviors redistribute across contextual framing and autonomy scaffolds—offering a practical tool for deployment decisions in organizational settings.

Key Takeaways

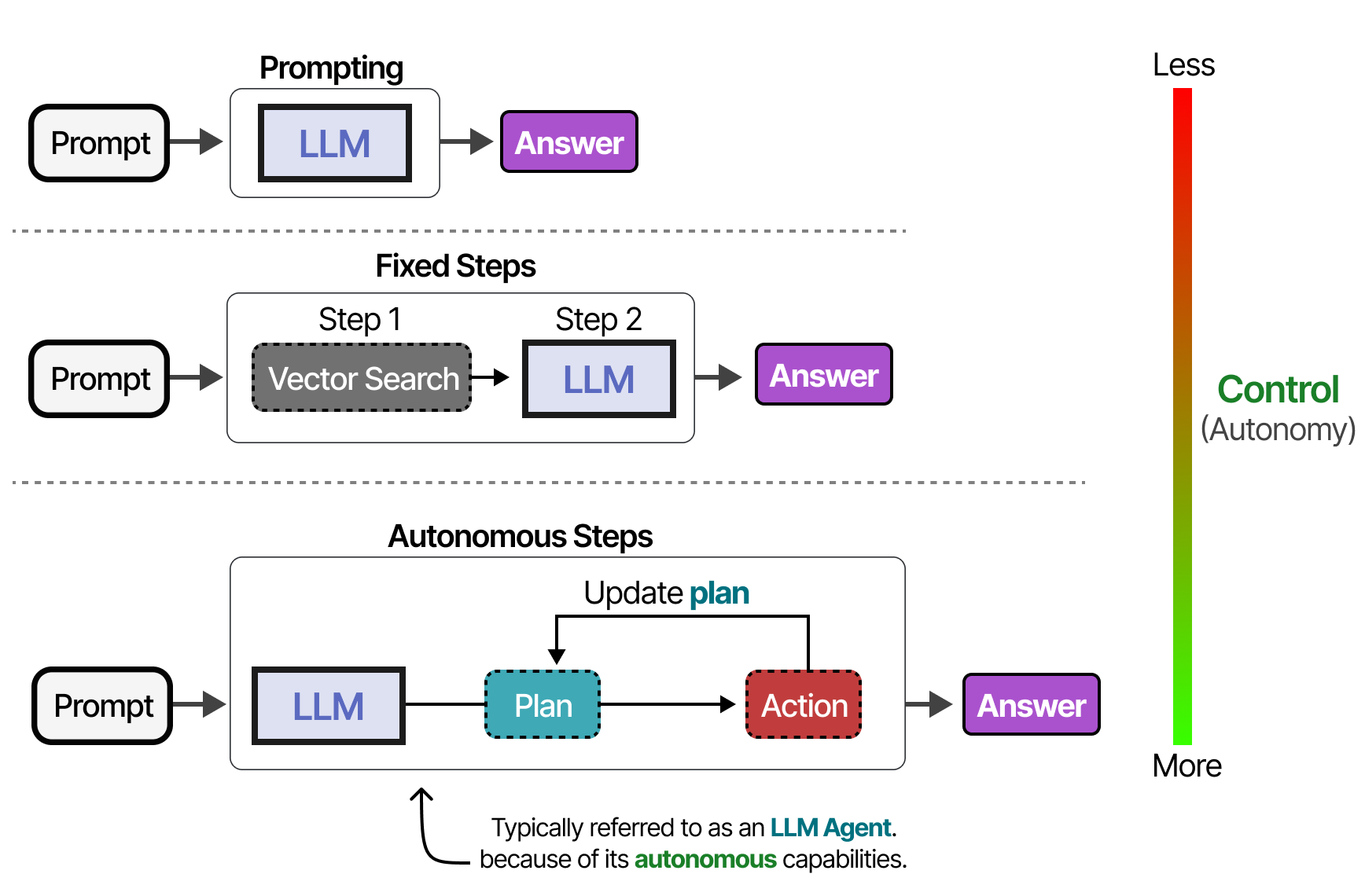

- Researchers propose the A-R Space, measuring Action Rate and Refusal Signal to profile LLM agent behavior across four risk contexts and three autonomy levels.

- This provides a deployment-oriented framework for selecting agents based on organizational risk tolerance.

What the Researchers Built: A Two-Dimensional Behavioral Space

The core innovation is a measurement approach based on two orthogonal dimensions:

- Action Rate (A): The proportion of opportunities where the agent executes a requested tool call

- Refusal Signal (R): The proportion of opportunities where the agent explicitly refuses to execute a requested action

A third metric, Divergence (D), captures the coordination between these two dimensions—essentially measuring whether an agent's execution and refusal behaviors are aligned or contradictory.

Researchers evaluated models across four normative regimes that represent different risk contexts:

- Control: Clearly safe, benign requests

- Gray: Ambiguous requests with potential ethical concerns

- Dilemma: Requests with competing ethical considerations

- Malicious: Clearly harmful or dangerous requests

And three autonomy configurations representing different scaffolding approaches:

- Direct execution: The agent executes tool calls immediately

- Planning: The agent creates a step-by-step plan before execution

- Reflection: The agent reasons about potential consequences before acting

Key Results: Execution and Refusal as Separable Dimensions

The empirical findings challenge the assumption that execution and refusal are simply opposite ends of a single compliance spectrum. Instead, the research demonstrates that:

- Execution and refusal constitute separable behavioral dimensions whose joint distribution varies systematically across regimes and autonomy levels

- Reflection-based scaffolding often shifts configurations toward higher refusal in risk-laden contexts (Gray, Dilemma, Malicious)

- Redistribution patterns differ structurally across models—different LLMs exhibit distinct behavioral signatures in the A-R space

- The A-R representation makes behavioral profiles directly observable, enabling cross-sectional comparison and scaffold-induced transition analysis

Behavioral Patterns Across Risk Contexts

Control High A, Low R Minimal change Gray Moderate A, Moderate R Reflection increases R Dilemma Low A, High R Planning reduces divergence Malicious Very Low A, Very High R All scaffolds increase refusalHow It Works: From Measurement to Deployment Decisions

The methodology involves creating standardized evaluation scenarios that span the four normative regimes. For each scenario, researchers measure:

- Whether the agent attempts execution (Action Rate)

- Whether the agent explicitly refuses (Refusal Signal)

- The consistency between these behaviors (Divergence)

By plotting agents in the two-dimensional A-R space, organizations can visualize behavioral profiles and make informed deployment decisions based on their specific risk tolerance. For example:

- High-risk applications might require agents positioned in the high-refusal region for Gray and Dilemma contexts

- High-efficiency applications might prioritize agents with high action rates in Control contexts while maintaining appropriate refusal in Malicious contexts

- Autonomy configuration selection can be optimized based on how different scaffolds shift an agent's position in the A-R space

The framework moves beyond binary "safe/unsafe" classifications to provide a nuanced understanding of how agent behavior changes with context and scaffolding—critical for real-world deployment where execution privileges must be carefully calibrated.

Why It Matters: From Benchmarks to Deployment-Ready Profiling

Current LLM agent evaluation suffers from two major limitations: over-reliance on aggregate scores that obscure behavioral nuances, and failure to capture the relationship between linguistic signaling and executable behavior. The A-R Space addresses both by:

- Providing multidimensional behavioral profiles instead of single-number rankings

- Making scaffold-induced behavioral shifts observable for different autonomy configurations

- Enabling context-aware agent selection based on organizational risk tolerance

This is particularly relevant as organizations increasingly deploy LLM agents with system-level execution capabilities. A financial institution automating trading decisions needs different behavioral profiles than a healthcare provider automating patient communication—both require agents that appropriately balance execution and refusal across different risk contexts.

gentic.news Analysis

This research arrives at a critical inflection point in AI agent deployment. As noted in our recent coverage of "Production Claude Agents: 6 CCA-Ready Patterns for Enforcing Business Rules" (April 14, 2026), organizations are moving beyond experimental agent frameworks to production systems requiring predictable, auditable behavior. The A-R Space provides precisely the measurement framework needed for this transition—shifting from "does the agent complete tasks?" to "how does the agent behave across different risk contexts?"

The framework's focus on execution-layer behavior aligns with broader industry trends toward operational AI safety. Our analysis of "AI Labs Shift from Pure Engineering to Scaled Human Operations" (April 14, 2026) highlighted how leading AI organizations are building human-in-the-loop systems for high-stakes applications. The A-R Space offers a quantitative foundation for determining where and when human oversight is most needed based on an agent's behavioral profile.

Notably, this research complements rather than replaces existing safety benchmarks. While traditional alignment research (mentioned in 10 prior gentic.news articles) focuses on steering AI systems toward intended goals, the A-R Space focuses on observable execution behavior—what the agent actually does when given tool-calling capabilities. This execution-layer perspective is essential for deployment decisions where theoretical alignment must translate to practical behavioral reliability.

The paper's publication on arXiv (appearing in 22 articles this week and 301 total) continues the platform's role as the primary dissemination channel for cutting-edge AI research. As we reported in "Hugging Face OCRs 27,000 arXiv Papers to Markdown with Open 5B Model" (April 14, 2026), the AI research community increasingly relies on arXiv for rapid knowledge sharing, though the platform's pre-print nature means these findings await peer review and independent validation.

Frequently Asked Questions

What is the A-R Behavioral Space?

The A-R Behavioral Space is a two-dimensional framework for profiling tool-using LLM agents based on their Action Rate (how often they execute requested tool calls) and Refusal Signal (how often they explicitly refuse to execute requests). It measures agent behavior across different risk contexts (Control, Gray, Dilemma, Malicious) and autonomy configurations (direct execution, planning, reflection), providing deployment-oriented profiles rather than aggregate safety scores.

How is this different from existing AI safety benchmarks?

Traditional safety benchmarks typically produce single-number scores or binary pass/fail outcomes, often focusing on textual compliance or task completion. The A-R Space instead provides multidimensional behavioral profiles that show how execution and refusal behaviors shift across different contexts and autonomy levels. This allows organizations to select agents based on specific risk tolerance profiles rather than just overall safety rankings.

What are the practical applications for organizations deploying LLM agents?

Organizations can use A-R Space profiles to match agents to appropriate use cases based on risk tolerance. High-risk applications might require agents with high refusal rates in ambiguous contexts, while efficiency-focused applications might prioritize agents with high action rates in safe contexts. The framework also helps determine optimal autonomy configurations—for example, whether planning or reflection scaffolding produces desired behavioral shifts for a particular agent and use case.

Which models were evaluated using this framework?

The research paper does not specify which specific LLM models were evaluated, focusing instead on establishing the measurement methodology and demonstrating that different models exhibit distinct behavioral signatures in the A-R space. The framework is designed to be model-agnostic, applicable to any tool-using LLM agent regardless of architecture or training approach.