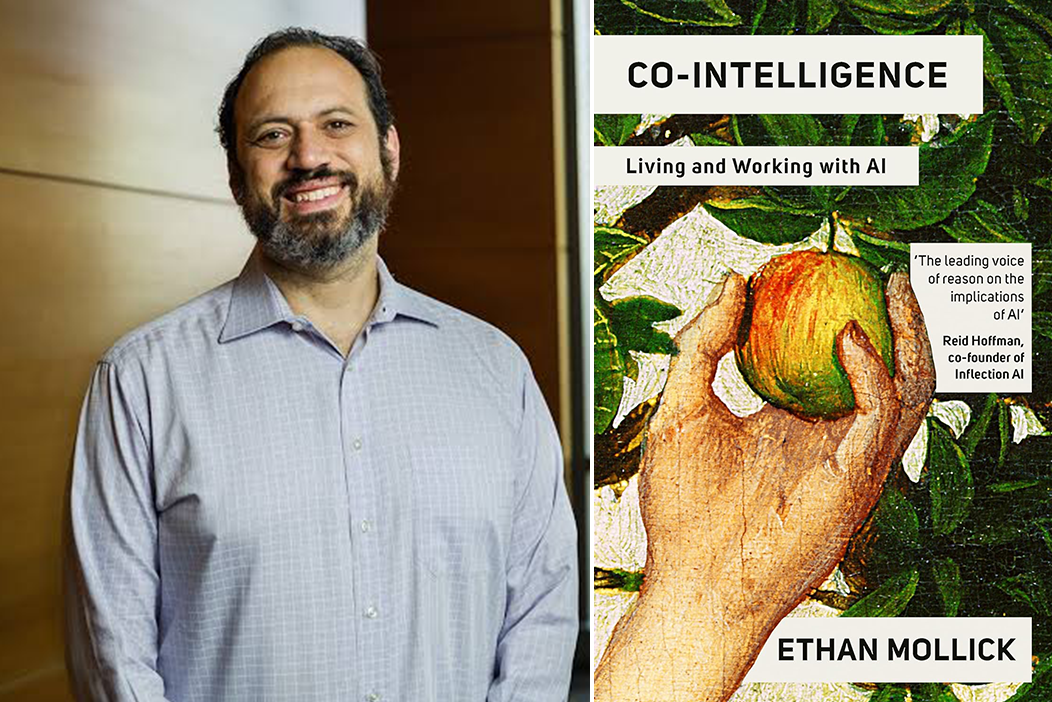

A fascinating new experiment using one of history's most infamous corporate archives, the Enron email dataset, has highlighted limitations in how AI agents navigate complex workplace environments. According to researcher and professor Ethan Mollick, who shared the findings on social media, the test provides "helpful evidence that agent swarms are less useful than agent organizations" when dealing with real-world corporate communication patterns.

The Enron Experiment: A Corporate Navigation Test

The Enron email archive, containing over 600,000 messages from the collapsed energy giant, represents a unique dataset for testing AI capabilities. Unlike clean, structured datasets typically used in AI training, these emails reflect the messy reality of corporate communication—complete with office politics, ambiguous relationships, hidden agendas, and complex social dynamics that characterized Enron's toxic corporate culture.

Researchers used this archive to test how effectively AI agents could navigate what Mollick describes as "work" environments. The experiment appears to have compared two approaches to agent deployment: "agent swarms," which presumably means large numbers of relatively simple agents working in parallel, and "agent organizations," which presumably means more structured, hierarchical arrangements of specialized agents.
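The distinction can be made concrete with a minimal Python sketch of the two shapes. This is not the experiment's code, which Mollick's post does not describe. The names `run_swarm` and `Organization`, the majority-vote merge, and the role names are hypothetical. Each "agent" is also stubbed as a plain function rather than a call to a language model.

```python
from collections import Counter
from dataclasses import dataclass
from typing import Callable

# Hypothetical sketch only: an "agent" is any function mapping a task string
# to an answer string (a stand-in for a real model call).
Agent = Callable[[str], str]


def run_swarm(agents: list[Agent], task: str) -> str:
    """Flat swarm: every agent receives the same full task, with no roles,
    and the answers are merged afterward by simple majority vote (an assumed
    aggregation rule)."""
    if not agents:
        raise ValueError("a swarm needs at least one agent")
    answers = [agent(task) for agent in agents]
    return Counter(answers).most_common(1)[0][0]


@dataclass
class Organization:
    """Hierarchical organization: a coordinator splits the task by role,
    routes each subtask to a named specialist, and assembles the results."""
    split: Callable[[str], dict[str, str]]     # task -> {role: subtask}
    specialists: dict[str, Agent]              # role -> specialist agent
    assemble: Callable[[dict[str, str]], str]  # {role: result} -> final answer

    def run(self, task: str) -> str:
        subtasks = self.split(task)
        # Each subtask goes only to the specialist that owns that role.
        results = {role: self.specialists[role](sub) for role, sub in subtasks.items()}
        return self.assemble(results)


if __name__ == "__main__":
    # Toy stand-ins for model calls, purely illustrative.
    swarm = [lambda t: "guess A", lambda t: "guess A", lambda t: "guess B"]
    print(run_swarm(swarm, "Summarize the thread"))

    org = Organization(
        split=lambda t: {"analyst": f"extract facts: {t}", "communicator": f"draft reply: {t}"},
        specialists={
            "analyst": lambda s: "facts extracted",
            "communicator": lambda s: "reply drafted",
        },
        assemble=lambda r: " | ".join(f"{role}: {out}" for role, out in sorted(r.items())),
    )
    print(org.run("Summarize the thread"))
```

The design difference lies in where the work gets divided. The swarm hands every agent the whole task and reconciles their answers afterward. The organization decides who does what before any agent runs, so each specialist sees only the part of the problem it owns.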

Key Finding: Structure Beats Swarm Intelligence

The most striking conclusion from the experiment, according to Mollick's summary, is that swarms of AI agents proved less useful than organized agent structures when dealing with the complexities of the Enron email environment. This finding challenges some prevailing assumptions in AI development about the power of decentralized, swarm-like approaches to problem-solving.

Mollick's brief post does not detail the specific metrics or methodology. Even so, the implication appears to be that navigating human workplace dynamics requires more than brute-force parallel processing. It demands the kind of organizational intelligence, role specialization, and hierarchical coordination that characterizes effective human organizations.

Why the Enron Dataset Matters for AI Testing

The Enron archive represents an ideal testing ground for several reasons. First, it's a real-world dataset with documented outcomes—we know how the story ended, with corporate collapse and criminal convictions. Second, it contains the full spectrum of workplace communication, from routine administrative messages to evidence of fraud and conspiracy. Third, the social networks within the emails are well-studied, allowing researchers to benchmark AI performance against known human behavioral patterns.

Many AI benchmarks rely on clean, well-structured tasks, but real workplaces are messy. The Enron experiment suggests that AI systems tuned and evaluated mainly on such tidy tasks may struggle when confronted with the ambiguities, contradictions, and unspoken rules that characterize actual corporate environments.

Implications for Enterprise AI Deployment

This research has significant implications for how businesses might deploy AI agents in workplace settings. The weaker showing of agent swarms suggests that simply unleashing large numbers of AI assistants into corporate systems, whether for email management, project coordination, or information retrieval, may yield disappointing results without careful organizational design.

The better performance of agent organizations points toward a future where AI systems mirror human organizational structures, with specialized agents taking on specific roles (analyst, coordinator, communicator) and reporting through defined channels. This approach aligns with emerging best practices in enterprise AI, where successful implementations often involve carefully designed agent architectures rather than undifferentiated AI deployments.

The Human-AI Collaboration Frontier

Perhaps the most important implication of this research is what it suggests about the future of human-AI collaboration in workplaces. If AI agents struggle to navigate the social and political dimensions of corporate communication—even in a dataset where we know the eventual outcomes—this highlights areas where human judgment remains essential.

The experiment suggests that the most effective workplace AI systems may be those designed to augment rather than replace human organizational intelligence. Rather than creating autonomous agents that navigate office politics independently, we might develop systems that help humans better understand organizational dynamics while leaving final decisions about social navigation to people.

Looking Ahead: Next Steps in Agent Research

While Mollick's post provides only a high-level summary, it points toward important directions for future research. Key questions include: What specific organizational structures work best for AI agents? How can we train agents to recognize subtle social cues in communication? And, perhaps most importantly, how do we create AI systems that can navigate ethical gray areas? That last question is especially pressing given the Enron dataset's documentation of corporate misconduct.

Future experiments might compare different organizational models for AI agents or test how agents perform in healthier corporate environments versus toxic ones like Enron's. Such research could help establish best practices for designing AI systems that complement rather than conflict with human organizational intelligence.

Source: Ethan Mollick's analysis of research using the Enron email archive to test AI agent performance in workplace navigation.