Key Numbers

- Annual Global Datacenter CapEx: $250-300 billion

- Inflation-Adjusted Manhattan Project Cost: $25-30 billion

- Annual Equivalent: 5-7 Manhattan Projects

Key Takeaways

- Global datacenter CapEx reaches $250-300B annually, which the source claim equates to 5-7 inflation-adjusted Manhattan Projects per year.

- The comparison puts a concrete number on the scale of infrastructure investment driving the AI boom.

What the Data Shows

A striking comparison from technology analyst @kimmonismus puts today's artificial intelligence infrastructure spending into historical perspective: even after adjusting for inflation, annual global datacenter capital expenditure now equals 5-7 Manhattan Projects per year.

The Manhattan Project, the World War II-era research and development program that produced the first nuclear weapons, cost approximately $25-30 billion in today's dollars. Current datacenter investment ranges from $250-300 billion annually. Taken at face value, those two ranges give a ratio of roughly 8:1 to 12:1 (about 10:1 at the midpoints) between modern AI infrastructure spending and one of history's most ambitious scientific endeavors, which is higher than the 5-7 multiple in the original claim.
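The ratio can be checked directly from the two ranges quoted in this section. The snippet below is a minimal sketch of that arithmetic; the helper name `ratio_range` is illustrative and the inputs are the article's figures, not independently verified data.

```python
def ratio_range(numerator, denominator):
    """Return (low, high) for the ratio of two (low, high) ranges."""
    num_lo, num_hi = numerator
    den_lo, den_hi = denominator
    # Smallest ratio: low numerator over high denominator, and vice versa.
    return num_lo / den_hi, num_hi / den_lo


# Figures as quoted in the article, in billions of US dollars.
datacenter_capex = (250.0, 300.0)  # annual global datacenter CapEx
manhattan_cost = (25.0, 30.0)      # inflation-adjusted Manhattan Project cost

low, high = ratio_range(datacenter_capex, manhattan_cost)
midpoint = (sum(datacenter_capex) / 2) / (sum(manhattan_cost) / 2)

print(f"Ratio range: {low:.1f}x to {high:.1f}x, midpoint {midpoint:.1f}x")
# prints "Ratio range: 8.3x to 12.0x, midpoint 10.0x"
```

Dividing the low end of one range by the high end of the other gives the widest plausible spread, which is why the result is a band rather than a single number.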

The Scale of AI Infrastructure Investment

This comparison isn't merely rhetorical—it quantifies the physical and financial reality of the AI boom. While the Manhattan Project concentrated resources on a single, geographically focused mission over several years, today's datacenter investment represents a distributed, continuous global effort to build the computational foundation for artificial intelligence.

The $250-300 billion figure encompasses:

- Hyperscale datacenter construction by cloud providers (AWS, Google Cloud, Microsoft Azure)

- Specialized AI infrastructure from companies like CoreWeave, Lambda Labs, and Crusoe Energy

- Enterprise datacenter upgrades for AI workloads

- Semiconductor manufacturing facilities producing AI chips

What This Means in Practice

For AI Practitioners: This scale of investment directly translates to available compute. The infrastructure being built today will determine what AI models can be trained tomorrow—larger models, more extensive training runs, and more sophisticated inference capabilities become economically feasible at this investment level.

For the Industry: The comparison highlights AI's transition from research project to industrial-scale enterprise. When infrastructure spending reaches multiples of history's most famous scientific project, the technology has clearly moved beyond experimental phases into mainstream economic activity.

Historical Context and Trajectory

The Manhattan Project comparison is particularly apt because both endeavors represent frontier technological development with significant geopolitical implications. Just as nuclear technology reshaped 20th-century power dynamics, artificial intelligence is positioned to redefine 21st-century economic and military landscapes.

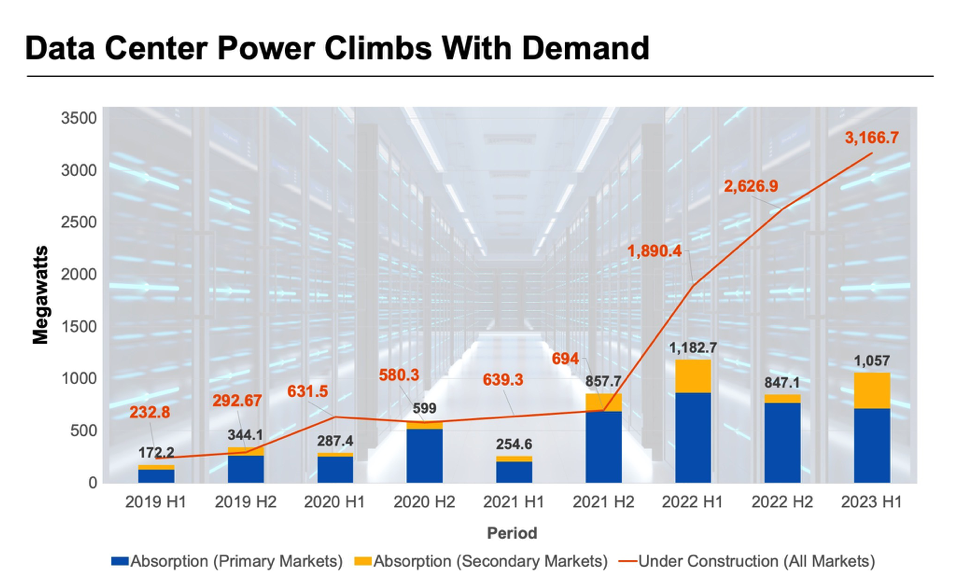

What's remarkable about the datacenter investment figure is its annual nature—this isn't a one-time expenditure but a recurring investment that shows no signs of slowing. Industry analysts project datacenter spending will continue growing at 15-20% annually through the end of the decade, potentially reaching $500 billion annually by 2030.
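As a rough check on that projection, the sketch below computes how many years of compound growth it takes to get from the $250-300 billion range to $500 billion at 15-20% per year. The article does not state a base year, so the starting values are assumptions, and the function names `years_to_reach` and `project` are illustrative rather than any analyst's actual model.

```python
import math


def years_to_reach(start, target, annual_growth):
    """Years of compound growth needed for `start` to grow to `target`."""
    return math.log(target / start) / math.log(1.0 + annual_growth)


def project(start, annual_growth, years):
    """Value of `start` after `years` of compound growth."""
    return start * (1.0 + annual_growth) ** years


target = 500.0  # USD billions, the figure quoted for 2030

# Assumed starting points and growth rates, taken from the article's ranges.
for start in (250.0, 300.0):
    for growth in (0.15, 0.20):
        years = years_to_reach(start, target, growth)
        print(f"start ${start:.0f}B at {growth:.0%}/yr: "
              f"{years:.1f} years to reach ${target:.0f}B")
```

Under these assumptions the target is reached in roughly 2.8 to 5.0 years, depending on the starting figure and the growth rate.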

The Competitive Landscape

This massive infrastructure investment creates significant barriers to entry and advantages for incumbents:

| Company | Estimated CapEx | Focus |
| --- | --- | --- |
| Microsoft | $50B+ annually | Azure AI infrastructure, OpenAI partnership |
| Amazon | $45B+ annually | AWS, custom AI chips (Trainium, Inferentia) |
| Google | $40B+ annually | TPU infrastructure, Gemini model development |
| Meta | $35B+ annually | Llama models, recommendation systems |
| Specialized AI clouds | $30B+ collectively | GPU-focused infrastructure (CoreWeave, Lambda) |

gentic.news Analysis

This datacenter spending comparison arrives at a critical inflection point in AI development. As we've covered in our analysis of Nvidia's Blackwell platform launch and Microsoft's reported $100 billion "Stargate" AI supercomputer project, infrastructure has become the primary bottleneck, and battleground, in advanced AI development.

The Manhattan Project analogy is particularly insightful because it captures both the scale and strategic importance of current AI investments. Just as nuclear capability defined great power status in the 20th century, AI capability—enabled by massive computational infrastructure—is emerging as the defining technological advantage of the 21st century.

What's often overlooked in these comparisons is the distributed nature of modern AI infrastructure. Unlike the centralized Manhattan Project, today's datacenter investment spans multiple continents, companies, and technological approaches. This creates a more resilient—but also more fragmented—AI ecosystem. The geopolitical implications are significant: while the U.S. currently leads in AI infrastructure investment, China's domestic datacenter buildout and semiconductor initiatives represent a parallel effort at similar scale.

For technical leaders, the key takeaway is that infrastructure constraints will continue shaping AI development for the foreseeable future. Model architectures, training methodologies, and deployment strategies must all account for the physical reality of distributed computation at unprecedented scale. The companies and research institutions that can most effectively navigate this infrastructure landscape will determine the next generation of AI capabilities.

Frequently Asked Questions

How does datacenter CapEx break down between AI and traditional computing?

While exact percentages vary by company, industry analysts estimate 60-70% of new datacenter investment is now AI-focused, up from 20-30% just three years ago. Traditional enterprise computing and cloud services still require significant infrastructure, but growth is overwhelmingly driven by AI workloads.

Is this level of investment sustainable long-term?

Current investment levels reflect expected returns from AI capabilities. If AI delivers projected productivity gains and new revenue streams, the investment is sustainable and likely to increase. However, if AI adoption slows or fails to generate expected returns, we could see a significant correction in datacenter spending within 2-3 years.

How does this compare to other major technological investments?

The Apollo space program cost approximately $280 billion in today's dollars over its entire lifespan (1960-1973). At $250-300 billion, annual datacenter investment is now roughly equal to the full cost of the Apollo program every year. The Internet infrastructure buildout of the 1990s-2000s reached similar annual percentages of GDP at its peak, but AI infrastructure is growing faster in absolute terms due to higher chip costs and energy requirements.

What are the environmental implications of this scale of datacenter construction?

AI datacenters consume significantly more power per square foot than traditional facilities. At current growth rates, AI could account for 4-6% of global electricity consumption by 2030. This has accelerated investment in nuclear, geothermal, and next-generation renewable energy projects specifically to power AI infrastructure, creating both environmental challenges and clean energy investment opportunities.