The Data-Driven Edge for Claude Code Users

A 30-day, real-world test comparing Claude Sonnet 4.5 and GPT-4o on identical autonomous agent workloads reveals concrete advantages that directly affect how you should use Claude Code. The test ran five agents handling content production, code generation, API integrations, and competitive research, and every output was scored on a simple "did it work?" basis.

Claude's Multi-File Code Dominance

For tasks involving 3+ interdependent files and a test suite, Claude Sonnet 4.5 significantly outperformed GPT-4o:

- Write Python script + tests + docs: 87% pass rate (Claude) vs 71% (GPT-4o)

- Refactor + maintain backward compatibility: 82% vs 68%

- API integration from scratch: 91% vs 74%

The key differentiator: Claude tends to read the existing codebase before writing, while GPT-4o more often generates standalone code that works in isolation but conflicts with the existing system. In one example, Claude caught that a utility function was already imported from a different module, while GPT-4o regenerated it inline, creating a duplicate that caused silent failures hours later.
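The duplicate-function failure described above is the kind of conflict a small static check can surface before it ships. The following is a minimal sketch, not the test's own tooling: it uses Python's standard `ast` module to flag top-level functions that redefine a name the same file already imports. The module path `utils.text` and the function names in the example are hypothetical.

```python
import ast


def shadowed_imports(source: str) -> set[str]:
    """Return names that a module both imports and redefines as a top-level function."""
    tree = ast.parse(source)
    imported: set[str] = set()
    defined: set[str] = set()
    # Only top-level statements are inspected; nested defs and methods are ignored.
    for node in tree.body:
        if isinstance(node, (ast.Import, ast.ImportFrom)):
            for alias in node.names:
                # `import a.b` binds `a`; `from m import f as g` binds `g`.
                imported.add((alias.asname or alias.name).split(".")[0])
        elif isinstance(node, (ast.FunctionDef, ast.AsyncFunctionDef)):
            defined.add(node.name)
    return imported & defined


# Hypothetical file in which generated code re-implements an imported helper.
example = '''
from utils.text import slugify

def slugify(title):
    return title.lower().replace(" ", "-")

def build_url(title):
    return "/posts/" + slugify(title)
'''

print(shadowed_imports(example))  # {'slugify'}
```

Because the source is only parsed and never executed, the check is safe to run on generated code whose imports may not resolve.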

What this means for your CLAUDE.md: When working on multi-file projects, these results suggest Claude Code keeps better track of your existing architecture than GPT-4o does. This is consistent with Claude Code's native multi-file editing capabilities and direct file system access.

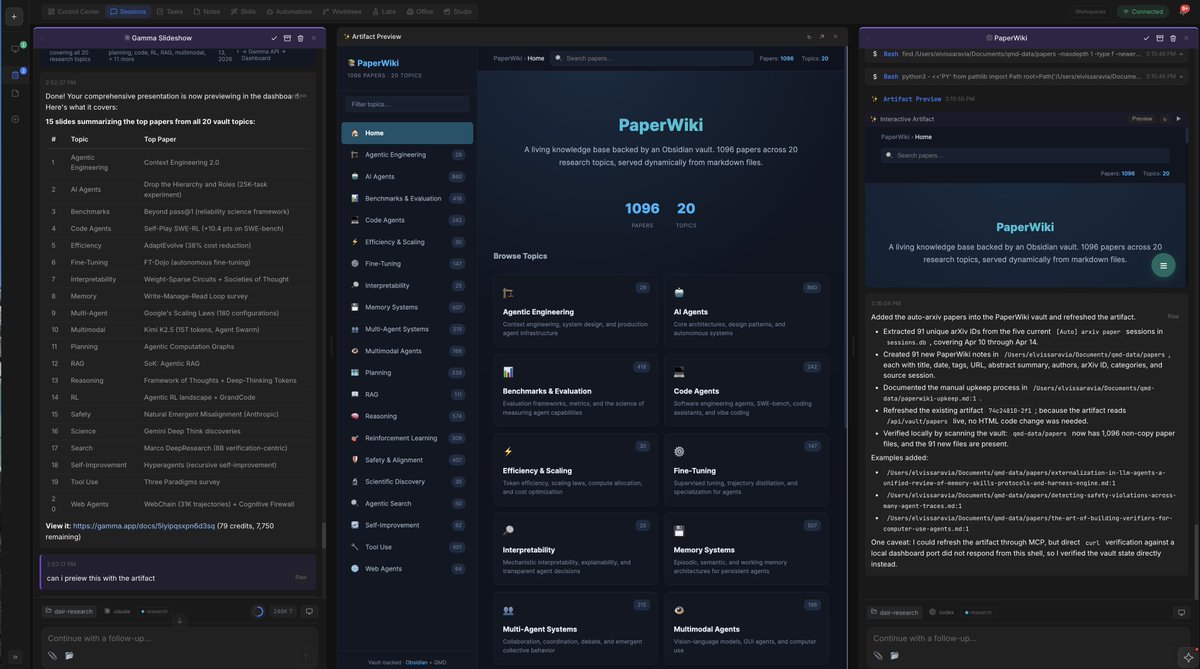

Long-Context Reliability Matters

For developers working on large codebases or accumulating context across a session, Claude's long-context handling held up better in this test:

- Claude: Maintains instruction following at 150K+ tokens, with rare instruction forgetting

- GPT-4o: Noticeable instruction degradation past ~100K tokens, with system prompts getting ignored

In one overnight run, a GPT-4o research agent forgot its output format specification at hour 3, producing unstructured outputs that couldn't be parsed by the next agent in the chain. For Claude Code users working on refactoring large projects or maintaining context across multiple editing sessions, this reliability difference is significant.
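A failure like the hour-3 format drift is easier to contain when each hand-off between agents is validated before the next agent consumes it. The sketch below assumes the agents exchange JSON objects; the required keys are hypothetical and stand in for whatever output format your own chain specifies.

```python
import json

# Hypothetical output contract for a research agent; replace with your own fields.
REQUIRED_KEYS = {"source", "summary", "confidence"}


def validate_handoff(raw: str) -> dict:
    """Parse one agent's output and raise ValueError if it breaks the contract."""
    try:
        payload = json.loads(raw)
    except json.JSONDecodeError as exc:
        raise ValueError(f"output is not valid JSON: {exc}") from exc
    if not isinstance(payload, dict):
        raise ValueError("expected a JSON object at the top level")
    missing = REQUIRED_KEYS - payload.keys()
    if missing:
        raise ValueError(f"missing required keys: {sorted(missing)}")
    return payload


good = '{"source": "example.com", "summary": "Pricing changed.", "confidence": 0.8}'
print(validate_handoff(good)["summary"])  # Pricing changed.
```

Raising at the hand-off point stops the chain at the first malformed output instead of letting unparseable text propagate overnight.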

Cost Reality: Caching Changes Everything

The common assumption that GPT-4o is cheaper doesn't hold up in practice due to Claude's prompt caching:

| Metric | Claude Sonnet 4.5 | GPT-4o |
| --- | --- | --- |
| Input per 1M tokens | $3.00 | $2.50 |
| Output per 1M tokens | $15.00 | $10.00 |
| Cache TTL (default) | 5 minutes | No native caching |
| Effective cost with caching | ~$0.30–0.60/M input | $2.50/M input |

Claude's prompt caching drops effective input cost by 80–90% for repeated context. In the test's orchestration loop, the same 200K-token context was passed 100+ times per day, which is about 20M input tokens per day. That comes to roughly $6/day at the cached rate of about $0.30/M, versus $50/day at the uncached $2.50/M rate.
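The daily figures follow from simple arithmetic on the rates quoted above. The sketch below reproduces them under simplifying assumptions: exactly 100 calls per day, every call after the first served from cache, and no charge counted for cache writes or misses. Real bills will differ somewhat.

```python
TOKENS_PER_CALL = 200_000  # context size reported in the test
CALLS_PER_DAY = 100        # lower bound of the "100+" figure


def daily_input_cost(rate_per_million: float) -> float:
    """Daily input cost in dollars at a given price per 1M input tokens."""
    tokens_per_day = TOKENS_PER_CALL * CALLS_PER_DAY  # 20,000,000 tokens
    return tokens_per_day / 1_000_000 * rate_per_million


rates = [
    ("cached, low end ($0.30/M)", 0.30),
    ("cached, high end ($0.60/M)", 0.60),
    ("uncached at $2.50/M", 2.50),
    ("uncached at $3.00/M", 3.00),
]
for label, rate in rates:
    print(f"{label}: ${daily_input_cost(rate):.2f}/day")
```

This gives $6 and $12 per day for the cached range, $50 at $2.50/M, and $60 at Claude's uncached $3.00/M list rate, so the $50 figure corresponds to the $2.50/M price.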

Critical update: Anthropic changed the default cache TTL from 1 hour to 5 minutes in March 2026. If you configured caching before that date and haven't verified, you may not be getting the savings you expect.
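One way to verify that caching is actually taking effect is to look at the token usage reported with each response. The sketch below assumes the usage object exposes `input_tokens`, `cache_creation_input_tokens`, and `cache_read_input_tokens`, as the Anthropic Messages API does at the time of writing; check the field names against the current documentation. The sample numbers are illustrative, not taken from the test.

```python
def cache_hit_ratio(usage: dict) -> float:
    """Fraction of a request's input tokens that were served from the prompt cache."""
    read = usage.get("cache_read_input_tokens") or 0         # served from cache
    written = usage.get("cache_creation_input_tokens") or 0  # newly written to cache
    fresh = usage.get("input_tokens") or 0                    # uncached input
    total = read + written + fresh
    return read / total if total else 0.0


# Illustrative usage payloads for a cold call and a warm call.
cold = {"input_tokens": 500, "cache_creation_input_tokens": 200_000, "cache_read_input_tokens": 0}
warm = {"input_tokens": 500, "cache_creation_input_tokens": 0, "cache_read_input_tokens": 200_000}

print(f"cold call: {cache_hit_ratio(cold):.1%}")
print(f"warm call: {cache_hit_ratio(warm):.1%}")
```

If calls spaced further apart than the TTL keep reporting a ratio near zero, the cache is expiring between calls and the expected savings are not being realized.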

Tool Use Reliability for Autonomous Workflows

For developers using Claude Code with MCP servers or custom tool integrations, reliability matters:

- Argument hallucination rate: Claude ~3% vs GPT-4o ~7%

- Error recovery: Claude usually re-attempts with corrected arguments and explains the fix, while GPT-4o is more likely to report the error back without attempting recovery

In an autonomous system where the model has to self-correct without human intervention, Claude's error recovery behavior is operationally significant.
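The recovery behavior described above can also be enforced at the orchestration layer instead of relying on the model alone. The following is a minimal sketch of that pattern: a failed tool call's error message is fed back so the next attempt can correct its arguments. `propose_args` and `run_tool` are hypothetical callables standing in for your model call and tool executor, and the stubs below simulate one bad attempt followed by a fix.

```python
from typing import Any, Callable, Optional


def call_with_recovery(
    propose_args: Callable[[Optional[str]], dict],
    run_tool: Callable[[dict], Any],
    max_attempts: int = 3,
) -> Any:
    """Run a tool, passing each failure message back to the argument proposer."""
    last_error: Optional[str] = None
    for _ in range(max_attempts):
        args = propose_args(last_error)  # the proposer sees the previous error, if any
        try:
            return run_tool(args)
        except (TypeError, ValueError, KeyError) as exc:
            last_error = f"{type(exc).__name__}: {exc}"
    raise RuntimeError(f"tool call failed after {max_attempts} attempts: {last_error}")


# Stub "model": sends a string ID first, then corrects it once it sees an error.
def fake_model(error: Optional[str]) -> dict:
    return {"user_id": "42"} if error is None else {"user_id": 42}


# Stub tool: rejects non-integer IDs.
def fake_tool(args: dict) -> str:
    if not isinstance(args["user_id"], int):
        raise TypeError("user_id must be an int")
    return f"fetched user {args['user_id']}"


print(call_with_recovery(fake_model, fake_tool))  # fetched user 42
```

Capping the attempts keeps a model that cannot fix its arguments from looping indefinitely in an unattended run.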

When to Use Which Model in Your Workflow

Based on the 30-day test results:

Use Claude Code (Sonnet 4.5) for:

- Multi-file code generation and refactoring

- Long-running editing sessions (>2 hours)

- Tasks where context accumulates across turns

- Projects where error recovery matters

Consider GPT-4o for:

- Structured data extraction at scale (Claude tends to add reasoning prose before JSON)

- Shorter-context tasks where caching isn't a factor

Use Claude Opus for:

- Architectural decisions and code reviews

- Anything where getting it wrong costs more than API cost

Try This Now in Your Workflow

For complex refactoring: Use Claude Code's multi-file editing with confidence that it will maintain awareness of your existing imports and dependencies.

Configure caching properly: Verify your cache TTL settings if you set them up before March 2026. The default changed from 1 hour to 5 minutes.

Structure your prompts for JSON: If you need structured output, be explicit: "Output ONLY valid JSON, no reasoning text before or after."

Leverage long context: Don't hesitate to provide extensive context about your codebase. In this test, Claude handled large contexts more reliably than GPT-4o.
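For the structured-output tip above, a defensive parser is a useful complement to an explicit prompt, since a model may still occasionally wrap its JSON in prose. The sketch below uses the standard library's `json.JSONDecoder.raw_decode` to pull the first valid JSON object out of a response; the sample response text is invented for illustration.

```python
import json


def extract_first_json_object(text: str) -> dict:
    """Return the first JSON object embedded in text, ignoring surrounding prose."""
    decoder = json.JSONDecoder()
    start = text.find("{")
    while start != -1:
        try:
            # raw_decode parses one JSON value beginning at `start` and ignores the rest.
            obj, _end = decoder.raw_decode(text, start)
            return obj
        except json.JSONDecodeError:
            # This brace did not open valid JSON; try the next one.
            start = text.find("{", start + 1)
    raise ValueError("no JSON object found in model output")


response = 'Let me think about this first.\n{"status": "ok", "items": 3}\nHope that helps!'
print(extract_first_json_object(response))  # {'status': 'ok', 'items': 3}
```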

The full test suite, evaluation scripts, and raw data are available in the whoff-automation repo.