A new research paper introduces Autogenesis, a protocol for building AI agents that identify their own capability gaps, generate candidate improvements, validate them through testing, and integrate the successful changes back into their operational framework, all without human intervention or model retraining.

The protocol aims at continual self-improvement in AI systems. It addresses a basic problem: static agents become outdated as deployment environments change and new tools emerge.

Key Takeaways

- A new paper introduces Autogenesis, a self-evolving agent protocol.

- Agents can assess their own shortcomings, propose and test improvements, and update their operational framework in a continuous loop.

What the Protocol Enables

Autogenesis creates a closed-loop system where agents:

- Self-Assess: Continuously monitor performance and identify specific capability deficiencies

- Generate Improvements: Propose modifications to their own architecture, tools, or decision logic

- Validate Changes: Test proposed improvements in controlled environments

- Integrate Successes: Safely incorporate validated improvements into their operational framework

The key innovation is that this entire process happens without retraining the underlying model and without human patching. The agent maintains its core capabilities while evolving its operational framework to adapt to changing requirements.

How Autogenesis Works

The protocol operates through a structured cycle of assessment, proposal, validation, and integration:

Assessment Phase: Agents analyze their recent performance against objectives, identifying specific failure modes or inefficiencies. This goes beyond error detection: it is capability gap analysis, which identifies what the agent needs to do but cannot yet do.

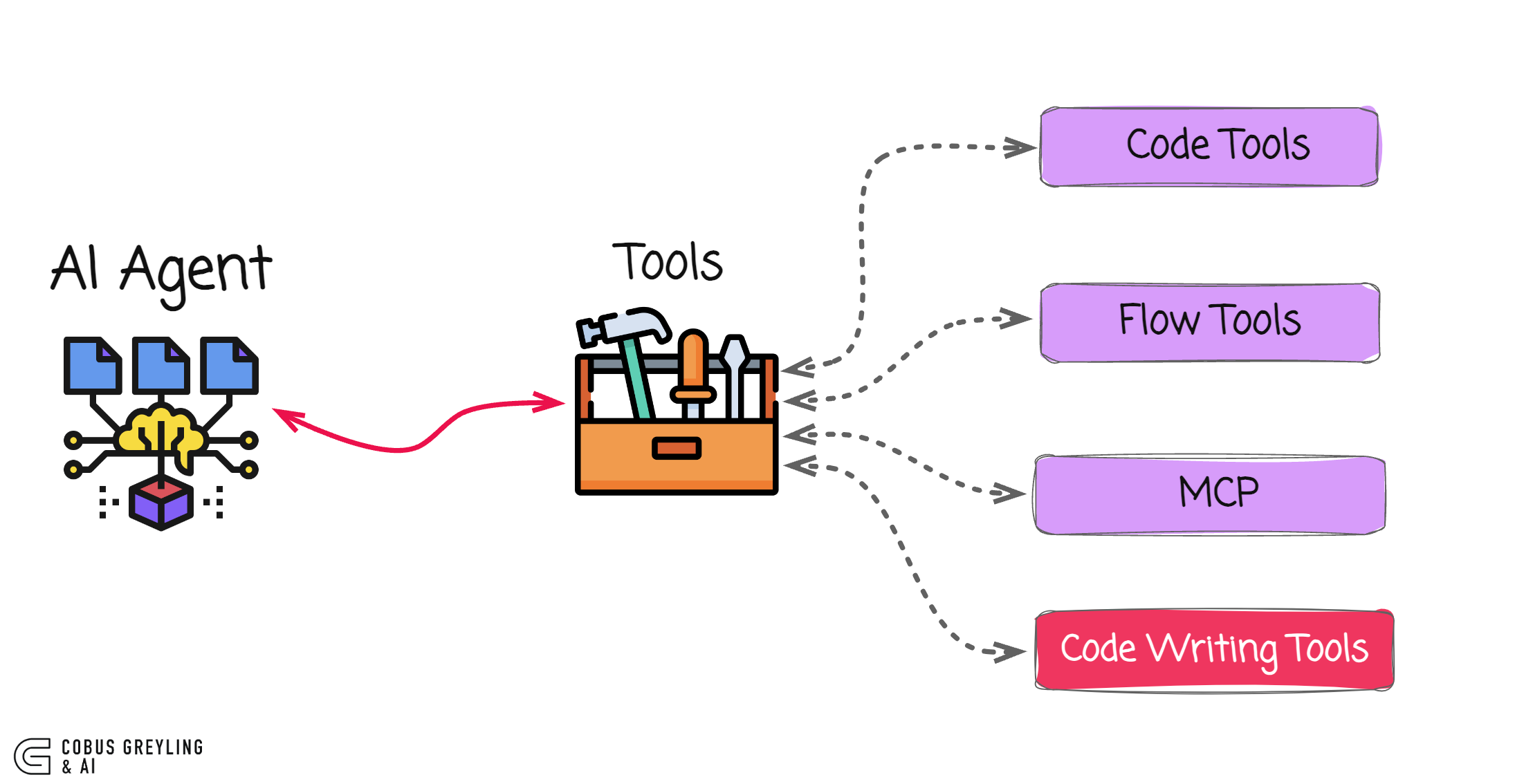
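To make the assessment phase concrete, here is a minimal Python sketch of capability gap analysis. It is an illustration under stated assumptions, not the paper's method: it assumes each logged task is tagged with the capability it exercised, and `TaskRecord`, `find_capability_gaps`, and both thresholds are hypothetical names and values.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass(frozen=True)
class TaskRecord:
    capability: str   # hypothetical label for the skill the task needed
    succeeded: bool


def find_capability_gaps(records, max_failure_rate=0.3, min_samples=3):
    """Return {capability: failure_rate} for capabilities that fail too often."""
    attempts = Counter(r.capability for r in records)
    failures = Counter(r.capability for r in records if not r.succeeded)
    gaps = {}
    for capability, n in attempts.items():
        rate = failures[capability] / n
        # Ignore capabilities with too few samples to judge reliably.
        if n >= min_samples and rate > max_failure_rate:
            gaps[capability] = rate
    return gaps


# Toy log: web search mostly works, PDF parsing mostly fails.
history = (
    [TaskRecord("web_search", True)] * 9
    + [TaskRecord("web_search", False)]
    + [TaskRecord("pdf_parsing", False)] * 3
    + [TaskRecord("pdf_parsing", True)]
)
gaps = find_capability_gaps(history)
print(gaps)
```

Grouping failures by capability rather than listing individual errors is what turns the log into a gap report: the output names a skill the agent lacks, which gives the next phase something specific to target.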

Proposal Generation: Based on identified gaps, the agent generates candidate improvements. These could include:

- New tool integrations

- Modified decision-making logic

- Architectural adjustments to workflow

- Additional verification steps
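One way to keep proposals testable is to represent each one as data that produces a candidate configuration without touching the live one. The sketch below assumes the agent's operational framework can be expressed as a plain dictionary; `ProposalKind`, `Proposal`, `apply_proposal`, and the config keys are hypothetical and do not come from the paper.

```python
from dataclasses import dataclass, field
from enum import Enum


class ProposalKind(Enum):
    # One member per proposal type listed above (names are illustrative).
    NEW_TOOL = "new_tool_integration"
    DECISION_LOGIC = "modified_decision_logic"
    WORKFLOW = "workflow_adjustment"
    VERIFICATION = "additional_verification_step"


@dataclass
class Proposal:
    kind: ProposalKind
    gap: str                  # capability gap this proposal targets
    rationale: str            # why the agent believes the change helps
    config_patch: dict = field(default_factory=dict)


def apply_proposal(config: dict, proposal: Proposal) -> dict:
    """Return a candidate config; the live config is left untouched."""
    candidate = dict(config)
    candidate.update(proposal.config_patch)
    return candidate


live_config = {"tools": ("web_search",), "verify_citations": False}
proposal = Proposal(
    kind=ProposalKind.VERIFICATION,
    gap="unsupported_claims",
    rationale="Answers cited sources that did not contain the claim.",
    config_patch={"verify_citations": True},
)
candidate_config = apply_proposal(live_config, proposal)
```

Because `apply_proposal` returns a copy, the candidate can be evaluated in isolation and simply discarded if validation fails.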

Validation Testing: Proposed changes undergo rigorous testing in sandboxed environments. The agent evaluates whether modifications actually address the identified gaps without introducing regressions or safety issues.
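A validation gate of this kind can be sketched as two checks: the candidate must solve more of the gap cases than the baseline, and it must not fail any case the baseline already passes. The code below is a simplified illustration that treats an agent as a function from input to output; `validate`, `passes`, and the toy agents are hypothetical, and a real sandbox would also isolate side effects, which this sketch does not attempt.

```python
from typing import Callable, Sequence

Case = tuple[str, str]          # (input, expected output)
Agent = Callable[[str], str]    # simplification: an agent maps input to output


def passes(agent: Agent, case: Case) -> bool:
    prompt, expected = case
    try:
        return agent(prompt) == expected
    except Exception:
        return False  # a crash counts as a failed case


def validate(baseline: Agent, candidate: Agent,
             gap_cases: Sequence[Case], regression_cases: Sequence[Case]) -> bool:
    """Accept only if the gap narrows and nothing that worked before breaks."""
    candidate_fixed = sum(passes(candidate, c) for c in gap_cases)
    baseline_fixed = sum(passes(baseline, c) for c in gap_cases)
    regressions = [c for c in regression_cases
                   if passes(baseline, c) and not passes(candidate, c)]
    return candidate_fixed > baseline_fixed and not regressions


# Toy agents: the gap is that the baseline does not strip whitespace.
baseline = lambda s: s.upper()
good_candidate = lambda s: s.strip().upper()
bad_candidate = lambda s: s.strip().lower()   # also breaks existing behavior

gap_cases = [("  hi ", "HI")]
regression_cases = [("ok", "OK")]

accepted = validate(baseline, good_candidate, gap_cases, regression_cases)
rejected = validate(baseline, bad_candidate, gap_cases, regression_cases)
```

Here `accepted` is `True` and `rejected` is `False`: the second candidate neither closes the gap nor preserves the behavior the baseline already had.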

Integration: Successfully validated changes are incorporated into the agent's operational framework. The protocol includes safeguards, such as the change tracking and rollback capabilities listed under Technical Architecture, so that integration does not destabilize the running system.
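Taken together, the four phases form a loop that can be sketched in a few lines. This is a schematic under assumptions rather than the paper's reference implementation: the phases are passed in as plain functions, the agent's framework is a dictionary, and `run_cycle`, `evolve`, and the retry example are all hypothetical.

```python
def run_cycle(config, assess, propose, validate):
    """One pass through assess -> propose -> validate -> integrate."""
    gap = assess(config)
    if gap is None:
        return config, False           # nothing to improve
    candidate = propose(config, gap)
    if not validate(config, candidate):
        return config, False           # rejected: keep the current config
    return candidate, True             # integrated


def evolve(config, assess, propose, validate, max_rounds=10):
    """Repeat the cycle until no change is integrated or the budget runs out."""
    history = [config]
    for _ in range(max_rounds):
        config, changed = run_cycle(config, assess, propose, validate)
        if not changed:
            break
        history.append(config)
    return config, history


# Toy phases: the agent needs at least 3 retries to cope with a flaky API.
REQUIRED_RETRIES = 3

def assess(cfg):
    return "flaky_api" if cfg["retries"] < REQUIRED_RETRIES else None

def propose(cfg, gap):
    return {**cfg, "retries": cfg["retries"] + 1}

def validate(old, new):
    return new["retries"] <= 5         # stand-in for sandboxed testing

final_config, history = evolve({"retries": 0}, assess, propose, validate)
```

The `max_rounds` budget and the early exit on a rejected candidate are deliberate: they bound how much the agent can change in one run, which matters for the stability concerns discussed later in this article.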

Technical Architecture

The paper presents Autogenesis as a protocol rather than a specific implementation, but an implementation typically involves these components:

- Meta-Cognitive Layer: Monitors agent performance and identifies capability gaps

- Improvement Generator: Creates candidate modifications based on gap analysis

- Validation Environment: Sandboxed testing framework for proposed changes

- Integration Manager: Safely incorporates validated improvements into the operational framework

- Version Control: Tracks changes and maintains rollback capabilities

The system operates at the agent framework level, not the underlying language model level. This means agents evolve their operational logic, tool usage, and decision processes rather than modifying their core reasoning capabilities.
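The integration manager and version control components can be illustrated with a small in-memory sketch. It assumes the operational framework is a dictionary and that a post-integration health check is available; `VersionStore`, `integrate`, and `is_healthy` are hypothetical names, and a production system would persist versions rather than hold them in a list.

```python
import copy
from typing import Callable


class VersionStore:
    """Keeps every integrated config so any change can be undone."""

    def __init__(self, initial: dict):
        self._versions = [copy.deepcopy(initial)]

    @property
    def current(self) -> dict:
        # Return a copy so callers cannot mutate stored history.
        return copy.deepcopy(self._versions[-1])

    def commit(self, config: dict) -> int:
        self._versions.append(copy.deepcopy(config))
        return len(self._versions) - 1   # version number of the new config

    def rollback(self) -> dict:
        if len(self._versions) == 1:
            raise RuntimeError("already at the initial version")
        self._versions.pop()
        return self.current


def integrate(store: VersionStore, candidate: dict,
              health_check: Callable[[dict], bool]) -> bool:
    """Commit the candidate, then undo it if the post-integration check fails."""
    store.commit(candidate)
    if health_check(store.current):
        return True
    store.rollback()
    return False


store = VersionStore({"tools": ["web_search"], "max_steps": 20})
is_healthy = lambda cfg: 0 < cfg["max_steps"] <= 50   # stand-in stability check

kept = integrate(store, {"tools": ["web_search", "pdf_reader"], "max_steps": 30}, is_healthy)
reverted = integrate(store, {"tools": ["web_search"], "max_steps": 500}, is_healthy)
```

After both calls, `store.current` still holds the first candidate: the second change was committed, failed the health check, and was rolled back. Note that everything stored here is framework-level state (tools, step limits), consistent with the point that the underlying model is never modified.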

Why This Matters for AI Development

Static AI agents face rapid obsolescence. As environments change, new tools emerge, and requirements evolve, fixed agents quickly lose effectiveness. Traditional approaches require:

- Manual analysis of failure modes

- Human-designed patches or updates

- Retraining or fine-tuning cycles

- Deployment and validation processes

Autogenesis automates this entire improvement loop, potentially enabling agents to:

- Adapt to changing API interfaces automatically

- Incorporate new data sources as they become available

- Optimize workflows based on performance feedback

- Develop specialized capabilities for specific tasks

Related Work in Self-Improving Systems

Autogenesis joins a growing body of research on self-improving AI systems:

- Meta-Harness: Focuses on meta-learning approaches where agents learn to adapt their learning strategies

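- Darwin Gödel Machine: A self-improving coding agent that iteratively rewrites its own code and keeps the variants that perform better on coding benchmarks, inspired by the theoretical Gödel machine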

- Recursive Self-Improvement: Systems that can modify their own learning algorithms

What distinguishes Autogenesis is its protocol-level approach—it provides a structured framework for continual improvement rather than focusing on specific algorithmic innovations.

Limitations and Considerations

The paper acknowledges several important challenges:

Safety: Autonomous self-modification introduces risks if not properly constrained. The validation phase must be robust enough to catch potentially harmful modifications.

Stability: Continuous changes could lead to system instability or unpredictable behavior over time.

Evaluation: Measuring the effectiveness of self-improvement is non-trivial. A change in behavior is not necessarily an improvement, so implementations need a way to tell whether the agent is actually getting better rather than just changing.

Scope: The protocol focuses on agent framework evolution, not fundamental model capability improvement. An agent can learn to use new tools more effectively but cannot fundamentally increase its reasoning capacity.
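On the evaluation point above, one simple way to separate improvement from mere change is a paired comparison: score the old and new agent on the same held-out tasks and test whether the new one wins more often than chance would explain. The sketch below uses an exact one-sided sign test, which is a standard statistical technique substituted here for illustration and not something the article attributes to the paper; the function names and the toy results are hypothetical.

```python
from math import comb


def paired_outcomes(baseline: list[bool], candidate: list[bool]) -> tuple[int, int]:
    """Count tasks the candidate newly solves (wins) and newly fails (losses)."""
    if len(baseline) != len(candidate):
        raise ValueError("both agents must be scored on the same tasks")
    wins = sum(c and not b for b, c in zip(baseline, candidate))
    losses = sum(b and not c for b, c in zip(baseline, candidate))
    return wins, losses


def sign_test_p_value(wins: int, losses: int) -> float:
    """One-sided exact sign test: chance of at least `wins` wins
    if wins and losses were equally likely."""
    n = wins + losses
    if n == 0:
        return 1.0   # no task changed outcome, so there is no evidence either way
    return sum(comb(n, k) for k in range(wins, n + 1)) / 2 ** n


# Toy pass/fail results on the same 12 held-out tasks.
baseline_results = [True, False, False, True, False, False,
                    True, False, False, False, True, False]
candidate_results = [True, True, True, True, True, False,
                     False, True, True, True, True, True]

wins, losses = paired_outcomes(baseline_results, candidate_results)
p_value = sign_test_p_value(wins, losses)
```

In this toy data the candidate gains seven tasks and loses one, giving a p-value of 9/256 (about 0.035). Tasks where both agents agree are ignored, so an agent that merely behaves differently on tasks it already solved gets no credit.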

Practical Implications

For AI developers, Autogenesis suggests a shift from building static agents to designing evolvable agent frameworks. Key considerations include:

- Designing agents with introspection capabilities from the start

- Building validation environments that can safely test proposed changes

- Implementing version control and rollback mechanisms

- Establishing metrics for measuring improvement rather than just performance

The protocol could be particularly valuable in domains where:

- Requirements change frequently

- New tools and APIs emerge regularly

- Agents operate in diverse environments

- Continuous optimization provides competitive advantage

gentic.news Analysis

Autogenesis arrives at a critical inflection point in agent development. Throughout 2025, we've tracked the industry's pivot from single-task agents to persistent, multi-session systems that maintain context across interactions. Companies like Cognition Labs (with Devin) and Magic have pushed the boundaries of what autonomous agents can accomplish, but their systems remain largely static between updates. The Autogenesis protocol directly addresses this limitation by providing a framework for continuous adaptation.

This work aligns with broader trends we've covered in our Agent Infrastructure series. In November 2025, we analyzed LangChain's "Auto-eval" framework, which allows agents to assess their own outputs against predefined criteria. Autogenesis extends this concept beyond evaluation to generation and integration of improvements, creating a complete self-improvement loop. The protocol-level approach is particularly interesting—rather than baking self-improvement into specific agents, it provides a reusable framework that could be implemented across different agent architectures.

Looking forward, the most immediate application of Autogenesis will likely be in enterprise agent systems where requirements evolve with business processes. We expect to see implementations focused on specific domains like customer support (adapting to new products), data analysis (incorporating new data sources), and workflow automation (optimizing processes based on performance metrics). The safety considerations highlighted in the paper will be paramount—early adopters will need robust validation environments to prevent undesirable emergent behaviors from self-modification.

Frequently Asked Questions

What problem does Autogenesis solve?

Autogenesis addresses the rapid obsolescence of static AI agents. As deployment environments change and new tools become available, fixed agents quickly lose effectiveness. The protocol enables agents to autonomously identify capability gaps, generate improvements, validate them, and integrate successful changes—creating agents that can adapt without human intervention or model retraining.

How is Autogenesis different from fine-tuning or retraining?

Fine-tuning and retraining modify the underlying language model's weights, changing its fundamental capabilities. Autogenesis operates at the agent framework level, modifying how the agent uses tools, makes decisions, and structures workflows without changing the core model. This allows for more targeted, rapid adaptations without the computational cost of retraining.

Is Autogenesis safe for production use?

The paper acknowledges safety as a primary concern. The protocol includes validation phases and integration safeguards, but production implementations would require additional safety measures. Early applications will likely be in controlled environments with human oversight before progressing to fully autonomous self-modification in critical systems.

What types of improvements can agents make with Autogenesis?

Agents can modify their operational framework, including tool usage patterns, decision logic, workflow structures, and verification steps. They cannot fundamentally increase their reasoning capacity or acquire entirely new capabilities beyond what their underlying model supports. The improvements focus on optimizing how existing capabilities are applied.

How does this compare to other self-improving AI systems?

Autogenesis takes a protocol-level approach rather than focusing on specific algorithms. It provides a structured framework for the complete self-improvement cycle (assess, propose, validate, integrate) that can be implemented across different agent architectures. This makes it more general than approaches like Meta-Harness (focused on meta-learning) and distinct from the Darwin Gödel Machine, which is a specific system in which a coding agent rewrites its own code and keeps the variants that score better on benchmarks.