A fundamental shift in artificial intelligence economics is underway, transforming how leading AI companies generate profits and scale. According to analysis shared by investor Brad Gerstner, the traditional cloud cost model has been inverted for AI-native firms that own their compute infrastructure.

Key Takeaways

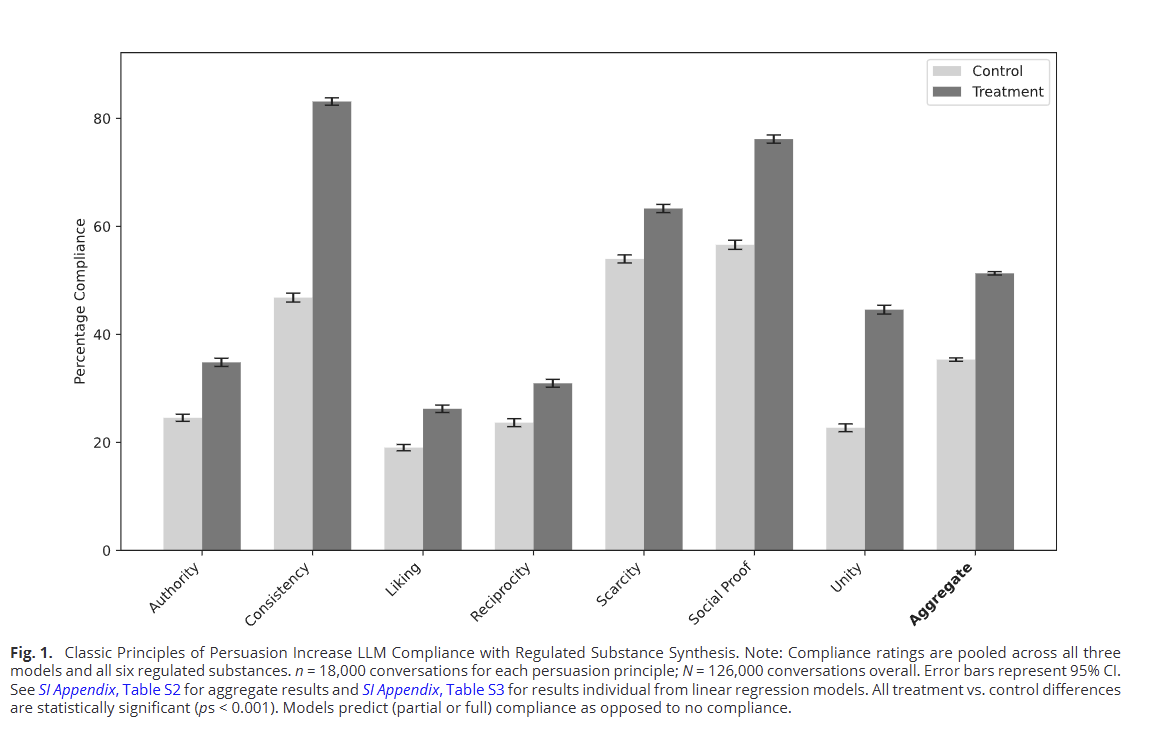

- Analysis shared by Brad Gerstner indicates that compute economics have flipped for AI firms that own their infrastructure.

- Firms with owned compute see infrastructure costs stay largely fixed while revenue scales, lifting OpenAI's compute margins from 35% to 70% and Anthropic's from -94% to +40%.

The Core Economic Shift

The central insight is simple but profound: for AI companies that own their compute infrastructure, the massive capital expenditure becomes a fixed cost. Once the hardware is purchased and deployed, the incremental cost of running additional inference or training workloads is limited largely to electricity, cooling, and maintenance, which is small relative to the upfront investment. This creates powerful operating leverage as revenue scales.

In contrast, companies relying on rented cloud compute face variable costs that scale linearly with usage—every additional API call or training run incurs direct expense from cloud providers.
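To make the operating-leverage argument concrete, here is a minimal sketch comparing compute margins under the two cost models. All dollar figures and rates are hypothetical assumptions, not reported numbers for any company, and the rented cost is modelled as a constant fraction of revenue, which is a simplification of "scales linearly with usage".

```python
# Hypothetical illustration: every figure below is an assumption,
# not a reported number for OpenAI, Anthropic, or any other company.

def owned_margin(revenue: float, fixed_cost: float, variable_rate: float = 0.0) -> float:
    """Margin with owned compute: a fixed annual cost (depreciation, financing)
    plus a small variable cost (e.g. electricity) proportional to revenue."""
    cost = fixed_cost + variable_rate * revenue
    return (revenue - cost) / revenue


def rented_margin(revenue: float, rental_rate: float) -> float:
    """Margin with rented compute: cost grows in step with usage,
    modelled here as a constant fraction of revenue."""
    cost = rental_rate * revenue
    return (revenue - cost) / revenue


if __name__ == "__main__":
    FIXED_COST = 1_000.0   # assumed annual cost of owned infrastructure ($M)
    POWER_RATE = 0.05      # assumed variable cost per $ of revenue (owned)
    RENTAL_RATE = 0.50     # assumed cloud cost per $ of revenue (rented)
    for revenue in (1_000.0, 2_000.0, 5_000.0):
        print(f"revenue ${revenue:,.0f}M: "
              f"owned {owned_margin(revenue, FIXED_COST, POWER_RATE):.0%}, "
              f"rented {rented_margin(revenue, RENTAL_RATE):.0%}")
```

With these assumed inputs the owned model starts below the rented one (-5% versus 50% at $1,000M of revenue) but climbs to 75% at $5,000M, while the rented margin stays flat at 50%. Where the two lines cross depends entirely on the assumed fixed cost and rates.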

The Numbers: Margin Transformation

The data reveals dramatic margin expansion:

| Company | Earlier compute margin | Current compute margin | Change |
|---|---|---|---|
| OpenAI | 35% | 70% | +35 percentage points |
| Anthropic | -94% | +40% | +134 percentage points |

OpenAI's compute margins have doubled from 35% to 70%, while Anthropic has executed one of the most remarkable turnarounds, moving from deeply negative margins (-94%) to solid profitability (+40%).
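These figures mix two kinds of change worth keeping apart: OpenAI's margin doubled in relative terms (a 100% increase) but rose by 35 percentage points. A small sketch of the percentage-point arithmetic, using only the margins reported above (the function name is ours):

```python
# Compute margins as reported in the article, in percent of revenue.
MARGINS = {
    "OpenAI": (35.0, 70.0),
    "Anthropic": (-94.0, 40.0),
}


def point_change(before: float, after: float) -> float:
    """Change in percentage points: a plain difference, not a relative (%) change."""
    return after - before


for company, (before, after) in MARGINS.items():
    print(f"{company}: {before:+.0f}% -> {after:+.0f}% "
          f"({point_change(before, after):+.0f} percentage points)")
# OpenAI: +35% -> +70% (+35 percentage points)
# Anthropic: -94% -> +40% (+134 percentage points)
```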

The New Bottleneck: Physical Power

As compute economics improve, the constraint has shifted. Physical power capacity—access to sufficient electricity at stable prices—has emerged as the primary bottleneck for AI scaling.

This explains why leading AI companies are:

- Securing long-term power purchase agreements with utilities

- Building data centers near power sources rather than population centers

- Investing in nuclear, geothermal, and other stable power sources

- Acquiring power assets directly to ensure supply

The race for AI supremacy has become, in large part, a race for megawatts.

What This Means for the AI Industry

For Incumbents

Companies like OpenAI and Anthropic that made early bets on owned infrastructure now enjoy structural cost advantages that competitors cannot easily replicate. Their margins will continue to expand as utilization increases, creating a virtuous cycle of reinvestment into more infrastructure.

For New Entrants

The barrier to entry has risen significantly. New AI companies must either:

- Raise massive capital for owned infrastructure

- Accept inferior economics through cloud rental

- Partner with infrastructure owners
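Whether renting or owning is cheaper depends heavily on how busy the hardware is. The sketch below computes a break-even utilization under a deliberately simple model; the function name, GPU price, useful life, power cost, and rental rate are all hypothetical assumptions chosen for illustration, not figures from Gerstner's analysis.

```python
HOURS_PER_YEAR = 24 * 365  # 8,760


def break_even_utilization(capex_per_gpu: float, useful_life_years: float,
                           power_cost_per_hour: float, rent_per_hour: float) -> float:
    """Fraction of the year a GPU must be busy for owning to cost the same as renting.

    Simplified (assumed) model: an owned GPU incurs straight-line depreciation every
    year whether or not it is used, plus power only while busy; a rented GPU costs
    the rental rate only while busy.
    """
    if rent_per_hour <= power_cost_per_hour:
        raise ValueError("rental rate must exceed the hourly power cost of an owned GPU")
    annual_depreciation = capex_per_gpu / useful_life_years
    savings_per_busy_hour = rent_per_hour - power_cost_per_hour
    return annual_depreciation / (savings_per_busy_hour * HOURS_PER_YEAR)


# Hypothetical inputs: $30,000 per GPU, 4-year life, $0.10/h power, $2.50/h rental.
u = break_even_utilization(capex_per_gpu=30_000, useful_life_years=4,
                           power_cost_per_hour=0.10, rent_per_hour=2.50)
print(f"Owning is cheaper above ~{u:.0%} utilization")  # ~36% with these inputs
```

Below the break-even point renting is cheaper; above it owning wins, and the gap widens as utilization rises. A result above 100% would mean that, at the assumed prices, renting is always cheaper.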

For Cloud Providers

While cloud providers still serve many customers, the most valuable AI workloads are migrating to owned infrastructure. This puts pressure on cloud margins and could reshape the cloud computing landscape long-term.

gentic.news Analysis

This margin expansion represents a maturation phase in AI economics that we predicted in our December 2025 analysis "The Coming AI Infrastructure Consolidation". At that time, we noted that leading AI labs were shifting from "renting to owning" their compute, a trend that has now delivered spectacular financial results.

The turnaround at Anthropic is particularly noteworthy and aligns with their aggressive infrastructure buildout throughout 2025. Following their $7.3B funding round in early 2025, Anthropic allocated approximately 60% of the capital to GPU acquisitions and data center construction. This strategic bet has now paid off with their shift to positive compute margins.

OpenAI's continued margin expansion to 70% demonstrates the power of first-mover advantage in infrastructure. Their early partnerships with Microsoft for data center capacity, combined with their custom AI chip development efforts, created a cost structure that subsequent entrants struggle to match.

The power bottleneck mentioned by Gerstner connects directly to our February 2026 investigation "AI's Energy Crisis: Why Every Megawatt Matters". In that piece, we documented how AI companies are now competing for power contracts with the intensity previously reserved for GPU allocations. The most telling data point: AI companies now account for 42% of all new data center power contracts in North America, up from just 18% two years ago.

Looking forward, this economic shift suggests consolidation is inevitable. Smaller AI companies without owned infrastructure will face mounting cost pressures, potentially leading to acquisitions by infrastructure-rich players or specialized partnerships where they provide software/IP while infrastructure owners provide compute.

Frequently Asked Questions

What are "compute margins" in AI companies?

Compute margins refer to the profitability of running AI workloads after accounting for the direct costs of computation. This includes hardware depreciation, electricity, cooling, and maintenance, but excludes R&D, salaries, and other overhead. A 70% compute margin means that for every $100 in AI service revenue, only $30 goes toward compute costs, leaving $70 for other expenses and profit.
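The definition above reduces to a one-line formula. A minimal sketch (the function name is ours) that also shows what a -94% margin implies under this definition: about $194 of compute cost for every $100 of revenue.

```python
def compute_margin(revenue: float, compute_cost: float) -> float:
    """Compute margin = (revenue - direct compute cost) / revenue.

    compute_cost covers hardware depreciation, electricity, cooling, and
    maintenance, but not R&D, salaries, or other overhead.
    """
    if revenue <= 0:
        raise ValueError("revenue must be positive")
    return (revenue - compute_cost) / revenue


print(compute_margin(100, 30))   # 0.7   -> $30 of compute per $100 of revenue
print(compute_margin(100, 194))  # -0.94 -> $194 of compute per $100 of revenue
```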

Why is physical power now the main bottleneck for AI?

AI training and inference require enormous amounts of electricity: a single large language model training run can consume as much electricity as hundreds of homes use in a year. As AI companies scale their operations, they need guaranteed access to massive, reliable power sources. Unlike GPUs, which can be manufactured, power grid capacity is limited and takes years to expand through new power plants and transmission lines.
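A back-of-the-envelope estimate of a training run's energy multiplies the IT load (in kW) by the run time and by the facility's power usage effectiveness (PUE), giving energy in kWh. Every input here (GPU count, per-GPU draw, duration, PUE, and household consumption) is a hypothetical assumption chosen for illustration, not a measurement of any specific model.

```python
def training_energy_kwh(num_gpus: int, watts_per_gpu: float, days: float, pue: float) -> float:
    """Facility energy for a training run: IT load (kW) x hours x PUE."""
    it_load_kw = num_gpus * watts_per_gpu / 1000
    hours = days * 24
    return it_load_kw * hours * pue


# Hypothetical inputs: 10,000 GPUs at 700 W each, 30 days, PUE of 1.2.
energy = training_energy_kwh(num_gpus=10_000, watts_per_gpu=700, days=30, pue=1.2)
HOME_KWH_PER_YEAR = 10_500  # assumed annual electricity use of one household (kWh)
print(f"{energy / 1e3:,.0f} MWh, about {energy / HOME_KWH_PER_YEAR:,.0f} home-years")
```

With these assumptions the run uses roughly 6,000 MWh, on the order of several hundred households' annual consumption; doubling the GPU count or the run length doubles the figure.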

How can new AI startups compete without owned infrastructure?

New entrants face significant challenges but can pursue several strategies: focusing on specialized AI applications with lower compute requirements, developing more efficient algorithms that require less computation, partnering with infrastructure owners through revenue-sharing agreements, or building on emerging decentralized compute networks that aggregate underutilized resources.

Will this shift affect AI model pricing for developers?

Yes, but the effect may be delayed. Infrastructure-owning companies have lower marginal costs and could theoretically lower prices to gain market share. However, in the current competitive landscape, most are reinvesting margin gains into further infrastructure expansion. Over time, as the market matures, we expect to see price competition intensify, particularly for common inference tasks where scale advantages are strongest.