A notable shift is occurring among expert forecasters tracking artificial general intelligence (AGI). According to an update shared by AI researcher Rohan Paul, forecasters have significantly revised their timelines for key AGI milestones after incorporating recent model progress into their predictions.

What Happened

The core update is straightforward: forecasters have pulled their predicted timelines for AGI forward. The median prediction for when AGI might arrive has reportedly shifted from around 2032 to a window between 2029 and 2030. This represents a forward revision of approximately 2-3 years.

This adjustment isn't based on a single breakthrough but rather on the cumulative, rapid progress observed across multiple AI capabilities over the past 12-18 months. Forecasters are updating their models in response to what they're seeing in real-time development.

Context: What Are They Forecasting?

These predictions typically come from structured forecasting platforms like Metaculus or from surveys of AI researchers (like the one conducted by AI Impacts). They don't predict a single "flip-the-switch" moment for AGI. Instead, they forecast when AI systems might achieve specific, measurable milestones that serve as operational proxies for general intelligence.

Common milestones include:

- High-Level Machine Intelligence (HLMI): When unaided machines can accomplish every task better and more cheaply than human workers

- Full Automation of Labor (FAOL): When all human jobs are automatable

- Transformative AI: When AI precipitates changes comparable to the industrial revolution

The reported timeline shift suggests forecasters believe we're progressing toward these milestones faster than previously expected.

What Prompted the Revision?

While the source doesn't specify exact triggers, several recent developments likely contributed:

Reasoning Breakthroughs: Models like OpenAI's o1 series, Google's Gemini 2.0, and Anthropic's Claude 3.5 Sonnet have demonstrated substantially improved reasoning capabilities, particularly in mathematics, coding, and scientific reasoning benchmarks.

Tool Use & Agentic Behavior: Systems are becoming increasingly proficient at using tools, browsing the web, and executing multi-step tasks with less human intervention.

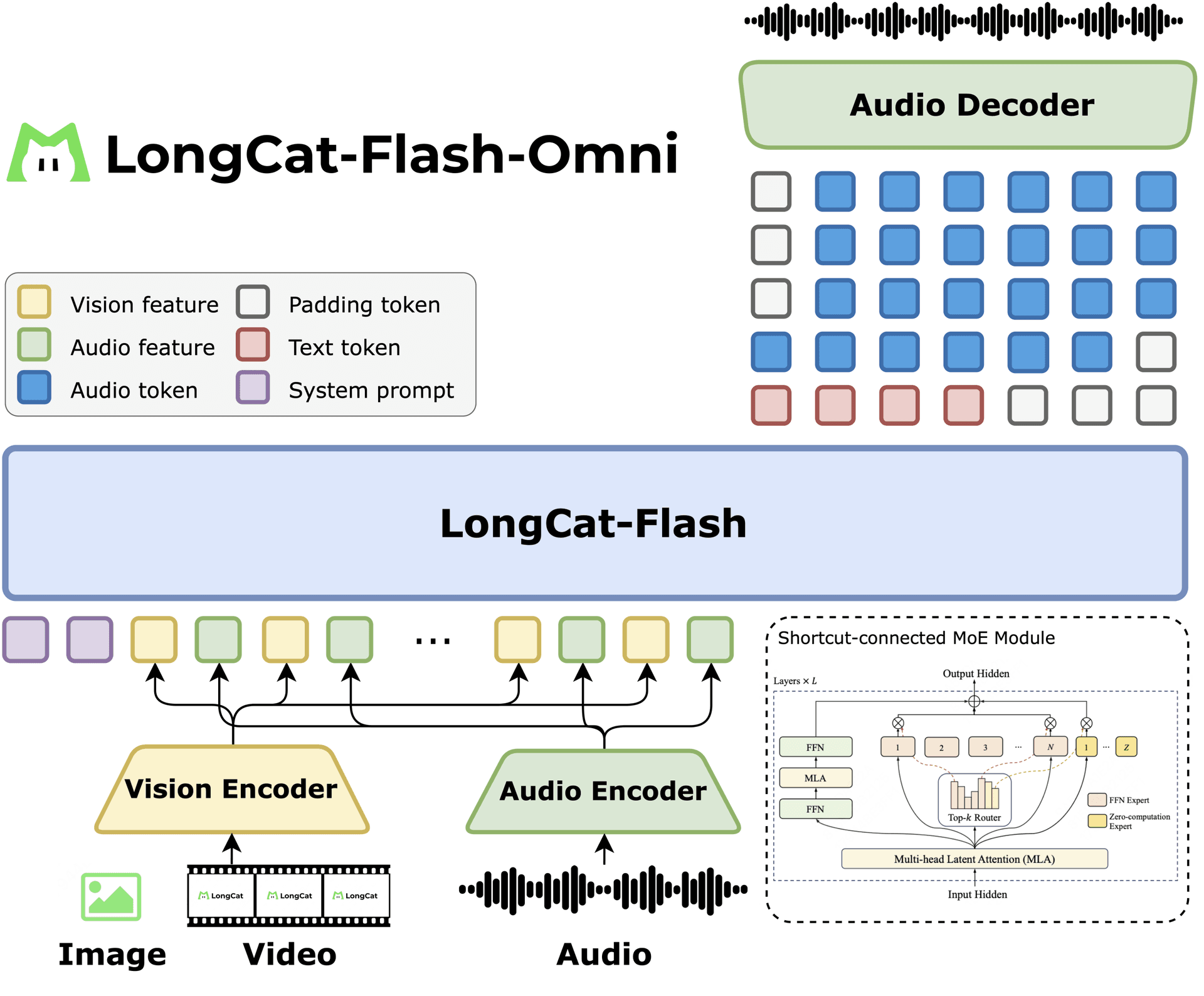

Multimodal Integration: The seamless combination of vision, language, and audio understanding in single models creates more general-purpose systems.

Cost Reductions: Dramatic decreases in training and inference costs enable more experimentation and capability scaling.

Forecasters often frame these revisions as Bayesian updating: systematically adjusting probabilities in light of new evidence. The recent pace of progress appears to be strong enough evidence to warrant significant timeline adjustments.
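To make the idea concrete, here is a minimal Python sketch of a single Bayesian update over three arrival-year buckets. The buckets, prior, and likelihoods are hypothetical numbers chosen for illustration, not figures from Metaculus, AI Impacts, or any other source.

```python
# Minimal sketch of one Bayesian update over AGI arrival-year buckets.
# All numbers are hypothetical and chosen only for illustration.

def normalize(weights: dict[str, float]) -> dict[str, float]:
    """Rescale weights so they sum to 1."""
    total = sum(weights.values())
    return {k: v / total for k, v in weights.items()}

def bayes_update(prior: dict[str, float], likelihood: dict[str, float]) -> dict[str, float]:
    """Posterior is proportional to prior * likelihood for each hypothesis."""
    unnormalized = {h: prior[h] * likelihood[h] for h in prior}
    return normalize(unnormalized)

# Hypothetical prior belief about when AGI arrives.
prior = {"before 2030": 0.25, "2030-2034": 0.35, "2035 or later": 0.40}

# Hypothetical likelihood: how probable the observed rapid progress would be
# under each hypothesis (fast progress is more expected if AGI is near).
likelihood = {"before 2030": 0.8, "2030-2034": 0.5, "2035 or later": 0.2}

posterior = bayes_update(prior, likelihood)
for bucket, p in posterior.items():
    print(f"{bucket}: prior {prior[bucket]:.2f} -> posterior {p:.2f}")
```

With these made-up inputs, probability mass shifts toward the earliest bucket, which is the qualitative pattern a forward timeline revision reflects.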

The Forecasting Landscape

It's important to note that forecasting AGI timelines remains notoriously difficult, and estimates vary widely. Different forecasting platforms and surveys show different median predictions:

| Source | Earlier median | Updated median | Change |
|---|---|---|---|
| Metaculus (HLMI) | ~2032 | ~2029-2030 | ~2-3 years forward |
| AI Impacts Survey | 2047-2060 (varies by question) | Likely similar forward revision | TBD |

Even with this revision, substantial uncertainty remains. Prediction intervals typically span decades, and individual expert opinions vary widely, from "never" to "within 5 years."

Why Timeline Updates Matter

These forecasting updates matter because they influence:

- Research priorities: Organizations may accelerate safety research if AGI seems nearer

- Investment decisions: Venture capital and corporate R&D allocations respond to timeline expectations

- Policy discussions: Governments consider regulatory frameworks based on expected development pace

- Public perception: Media narratives about AI's trajectory affect societal preparedness

While forecasting is imperfect, systematic updates based on observable progress provide one of the few quantitative approaches to estimating when transformative AI might arrive.

gentic.news Analysis

This timeline revision aligns with a pattern we've been tracking across multiple indicators. In our December 2025 analysis of the "AI Capability Scaling Laws," we noted that reasoning benchmarks were improving at rates that exceeded even optimistic projections from 2024. The forward revision in AGI forecasts represents the quantitative manifestation of what practitioners have been observing qualitatively: the pace isn't slowing down.

This development connects directly to several entities in our knowledge graph that have been trending upward in activity. OpenAI's o1 model family (first discussed in our October 2025 coverage) demonstrated reasoning leaps that likely contributed to these forecast updates. Similarly, Google's Gemini 2.0 Flash Thinking model (covered November 2025) showed how chain-of-thought reasoning could be dramatically accelerated. These aren't isolated improvements but rather evidence of architectural innovations that generalize across domains.

The timeline shift also has implications for the AI safety research community, particularly organizations like the Alignment Research Center and Anthropic's Long-Term Benefit Trust, which structure their work around expected timelines. A 2-3 year forward revision compresses their available runway for developing robust alignment techniques.

Notably, this update comes amid increased activity from China's AI research institutes (trending 📈 in our KG), suggesting global competition may be accelerating progress. When multiple major players push capabilities forward in quick succession, as with the releases of Claude 3.5, Gemini 2.0, DeepSeek-R1, and GPT-5 between mid-2024 and 2025, forecasters reasonably update their priors.

However, a crucial caveat: forecasting AGI involves predicting not just continuous improvement but potential discontinuous leaps. The current revision seems to assume continued linear (or slightly superlinear) progress within existing paradigms. A true architectural breakthrough beyond the transformer-plus-reasoning-engine pattern could pull timelines further forward, while unexpected bottlenecks could push them back.

Frequently Asked Questions

What exactly do forecasters mean by "AGI" in these predictions?

Forecasters typically use operational definitions rather than philosophical ones. Common benchmarks include: when unaided machines can accomplish every task better and more cheaply than human workers (High-Level Machine Intelligence), when all human jobs can be automated (Full Automation of Labor), or when AI systems can substantially accelerate scientific research. These are measurable milestones that, while not capturing every aspect of "general intelligence," serve as practical indicators.

How reliable are these AI timeline forecasts?

They have mixed reliability. Forecasting technological breakthroughs is inherently difficult, and past predictions have often been wrong in both directions. However, structured forecasting platforms like Metaculus have demonstrated better-than-chance accuracy on many questions by aggregating many predictions and using Bayesian updating. The value isn't in the precise year but in the direction and magnitude of revisions based on new evidence.
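One common way to check whether probabilistic forecasts beat chance is the Brier score: the mean squared difference between each stated probability and the 0/1 outcome, where lower is better and always answering 50% scores 0.25. The sketch below is illustrative only; the forecasts and outcomes are invented, and it does not describe how Metaculus computes its own track record.

```python
# Minimal sketch: Brier score for binary forecasts (lower is better).
# The forecasts and outcomes below are hypothetical.

def brier_score(probabilities: list[float], outcomes: list[int]) -> float:
    """Mean squared difference between forecast probability and outcome (0 or 1)."""
    if len(probabilities) != len(outcomes):
        raise ValueError("probabilities and outcomes must have the same length")
    return sum((p - o) ** 2 for p, o in zip(probabilities, outcomes)) / len(outcomes)

outcomes = [1, 0, 1, 1, 0]               # what actually happened
forecaster = [0.9, 0.2, 0.7, 0.8, 0.1]   # a hypothetical, fairly well-calibrated forecaster
coin_flip = [0.5] * len(outcomes)        # no-skill baseline

print(brier_score(forecaster, outcomes))
print(brier_score(coin_flip, outcomes))  # → 0.25
```

A score below the 0.25 baseline is what "better than chance" means in this framing; it says nothing about questions, like AGI timing, that have not yet resolved.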

What recent model progress most influenced these timeline changes?

While the source doesn't specify, several capabilities likely contributed: (1) Substantial improvements on reasoning benchmarks like MATH, GPQA, and ARC-AGI; (2) More reliable tool use and agentic behavior in systems like OpenAI's o1 and Anthropic's Claude 3.5; (3) Reduced costs enabling larger-scale experimentation; and (4) Multimodal systems that combine understanding across text, image, audio, and video domains.

Does this mean AGI will definitely arrive by 2029-2030?

No. These are median predictions from forecasting communities, meaning roughly half of forecasters expect it earlier and half expect it later (or never). Prediction intervals are wide, often spanning decades. The key takeaway isn't the specific year but the direction of the revision: forecasters now expect AGI sooner based on recent progress.
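How a single median can coexist with a decades-wide interval is easy to see with a small, invented set of individual forecasts. This sketch assumes a simple unweighted median, not the aggregation method any particular platform uses, and relies only on Python's standard `statistics` module.

```python
import statistics

# Hypothetical individual forecasts of the AGI arrival year (illustrative only).
forecasts = [2026, 2027, 2028, 2029, 2029, 2030, 2031, 2033, 2036, 2040, 2050, 2075]

# Unweighted median of all forecasts.
median_year = statistics.median(forecasts)

# quantiles(n=10) returns the 9 decile cut points; the first and last
# bound an approximate 10th-90th percentile (80%) interval.
deciles = statistics.quantiles(forecasts, n=10)
low, high = deciles[0], deciles[-1]

# Count forecasts strictly before and after the median.
earlier = sum(1 for year in forecasts if year < median_year)
later = sum(1 for year in forecasts if year > median_year)

print(f"median: {median_year}, 80% interval: {low:.0f}-{high:.0f}")
print(f"{earlier} forecasts earlier, {later} later")
```

Half of these made-up forecasts fall on each side of the median, yet the 80% interval still spans roughly four decades because a few forecasters expect AGI much later.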

How should companies and researchers respond to these revised timelines?

Organizations should: (1) Review their AI strategy and roadmap assumptions, (2) Accelerate investments in safety and alignment research if timelines have compressed, (3) Consider the implications for product development cycles and competitive positioning, and (4) Monitor these forecasts as leading indicators rather than treating them as definitive predictions.