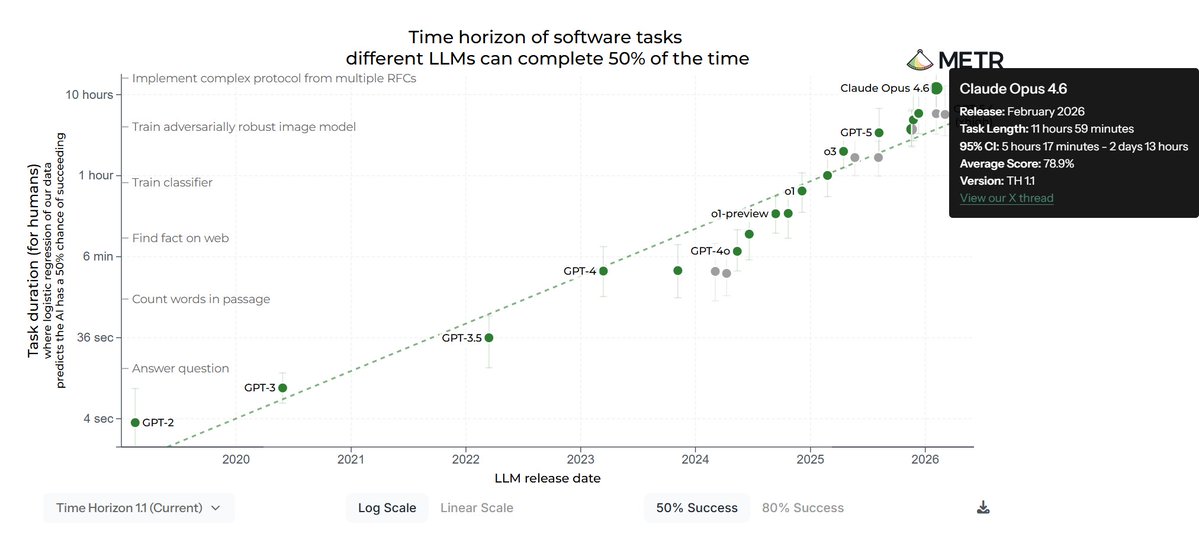

New analysis of leading AI models points to significant advances in their ability to handle complex, multi-step tasks that would take human experts hours to complete. According to recent estimates of the time-horizon metric developed by METR (Model Evaluation and Threat Research), both Anthropic's Claude Opus 4.6 and OpenAI's GPT 5.3 Codex are estimated to reach time horizons of roughly 3-4 hours at a 50% success rate.

Understanding the METR Time Horizon Benchmark

The METR benchmark represents a notable evolution in AI evaluation. Rather than scoring simple question-answer formats, it asks how long a task would take a competent human expert to complete, then reports the task length at which an AI system succeeds in a single attempt with a given probability. As described in the original analysis on LessWrong, "these represent the time it would take for a competent human expert to complete a task which the model has a 50% or 80% chance of one-shotting."

Unlike traditional benchmarks that measure accuracy on discrete problems, METR evaluates AI performance across "a diverse set of multi-step software and reasoning tasks" with varying complexity levels. The benchmark interpolates performance data to estimate at what task duration (measured in human expert time) a model would achieve specific success rates.
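The interpolation step can be illustrated with a small sketch. It assumes that success probability follows a logistic curve in the base-2 logarithm of human expert time, which is a modeling assumption made here for illustration and is not confirmed by the source analysis. The task durations and outcomes are invented toy data, and the function names `fit_logistic` and `time_horizon` are hypothetical.

```python
import math

def sigmoid(z: float) -> float:
    return 1.0 / (1.0 + math.exp(-z))

def fit_logistic(log2_minutes, outcomes, lr=0.1, steps=5000):
    """Fit P(success) = sigmoid(intercept + slope * x) by gradient descent.

    x is log2 of the human expert time in minutes. Features are centered
    during fitting for stability, then the intercept is un-centered.
    """
    n = len(log2_minutes)
    mean_x = sum(log2_minutes) / n
    xs = [x - mean_x for x in log2_minutes]
    a, b = 0.0, 0.0
    for _ in range(steps):
        grad_a = grad_b = 0.0
        for x, y in zip(xs, outcomes):
            err = sigmoid(a + b * x) - y  # gradient of log loss w.r.t. the logit
            grad_a += err
            grad_b += err * x
        a -= lr * grad_a / n
        b -= lr * grad_b / n
    return a - b * mean_x, b

def time_horizon(intercept: float, slope: float, p: float) -> float:
    """Task length in minutes at which the fitted success probability equals p."""
    if not 0.0 < p < 1.0:
        raise ValueError("p must be strictly between 0 and 1")
    if slope == 0.0:
        raise ValueError("slope must be non-zero")
    target_logit = math.log(p / (1.0 - p))
    return 2.0 ** ((target_logit - intercept) / slope)

# Toy data (assumption): one task per duration, 1 = solved in a single attempt.
minutes = [2, 4, 8, 16, 32, 64, 128, 256, 512, 1024]
solved = [1, 1, 1, 1, 0, 1, 1, 0, 0, 0]

intercept, slope = fit_logistic([math.log2(m) for m in minutes], solved)
h50 = time_horizon(intercept, slope, 0.5)
h80 = time_horizon(intercept, slope, 0.8)
print(f"50% horizon: {h50:.0f} min, 80% horizon: {h80:.0f} min")
```

Because the fitted slope is negative (longer tasks are solved less often), the 80% horizon always comes out shorter than the 50% horizon under this model.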

Performance Breakdown Across Models

The evaluation reveals distinct testing approaches for each model. For GPT 5.3 Codex, assessments include Terminal Bench 2.0, SWE Bench Pro (Public), and Cybench. Meanwhile, Claude Opus 4.6 has been evaluated on Terminal Bench 2.0, ARC-AGI-2, GDPval-AA, and SWE-Bench-Verified (Bash only).

This divergence in testing suites may reflect the different capabilities and specializations of each model, and it means the two estimates rest on different evidence. Both models nonetheless show substantial progress in handling longer-duration tasks. The 50% time horizon, the task length at which a model succeeds half the time, has become particularly significant for understanding practical deployment capabilities.

The Significance of Multi-Hour Capabilities

Reaching 3-4 hour time horizons represents a qualitative leap in AI capabilities. Tasks requiring this level of sustained reasoning and execution typically involve complex software development, research synthesis, strategic planning, or multi-component problem-solving. Previously, AI systems struggled with tasks extending beyond 30-60 minutes of human-equivalent effort.

This advancement suggests AI systems are developing better working memory, more consistent reasoning chains, and improved error correction throughout extended problem-solving sessions. The ability to maintain coherence and direction across what would be hours of human work indicates progress toward more autonomous, reliable AI assistants.

Implications for Software Development and Beyond

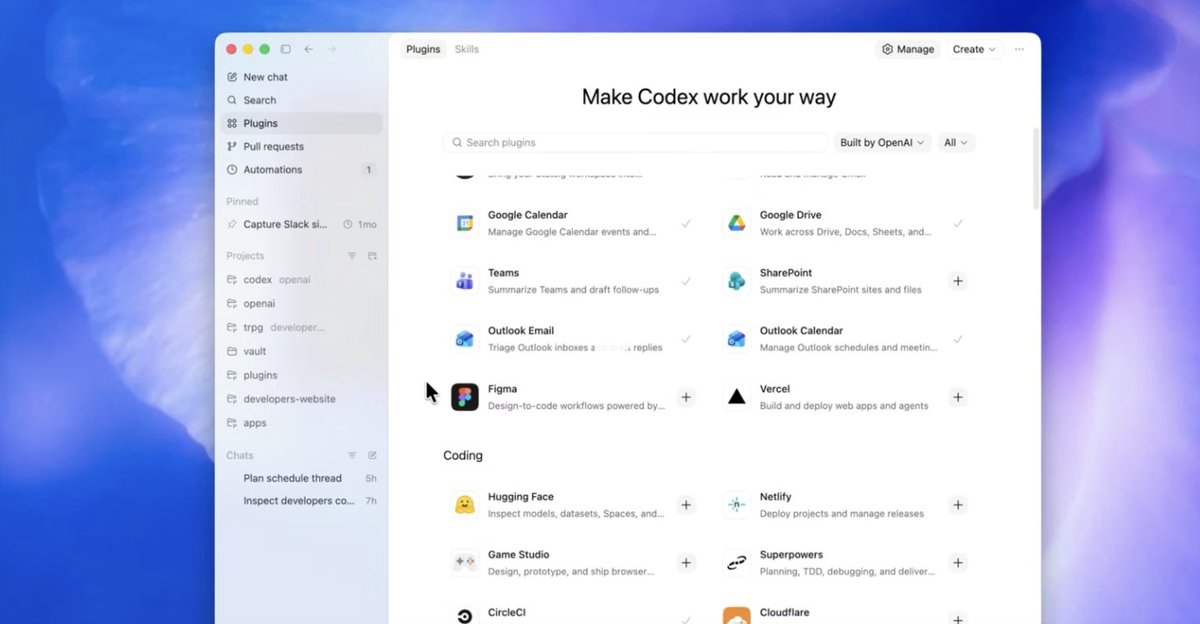

The specific inclusion of software engineering benchmarks (SWE Bench variants, Terminal Bench) highlights where these capabilities are most immediately applicable. AI systems approaching 3-4 hour time horizons could significantly accelerate development workflows, potentially handling complete feature implementations, complex debugging sessions, or architectural refactoring in single attempts.

Beyond software, these capabilities suggest AI could soon handle other extended tasks: comprehensive research literature reviews, detailed business strategy documents, complex data analysis pipelines, or multi-step creative projects. The transition from minutes to hours in task handling represents a fundamental shift in how AI can integrate into professional workflows.

Benchmarking Challenges and Future Directions

The METR approach, while innovative, faces challenges in standardization and interpretation. Different testing suites for different models complicate direct comparisons, and the "human expert time" metric inherently contains estimation uncertainties. Additionally, the distinction between 50% and 80% success rates highlights the reliability gap that remains even as capabilities expand.

Future developments will likely focus on improving consistency at longer time horizons, expanding the diversity of tasks included in evaluations, and developing better metrics for measuring the quality (not just completion) of extended outputs. As models push toward 8-hour and eventually day-long time horizons, evaluation methodologies will need to evolve accordingly.

The Competitive Landscape

The parallel advancement of both Claude and GPT models suggests this is a general trend in frontier AI development rather than a proprietary breakthrough. Both organizations appear to be prioritizing extended reasoning capabilities, though their different testing approaches may indicate varying emphasis areas or capability profiles.

This competition drives rapid progress but also raises questions about evaluation standardization. As noted in the original analysis, "One of the most attended to benchmarks for any new model these days is the METR estimated time horizon," indicating its growing importance in the AI development ecosystem.

Practical Applications and Limitations

For organizations considering AI integration, these developments suggest near-term possibilities for automating or augmenting complex professional work. However, the 50% success rate at 3-4 hour horizons means these systems still require human oversight and quality checking for critical applications.

The most immediate impact will likely be in domains where partial success still provides value (such as generating initial code drafts or research outlines) or where humans can efficiently verify and correct AI outputs. As success rates improve at these extended time horizons, more autonomous applications will become feasible.
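The value of cheap verification can be made concrete with a little arithmetic. The sketch below assumes that repeated attempts are statistically independent and that a reviewer can always recognize a correct result. Both are simplifying assumptions made here and are not claims from the source analysis. The function name `p_any_success` is hypothetical.

```python
def p_any_success(p_single: float, attempts: int) -> float:
    """Probability that at least one of several independent attempts succeeds."""
    if not 0.0 <= p_single <= 1.0:
        raise ValueError("p_single must be between 0 and 1")
    if attempts < 1:
        raise ValueError("attempts must be at least 1")
    return 1.0 - (1.0 - p_single) ** attempts

# Assumption: each attempt at a task near the 50% horizon succeeds with p = 0.5.
for k in (1, 2, 3, 5):
    print(k, round(p_any_success(0.5, k), 3))
```

Under these assumptions, three attempts at a task near the 50% horizon yield at least one success 87.5% of the time. In practice, failures on the same task are likely to be correlated, so real gains from retrying would be smaller.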

Source: Analysis based on "Estimating METR Time Horizons for Claude Opus 4.6 and GPT 5.3 Codex" from LessWrong.