Amazon launched an AI agent for SageMaker that automates fine-tuning of Llama, Qwen, DeepSeek, and Nova models. The Kiro agent, preinstalled in the development environment, replaces manual API and data-format wrangling with plain-language instructions.

Key facts

- The Kiro agent comes preinstalled in the SageMaker AI development environment.

- Supports Llama, Qwen, DeepSeek, and Nova model families.

- Nine prebuilt skills cover the workflow, from dataset checking to model deployment.

- Claude Code or other agents can substitute for Amazon's Kiro agent.

- All generated code is delivered as editable, reusable Jupyter notebooks.

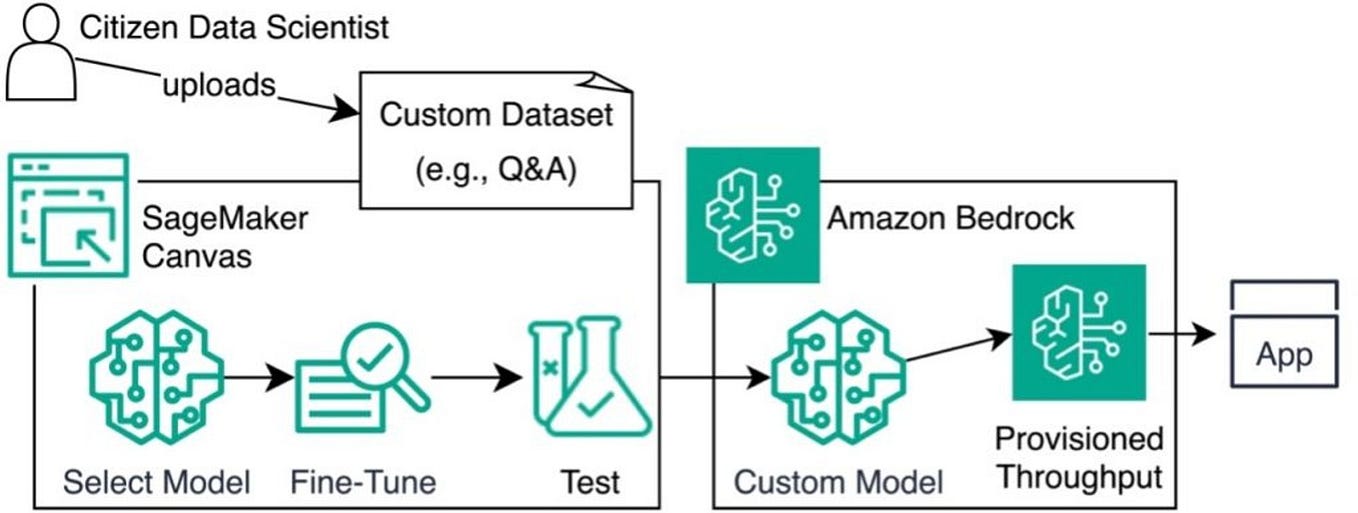

Amazon SageMaker AI now includes an AI agent designed to help developers customize language models. Instead of wrestling with different APIs and data formats, developers can now describe their use case in plain language. The agent then recommends the right training method, prepares the data, kicks off training, and delivers the finished code as Jupyter notebooks [According to The Decoder].

Amazon's Kiro AI agent comes preinstalled in the development environment, but developers can also use Claude Code or other agents. Nine prebuilt "skills" handle the workflow, from checking the dataset to deploying the finished model. The agent supports model families like Llama, Qwen, DeepSeek, and Amazon's own Nova. All generated code is editable and reusable.

Why this matters

The notable move here is that Amazon abstracts away the fragmentation of fine-tuning APIs across model families. Most cloud providers support fine-tuning, but they require developers to switch between vendor-specific SDKs and data formats. SageMaker's agentic approach, built on nine prebuilt skills and a natural-language interface, competes directly with Google Vertex AI and Microsoft Azure AI, which also offer managed fine-tuning but lack a unified agent layer. By supporting Claude Code as an alternative to Kiro, Amazon avoids locking developers into its own agent while embedding Anthropic's tooling deeper into AWS workflows, a move consistent with its $25 billion Anthropic investment announced in April 2026 [per the knowledge graph].

What's missing

Amazon did not disclose performance benchmarks, pricing for the agentic fine-tuning feature, or which underlying model powers the Kiro agent. The company also did not specify which training methods (e.g., LoRA, full fine-tuning, RLHF) the agent can recommend, leaving developers to discover the supported methods through trial and error.

What to watch

Watch whether Amazon releases performance benchmarks comparing agentic fine-tuning with manual workflows, and whether support expands to additional model families such as Mistral or Cohere. Also track adoption of Claude Code as a SageMaker agent, given Amazon's deep ties to Anthropic.