Anthropic CEO Dario Amodei has taken a public stance against certain military applications of AI during recent congressional testimony, setting up what observers are calling a "direct confrontation" with Department of Defense interests.

The Congressional Showdown

According to multiple sources and social media commentary, Amodei's testimony before Congress marked a significant escalation in the ongoing debate about AI safety and military use. While the exact wording of his testimony hasn't been fully disclosed in public reports, the reaction from defense circles suggests Amodei advocated for strict limitations on how advanced AI systems—particularly those developed by companies like Anthropic—should be integrated into military operations.

This confrontation comes at a critical juncture in AI development, as governments worldwide grapple with how to regulate increasingly powerful systems while maintaining technological competitiveness. Amodei, whose company has positioned itself as a leader in AI safety research, appears to be drawing a line in the sand regarding what constitutes ethical deployment of frontier AI models.

The Department of Defense's AI Ambitions

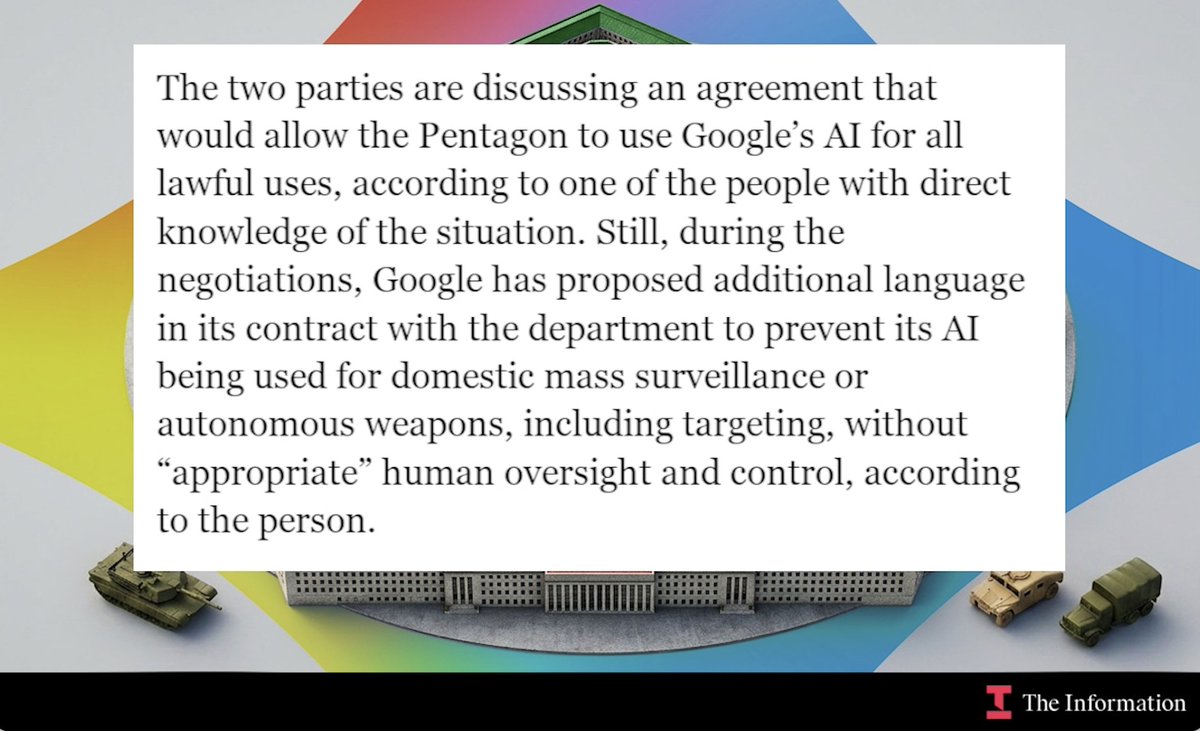

The Department of Defense has been increasingly vocal about its intention to integrate AI across military operations, from logistics and intelligence analysis to potential combat applications. The Pentagon's "Replicator" initiative and other programs signal a determined push to maintain technological superiority through AI adoption.

Defense officials have argued that responsible AI integration is essential for national security, particularly as geopolitical rivals like China aggressively pursue military AI capabilities. The department has established ethical AI principles and governance frameworks, but critics argue these don't go far enough to prevent potentially dangerous applications of increasingly autonomous systems.

Anthropic's Safety-First Philosophy

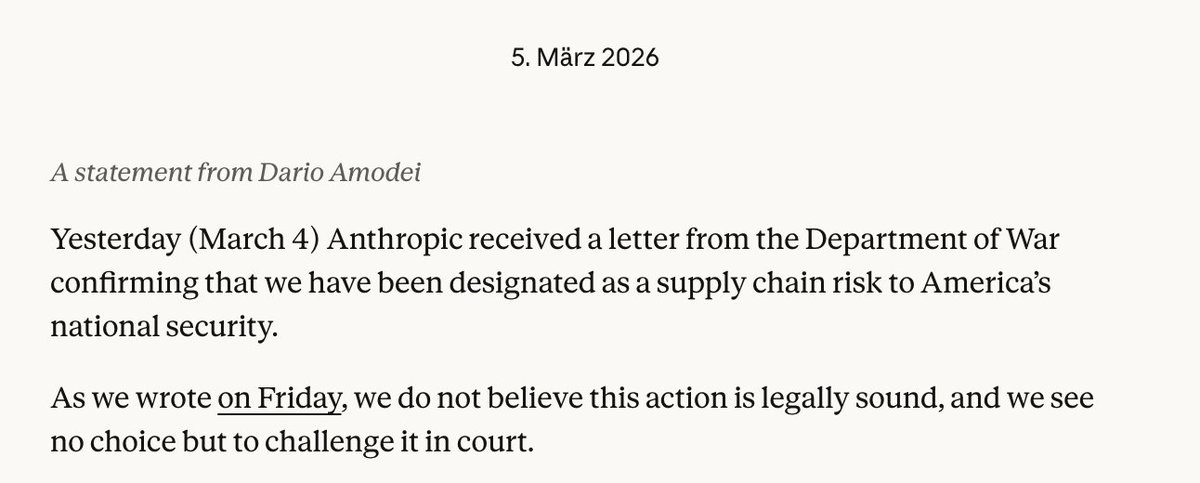

Anthropic's public stance aligns with its founding principles, which emphasize developing AI systems that are "helpful, honest, and harmless." The company has been at the forefront of AI safety research, developing Constitutional AI techniques and advocating for responsible scaling policies. Amodei's testimony appears to extend this philosophy into government policy, suggesting that some military applications might conflict with fundamental safety principles.

This position places Anthropic in a distinct category among major AI labs. While other companies have engaged with defense contracts or remained ambiguous about military applications, Anthropic is taking a more publicly principled stand—potentially at significant commercial and political cost.

The Broader Industry Context

The confrontation between Amodei and defense interests reflects a deeper schism within the AI industry about the appropriate relationship between cutting-edge AI development and national security applications. Some companies and researchers argue that engagement with defense agencies is essential for ensuring AI systems are developed with appropriate safeguards and real-world testing. Others believe that certain military applications are fundamentally incompatible with AI safety goals.

This debate has intensified as AI capabilities have advanced more rapidly than governance frameworks. The emergence of increasingly general and capable systems has raised questions about whether existing ethical guidelines and international laws are sufficient to manage potential risks.

Implications for AI Governance

Amodei's public stance could have significant implications for how AI governance evolves in the coming years. By taking a strong position before Congress, he has elevated what was previously a technical and ethical debate among researchers to a matter of public policy. This could influence:

- Legislative approaches to AI regulation: potentially encouraging more restrictive frameworks for certain applications

- Industry norms: setting precedents for how other AI companies engage with defense interests

- International standards: providing a model for how democratic societies might approach military AI differently from authoritarian regimes

The Path Forward

The confrontation between commercial AI developers and defense interests is unlikely to be resolved quickly. As AI capabilities continue to advance, the tension between innovation, safety, and security will only intensify. Amodei's testimony represents one of the most public manifestations of this conflict to date, but it's part of a much larger conversation that will shape the future of AI development and deployment.

What makes this moment particularly significant is that the sitting CEO of a major AI lab is taking a public stand that could limit business opportunities and invite political pushback. This suggests that for some AI leaders, safety concerns are beginning to outweigh commercial and political incentives.

Source: Twitter commentary and analysis of recent congressional testimony by @kimmonismus and other industry observers.