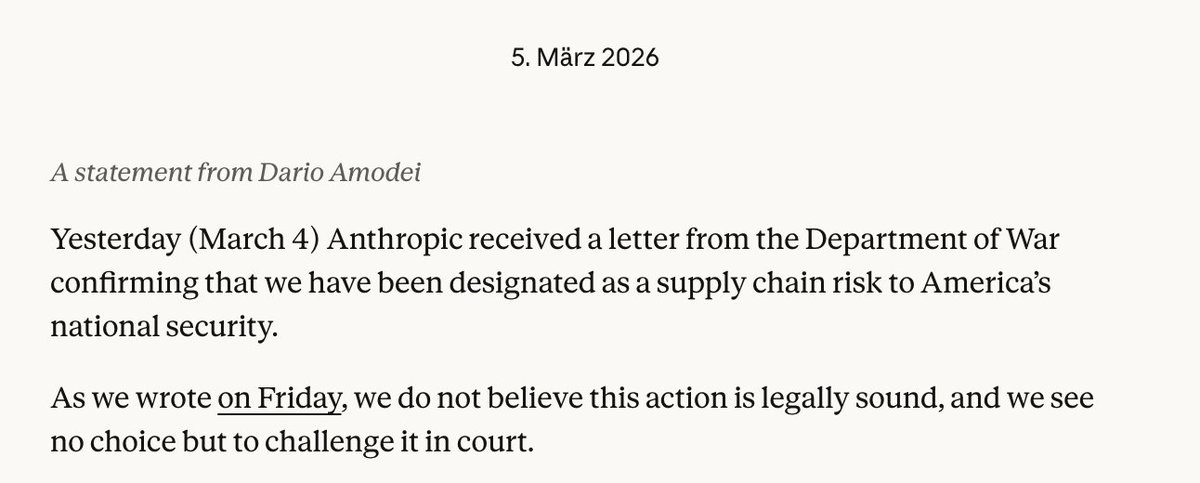

The U.S. Department of Defense and the artificial intelligence company Anthropic are engaged in what have been described as "last-ditch" negotiations to keep the safety-focused AI developer from being formally designated a "supply chain risk" and subsequently barred from government work, according to exclusive reporting by the Financial Times. The talks mark a critical juncture in the evolving relationship between cutting-edge AI developers and national security institutions.

The Stakes of the Negotiations

At the heart of these negotiations is Anthropic's potential classification under government supply chain risk protocols, which could effectively bar the company from any Department of Defense contracts or collaborations. Such a designation typically applies to companies with foreign ownership, control, or influence that could compromise national security. While Anthropic is an American company, concerns have reportedly emerged about its funding sources and the potential vulnerabilities in its AI systems.

Anthropic CEO Dario Amodei is personally involved in the discussions with top defense officials and is seeking clear parameters for how the military can responsibly use the company's AI models, particularly Claude, its flagship large language model. The negotiations aim to create safeguards that would permit government use while addressing national security concerns.

Anthropic's Unique Position in AI Development

Founded in 2021 by a group of former OpenAI employees including siblings Dario and Daniela Amodei, Anthropic has positioned itself as a safety-focused AI company built around its "Constitutional AI" approach. This methodology trains AI systems according to a set of written principles, or a "constitution," designed to make them more helpful, honest, and harmless. The company has received significant funding from technology investors including Google, Salesforce, and Amazon, raising questions about potential conflicts or vulnerabilities.

Anthropic's emphasis on AI safety and alignment has made it an attractive partner for government agencies concerned about deploying powerful AI systems. However, this same emphasis on safety and careful deployment may be contributing to the Pentagon's concerns about whether Anthropic's models can be sufficiently controlled or secured for sensitive military applications.

The Broader Context of AI and National Security

These negotiations occur against a backdrop of increasing government scrutiny of AI companies and their potential national security implications. The U.S. government has been actively developing frameworks for assessing AI risks, particularly through initiatives like the National Institute of Standards and Technology's AI Risk Management Framework and various Defense Department AI ethics principles.

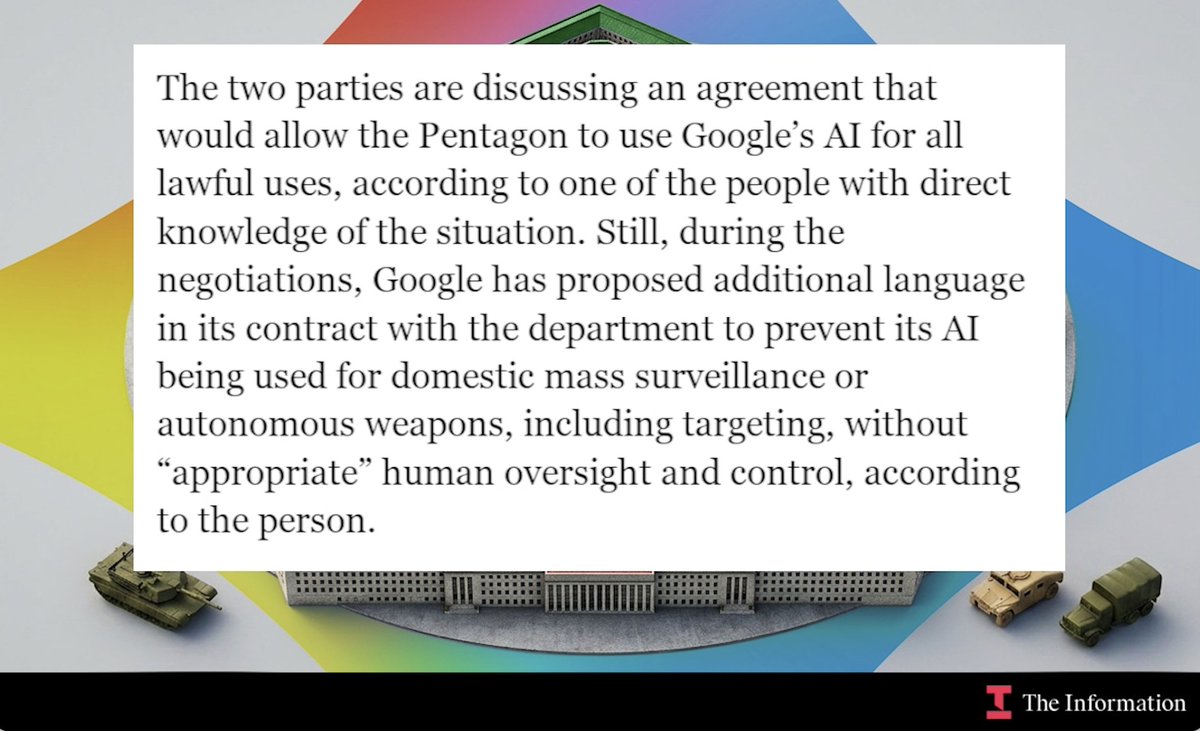

The Pentagon's interest in Anthropic's technology reflects the growing recognition within defense circles that large language models and other advanced AI systems could transform military operations, intelligence analysis, logistics planning, and cybersecurity. However, this potential comes with significant risks, including vulnerability to adversarial attacks, the potential for misuse, and uncertainty about the integrity of training data and model development processes.

Potential Compromise Scenarios

Sources familiar with the negotiations suggest several possible compromise scenarios that could emerge:

- Limited Use Agreements: The Pentagon might gain access to Anthropic's models for specific, non-sensitive applications while maintaining restrictions on more critical systems.

- Enhanced Security Protocols: Anthropic could implement additional security measures, auditing processes, or model modifications specifically for government use cases.

- Third-Party Validation: Independent security assessments by government-approved entities could provide assurance about Anthropic's systems.

- Technical Safeguards: Anthropic could add guardrails, monitoring systems, or usage restrictions specifically for military applications.

The outcome of these negotiations could set important precedents for how other AI companies engage with government agencies, particularly in the defense and intelligence sectors.

Implications for the AI Industry

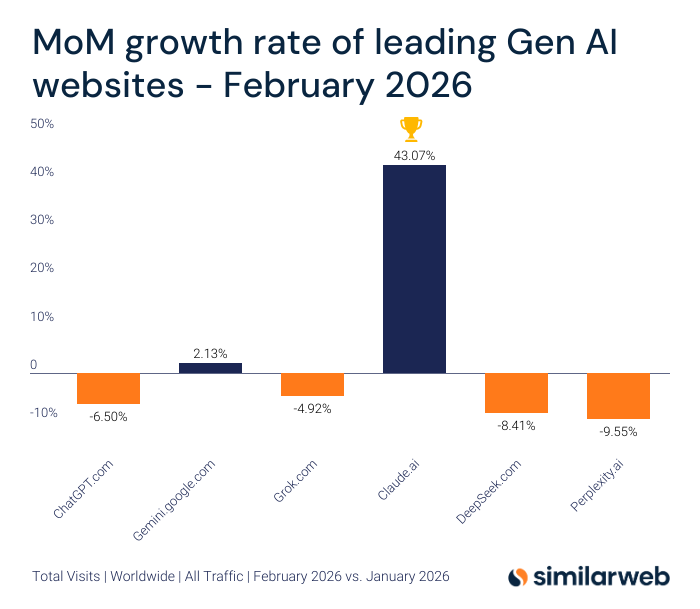

Should Anthropic receive the "supply chain risk" designation, it would represent a significant setback not only for the company but potentially for the broader AI industry's relationship with government. Other AI developers would likely face increased scrutiny of their funding sources, security practices, and potential foreign connections.

Conversely, a successful negotiation that establishes a framework for secure government use of Anthropic's technology could create a model for public-private partnerships in sensitive AI applications. This could accelerate government adoption of advanced AI while maintaining security standards.

The discussions also highlight the tension between rapid AI innovation and the deliberate pace of government security protocols. As AI capabilities advance at an unprecedented rate, government agencies are struggling to develop assessment and approval processes that don't unnecessarily hinder technological progress while still protecting national interests.

Looking Forward

The outcome of these negotiations will likely influence several key areas:

- Government AI Procurement: How defense and intelligence agencies evaluate and approve AI technologies for sensitive applications

- AI Safety Standards: Potential development of more formalized security requirements for AI systems used in national security contexts

- Industry Practices: Possible changes to how AI companies structure their organizations, funding, and security protocols to maintain government eligibility

- International Competition: The balance between leveraging innovative American AI companies and protecting against potential vulnerabilities in an increasingly competitive global AI landscape

As these high-stakes discussions continue, they represent a microcosm of the broader challenges facing society as powerful AI systems become increasingly integrated into critical infrastructure and national security frameworks. The decisions made in these negotiations could shape the trajectory of government AI adoption for years to come.

Source: Financial Times reporting on Pentagon-Anthropic negotiations