A new technical analysis from DAIR AI provides a detailed comparison between Anthropic's Claude Code and the open-source OpenClaw model, offering developers a clear view of the current landscape for AI-powered coding assistants.

Key Takeaways

- A technical deep dive compares Claude Code, Anthropic's proprietary coding tool, against the open-source OpenClaw.

- The analysis examines benchmarks, capabilities, and the trade-offs between proprietary and open-source AI for code.

What Happened

DAIR AI, a research collective focused on democratizing AI, has published a comparative analysis of two prominent code generation models: Anthropic's Claude Code and the open-source OpenClaw. The analysis, shared via social media, aims to dissect their architectures, performance, and practical use cases. This comes as the market for AI coding tools becomes increasingly crowded, with developers needing to choose between proprietary, high-performance APIs and customizable, open-source alternatives.

Context

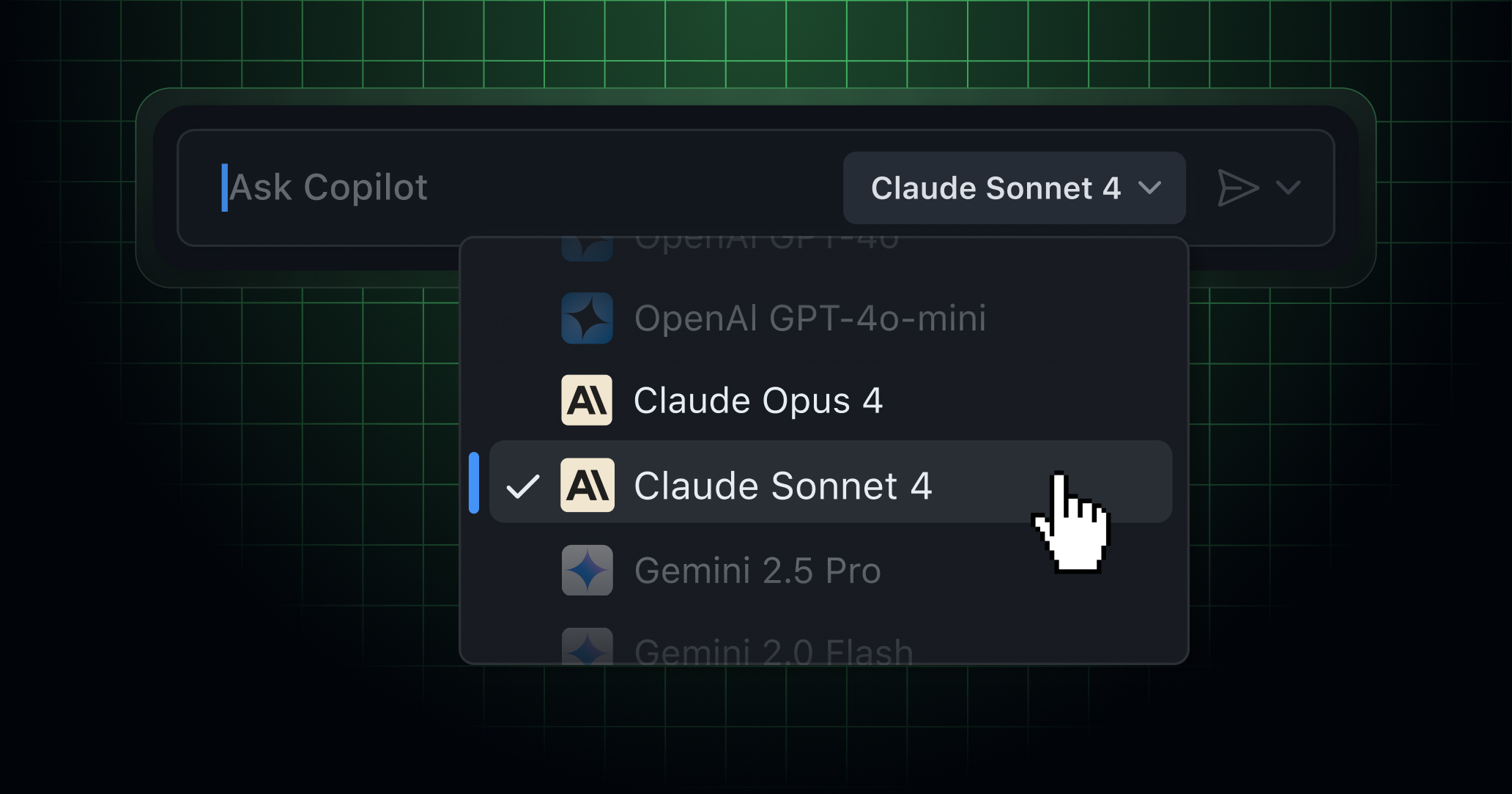

Claude Code is Anthropic's agentic coding tool for software engineering tasks. It runs on the company's Claude models rather than being a separately released model, and those models are known for strong performance on benchmarks like SWE-bench and HumanEval. It is accessed through Anthropic's paid plans or API. OpenClaw, in contrast, refers to open-source code models, often fine-tuned versions of a base model like Code Llama or DeepSeek-Coder, developed and released by the community. The core tension explored is between the polished, high-capability but closed offering from Anthropic and the transparent, modifiable but potentially less performant open-source option.

Key Comparison Points

The full analysis is in the linked article. Comparisons of this kind generally revolve around several axes:

- Benchmark Performance: How each model scores on standardized coding tests (e.g., HumanEval, MBPP, SWE-bench). Claude Code generally leads in published scores.

- Architecture & Training: Claude Code relies on Anthropic's proprietary models, whose architecture and training data are not public. OpenClaw models are usually based on publicly documented architectures (like Llama or Mistral) and trained on open datasets like The Stack.

- Cost & Accessibility: Claude Code operates on a pay-per-token API model. OpenClaw can be run locally or on private infrastructure, which can lower long-term cost at sufficient volume and keeps source code from leaving systems the user controls.

- Customization & Fine-tuning: OpenClaw can be fine-tuned on a company's private codebase. The model weights behind Claude Code cannot be modified by users, though its behavior can be guided via system prompts.

- Latency & Throughput: API-based models like Claude Code benefit from Anthropic's optimized infrastructure. OpenClaw's performance depends on the user's own hardware.

For developers, the choice hinges on priorities: maximum out-of-the-box accuracy and convenience (Claude Code) versus control, cost predictability, and data privacy (OpenClaw).
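Benchmark scores such as those reported on HumanEval are usually expressed as pass@k: the probability that at least one of k sampled solutions passes the unit tests. The sketch below shows the unbiased estimator commonly used for that metric, so readers can see what a "published score" actually measures. The function names and the sample counts in the usage note are illustrative assumptions, not figures from the DAIR AI analysis.

```python
from math import comb


def pass_at_k(n: int, c: int, k: int) -> float:
    """Estimate pass@k for one problem.

    n: number of solutions sampled for the problem
    c: number of those solutions that passed the tests
    k: budget of attempts being scored
    """
    if not 0 <= c <= n or not 1 <= k <= n:
        raise ValueError("require 0 <= c <= n and 1 <= k <= n")
    # If fewer than k samples failed, every draw of k contains a pass.
    if n - c < k:
        return 1.0
    # 1 minus the probability that all k drawn samples are failures.
    return 1.0 - comb(n - c, k) / comb(n, k)


def mean_pass_at_k(results: list[tuple[int, int]], k: int) -> float:
    """Average pass@k over (n, c) pairs, one pair per benchmark problem."""
    if not results:
        raise ValueError("results must not be empty")
    return sum(pass_at_k(n, c, k) for n, c in results) / len(results)
```

With hypothetical counts, `pass_at_k(10, 3, 1)` is about 0.3, while `pass_at_k(10, 3, 5)` is much higher, which is why scores are only comparable when they use the same k.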

gentic.news Analysis

This analysis from DAIR AI is a timely contribution to an ongoing industry debate that we've tracked closely. The release of Claude 3.5 Sonnet in June 2024, which included significant coding improvements, set a new bar for proprietary models. In response, the open-source community has been active, with projects like DeepSeek-Coder and fine-tunes of Meta's Code Llama pushing the envelope of what's possible without an API. This DAIR AI comparison serves as a practical guidepost in that landscape.

The trend is clear: capability is consolidating at the top with players like Anthropic, OpenAI (with its Codex lineage and GPT-4's coding prowess), and Google (Gemini Code Assist), while innovation in accessibility and specialization flourishes in the open-source realm. This dynamic mirrors the broader LLM market but is particularly acute in coding, where integration into developer workflows and toolchains is critical. For enterprise adoption, the data privacy argument for open-source models remains powerful, often outweighing a few percentage points on a benchmark. However, for individual developers and startups, the productivity boost from a top-tier API can be decisive.

Looking forward, the competition will likely push both sides forward. Anthropic and others will continue to refine their coding offerings, while the open-source community will chip away at the performance gap through better datasets, training techniques, and model merging. The ultimate winner is the developer, who gets an ever-improving set of tools.

Frequently Asked Questions

What is Claude Code?

Claude Code is Anthropic's AI coding tool for software engineering tasks, powered by the company's Claude models. It is designed to understand, generate, debug, and explain code across numerous programming languages and is accessed through Anthropic's paid plans or API.

What is OpenClaw?

OpenClaw is not a single model but a term often used to refer to open-source code generation AI models. These are typically community-developed projects, frequently based on models like Code Llama or DeepSeek-Coder, that have been further fine-tuned and released publicly for anyone to use, modify, and run on their own hardware.

Which is better, Claude Code or an OpenClaw model?

There is no universal "better." Claude Code generally offers higher benchmark scores and seamless API integration. An OpenClaw model offers full control, data privacy, and no per-token API fees, though it shifts the cost to hardware and operations. The best choice depends on a developer's specific needs regarding performance, budget, data sensitivity, and desire for customization.
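The budget question can be made concrete with a rough break-even calculation. The sketch below is a simplification: it assumes a single blended price per million tokens and a fixed monthly cost for self-hosting, and every number in the usage note is a hypothetical placeholder rather than real pricing from any vendor.

```python
def api_monthly_cost(tokens_per_month: float, price_per_million: float) -> float:
    """Pay-per-token spend for a month at a blended price per million tokens."""
    if tokens_per_month < 0 or price_per_million < 0:
        raise ValueError("inputs must be non-negative")
    return tokens_per_month / 1_000_000 * price_per_million


def break_even_tokens(fixed_monthly_cost: float, price_per_million: float) -> float:
    """Monthly token volume at which API spend equals the self-hosting cost."""
    if fixed_monthly_cost < 0:
        raise ValueError("fixed_monthly_cost must be non-negative")
    if price_per_million <= 0:
        raise ValueError("price_per_million must be positive")
    return fixed_monthly_cost / price_per_million * 1_000_000


def cheaper_option(tokens_per_month: float, fixed_monthly_cost: float,
                   price_per_million: float) -> str:
    """Return 'api' or 'self-hosted', whichever costs less at this volume."""
    api = api_monthly_cost(tokens_per_month, price_per_million)
    return "api" if api <= fixed_monthly_cost else "self-hosted"
```

For example, with a hypothetical 1,500 per month in hardware and operations and a hypothetical price of 10 per million tokens, `break_even_tokens(1500.0, 10.0)` gives 150 million tokens per month. Below that volume the API is cheaper under these assumptions; above it, self-hosting is. Engineering time and quality differences are outside this model.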

Can I fine-tune Claude Code on my own codebase?

No. Claude Code runs on proprietary, closed models that users cannot fine-tune. You can guide it using detailed system prompts and context, but you cannot update the model's weights. OpenClaw models, by contrast, are released with open weights, so they can be fine-tuned on private datasets.
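Guiding a closed model with a system prompt might look like the sketch below, which assembles project conventions and reference files into a prompt and wraps it in a request body. The helper names, the conventions, and the model identifier are all hypothetical. The request shape follows the general layout of Anthropic's Messages API (a top-level `system` string plus a `messages` list), and nothing here sends a network request.

```python
def build_system_prompt(conventions: list[str], context_files: dict[str, str]) -> str:
    """Combine coding conventions and reference files into one system prompt."""
    lines = [
        "You are assisting with an existing codebase.",
        "Follow these project conventions:",
    ]
    lines += [f"- {rule}" for rule in conventions]
    for path, source in context_files.items():
        # Each reference file is included verbatim under its path.
        lines.append(f"\nReference file: {path}\n{source}")
    return "\n".join(lines)


def build_request(system_prompt: str, user_message: str,
                  model: str = "MODEL_NAME_PLACEHOLDER",
                  max_tokens: int = 1024) -> dict:
    """Build a request body; the model name is a placeholder, not a real ID."""
    return {
        "model": model,
        "max_tokens": max_tokens,
        "system": system_prompt,
        "messages": [{"role": "user", "content": user_message}],
    }


prompt = build_system_prompt(
    ["Use type hints", "Prefer pathlib over os.path"],
    {"utils/paths.py": "from pathlib import Path\n"},
)
request = build_request(prompt, "Add a helper that lists all Python files.")
```

This steers output style and supplies context on each request, but it does not change what the model has learned, which is the practical difference from fine-tuning.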