A new desktop application called Modly has been released, enabling users to generate 3D models from 2D images with all processing performed locally on their own hardware. The tool represents a shift towards accessible, offline-capable 3D content creation, removing the need for cloud API credits or internet connectivity.

What Happened

According to an announcement shared on social media, an independent developer has built and released Modly. The core functionality is straightforward: users provide one or more 2D images, and the application outputs a corresponding 3D model. The defining technical claim is that the entire generative AI pipeline runs 100% locally on the user's desktop computer. This means no data is sent to external servers, addressing privacy, cost, and latency concerns associated with cloud-based 3D generation services.

Context & Technical Implications

3D asset generation from 2D inputs is an active area of research and commercial development, with cloud services from companies like Luma AI, Masterpiece Studio, and Kaedim prominent in the market. Models in this space, such as TripoSR and other Large Reconstruction Models (LRMs), can require significant GPU memory and compute.

Running such models locally has been a challenge for consumer hardware. Modly's release suggests the developer has successfully optimized or implemented a lighter-weight 3D reconstruction model that can function on consumer-grade GPUs. This could involve techniques like model quantization, distillation, or the use of efficient architectures like One-2-3-45++ or SSDNeRF, which are designed for faster inference.
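To make the quantization idea concrete, here is a minimal sketch of symmetric int8 quantization on a handful of made-up weights. It is not taken from Modly, whose optimization techniques are undisclosed; the function names and values are hypothetical and only illustrate the general technique of storing weights as 8-bit integers plus one shared scale.

```python
# Illustrative only: symmetric per-tensor int8 quantization.
# Nothing here comes from Modly; the weights below are made-up values.

def quantize_int8(weights):
    """Map floats to integers in [-127, 127] using one shared scale."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    quantized = [max(-127, min(127, round(w / scale))) for w in weights]
    return quantized, scale

def dequantize_int8(quantized, scale):
    """Recover approximate floats from the stored integers."""
    return [v * scale for v in quantized]

weights = [0.82, -1.27, 0.05, 0.0, 0.64]  # hypothetical fp32 weights
q, scale = quantize_int8(weights)
restored = dequantize_int8(q, scale)

# The rounding error per weight is bounded by half the scale.
max_error = max(abs(a - b) for a, b in zip(weights, restored))
print("quantized:", q)
print("max reconstruction error:", max_error)
```

Each weight drops from 4 bytes (fp32) to 1 byte (int8), a 4x reduction in weight storage, at the cost of a small rounding error. Production quantization schemes are usually finer-grained (per-channel or per-block scales), but the trade-off is the same.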

The move to local-first AI tools is a growing trend, mirroring developments in image generation (Stable Diffusion) and language models (Llama-family models run through llama.cpp). It gives creators full control over their data and workflow, and can significantly reduce costs for high-volume users.

What We Don't Know Yet

The initial announcement lacks several critical technical details that would be necessary for a full assessment:

- Underlying Model: The specific architecture or base model (e.g., a customized TripoSR, LRM, or novel approach) is not disclosed.

- System Requirements: Minimum and recommended GPU (VRAM), CPU, and RAM specifications are not provided.

- Output Quality & Formats: The fidelity of the generated 3D models (polygon count, texture quality) and supported export formats (.obj, .glb, .fbx, etc.) are unknown.

- Input Requirements: Whether the tool needs a single image or multiple views, and whether it supports text prompts or image conditioning, is not stated.

- Performance Metrics: Generation speed (time per model) on various hardware tiers has not been benchmarked.

- License & Pricing: The business model (one-time purchase, subscription, or free) and license for generated assets are not stated.

gentic.news Analysis

This development fits squarely into two converging trends we've been tracking: the democratization of 3D content creation and the strong market pull towards local, private AI inference. For years, the high computational cost of 3D reconstruction confined it to research labs and cloud APIs. The release of open-weight models like TripoSR by Stability AI and Tripo AI in March 2024 began to change that, allowing developers to experiment locally. Modly appears to be one of the first polished, end-user desktop applications to productize this capability, following the template set by tools like ComfyUI and Automatic1111 for Stable Diffusion.

From a competitive standpoint, this directly challenges the SaaS-centric business models of current 3D AI leaders. If Modly's local performance is competent, it could capture a segment of users—indie game developers, hobbyists, privacy-conscious studios—who are unwilling or unable to use cloud services. However, its ultimate impact hinges on the unanswered technical questions above, particularly output quality. Cloud services like Luma AI benefit from constantly updated, state-of-the-art models running on A100/H100 clusters, which may be difficult for a local app to match consistently.

The success of Modly will depend on its execution within the broader ecosystem. Can it integrate with popular 3D suites like Blender or Unreal Engine? Does it support ControlNet-style conditioning or LoRAs for fine-tuned generation? The answers will determine whether it remains a niche tool or becomes a staple in the 3D artist's toolkit. Its emergence is a clear signal that the 3D generative AI stack is maturing rapidly and moving from cloud clusters to consumer hardware at the edge.

Frequently Asked Questions

What is Modly?

Modly is a desktop application for Windows, macOS, or Linux that uses AI to generate 3D models from 2D images. Its key differentiator is that all processing is done on your local computer, with no data sent to the cloud.

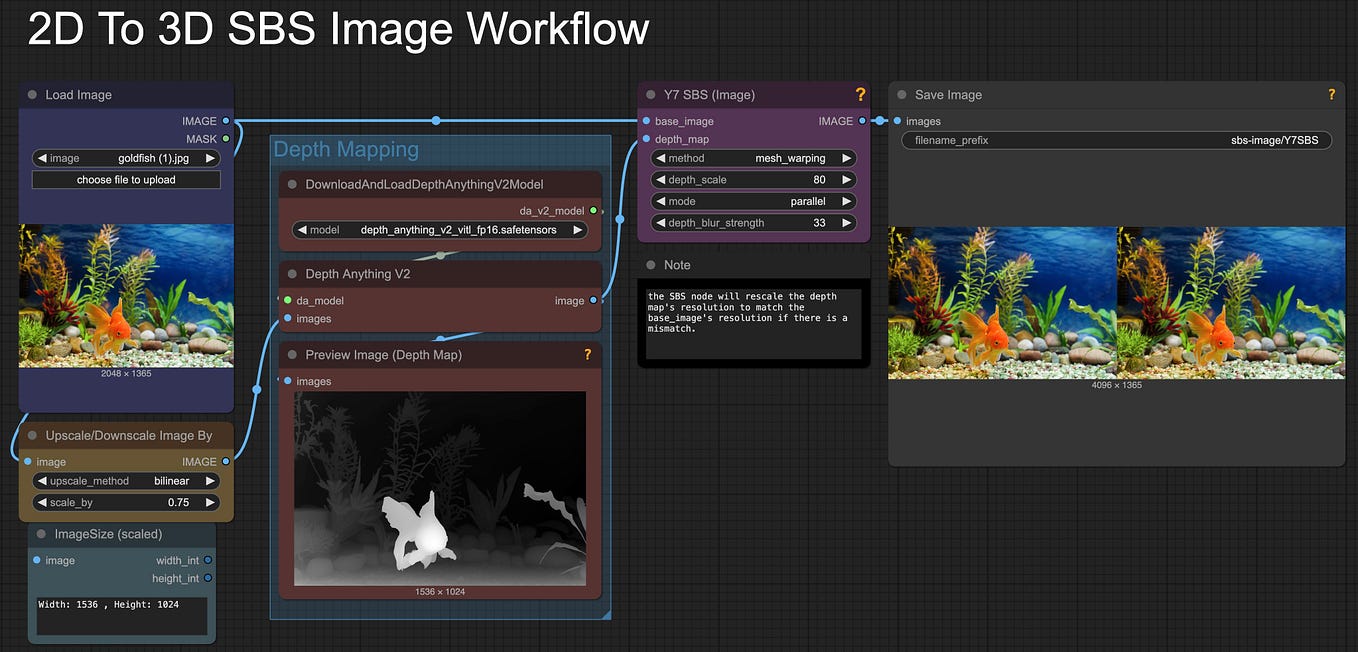

How does local 3D generation work?

Local 3D generation uses a pre-trained neural network (likely based on research models like TripoSR or LRM) that is installed on your computer. When you provide an image, your computer's GPU runs this model to predict a 3D geometry and texture that matches the 2D input, entirely offline.
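The data flow described above (image in, mesh out, file exported) can be sketched as follows. This is only an illustration: `reconstruct_stub` is a hypothetical placeholder that ignores its input and returns a fixed tetrahedron where a real neural network would predict geometry, and nothing here reflects Modly's actual code or export options. The only real-world detail is the Wavefront .obj text layout, which lists `v x y z` vertex lines followed by `f` face lines using 1-based vertex indices.

```python
from dataclasses import dataclass

@dataclass
class Mesh:
    vertices: list  # (x, y, z) tuples
    faces: list     # triples of 0-based vertex indices

def reconstruct_stub(image_pixels):
    """Hypothetical stand-in for the neural network.

    A real model would predict geometry from the pixels; this stub
    ignores its input and always returns the same tetrahedron.
    """
    vertices = [(0.0, 0.0, 0.0), (1.0, 0.0, 0.0), (0.0, 1.0, 0.0), (0.0, 0.0, 1.0)]
    faces = [(0, 2, 1), (0, 1, 3), (0, 3, 2), (1, 2, 3)]
    return Mesh(vertices, faces)

def mesh_to_obj(mesh):
    """Serialize a mesh as Wavefront .obj text (face indices are 1-based)."""
    lines = [f"v {x} {y} {z}" for x, y, z in mesh.vertices]
    lines += [f"f {a + 1} {b + 1} {c + 1}" for a, b, c in mesh.faces]
    return "\n".join(lines) + "\n"

image_pixels = [[0] * 4 for _ in range(4)]  # dummy 4x4 grayscale image
obj_text = mesh_to_obj(reconstruct_stub(image_pixels))
print(obj_text)
```

Everything in this sketch runs in a single local process with no network calls, which is the property the article attributes to Modly; in a real tool the stub would be replaced by GPU inference and the output would typically include textures as well.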

What are the advantages of running 3D AI locally?

Running locally offers major benefits in data privacy (your images never leave your machine), cost predictability (no per-generation API fees), offline usability, and potentially lower latency after the initial model load. It gives users full control over their workflow.

What hardware do I need to run an app like Modly?

Specific requirements for Modly are not yet published, but based on similar local AI tasks, you would likely need a modern NVIDIA or AMD GPU with at least 8GB of VRAM (with 12GB+ recommended for higher-resolution outputs), a capable CPU, and sufficient system RAM. Generation speed, and the maximum output resolution that fits in memory, will generally improve with more powerful hardware.
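As a rough way to reason about VRAM figures like these, the memory needed just to hold a model's weights is the parameter count multiplied by the bytes per parameter. The sketch below works through that arithmetic for a hypothetical 1-billion-parameter model; the parameter count is an assumption chosen for illustration, since Modly's model size is not disclosed.

```python
# Back-of-the-envelope estimate of weight memory at different precisions.
# The parameter count is a hypothetical example, not Modly's model size.

BYTES_PER_PARAM = {"fp32": 4, "fp16": 2, "int8": 1}

def weight_memory_gib(num_params, precision):
    """Memory for model weights alone, in GiB (1 GiB = 1024**3 bytes)."""
    return num_params * BYTES_PER_PARAM[precision] / 1024**3

hypothetical_params = 1_000_000_000  # assumption for illustration only

for precision in ("fp32", "fp16", "int8"):
    gib = weight_memory_gib(hypothetical_params, precision)
    print(f"{precision}: {gib:.2f} GiB")
```

This covers weights only. Activations, intermediate 3D representations, and framework overhead add to the total, so actual VRAM use during generation will be higher than the figure this estimate gives.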