The Tool — A Decision Engine for Claude Code

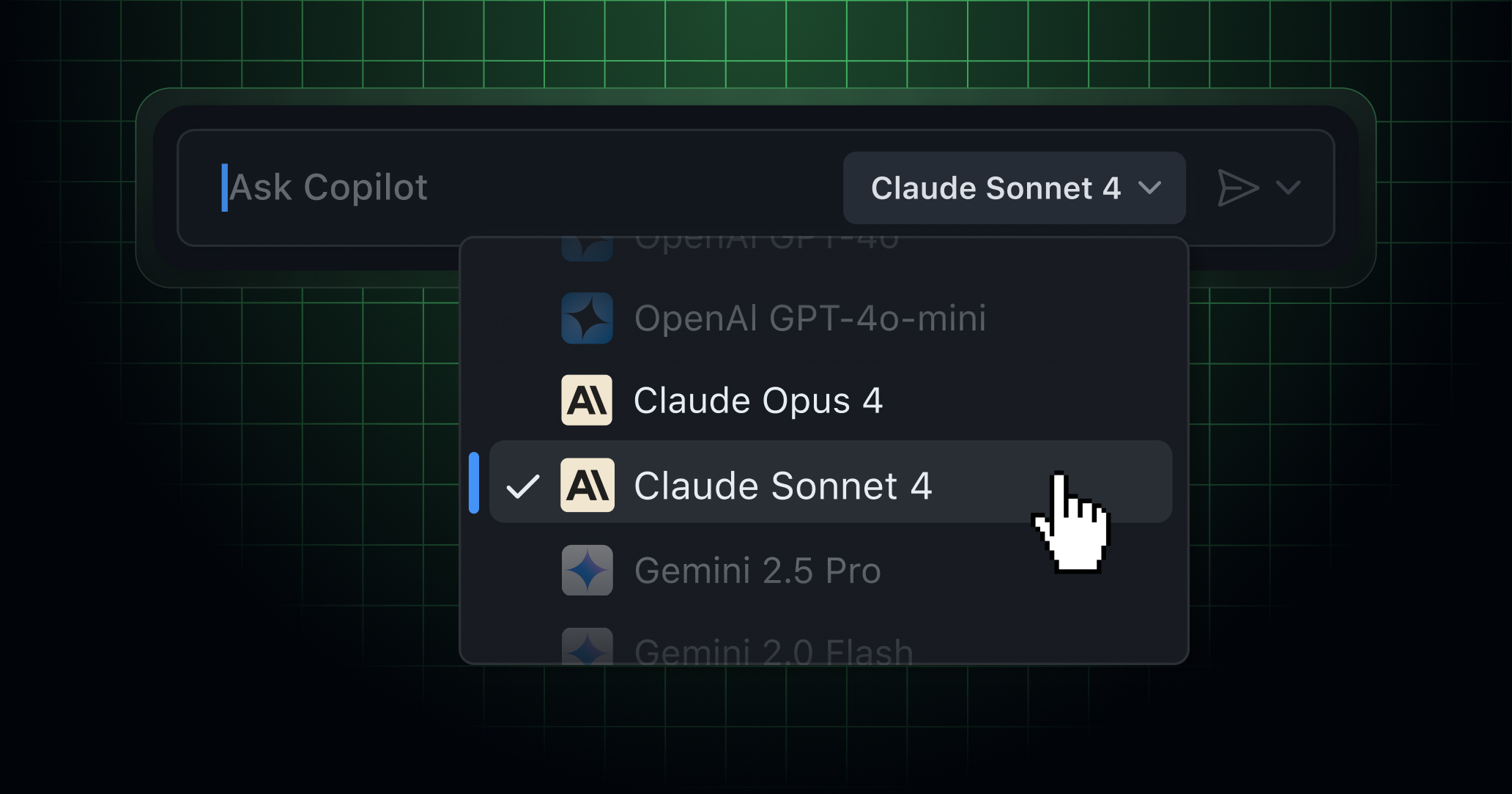

Developer Ben Dansby created Claude Model Chooser, a web interface that mirrors the exact model selection logic Claude Code uses internally. It is more than another comparison chart: it is a functional replica of the decision engine that runs when you type `claude code` with different flags and contexts.

The interface presents the same three dimensions Claude Code evaluates:

- Model (Sonnet 4.6, Opus 4.6, etc.)

- Effort (Fast, Medium, High)

- Fast Mode toggle (skips extended thinking)

For each combination, it shows estimated token cost, intelligence level, speed, and best use cases—exactly what Claude Code considers when you don't specify a model explicitly.

Why This Matters — Beyond Guesswork

Many developers default to `claude code --model opus` for everything, wasting tokens on simple tasks. Others switch between models on hunches. This tool reveals the actual algorithm Claude Code uses, which follows these rules:

- Fast Mode + Sonnet: For routine file operations, simple refactors, and git commands where extended thinking adds no value

- Medium Effort + Opus: For complex refactoring, debugging sessions, and architectural decisions

- High Effort + Opus: For multi-file system redesigns, algorithm optimization, and security audits
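The three rules above amount to a lookup from task type to a model and a setting. The following is a minimal sketch of that idea, not the Model Chooser's or Claude Code's actual code: the category keys, the `Recommendation` type, and the `recommend` function are all hypothetical names chosen for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Recommendation:
    model: str    # "sonnet" or "opus", as named in the rules above
    setting: str  # "fast mode", "medium effort", or "high effort"


# Hypothetical task categories, grouped the way the three rules group them.
RULES = {
    # Fast Mode + Sonnet
    "file_operation": Recommendation("sonnet", "fast mode"),
    "simple_refactor": Recommendation("sonnet", "fast mode"),
    "git_command": Recommendation("sonnet", "fast mode"),
    # Medium Effort + Opus
    "complex_refactor": Recommendation("opus", "medium effort"),
    "debugging": Recommendation("opus", "medium effort"),
    "architecture_decision": Recommendation("opus", "medium effort"),
    # High Effort + Opus
    "system_redesign": Recommendation("opus", "high effort"),
    "algorithm_optimization": Recommendation("opus", "high effort"),
    "security_audit": Recommendation("opus", "high effort"),
}


def recommend(task_category: str) -> Recommendation:
    """Look up the rule for a task category; fail loudly on unknown input."""
    try:
        return RULES[task_category]
    except KeyError:
        raise ValueError(f"unknown task category: {task_category!r}") from None


print(recommend("git_command"))
print(recommend("security_audit"))
```

A flat table like this makes the rules easy to audit at a glance, which is the same benefit the web tool offers over guessing.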

The tool shows that `--fast` mode isn't just about speed; it's about token economics. Skipping "extended thinking" can cut token usage by 30-50% for tasks that don't require deep reasoning.
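To make the 30-50% figure concrete, here is a small worked calculation. It is only arithmetic on the range quoted above. The 20,000-token baseline is an invented example, and the function name is hypothetical.

```python
def tokens_after_skipping_thinking(baseline_tokens, min_cut=0.30, max_cut=0.50):
    """Return (lowest, highest) estimated token usage after the quoted cut.

    min_cut and max_cut default to the 30-50% range cited in the article.
    """
    if baseline_tokens < 0:
        raise ValueError("baseline_tokens must be non-negative")
    if not 0 <= min_cut <= max_cut <= 1:
        raise ValueError("cuts must satisfy 0 <= min_cut <= max_cut <= 1")
    lowest = round(baseline_tokens * (1 - max_cut))   # best case: largest cut
    highest = round(baseline_tokens * (1 - min_cut))  # worst case: smallest cut
    return lowest, highest


# Assumed baseline: a task that uses 20,000 tokens with extended thinking on.
low, high = tokens_after_skipping_thinking(20_000)
print(f"{low} to {high} tokens")  # prints "10000 to 14000 tokens"
```

Under that assumption, a routine task would cost between 10,000 and 14,000 tokens instead of 20,000. Actual savings depend on the task.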

How To Use It — Before You Run Commands

Instead of guessing, use this workflow:

- Before running `claude code`, open the Model Chooser

- Describe your task in the "What kind of task?" field

- Set your priorities (Quality, Budget, Speed, Context Size)

- Get the recommendation and use it in your command

For example:

- Task: "Refactor this React component to use hooks"

- Priorities: Quality (high), Budget (medium), Speed (medium)

- Recommendation: `claude code --model opus --effort medium`

Or:

- Task: "Rename variables across these 5 files"

- Priorities: Speed (high), Budget (high), Quality (low)

- Recommendation: `claude code --model sonnet --fast`
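The two recommendations above differ only in their flags, so turning a recommendation into a command line can be sketched as a small helper. This is an illustrative sketch: `build_command` is a hypothetical function, and the command name and flags are reproduced as this article writes them rather than verified against the CLI. Check `claude --help` for what your installed version accepts.

```python
import shlex
from typing import Optional


def build_command(model: str, effort: Optional[str] = None, fast: bool = False) -> str:
    """Assemble a command string in the form used by the examples above.

    The command name and flag spellings are copied from the article's
    examples; they are assumptions, not a verified CLI reference.
    """
    args = ["claude", "code", "--model", model]
    if effort is not None:
        args += ["--effort", effort]
    if fast:
        args.append("--fast")
    # shlex.join quotes any argument that would need it in a POSIX shell.
    return shlex.join(args)


print(build_command("opus", effort="medium"))
print(build_command("sonnet", fast=True))
```

Keeping the command assembly in one place means a changed flag name only needs updating once.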

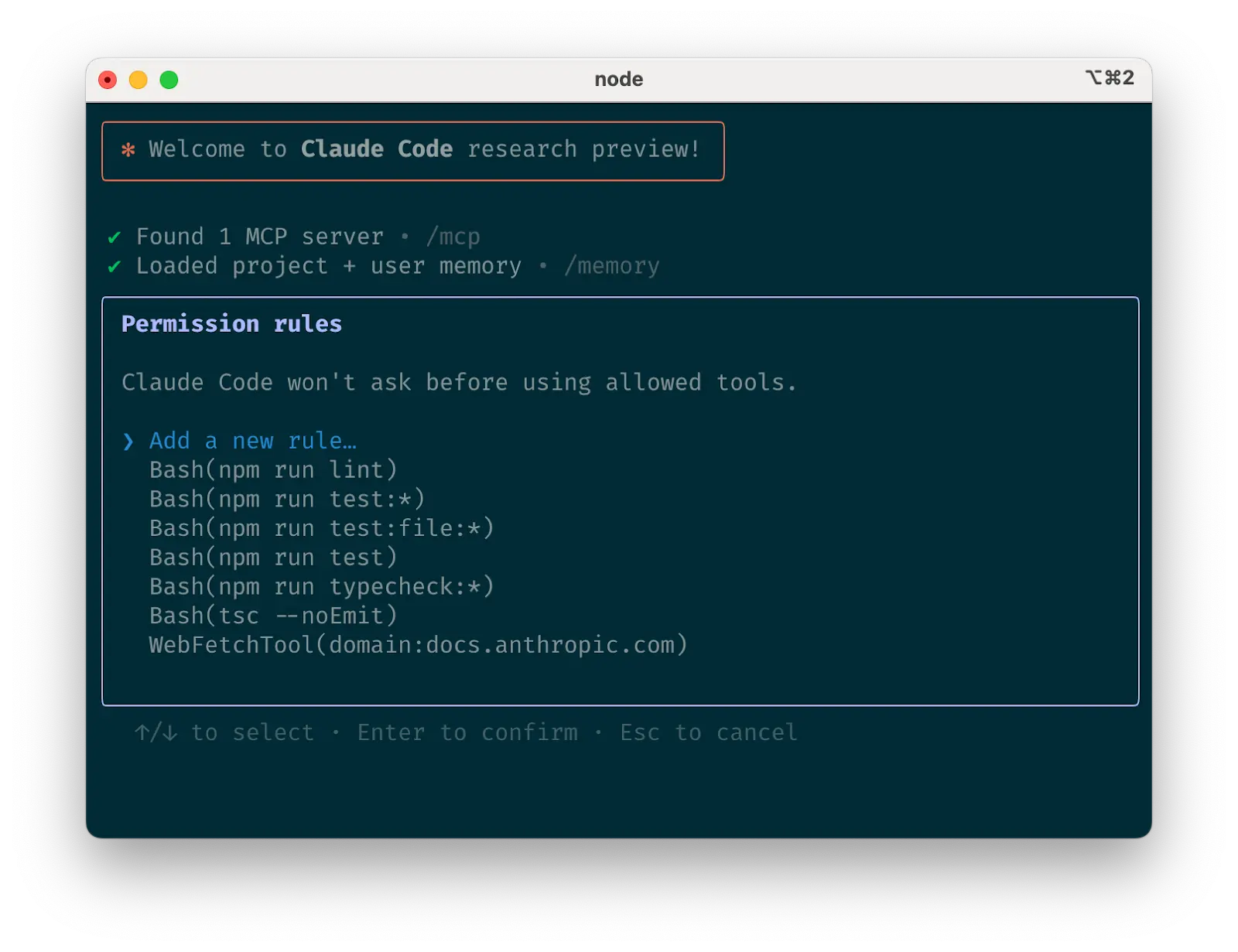

The Hidden Benefit — Understanding Context Triggers

The tool reveals when Claude Code automatically switches models based on your project context. Large codebases with complex `CLAUDE.md` files often trigger Opus selection, even for seemingly simple tasks. Now you can see why, and decide whether to override it with `--model sonnet --fast` for faster iteration.

Try It Now — Today's Workflow Change

Bookmark the Claude Model Chooser and use it for your next three Claude Code sessions. Notice the patterns:

- When does it recommend Sonnet vs. Opus?

- When does Fast Mode make sense?

- How does your `CLAUDE.md` file affect recommendations?

Then update your mental model. The biggest win isn't using the web tool forever—it's internalizing the decision logic so you can run optimized commands without thinking.

gentic.news Analysis

This tool arrives as Claude Code's user share has nearly tripled to 6% in the past month, indicating rapid adoption among developers who need more than just inline completions. The timing aligns with Anthropic's aggressive model release cadence—Opus 4.6 will likely be retired within a quarter based on recent patterns, making model selection even more critical.

The Model Chooser indirectly addresses a pain point we covered in "Stop Using Claude Code for Small Edits"—developers defaulting to overpowered models for simple tasks. By making the selection algorithm transparent, it helps optimize token usage at a time when Claude Code is being used for everything from Linux kernel audits to hardware debugging via MCP servers.

Interestingly, this follows Anthropic's broader push toward adaptive thinking and compute-constrained efficiency. The Model Chooser essentially externalizes the cost-benefit analysis Claude Code performs internally, giving developers the same visibility into model selection that Anthropic's engineers have.

As Claude Code continues competing with Cursor and Copilot, tools like this that optimize workflow efficiency, not just raw capability, will differentiate it. A natural next step would be integrating this logic directly into the CLI, for example through a proposed `--recommend` flag (which does not exist today) that suggests optimal parameters before execution.