A new report reveals that the U.S. National Security Agency (NSA) is actively using Anthropic's Claude Mythos Preview, one of the most powerful AI models currently available. This adoption comes despite the NSA's own internal classification of Anthropic as a "supply chain risk." The situation underscores the complex calculus government agencies face: balancing stringent security protocols against the operational imperative to leverage the most advanced technological tools.

Key Takeaways

- The National Security Agency is using Anthropic's Claude Mythos Preview for its capabilities, despite having labeled Anthropic itself as a potential supply chain risk.

- This highlights the tension between security concerns and the operational need for cutting-edge AI.

What Happened

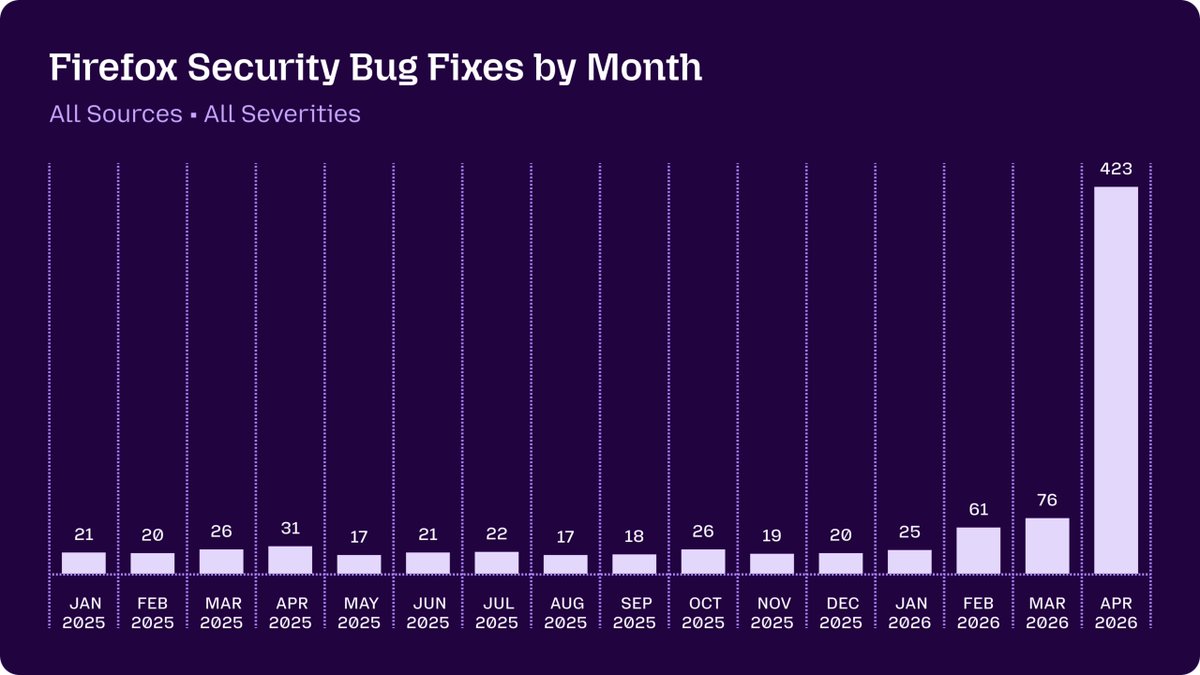

According to a report by The Intercept, the NSA has integrated Anthropic's Claude Mythos Preview into its workflows. The model is being used for tasks that require advanced reasoning and analysis, capitalizing on its significant performance leap over previous models. This move is notable because, as part of its supply chain risk management, the NSA had previously flagged Anthropic as a potential security concern. The agency's decision to proceed with deployment suggests that Mythos's technical capabilities were deemed sufficiently critical to outweigh the identified risks.

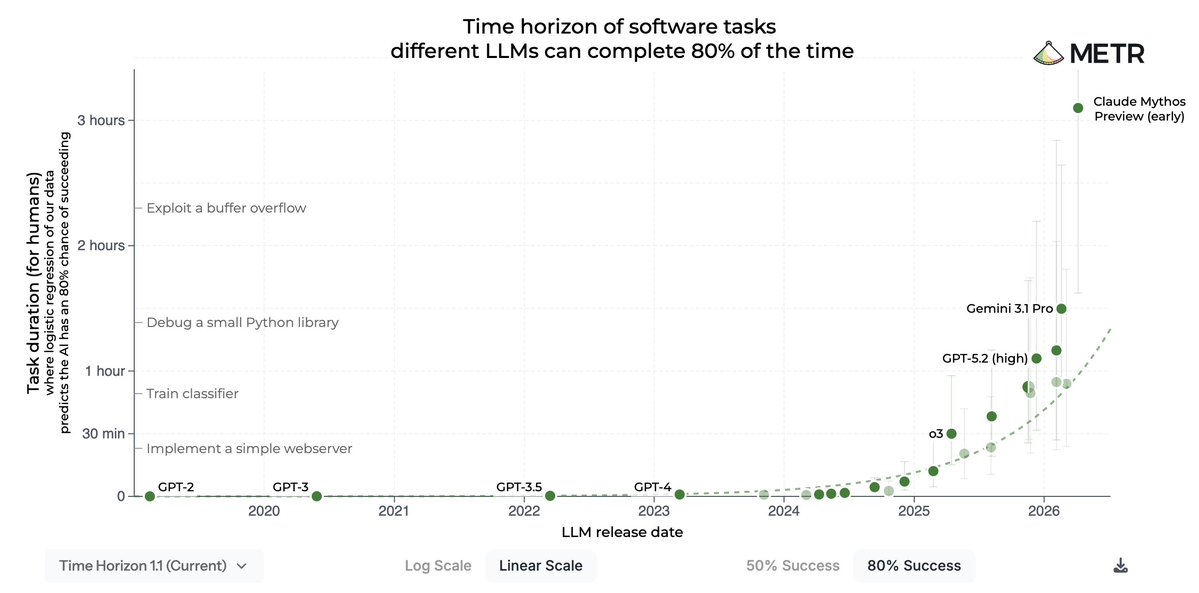

Context: The Rise of Claude Mythos

Claude Mythos is Anthropic's flagship frontier model, positioned as a direct competitor to OpenAI's o1 series and Google's Gemini 2.0. It is renowned for its deep reasoning abilities, low hallucination rate, and strong performance on complex, multi-step tasks. The "Preview" designation indicates a limited-access, pre-full-release version typically offered to select enterprise and research partners for evaluation and integration.

Anthropic, founded by former OpenAI research executives, has consistently emphasized AI safety and constitutional AI principles. However, its status as a private company with significant investment from technology giants has made its products subject to government scrutiny regarding data sovereignty and potential foreign influence.

The Security Paradox

The NSA's actions highlight a recurring tension in national security technology procurement. On one hand, agencies must vet vendors for potential vulnerabilities, foreign ties, or dependencies that could compromise sensitive operations—a process that leads to "supply chain risk" labels. On the other hand, falling behind in the adoption of transformative technologies like advanced AI poses its own, potentially greater, national security risk.

The use of Mythos suggests the NSA performed a detailed evaluation and likely established stringent safeguards, such as air-gapped deployments, rigorous output auditing, or task-specific limitations, to mitigate the supply chain concerns while harnessing the model's power.

gentic.news Analysis

This development is a significant data point in the ongoing narrative of government adoption of frontier AI. It follows our previous coverage on the CIA's contract with OpenAI for bespoke analysis tools and the Department of Defense's testing of various LLMs for logistics and planning. The NSA's move indicates that, despite public caution and regulatory debates, intelligence and defense communities are aggressively integrating these models into core missions.

The contradiction between the "risk" label and operational use is less surprising upon closer inspection. Anthropic, while labeled a risk, is a U.S.-domiciled company. The risk likely pertains to its cloud infrastructure dependencies or its investor base, which includes Amazon and Google. This is a different category of risk than procuring hardware or software directly from adversarial nations. The calculus appears to be that the risks associated with Anthropic's corporate structure are manageable, especially when compared to the alternative: not having access to a top-tier reasoning model that adversaries may already be using.

This also reinforces a trend we've noted: capability is the ultimate trump card. For mission-critical applications where AI can provide a decisive advantage, agencies are willing to navigate complex procurement and security hurdles. The NSA's endorsement serves as a powerful, albeit tacit, benchmark for Mythos's performance, suggesting it has surpassed available open-source or in-house alternatives for specific tasks. This is likely to influence procurement decisions across other federal agencies and allied governments.

Frequently Asked Questions

What is Claude Mythos?

Claude Mythos is Anthropic's most advanced AI model, focused on deep reasoning and complex problem-solving. It is part of the latest generation of frontier models that prioritize accuracy and logical chain-of-thought over raw creative generation.

Why would the NSA label Anthropic a supply chain risk?

Supply chain risk assessments evaluate all aspects of a vendor's ecosystem. For Anthropic, concerns could include its reliance on major cloud providers (AWS, Google Cloud) for training and hosting, the composition of its investor base, or security practices around its training data and model weights. The label is a standard precaution, not necessarily an accusation of wrongdoing.

Does this mean the NSA's data is being sent to Anthropic?

Almost certainly not. For a sensitive organization like the NSA, deployment of a model like Mythos would follow one of two patterns: a heavily secured API connection with strict data governance agreements or, more likely, an on-premises or air-gapped deployment of the model. In the latter case, the agency would run the model on its own secure servers, with no data leaving its infrastructure.

What tasks would the NSA use an AI like Mythos for?

While specific use cases are classified, plausible applications include analyzing vast volumes of foreign signals intelligence (SIGINT) to surface patterns and connections, assisting in complex code analysis and vulnerability research, summarizing and cross-referencing technical reports, and simulating or planning logistical operations.