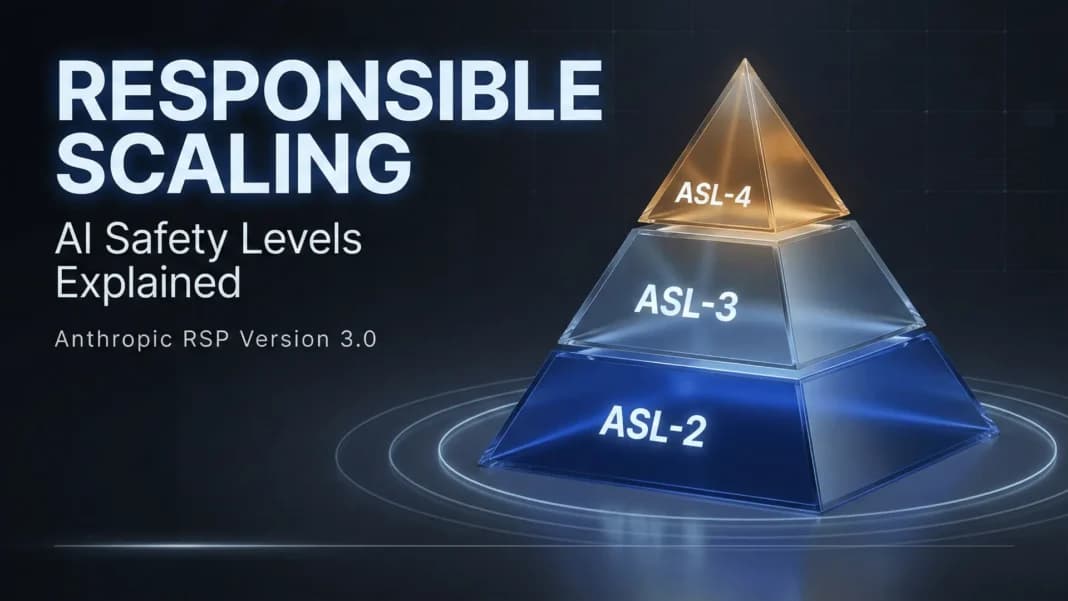

On February 24, 2026, Anthropic released version 3.0 of its Responsible Scaling Policy (RSP), marking a significant evolution in how one of the leading AI companies approaches safety governance. The update represents a philosophical shift from what some perceived as "binding ourselves to the mast" commitments toward a more adaptive, transparent framework that emphasizes continuous assessment and external accountability.

The Core Changes in RSP v3.0

The most notable change in RSP v3.0 is the move away from rigid, unilateral commitments to pause development under specific conditions. While previous versions created the impression of irreversible safety commitments, the new framework acknowledges that responsible scaling requires flexibility and responsiveness to changing circumstances.

Key components of the updated policy include:

- Risk Reports: Regular, detailed assessments of AI capabilities and potential hazards

- External Review Mechanisms: Increased transparency and third-party evaluation of safety practices

- Roadmap Integration: Better alignment between safety protocols and development timelines

- Removal of Distorting Requirements: Elimination of previous rules that the company says were creating perverse incentives in safety efforts

Why Anthropic Made This Shift

According to the detailed analysis published on LessWrong by an Anthropic employee (who emphasizes these are personal views, not official company statements), the revision wasn't prompted by an immediate increase in catastrophic risk from current AI systems. Rather, it reflects accumulated learning about the limitations of the previous framework.

The author notes taking "significant responsibility for this change," having pushed for the revision for about a year and led development of the new RSP. The motivation stems from recognizing that the previous approach had design flaws that needed addressing, particularly around how safety commitments interacted with competitive dynamics in the AI industry.

The Competitive Landscape Consideration

One important factor behind the revision is the acknowledgment that unilateral safety commitments become hard to sustain when other AI developers don't adhere to similar standards. The original RSP contained language allowing revision if other companies weren't following comparable safety practices, but this nuance was often overlooked in public discussion.

The new framework appears designed to create a more sustainable approach to safety that can withstand competitive pressures while maintaining high standards. This reflects the reality that AI safety cannot be achieved by one company acting alone in a rapidly advancing field with multiple major players.

Implications for AI Governance

RSP v3.0 represents a maturation of corporate AI governance approaches. By moving toward external review and regular risk reporting, Anthropic is adopting practices more commonly associated with regulated industries like pharmaceuticals or aviation. This could set a precedent for how AI companies operationalize their ethical commitments.

The emphasis on transparency through risk reports addresses a common criticism of AI safety efforts: that they happen behind closed doors without sufficient external scrutiny. If implemented effectively, this could improve public trust and enable more informed policy discussions about AI risks.

Potential Criticisms and Concerns

As the author anticipates, some observers will be "upset about the move away from a 'hard commitments' vibe." Critics may argue that flexible standards are easier to circumvent when commercial pressures mount, and that the strength of the previous approach lay precisely in its perceived irrevocability.

There's also a risk that "adaptive" frameworks could become excuses for lowering standards rather than improving them. The effectiveness of the external review mechanisms will be crucial in determining whether the revision represents genuine progress or a retreat from ambitious safety commitments.

The Broader AI Safety Ecosystem Impact

Anthropic's policy evolution comes at a critical moment for AI governance, with multiple companies developing their own scaling policies and governments considering regulatory frameworks. The shift toward more transparent, reviewable safety practices could influence industry norms and potentially inform regulatory approaches.

The author expresses hope that other companies will make similar changes, suggesting the revision could catalyze improvements across the industry rather than signal a lowering of standards. That hope reflects the interconnected nature of AI safety: no single company's policies exist in isolation.

Looking Forward: Implementation and Evolution

The true test of RSP v3.0 will be in its implementation. Key questions include:

- How rigorous will the external review processes be?

- Will risk reports contain meaningful information or become sanitized public relations documents?

- How will the company balance flexibility with maintaining safety standards under pressure?

As AI capabilities continue to advance, governance frameworks must evolve accordingly. RSP v3.0 represents Anthropic's attempt to create a more sustainable, effective approach to responsible scaling, one that can adapt to new challenges while maintaining core safety principles.

Source: Anthropic's Responsible Scaling Policy v3.0 announcement and analysis published on LessWrong by an Anthropic employee (personal views).