New research from Anthropic investigates the economic dimensions of public sentiment toward artificial intelligence, drawing on a survey of 81,000 people conducted last month. The work aims to translate broad public concerns and aspirations into concrete priorities for AI safety and development.

Key Takeaways

- Anthropic published new research analyzing the economic hopes and worries expressed by 81,000 people in a prior survey on AI.

- The findings aim to guide AI development toward public priorities.

What the Research Examined

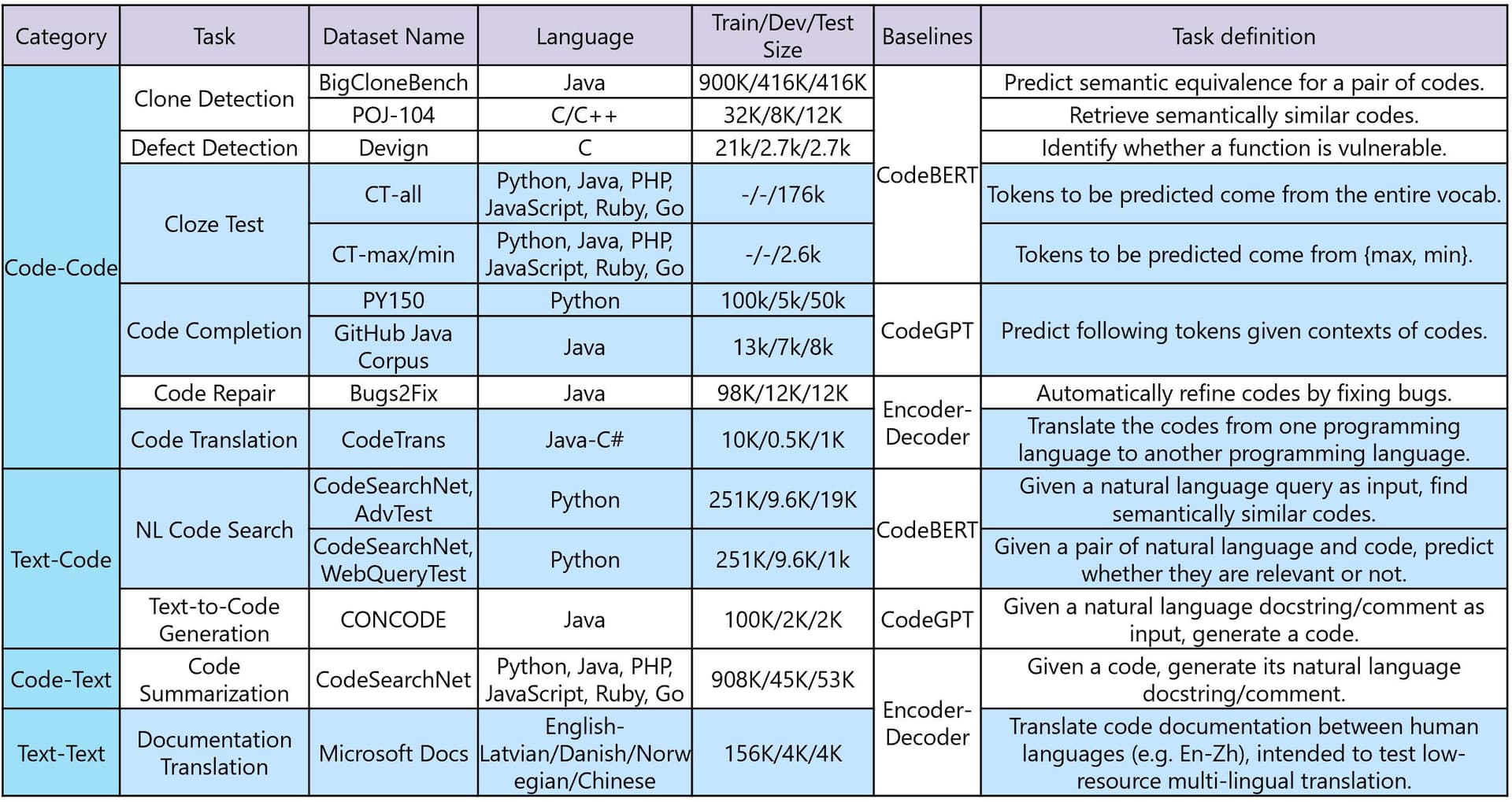

The analysis focuses specifically on the economic hopes and worries referenced within the open-ended responses from Anthropic's earlier public survey. Rather than presenting new polling data, this research performs a qualitative and quantitative dissection of the economic themes that emerged organically when people were asked what they want from AI.

Key Themes from Public Responses

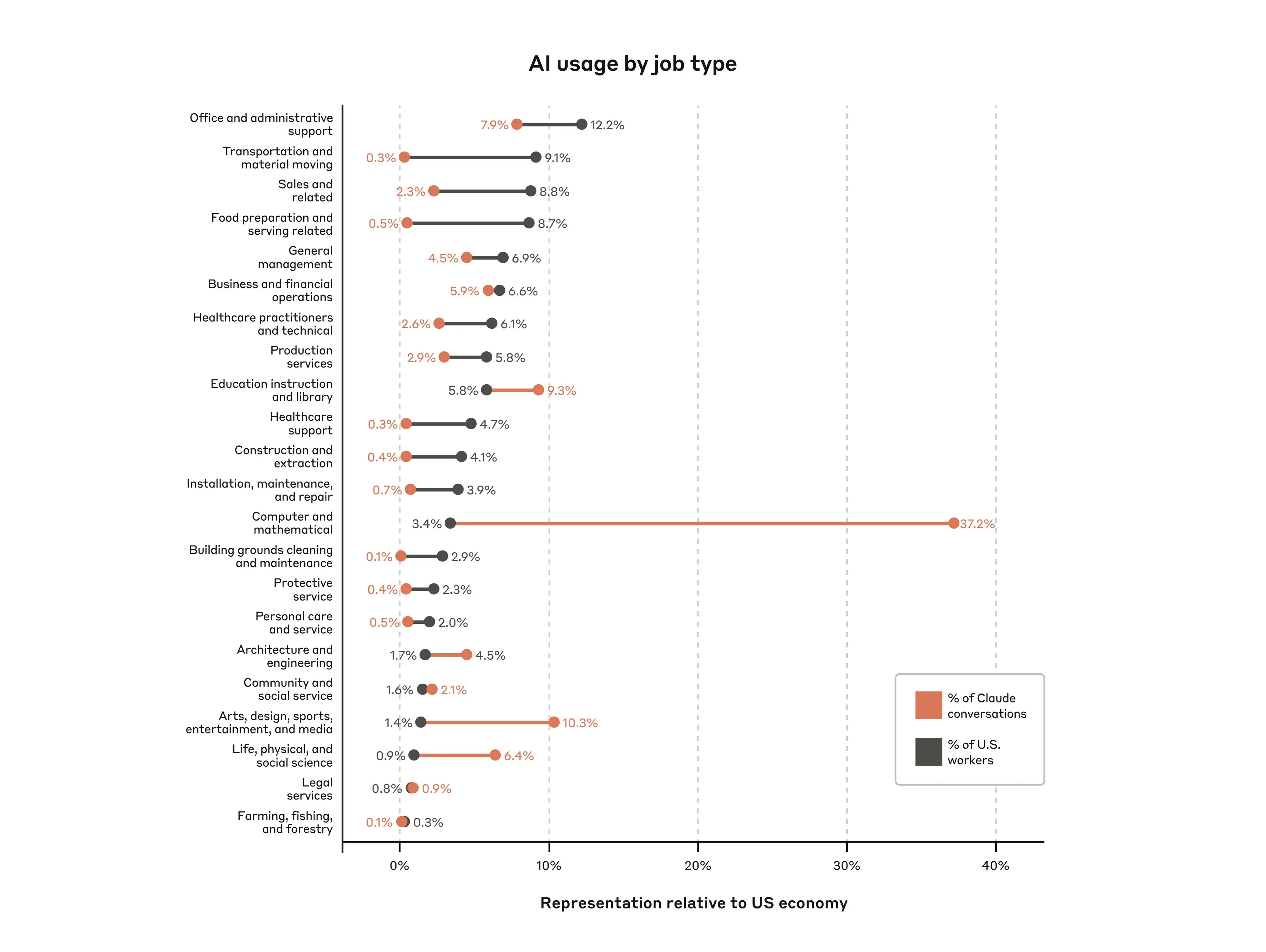

While the full paper provides the detailed breakdown, the research categorizes economic sentiments into several key areas. These typically include concerns about job displacement and labor market transformation, hopes for increased productivity and economic growth, anxieties about inequality and access, and speculation about new forms of work and industry. The value of the research lies in ranking the prevalence and urgency of these themes from a large, global sample.

Connecting Public Sentiment to AI Development

For Anthropic, a company whose stated mission is to build safe and beneficial AI, this research is a direct input into its development process. Understanding public priorities, especially regarding economic stability, informs how the company approaches model deployment, safety research, and policy advocacy. The goal is to ensure AI development is responsive to the societal risks and benefits that matter most to people.

The Context of AI Public Trust

This research is part of a broader, industry-wide effort to gauge and address public trust in AI. As large language models become more capable and more integrated into economic systems, managing the societal transition is as critical as the technical challenge of improving benchmark scores. Anthropic's large-scale survey work provides a data-driven alternative to speculative debates about AI's impact.

gentic.news Analysis

This research represents a strategic and necessary investment for Anthropic. In the competitive landscape of frontier AI labs, dominated by OpenAI, Google DeepMind, and Meta, technical prowess is table stakes. Anthropic has consistently differentiated itself through its principled approach to safety, embodied by its Constitutional AI training framework. This public sentiment research extends that differentiation into the sociotechnical domain. It provides an empirical foundation for Anthropic's policy positions and safety priorities, which are increasingly scrutinized by regulators.

The focus on economic impact is particularly timely. As we covered in our analysis of the 2026 AI Impact Act hearings, legislative bodies are intensely focused on labor market displacement and economic inequality. Anthropic's data allows it to engage in these policy debates from a position of evidence, not just theory. The work also fits a pattern of AI labs investing in anthropological and sociological research to understand model impact, which we noted in our piece on DeepMind's Ethics & Society team expansion.

Practically, this research likely feeds directly into the development of Anthropic's next-generation models, like the anticipated successor to Claude 3.5 Sonnet. If a core public concern is job displacement in creative fields, for instance, Anthropic might prioritize research on AI-as-assistant paradigms over AI-as-replacement in those domains. This aligns with a broader industry pivot towards agentic workflows that augment human capability, a trend we've tracked closely in our agent coverage.

Frequently Asked Questions

What did Anthropic's original survey ask?

The survey, conducted a month prior to this economic analysis, asked a simple, open-ended question to 81,000 participants globally: "What do you want from AI?" The new research paper digs into the subset of responses that explicitly mentioned economic or work-related outcomes.

How will this research affect Anthropic's AI models?

The findings are intended to inform Anthropic's safety research, policy development, and product deployment strategies. For example, if fears of job displacement in specific sectors are highly prevalent, Anthropic might enhance its models' capabilities as collaborative tools for those professions or develop more robust safeguards against fully automated replacement in sensitive areas.

Is this research peer-reviewed?

The research is published by Anthropic's research team. It is not listed as an academic peer-reviewed paper but as an official company research publication, similar to many technical reports released by AI labs. The methodology and full data analysis are contained within the report.

How does this compare to other AI sentiment surveys?

Anthropic's survey is notable for its very large sample size (81,000) and open-ended format, which avoids leading questions. Other efforts, like the annual surveys from the Pew Research Center or Stanford's AI Index, often use multiple-choice questions on predefined topics. Anthropic's approach aims to capture top-of-mind, unprompted concerns from a global audience.