Anthropic is experimenting with a novel model orchestration strategy: allowing its Claude 3.5 Sonnet model to automatically call upon the more powerful Claude 3 Opus when faced with complex tasks. This "phone a friend" mechanism, revealed in a social media post by a company employee, is designed to enhance performance on challenging queries while simultaneously reducing total computational cost.

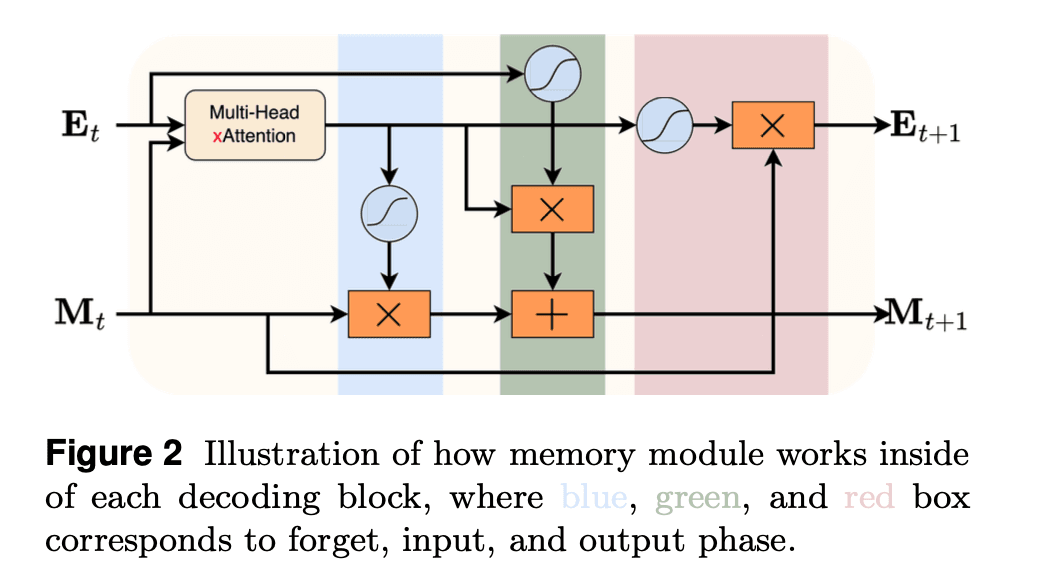

The core idea is intelligent routing. Instead of a user or application always defaulting to the most expensive model (Opus), or accepting potentially lower-quality outputs from a cheaper model (Sonnet) on hard problems, the system tries to choose the right tool dynamically. Claude 3.5 Sonnet would handle the majority of requests independently. When it encounters a problem it deems sufficiently difficult, or perhaps after struggling for a certain number of reasoning steps, it can pass the task, along with its context, to Claude 3 Opus to complete.
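The handoff flow described above can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the post does not say how Sonnet decides to defer, so the `confident` flag, the `max_steps` budget, and the two stub models are hypothetical stand-ins, not part of any Anthropic API.

```python
from dataclasses import dataclass, field
from typing import Callable, List


@dataclass
class Attempt:
    answer: str
    confident: bool  # hypothetical self-assessment signal from the small model


@dataclass
class Result:
    answer: str
    model_trace: List[str] = field(default_factory=list)


def run_with_handoff(
    task: str,
    small_model: Callable[[List[str]], Attempt],
    large_model: Callable[[List[str]], str],
    max_steps: int = 3,  # assumed step budget before deferring
) -> Result:
    """Let the small model work first; defer to the large model with full context."""
    transcript = [task]
    trace: List[str] = []
    for _ in range(max_steps):
        attempt = small_model(transcript)
        trace.append("small")
        transcript.append(attempt.answer)
        if attempt.confident:
            return Result(attempt.answer, trace)
    # Step budget exhausted: hand the task and the accumulated context over.
    trace.append("large")
    return Result(large_model(transcript), trace)


# Toy stand-ins so the sketch runs without any network calls.
def toy_small(transcript: List[str]) -> Attempt:
    hard = "prove" in transcript[0].lower()
    return Attempt(answer="draft", confident=not hard)


def toy_large(transcript: List[str]) -> str:
    return f"final answer using {len(transcript) - 1} prior steps of context"


print(run_with_handoff("Add 2 and 2", toy_small, toy_large).model_trace)
print(run_with_handoff("Prove the lemma", toy_small, toy_large).model_trace)
```

In this toy version the easy task never leaves the small model, while the hard one is escalated only after the step budget runs out, with the small model's drafts carried along as context.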

What Happened

Alex Albert, an employee at Anthropic, posted about the experiment on social media. The post stated: "Allowing Sonnet to 'phone a friend' (i.e. call Opus) increases performance while also reducing total cost since it reduces tokens spent trying to solve more complex tasks."

The accompanying graphic suggests this is an internal experiment or a feature under development. The logic is economic and performance-driven: Opus, while more capable, is also more expensive per token. If Sonnet can solve simpler tasks efficiently but knows when to defer, the average cost per task drops, and the success rate on hard tasks increases.
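A toy calculation makes the economics concrete. Every number here is invented for illustration: the relative prices, the token counts, and the 8-to-2 mix of easy and hard tasks are assumptions, not figures from the post or from Anthropic's price list.

```python
# Hypothetical relative prices per token (arbitrary units, not real pricing).
PRICE = {"small": 1, "large": 5}


def average_cost(usages, price=PRICE):
    """Average cost per task; each usage maps a model tier to tokens spent."""
    total = sum(
        tokens * price[model]
        for usage in usages
        for model, tokens in usage.items()
    )
    return total / len(usages)


EASY, HARD = 8, 2  # assumed workload: 8 easy tasks, 2 hard ones

# Small model alone: cheap on easy tasks, burns many tokens flailing on hard ones.
small_only = [{"small": 1_000}] * EASY + [{"small": 20_000}] * HARD
# Large model alone: solves everything, but pays the higher rate throughout.
large_only = [{"large": 1_000}] * EASY + [{"large": 3_000}] * HARD
# Handoff: small model tries briefly on hard tasks, then the large model finishes.
handoff = [{"small": 1_000}] * EASY + [{"small": 2_000, "large": 3_000}] * HARD

print(average_cost(small_only))  # 4800.0
print(average_cost(large_only))  # 7000.0
print(average_cost(handoff))     # 4200.0
```

Under these assumptions the handoff strategy is the cheapest of the three, but the result depends entirely on the inputs: if the small model deferred late or the hard-task share were much higher, the ordering could change.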

Technical Implications

This approach sits at the intersection of several active research and engineering trends:

- Model Routing & Cascading: Instead of a static model choice, systems intelligently route queries to the most cost-effective model likely to succeed. This is similar to cascading systems used in search and classification for decades.

- Mixture-of-Agents (MoA): Mixture-of-Experts (MoE) routes work among sub-networks inside a single model. This approach applies a similar idea at the API level, as a Mixture-of-Agents in which different, fully separate models collaborate.

- Cost-Aware Inference: A primary goal is explicitly reducing inference cost, a major operational concern for companies deploying LLMs at scale.

The key technical challenge is the "deferral decision." Sonnet must accurately assess task complexity and its own likelihood of success. A poorly tuned deferral mechanism could lead to excessive, costly calls to Opus for simple tasks or, conversely, Sonnet wasting tokens (and producing poor results) on tasks it should have passed off.
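The two failure modes of the deferral decision can be made visible with a small threshold sweep. This sketch assumes the small model emits a numeric difficulty estimate between 0 and 1 and defers whenever that estimate reaches a threshold. Both the estimate and the labeled cases are hypothetical, since the post does not describe how the decision is actually made.

```python
def deferral_errors(cases, threshold):
    """Count both kinds of deferral mistakes for a given threshold.

    cases: list of (difficulty_estimate, truly_hard) pairs.
    Returns (unnecessary_escalations, missed_escalations).
    """
    unnecessary = sum(1 for est, hard in cases if est >= threshold and not hard)
    missed = sum(1 for est, hard in cases if est < threshold and hard)
    return unnecessary, missed


# Invented evaluation set: (estimated difficulty, whether the task was truly hard).
cases = [
    (0.1, False), (0.3, False), (0.4, True),
    (0.6, False), (0.7, True), (0.9, True),
]

for threshold in (0.2, 0.5, 0.8):
    print(threshold, deferral_errors(cases, threshold))
# 0.2 (2, 0)  -> defers too eagerly: two easy tasks sent to the large model
# 0.5 (1, 1)
# 0.8 (0, 2)  -> defers too rarely: two hard tasks left with the small model
```

A low threshold pays for large-model calls that were not needed, while a high one leaves hard tasks with the small model. Tuning means picking the point where the combined cost of both errors is lowest for a given workload.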

How It Compares

This is not the first instance of model routing, but its implementation within a single vendor's tightly controlled model family is notable.

- vs. Manual Model Selection: This automates what developers currently do manually: writing logic to choose between Sonnet and Opus APIs based on heuristics.

- vs. External Routers: Services like Martian Router or OpenRouter dynamically select between models from different providers (OpenAI, Anthropic, etc.) based on price and performance benchmarks. Anthropic's approach is a proprietary, intra-family optimizer.

- vs. Pure Cascades: Traditional cascades often use a fast, cheap model to handle easy cases and forward only the remainder to a slow, accurate model. Here, the first model (Sonnet) actively works on the problem before potentially deferring, which is more akin to collaborative problem-solving.
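For contrast, the manual selection mentioned in the first comparison usually looks something like the heuristic below: the choice is made once, up front, from surface features of the prompt. The keyword list, the length cutoff, and the tier labels `"small"` and `"large"` are illustrative assumptions, not real model identifiers or recommended settings.

```python
def choose_model(
    prompt: str,
    hard_keywords=("prove", "refactor", "multi-step"),  # assumed difficulty hints
    long_prompt_chars: int = 4_000,  # assumed cutoff for "long" prompts
) -> str:
    """Pick a model tier before any work is done, using surface heuristics."""
    text = prompt.lower()
    if len(prompt) > long_prompt_chars or any(k in text for k in hard_keywords):
        return "large"
    return "small"


print(choose_model("Summarize this email in two sentences."))   # small
print(choose_model("Prove that the algorithm terminates."))     # large
```

A router like this never sees how the small model actually fares on the task, which is the gap the "phone a friend" approach aims to close by letting the model itself decide mid-task.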

What to Watch

If this feature moves from experiment to a public API offering, it could significantly change how developers architect their use of Anthropic's models. Key questions remain:

- Transparency: Will developers see when a "handoff" occurs, and will they be billed separately for Sonnet and Opus tokens?

- Control: Can developers set thresholds or rules for when Sonnet should call Opus?

- Latency: What is the added latency of the handoff process itself?

- Benchmarks: What are the measurable performance gains and cost savings on standardized benchmarks?

gentic.news Analysis

This experiment is a logical next step in Anthropic's commercial and technical strategy following the release of the Claude 3 model family in March 2024. The three-tiered family (Haiku, Sonnet, Opus) was explicitly designed for different cost-performance trade-offs. This "phone a friend" system is an attempt to blur those tiers dynamically, creating a more adaptive and economically efficient product.

It aligns with a broader industry trend towards inference optimization, as covered in our analysis of vLLM's continuous batching and SambaNova's dataflow architecture. As model APIs become commodities, vendors are competing on total cost of ownership and smart orchestration, not just raw benchmark scores. Anthropic's move can be seen as a defensive play against similar routing services from third parties and an offensive move to lock developers deeper into its ecosystem by simplifying cost optimization.

Furthermore, this mirrors internal techniques used by large tech companies for years. Google, for instance, has long used cascades of smaller, faster models for tasks like query understanding before invoking heavier machinery. Anthropic is productizing this pattern for the API economy. If successful, it pressures competitors like OpenAI to offer similar dynamic routing between, say, GPT-4o and o1 models, and pushes open-source model providers to enable easy cascading between different model sizes.

Frequently Asked Questions

What is Claude 3.5 Sonnet "phoning a friend"?

It's an experimental system where the Claude 3.5 Sonnet AI model can automatically pass a difficult task it's working on to the more powerful (and expensive) Claude 3 Opus model. Sonnet starts the task, and if it determines the problem is too complex, it calls Opus to finish it, aiming for better results at a lower total cost than just using Opus from the start.

How does calling Opus reduce total cost?

The logic is that Opus costs more per token than Sonnet. A user who sent every task to Opus would pay the highest rate on all of them. A user who sent every task to Sonnet might get poor results on hard tasks, wasting the tokens spent on them. By having Sonnet handle easy tasks alone and call Opus only for the hard parts, the system lowers the average cost per task, because more of the work is done by the cheaper model.

Is this feature available in the Anthropic API now?

No. This was revealed as an internal experiment by an Anthropic employee. There is no public timeline, and no confirmation of whether or when this capability will be added to the official Claude API for developers to use.

Could this work with other AI models?

The concept of model routing or cascading is universal. Technically, you could build a system where any smaller model calls a larger one. However, Anthropic's experiment likely benefits from both models (Sonnet and Opus) sharing the same underlying architecture, training data, and "reasoning style," making the handoff of context between them more seamless and effective.