In a public statement, Amazon Web Services (AWS) CEO Matt Garman confirmed that "all of Anthropic’s latest models are trained on Trainium." This direct acknowledgment from AWS's top executive is the clearest signal yet of how deep the technical partnership between the cloud giant and a leading AI research company runs.

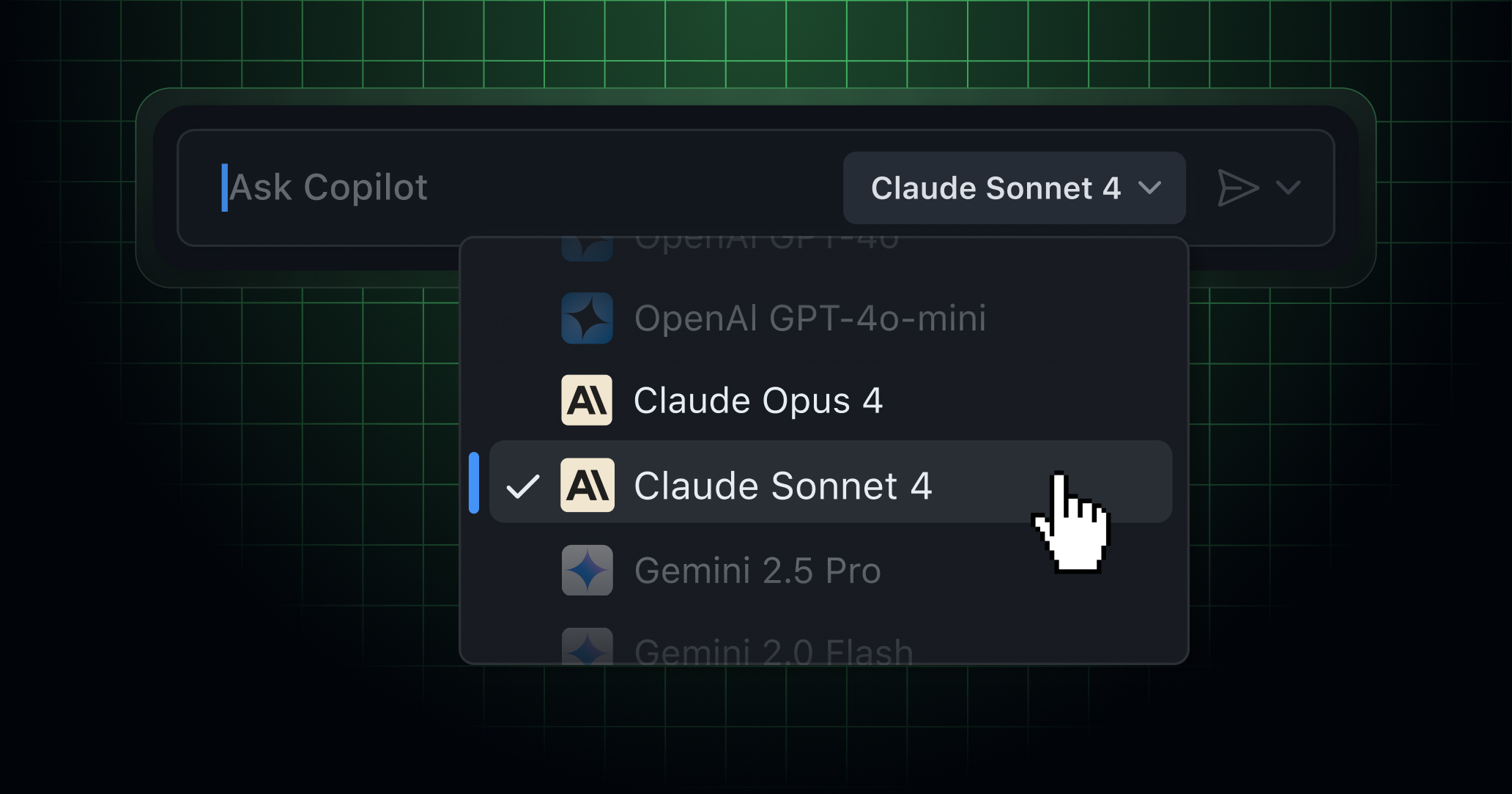

The statement, made during a public appearance and reported via social media, ties the training of Anthropic's flagship Claude models, a family that includes the recent Claude 3.5 Sonnet and Claude 3 Opus, to Amazon's custom-designed AI accelerator chips. For AI practitioners, this confirms the training hardware behind one of the industry's most capable model families.

What This Means for the AI Stack

Trainium and its successor, Trainium2, are AWS's purpose-built chips for training machine learning models, offered through the EC2 Trn1 and Trn2 instance families respectively. They are Amazon's competitive answer to NVIDIA's dominant H100 and upcoming Blackwell GPUs. By stating that Anthropic's latest models are trained on Trainium, Garman indicates a shift or consolidation of Anthropic's training workload onto AWS's proprietary silicon.

This has several immediate implications:

- Supply Chain Independence: It demonstrates that a top-tier AI lab can build state-of-the-art models without relying solely on NVIDIA GPU supply, which has been constrained.

- Performance Validation: For Trainium to be chosen for the entire training cycle of models as complex as Claude 3.5 Sonnet, it must meet Anthropic's stringent requirements for stability, scalability, and cost-effectiveness.

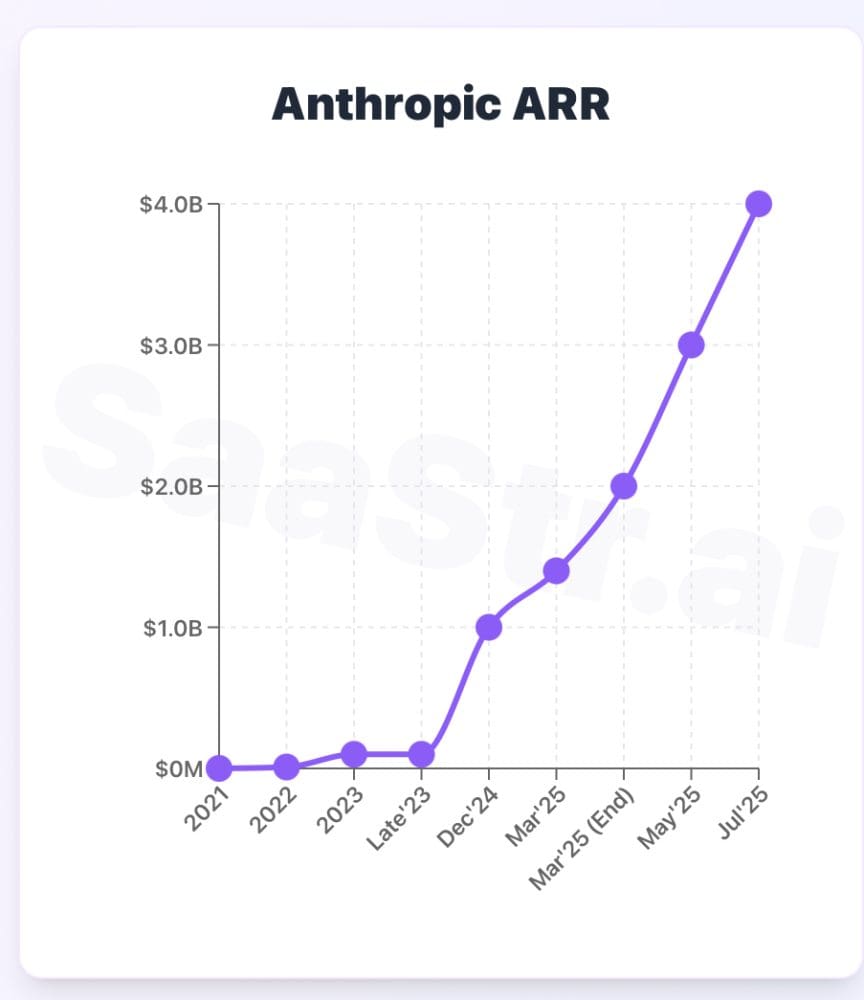

- Strategic Lock-in: The $4 billion investment Amazon made in Anthropic in 2023 is now coupled with a deep technical dependency, as model training infrastructure is non-trivial to migrate.

The Broader Cloud-AI Partnership

Anthropic does not just use AWS for compute; AWS is the primary cloud provider for the company's workloads. Furthermore, Anthropic has made Claude available on Amazon Bedrock, AWS's managed service for foundation models, and has committed to providing AWS customers with early access to unique features.

Garman's comment serves as a powerful case study for AWS in its competition with Microsoft Azure (which has a deep partnership with OpenAI) and Google Cloud Platform. The message to enterprise clients is that the most advanced AI models are being built natively on AWS hardware.

gentic.news Analysis

This confirmation is the logical culmination of a partnership trajectory we have been tracking since late 2023. As we reported following Amazon's $4 billion investment in Anthropic in September 2023, the deal was always about more than capital; it was about forging a primary cloud and silicon alliance. This move directly counters the Microsoft-OpenAI and Google-DeepMind vertical integrations, creating a third powerful pole in the AI infrastructure landscape.

The timing is also critical. With NVIDIA's next-generation Blackwell GPUs on the horizon, AWS is aggressively proving the viability of its alternative stack now. Successfully training models at the scale of Claude provides tangible evidence for AWS's "Project Rainier," the Trainium2 cluster AWS is building for Anthropic, which AWS has described as containing hundreds of thousands of chips. For AI engineers, the practical takeaway is that a viable, high-performance alternative to NVIDIA for large-scale training now exists in production, which could improve availability and potentially apply downward pressure on cloud training costs over time.

However, this deep integration raises questions about neutrality. Anthropic has maintained a multi-cloud posture, with its models also available on Google Cloud Vertex AI. Garman's statement underscores where the core R&D happens. The long-term risk for Anthropic is the same as for OpenAI with Microsoft: an increasing dependency on a single infrastructure provider, which could influence strategic roadmaps.

Frequently Asked Questions

What is Amazon Trainium?

Amazon Trainium is a custom-designed machine learning accelerator chip built by AWS specifically for training deep learning models. It is optimized for high-performance, cost-effective training in the cloud and is offered as part of AWS's EC2 Trn1 and Trn2 instance families.

Does this mean Anthropic doesn't use NVIDIA GPUs?

Not necessarily. The statement specifies "latest models," which suggests that Claude 3.5 Sonnet and its immediate predecessors were trained on Trainium, although the quoted remark does not name specific models or training stages. Previous model generations or certain research workloads may have used other hardware. However, it marks a significant commitment to Trainium as their primary training platform.

Why does it matter what chips a model is trained on?

The training hardware defines the cost, speed, and scalability of AI development. By using Trainium, Anthropic is leveraging AWS's integrated stack, which can be optimized end-to-end. It also reduces their exposure to the competitive and supply-constrained market for NVIDIA GPUs. For customers, it validates AWS's AI infrastructure as capable of producing top-tier models.

Can I access Trainium chips to train my own models?

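Yes. AWS customers can provision Trn1 or Trn2 instances via Amazon EC2, subject to regional availability and account quotas, or use them for training jobs through Amazon SageMaker. Amazon Bedrock, by contrast, is a managed service for calling foundation models and does not let customers select Trainium hardware directly. Garman's statement serves as a high-profile endorsement of Trainium's capability for large-scale, cutting-edge model training.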
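
For readers who want to check availability themselves, the following is a minimal sketch, not an official AWS recipe. It assumes the boto3 SDK is installed and AWS credentials are configured, and it uses the EC2 DescribeInstanceTypeOfferings call to list which Availability Zones in a region offer Trainium instances. The instance type names in `TRAINIUM_TYPES` and the helper names (`build_request`, `group_by_type`, `trainium_zones`) are illustrative assumptions, not part of any AWS API.

```python
from collections import defaultdict

# Assumption: these are Trainium instance sizes AWS has published;
# adjust the list to whatever your account and region actually expose.
TRAINIUM_TYPES = ["trn1.2xlarge", "trn1.32xlarge", "trn1n.32xlarge", "trn2.48xlarge"]


def build_request(instance_types):
    """Build keyword arguments for EC2 DescribeInstanceTypeOfferings."""
    return {
        "LocationType": "availability-zone",
        "Filters": [{"Name": "instance-type", "Values": list(instance_types)}],
    }


def group_by_type(response):
    """Map each instance type to the sorted Availability Zones offering it."""
    zones = defaultdict(list)
    for offering in response.get("InstanceTypeOfferings", []):
        zones[offering["InstanceType"]].append(offering["Location"])
    return {itype: sorted(locs) for itype, locs in zones.items()}


def trainium_zones(region_name):
    """Query a single region; needs boto3 and configured AWS credentials."""
    import boto3  # imported lazily so the pure helpers above work without it

    ec2 = boto3.client("ec2", region_name=region_name)
    # Pagination (NextToken) is ignored here because the filter is narrow.
    response = ec2.describe_instance_type_offerings(**build_request(TRAINIUM_TYPES))
    return group_by_type(response)
```

Calling `trainium_zones("us-east-1")` would return a dictionary keyed by instance type. An empty dictionary means none of the listed types is offered in that region, and a listed offering still does not guarantee that your account has quota to launch it.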