In a move that could reshape the competitive landscape of enterprise AI, Anthropic has eliminated premium pricing for extended context windows in its flagship Claude models. The company has removed the input-token surcharge previously applied to contexts exceeding 200,000 tokens for Opus 4.6 and Sonnet 4.6, making million-token processing available at standard rates.

The Pricing Transformation

Until this update, Anthropic's pricing structure imposed significant financial barriers for applications requiring deep analysis of large documents. For contexts beyond 200,000 tokens, the company charged a surcharge of up to 100 percent, which at its maximum doubled the input-token cost. This premium reflected the computational intensity of processing such extensive inputs but made million-token usage prohibitively expensive for many practical applications.

With the new pricing model, both Opus 4.6 and Sonnet 4.6 now offer their full one-million-token context window at standard rates: $5 per million input tokens and $25 per million output tokens for Opus, and $3/$15 for Sonnet. Whether a prompt contains 9,000 tokens or 900,000 tokens no longer affects the per-token rate, a fundamental shift in how long-context AI is monetized.
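The flat-rate arithmetic can be sketched in a few lines of Python. The rates are the ones quoted above; the dictionary keys and the function name are illustrative labels rather than official API model identifiers, and the sketch ignores any discounts or billing details not covered here.

```python
# USD per million tokens, as quoted in the article.
# The keys are illustrative labels, not official API model identifiers.
RATES_PER_MILLION = {
    "opus-4.6": {"input": 5.00, "output": 25.00},
    "sonnet-4.6": {"input": 3.00, "output": 15.00},
}


def estimate_cost(model: str, input_tokens: int, output_tokens: int = 0) -> float:
    """Estimate the cost in USD of one request at the flat standard rates."""
    rates = RATES_PER_MILLION[model]
    total = input_tokens * rates["input"] + output_tokens * rates["output"]
    return total / 1_000_000


if __name__ == "__main__":
    # The same per-token rate applies to a short prompt and a near-full window.
    for model in RATES_PER_MILLION:
        for prompt_tokens in (9_000, 900_000):
            cost = estimate_cost(model, prompt_tokens)
            print(f"{model}: {prompt_tokens:>7,} input tokens -> ${cost:.3f}")
```

Under these assumptions, the input cost of a 900,000-token prompt comes to $4.50 on Opus and $2.70 on Sonnet, exactly 100 times the cost of a 9,000-token prompt.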

Expanded Capabilities and Availability

Beyond the pricing changes, Anthropic has raised media processing limits from 100 to 600 images or PDF pages per request. This enhancement, combined with the pricing overhaul, creates new possibilities for document-intensive workflows across industries.
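To see what the higher per-request limit means in practice, the following sketch splits a document into page ranges that each fit under a given limit. The limits of 100 and 600 come from the text above; the function name and the 1,500-page example are hypothetical, and the code does not call any Anthropic API.

```python
from typing import List, Tuple


def page_batches(total_pages: int, limit: int = 600) -> List[Tuple[int, int]]:
    """Return inclusive, 1-based (first, last) page ranges of at most `limit` pages."""
    if total_pages < 0 or limit <= 0:
        raise ValueError("total_pages must be >= 0 and limit must be > 0")
    return [
        (start, min(start + limit - 1, total_pages))
        for start in range(1, total_pages + 1, limit)
    ]


if __name__ == "__main__":
    pages = 1_500  # hypothetical document length
    print(len(page_batches(pages, limit=100)), "requests at the old limit")
    print(len(page_batches(pages, limit=600)), "requests at the new limit")
    print(page_batches(pages, limit=600))
```

For the hypothetical 1,500-page document, the request count drops from 15 to 3.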

The new pricing applies to Claude Code (Max, Team, and Enterprise tiers) and is available through major cloud platforms including Amazon Bedrock (though the media limit increase doesn't apply there), Google Cloud Vertex AI, and Microsoft Foundry. This broad availability ensures enterprise customers can leverage these changes across their existing cloud infrastructure.

Technical Context and Performance

Context windows represent a critical parameter in large language models, determining how much information an AI can process in a single interaction. Measured in tokens (roughly equivalent to words or subwords), these windows enable sophisticated tasks like summarizing entire books, reviewing massive legal contracts, analyzing extensive software repositories, or processing long conversation histories without truncation.

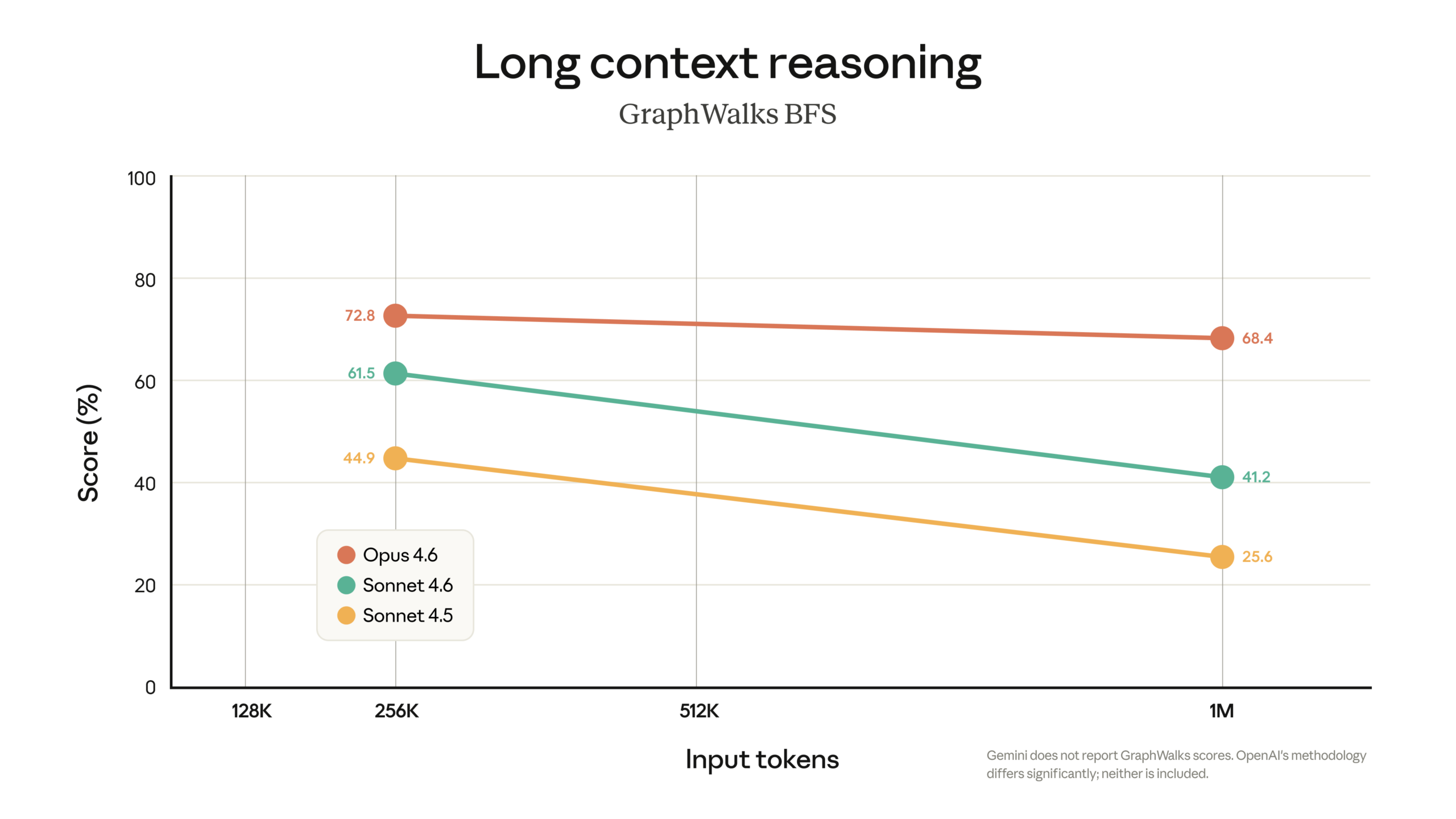

Claude's one-million-token capacity has been technically available but financially inaccessible to many potential users until now. According to Anthropic, both Opus 4.6 and Sonnet 4.6 achieve the highest accuracy among comparable models at full context length in benchmark tests. However, the company acknowledges that the broader challenge of maintaining precision as context windows fill up remains an ongoing area of research and development.

Competitive Implications

This pricing strategy positions Anthropic's models as highly competitive options for applications requiring deep analysis of large documents. By aligning extended context pricing with standard rates, the company addresses one of the primary barriers to adoption for enterprise use cases that involve processing extensive datasets, codebases, or documentation.

The move comes as competitors like OpenAI, Google, and emerging players continue to expand their own context window capabilities. Anthropic's decision to eliminate the premium for long contexts suggests confidence in its technical efficiency and a strategic focus on capturing market share in document-intensive AI applications.

Practical Applications Unlocked

The pricing change fundamentally alters the economics of several AI applications:

- Legal and Compliance: Reviewing entire case files, contracts, or regulatory documents becomes financially viable

- Academic Research: Analyzing complete research papers, theses, or historical archives

- Software Development: Processing entire code repositories for analysis, documentation, or refactoring

- Content Creation: Working with book-length manuscripts or extensive multimedia projects

- Customer Support: Maintaining complete conversation histories across extended customer relationships

The Future of Long-Context AI

While the pricing change represents significant progress, technical challenges remain. The problem Anthropic acknowledges, declining precision as context windows fill up, continues to be an area of active research across the AI industry. As models process more information, maintaining accuracy and coherence becomes increasingly difficult, a limitation that affects current large language models broadly, regardless of pricing.

Anthropic's move may pressure competitors to reconsider their own pricing structures for extended contexts, potentially accelerating industry-wide adoption of long-context capabilities. For developers and enterprises, this represents not just cost savings but expanded possibilities for what AI can accomplish with comprehensive document analysis.

Source: The Decoder - "Anthropic drops the surcharge for million-token context windows, making Opus 4.6 and Sonnet 4.6 far cheaper"