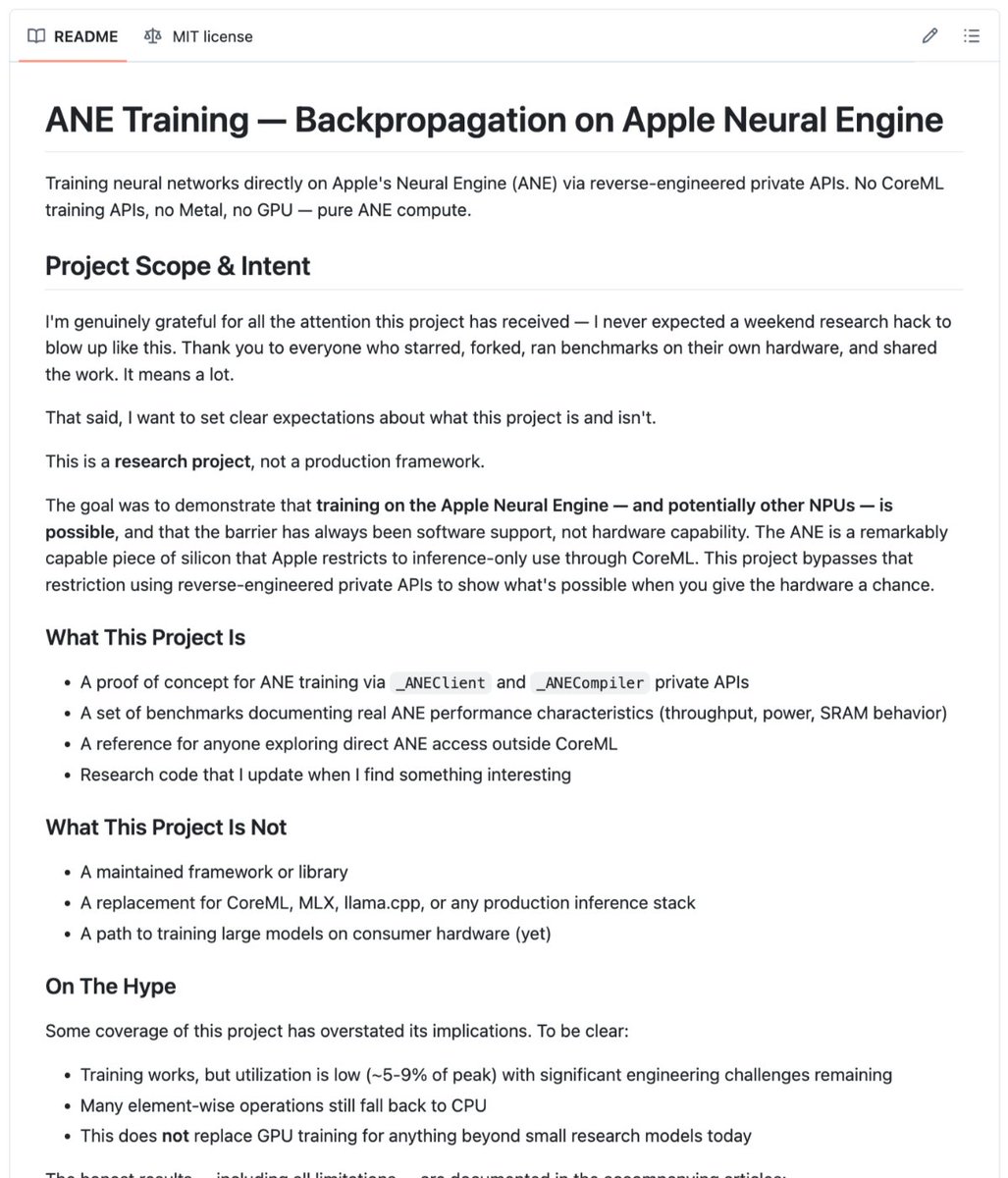

A researcher has reverse-engineered Apple's private Neural Engine (ANE) APIs to train transformer models directly on M-series Mac hardware. The approach bypasses Apple's CoreML framework entirely, exposing capabilities of the accelerator that Apple does not make available through public APIs.

The Neural Engine's Original Limitations

Apple's Neural Engine, embedded in every M-series chip, was designed for a specific purpose: efficient inference. The hardware accelerator excels at running pre-trained models for tasks like image recognition, natural language processing, and computational photography. However, Apple deliberately restricted its capabilities: it provides no public API for training operations, publishes no documentation for running backpropagation on the ANE, and keeps access under tight control through CoreML.

This design philosophy reflected Apple's focus on user experience—ensuring battery efficiency and thermal management by limiting the ANE to inference tasks. Training operations, which are computationally intensive and power-hungry, were intentionally left to the CPU and GPU, or pushed to cloud servers.

The Reverse-Engineering Breakthrough

The researcher circumvented these restrictions by directly accessing undocumented _ANEClient APIs and constructing programs in Apple's Model Intermediate Language (MIL). Rather than using Apple's official development tools, the project compiles programs in-memory and feeds data through IOSurface shared memory buffers—a method that completely bypasses CoreML.

Weights are baked into the compiled programs as constants, and each training step dispatches six custom kernels: attention forward, feedforward forward, and four backward passes that compute gradients with respect to inputs. Weight gradients are still computed on the CPU using Accelerate's matrix libraries, but the forward passes and input-gradient backward passes, including their matrix multiplies, softmax calculations, and activation functions, now execute directly on the ANE hardware.
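The division of labor described above can be sketched for a single linear layer. This is only an illustration in plain Python, not the project's code: the function names are hypothetical, ordinary list arithmetic stands in for both the ANE kernels and Accelerate, a linear layer stands in for the attention and feedforward blocks, and the SGD update and learning rate are assumptions, since the source does not name an optimizer.

```python
# Illustrative only: plain-Python stand-ins for the accelerator/CPU split.
# Nothing here calls a real Apple API; all names are hypothetical.

def matmul(a, b):
    """Multiply two matrices stored as lists of rows."""
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*b)] for row in a]

def transpose(m):
    """Return the transpose of a matrix stored as a list of rows."""
    return [list(col) for col in zip(*m)]

def forward_kernel(x, w):
    """Stand-in for a forward kernel: y = x @ w."""
    return matmul(x, w)

def backward_input_kernel(dy, w):
    """Stand-in for a backward kernel: gradient w.r.t. the input, dx = dy @ w^T."""
    return matmul(dy, transpose(w))

def weight_gradient_cpu(x, dy):
    """Stand-in for the CPU-side step: gradient w.r.t. the weights, dw = x^T @ dy."""
    return matmul(transpose(x), dy)

def sgd_update(w, dw, lr):
    """Assumed optimizer: plain gradient descent, returning a new weight matrix."""
    return [[wv - lr * gv for wv, gv in zip(w_row, g_row)] for w_row, g_row in zip(w, dw)]

x = [[1.0, 2.0]]                  # one sample, two features
w = [[0.5, -1.0], [0.25, 0.0]]    # 2x2 weight matrix
dy = [[1.0, 1.0]]                 # pretend gradient arriving from the next layer

y = forward_kernel(x, w)            # would run on the accelerator
dx = backward_input_kernel(dy, w)   # would run on the accelerator
dw = weight_gradient_cpu(x, dy)     # would run on the CPU
w_new = sgd_update(w, dw, lr=0.5)
```

The input gradient `dx` is what gets passed back to the previous layer, while `dw` is only needed for the weight update, which is why the two can be computed in different places. The source does not say how updated weights get back into the compiled programs, so the sketch simply returns a new weight matrix.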

Technical Implementation Details

The approach is a significant engineering achievement, requiring deep understanding of Apple's hardware architecture and software stack. By writing MIL directly, the researcher targets the same intermediate representation that Apple's own tooling uses, which allows the programs to reach the hardware without passing through CoreML.

IOSurface shared memory buffers enable efficient data transfer between CPU and ANE, while the custom kernels represent hand-optimized operations specifically designed for the Neural Engine's architecture. This level of access was previously thought impossible without Apple's cooperation or leaked internal documentation.
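The benefit of a shared buffer is that the producer and the consumer work on the same memory instead of copying data back and forth. The snippet below is an analogy only: it uses Python's standard `array` and `memoryview` types, not IOSurface, and the two helper functions are hypothetical stand-ins for the CPU side and the accelerator side.

```python
from array import array

# Analogy only: a Python buffer stands in for a shared-memory surface.
shared = array("f", [0.0] * 4)   # float32 storage owned by the "CPU side"
view = memoryview(shared)        # second handle onto the same memory, no copy

def producer_write(buf, values):
    """Hypothetical CPU-side step: write inputs into the shared buffer."""
    for i, v in enumerate(values):
        buf[i] = v

def consumer_double_in_place(buf):
    """Hypothetical accelerator-side step: transform the data where it sits."""
    for i in range(len(buf)):
        buf[i] = buf[i] * 2.0

producer_write(shared, [1.0, 2.0, 3.0, 4.0])
consumer_double_in_place(view)   # operates through the second handle
result = list(shared)            # the original handle sees the updated values
```

Because `view` and `shared` refer to the same bytes, the write made through one handle is visible through the other without any transfer step, which is the property the article attributes to the IOSurface buffers.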

Practical Implications

This breakthrough enables three scenarios that were not previously practical on the Neural Engine:

Battery-efficient local training: Small models can now be trained directly on Mac hardware without the massive battery drain typically associated with training operations.

On-device fine-tuning: Users can customize AI models with their own data without sending sensitive information to cloud servers or activating power-hungry GPU operations.

Hardware research: Developers can now explore the ANE's true capabilities beyond Apple's officially supported use cases, potentially discovering optimizations and capabilities Apple hasn't publicly documented.

The Future of On-Device AI

If this approach scales effectively, it could fundamentally shift the landscape of personal computing AI. Rather than simply running frozen models downloaded from servers, devices could continuously learn and adapt to individual users' needs while maintaining privacy and efficiency.

The development also raises questions about Apple's walled-garden approach to hardware capabilities. Apple has historically kept tight control over access to specialized hardware components, but this breakthrough demonstrates that determined researchers can unlock capabilities the manufacturer chose to restrict.

Security and Stability Considerations

While technically impressive, this approach comes with significant caveats. Using undocumented APIs means no stability guarantees—future macOS updates could break the implementation without warning. Additionally, bypassing Apple's security layers could potentially introduce vulnerabilities, though the current implementation appears focused on legitimate research purposes.

Apple has not commented on this development, but the company typically discourages use of private APIs in production applications. However, for research and development purposes, this breakthrough provides unprecedented access to Apple's AI hardware capabilities.

Industry Context

This development occurs as the entire tech industry pushes toward more capable edge AI. While companies like Qualcomm and Google have been more open about on-device training capabilities, Apple has maintained its inference-only approach for the Neural Engine. This breakthrough suggests that the hardware itself may be more capable than Apple has publicly acknowledged.

The research also highlights the growing trend of hardware reverse-engineering in the AI space, as researchers seek to maximize performance from specialized accelerators that manufacturers often limit through software restrictions.

Source: @LiorOnAI on X