Key Takeaways

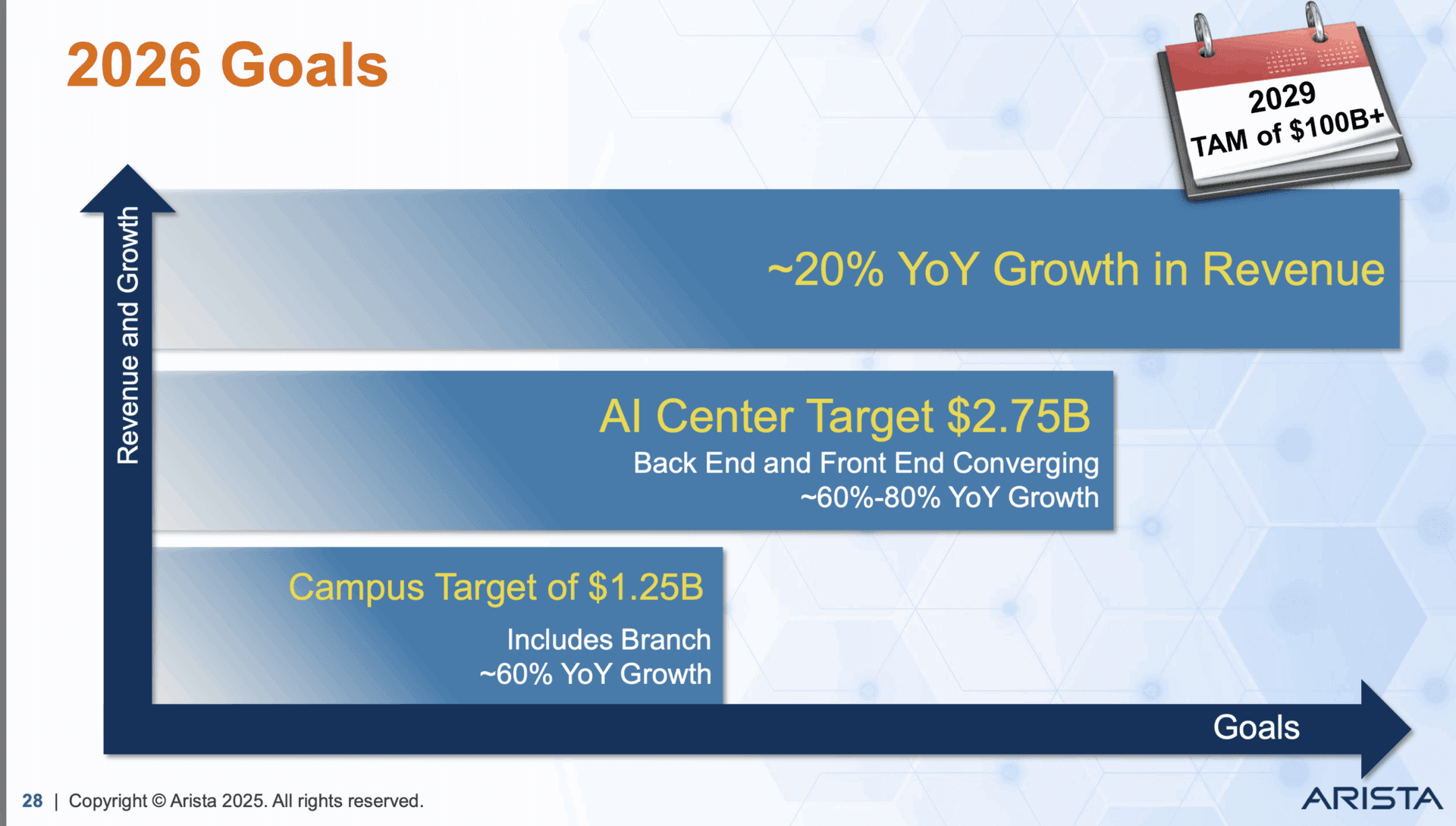

- Arista Networks doubled its 2026 AI networking revenue target to over $3 billion, citing expanded roles for open Ethernet in AI data centers.

- This signals a major shift toward disaggregated, standards-based networking for AI clusters.

What's New

Arista Networks has doubled its 2026 AI networking revenue target and now projects more than $3 billion in revenue from AI networking products in 2026. The company, a leading supplier of high-speed data center switches, attributes this revised outlook to the expanding role of open Ethernet in AI infrastructure.

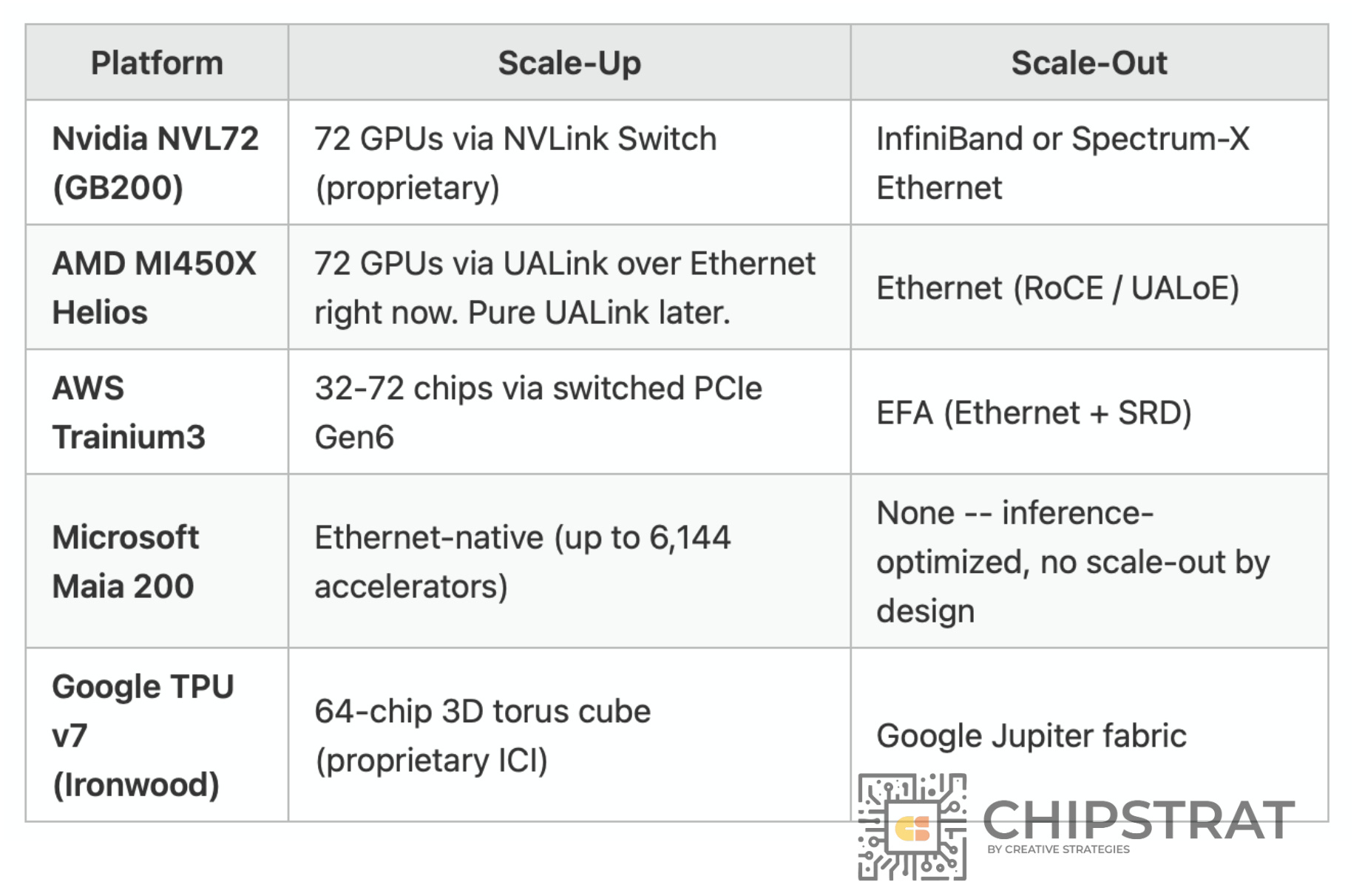

The announcement came during Arista's investor day, where management outlined a vision in which open, standards-based Ethernet switches increasingly displace proprietary InfiniBand and custom interconnects in AI training clusters. Arista's CEO Jayshree Ullal stated that the company's "open networking platform is winning in AI" as hyperscalers and large enterprises seek alternatives to vendor lock-in.

Technical Details

Arista's AI strategy centers on its 7800R4 series of high-density Ethernet switches, which support up to 800 Gbps per port and can scale to clusters of tens of thousands of GPUs. Key technical elements include:

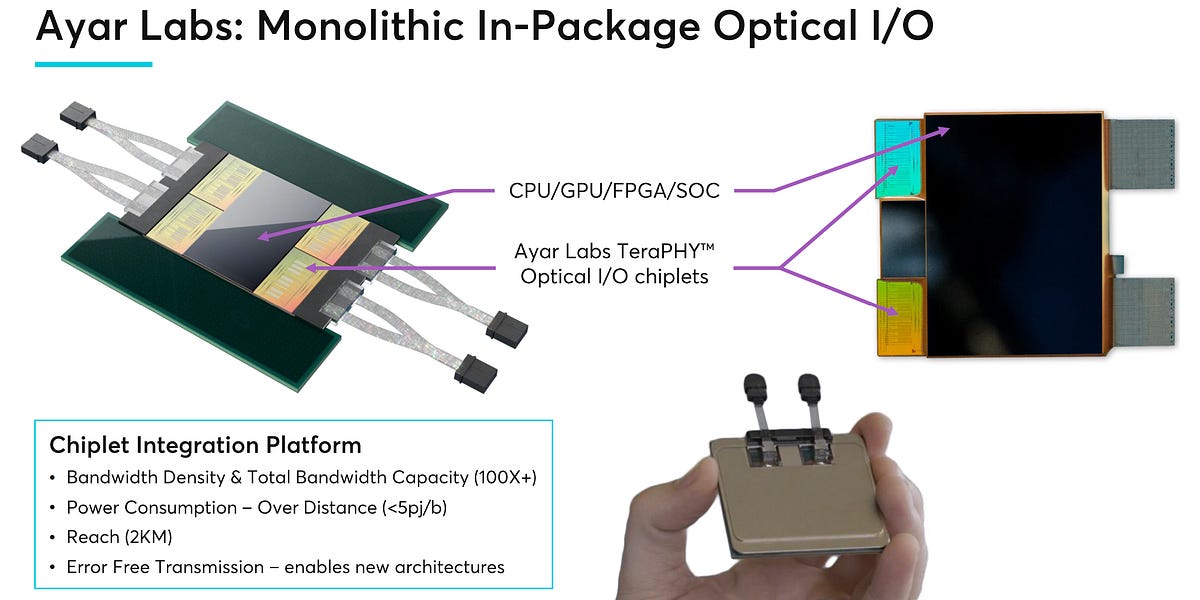

- Open Ethernet: Arista advocates for standards-based IEEE 802.3 Ethernet rather than InfiniBand, which in practice is sourced almost entirely from NVIDIA (for example, its Quantum switches and ConnectX adapters), or NVIDIA's proprietary NVLink. This allows customers to mix and match switches, NICs, and optics from different vendors.

- Lossless Fabric: Arista's AI fabric uses RDMA over Converged Ethernet (RoCEv2) and priority flow control to achieve the low-latency, lossless transport required for distributed AI training.

- Telemetry & Automation: The company's CloudVision platform provides real-time visibility into fabric congestion, flow imbalance, and GPU utilization — critical for tuning large-scale AI workloads.
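The Lossless Fabric point can be made concrete with a toy model of a single congested switch queue. This is a hypothetical sketch, not Arista's implementation: it counts whole packets per tick, assumes a PAUSE takes effect on the very next tick, and uses made-up rates and thresholds (`xoff`, `xon`). Real priority flow control (IEEE 802.1Qbb) pauses one traffic class on a link and must reserve buffer headroom for data already in flight.

```python
from dataclasses import dataclass


@dataclass
class QueueStats:
    delivered: int = 0    # packets forwarded out of the congested egress port
    dropped: int = 0      # packets tail-dropped because the buffer was full
    pause_ticks: int = 0  # ticks the sender spent paused by PFC
    peak_depth: int = 0   # deepest the switch queue got


def simulate(arrivals, line_rate, drain_rate, capacity, pfc, xoff=0, xon=0):
    """Simulate one switch egress queue fed by one sender, one tick at a time.

    arrivals[t]: packets the sender's application produces on tick t.
    line_rate:   most packets the sender can put on the wire per tick.
    drain_rate:  packets the congested egress port forwards per tick.
    capacity:    switch buffer size; packets that do not fit are dropped.
    pfc:         if True, PAUSE the sender once the queue reaches xoff and
                 RESUME it once the queue falls to xon or below.
    """
    stats = QueueStats()
    depth = 0      # packets buffered in the switch
    backlog = 0    # packets waiting at the sender (held, not lost)
    paused = False
    for new_packets in arrivals:
        backlog += new_packets
        if paused:
            sent = 0
            stats.pause_ticks += 1
        else:
            sent = min(backlog, line_rate)
        backlog -= sent
        accepted = min(sent, capacity - depth)
        stats.dropped += sent - accepted
        depth += accepted
        stats.peak_depth = max(stats.peak_depth, depth)
        drained = min(depth, drain_rate)
        depth -= drained
        stats.delivered += drained
        if pfc:
            if not paused and depth >= xoff:
                paused = True   # send PAUSE (XOFF) upstream
            elif paused and depth <= xon:
                paused = False  # send RESUME (XON) upstream
    return stats


if __name__ == "__main__":
    burst = [10] * 20 + [0] * 40  # incast-like burst: 10 pkt/tick into a 6 pkt/tick port
    lossy = simulate(burst, line_rate=10, drain_rate=6, capacity=40, pfc=False)
    lossless = simulate(burst, line_rate=10, drain_rate=6, capacity=40,
                        pfc=True, xoff=24, xon=12)
    print("without PFC:", lossy)
    print("with PFC:   ", lossless)
```

With these made-up numbers, the run without PFC drops 46 of 200 packets, while the PFC run drops none and delivers all 200, because a paused sender holds packets instead of losing them. Keeping `xoff + line_rate` within `capacity` plays the role of the headroom real deployments reserve; what the sketch does not model is that pauses can spread upstream and block unrelated traffic, one reason ECN-based congestion control is layered on top.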

How It Compares

| Vendor | Approach | Port speed | Key AI customers | AI networking revenue |
|---|---|---|---|---|
| Arista | Open Ethernet (RoCEv2) | 800 Gbps | Microsoft, Meta | >$3B (2026 target) |
| NVIDIA | InfiniBand (plus NVLink/NVSwitch) | 400 Gbps (NDR), 800 Gbps (XDR) | Most hyperscalers | ~$10B (est.) |
| Cisco | Silicon One (Ethernet) | 800 Gbps | Large enterprises | Not disclosed |
| Juniper | Ethernet (disaggregated) | 400 Gbps | Cloud providers | Not disclosed |

Arista's $3B target, double its earlier AI revenue target of $1.5B, represents roughly 30% of the company's total projected 2026 revenue of around $10B. This implies AI networking will become a major growth driver for Arista, although it would remain smaller than NVIDIA's data center networking revenue.
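The ratios quoted in this paragraph follow directly from the article's own figures. Treating ">$3B" as exactly $3B, each result below is a lower bound:

```latex
\frac{\$3\,\text{B}}{\$1.5\,\text{B}} = 2 \quad \text{(the doubled target)}, \qquad
\frac{\$3\,\text{B}}{\$10\,\text{B}} = 0.30 = 30\% \quad \text{(share of projected 2026 revenue)}
```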

What to Watch

- Execution risk: Building and deploying lossless Ethernet fabrics at 100,000+ GPU scale is unproven compared to NVIDIA's InfiniBand, which has been optimized for years.

- Hyperscaler adoption: Microsoft has publicly committed to Ethernet for AI, and Arista's wins with Microsoft and Meta are positive signals. Google and Amazon are still evaluating.

- Competitive response: NVIDIA is aggressively pushing InfiniBand and its Spectrum-X Ethernet platform, which offers proprietary enhancements. Cisco is also targeting AI with Silicon One.

- Supply chain: Arista relies on Broadcom's Tomahawk and Jericho chips. Any supply constraints could limit growth.

gentic.news Analysis

Arista's revised target reflects a broader industry trend: the disaggregation of AI networking. For years, NVIDIA's InfiniBand dominated AI training clusters due to its superior performance and tight integration. But as AI clusters scale to 100,000+ GPUs, hyperscalers are pushing back against vendor lock-in. Open Ethernet offers flexibility, lower cost, and multi-vendor sourcing — advantages that align with the operational models of cloud giants.

This follows a pattern we've seen across the AI infrastructure stack: from proprietary ASICs to open RISC-V accelerators, from custom interconnects to standard Ethernet. The bet is that the ecosystem effects of open standards will eventually outweigh the raw performance advantages of proprietary solutions. Arista is positioning itself as the networking equivalent of an open-source AI stack — a bet that is gaining traction.

However, the $3B target is ambitious. NVIDIA's networking revenue (including InfiniBand and Ethernet) was roughly $10B in 2025, and it has a multi-year head start. Arista will need to win substantial share from NVIDIA, Cisco, and others to hit that number. The key variable is whether Ethernet can match InfiniBand's performance for the largest training runs — specifically for all-to-all communication patterns in models with hundreds of billions of parameters.
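Why all-to-all traffic is a demanding case can be illustrated with a small, hypothetical model. In an all-to-all exchange among N GPUs, every GPU sends to every other GPU, giving N(N-1) flows. The sketch assumes the fabric spreads flows with static hash-based multipathing (ECMP); that is an assumption for illustration, since the article does not describe Arista's load-balancing scheme. Under it, some paths collect more flows than others, and because every flow must finish before the collective completes, the most heavily loaded path sets the pace. `zlib.crc32` stands in for a switch hash function, and `all_to_all_flows`, `ecmp_path`, and `path_loads` are illustrative names.

```python
import zlib
from collections import Counter


def all_to_all_flows(n_gpus):
    """Every GPU sends one flow to every other GPU: n_gpus * (n_gpus - 1) flows."""
    return [(src, dst) for src in range(n_gpus) for dst in range(n_gpus) if src != dst]


def ecmp_path(flow, n_paths):
    """Pick a path by hashing the flow ID, as static ECMP does (crc32 is a stand-in hash)."""
    src, dst = flow
    return zlib.crc32(f"{src}->{dst}".encode()) % n_paths


def path_loads(n_gpus, n_paths):
    """Count how many all-to-all flows land on each of n_paths equal-cost paths."""
    counts = Counter(ecmp_path(flow, n_paths) for flow in all_to_all_flows(n_gpus))
    return [counts[p] for p in range(n_paths)]


if __name__ == "__main__":
    n_gpus, n_paths = 16, 8
    loads = path_loads(n_gpus, n_paths)
    ideal = n_gpus * (n_gpus - 1) / n_paths  # flows per path under perfect balance
    print("flows per path:", loads)
    print(f"most loaded path carries {max(loads) / ideal:.2f}x its fair share")
```

Assuming equal-size flows and equal path capacity, the printed ratio roughly approximates how much longer the exchange takes than it would with perfect balancing.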

Frequently Asked Questions

What is open Ethernet and why does it matter for AI?

Open Ethernet refers to standards-based Ethernet switches and NICs that comply with IEEE 802.3 specifications, allowing interoperability between vendors. For AI clusters, this means customers can choose switches from Arista, optics from Coherent (formerly Finisar), and NICs from Broadcom, rather than being locked into a single vendor's proprietary interconnect. This reduces cost and supply chain risk.

How does Arista's AI revenue target compare to NVIDIA's?

NVIDIA's data center networking revenue (including InfiniBand and Ethernet) was approximately $10 billion in 2025. Arista's revised target of >$3 billion in AI revenue by 2026 represents about 30% of NVIDIA's current networking business. However, NVIDIA is also growing its networking segment rapidly.

What are the technical challenges of using Ethernet for AI training?

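Ethernet is not natively lossless: switches drop packets when their buffers overflow under congestion. Because RoCEv2 NICs typically recover from loss with coarse go-back-N retransmission, even modest loss can sharply reduce throughput and stall the collective operations that distributed training depends on. To work around this, vendors combine RoCEv2 (RDMA over Converged Ethernet) with priority flow control (PFC), which pauses a sender before a switch buffer overflows; ECN (Explicit Congestion Notification), which marks packets as queues build; and DCQCN (Data Center Quantized Congestion Notification), which uses those marks to slow senders down. Tuning these mechanisms for 100,000+ GPU clusters is complex and requires advanced telemetry.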
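The interplay of ECN and DCQCN can be sketched as two small pieces: a switch that ECN-marks packets with a probability that rises with queue depth, and a sender that cuts its rate when the receiver returns a Congestion Notification Packet (CNP) and then gradually recovers. This is a simplified, hypothetical model loosely following the published DCQCN algorithm (Zhu et al., SIGCOMM 2015), not any vendor's firmware. It omits DCQCN's byte counter and hyper-increase stage, and the parameter values (`k_min`, `k_max`, `p_max`, `g`, `rate_ai`) are illustrative rather than vendor defaults.

```python
def ecn_mark_probability(queue_kb, k_min, k_max, p_max):
    """Switch side: RED-style ECN marking probability for a given queue depth.

    No marking at or below k_min, a linear ramp up to p_max between k_min
    and k_max, and every packet marked above k_max.
    """
    if queue_kb <= k_min:
        return 0.0
    if queue_kb > k_max:
        return 1.0
    return p_max * (queue_kb - k_min) / (k_max - k_min)


class DcqcnSender:
    """Sender-side (reaction point) rate control, simplified from DCQCN.

    All rates are in Gbps. Only timer-driven increases are modeled.
    """

    def __init__(self, line_rate, g=1 / 256, rate_ai=0.04, fast_recovery_steps=5):
        self.line_rate = line_rate
        self.g = g                        # gain for the congestion estimate alpha (illustrative)
        self.rate_ai = rate_ai            # additive-increase step in Gbps (illustrative)
        self.fast_recovery_steps = fast_recovery_steps
        self.current_rate = line_rate     # R_C: rate the NIC is sending at
        self.target_rate = line_rate      # R_T: rate to climb back toward
        self.alpha = 1.0                  # running estimate of congestion severity
        self.increase_events = 0

    def on_cnp(self):
        """Receiver saw ECN-marked packets and returned a CNP: cut the rate."""
        self.target_rate = self.current_rate
        self.current_rate *= 1 - self.alpha / 2
        self.alpha = (1 - self.g) * self.alpha + self.g
        self.increase_events = 0

    def on_alpha_timer(self):
        """No CNP arrived during the last update period: decay alpha."""
        self.alpha *= 1 - self.g

    def on_increase_timer(self):
        """Periodic recovery: close half the gap to the target, later raise the target."""
        if self.increase_events >= self.fast_recovery_steps:
            # Additive increase, never above line rate.
            self.target_rate = min(self.target_rate + self.rate_ai, self.line_rate)
        self.current_rate = (self.target_rate + self.current_rate) / 2
        self.increase_events += 1


if __name__ == "__main__":
    sender = DcqcnSender(line_rate=100.0)
    sender.on_cnp()  # congestion: 100 -> 50 Gbps, since alpha starts at 1.0
    for _ in range(8):
        sender.on_alpha_timer()
        sender.on_increase_timer()
        print(f"rate {sender.current_rate:.2f} Gbps, alpha {sender.alpha:.3f}")
    print("mark probability at 100 KB:", ecn_mark_probability(100, k_min=5, k_max=200, p_max=0.01))
```

The multiplicative cut scaled by `alpha` lets the sender back off hard under sustained congestion but only gently after an isolated mark, which is the property that makes tuning these parameters at large scale delicate.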

Which companies are already using Arista for AI networking?

Microsoft and Meta are the most prominent hyperscaler customers using Arista's Ethernet-based AI fabrics. Microsoft has publicly stated it uses Ethernet for its internal AI training clusters. Other large enterprises and cloud providers are evaluating or deploying Arista's solutions for smaller-scale AI workloads.